Stop Being Basic and Start Fine-Tuning Your SEO Models

Why Generic AI Is Costing Your SEO Strategy

Fine-tuning models for SEO is the practice of adapting a general-purpose large language model (LLM) to your own content, brand data, and SEO patterns so that its output actually matches your voice, strategy, and ranking goals.

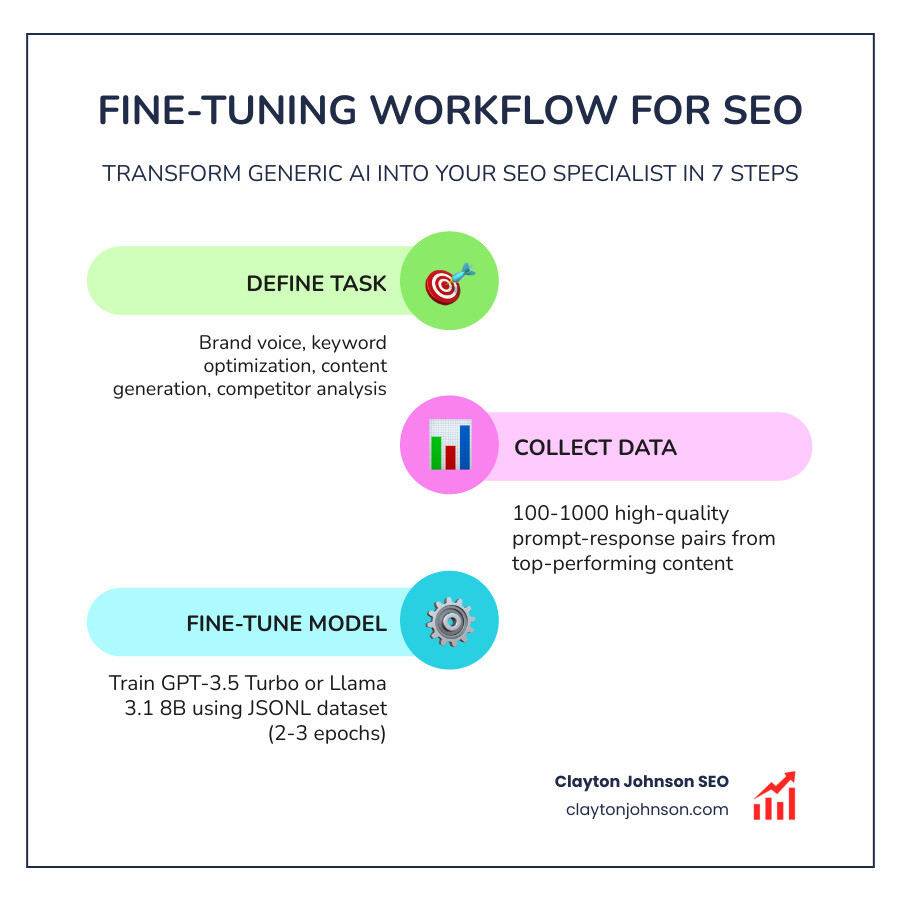

Here’s the quick answer on how fine-tuning works for SEO:

- Define your SEO task — brand voice, keyword optimization, content generation, competitor analysis

- Collect 100–1,000 high-quality prompt/response pairs from your best-performing content

- Fine-tune a base model (e.g., GPT-3.5 Turbo or Llama 3.1 8B) using your dataset

- Deploy the fine-tuned model inside your content workflow or CMS

- Measure and iterate using metrics like editing time saved, traffic lift, and F1 score

Generic models like GPT-4 are powerful. But they’re generalists. Ask them to write in your brand voice, follow your internal linking patterns, or produce SEO content that matches your top-performing pages — and the output starts to look like everyone else’s.

That’s the problem fine-tuning solves.

Think of it like the difference between hiring a general contractor and a specialist who has studied your building’s blueprints for months. One follows instructions. The other already knows what you need.

The results back this up. A lightweight fine-tuned model cut editing time per article by 37% compared to out-of-the-box GPT-4. And fine-tuning an 8B Llama model on 1,000 examples costs as little as $2–$3 on an H100 GPU — about 20 minutes of training time.

This isn’t a research project anymore. It’s a practical workflow upgrade.

I’m Clayton Johnson, an SEO strategist and growth systems architect. My work sits at the intersection of technical SEO, AI-augmented workflows, and scalable content architecture, and fine-tuning models for SEO is one of the highest-leverage tools I’ve seen for founders and marketing leaders who want compounding results instead of one-off content spikes. This guide breaks down exactly how to build, deploy, and measure fine-tuned models inside a real SEO system.

Beyond Prompting: Why Fine-Tuning Beats Generic AI

Most marketers start their AI journey with zero-shot learning. This is when you give a model a prompt with no examples and hope for the best. It’s the “write a blog post about Minneapolis SEO” approach. It works, but it’s basic.

Then there is few-shot learning, where you provide 3–5 examples within the prompt to show the model the desired style. While better, this eats up your context window (the amount of text the model can “remember” at once) and increases token costs and latency on every request.

Fine-tuning changes the game by baking the patterns directly into the model weights. Instead of reminding the model how to write every time you hit “send,” the model becomes the expert. This cuts down on off-brand output because the model isn’t guessing your brand’s preferences; its weights have been adjusted to prioritize them.
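To see why few-shot prompting gets expensive, here’s a rough sketch that compares the size of a few-shot prompt with the short prompt a fine-tuned model would need. The helper names, the sample examples, and the word-count stand-in for a tokenizer are all assumptions for illustration; real token counts depend on the model’s tokenizer.

```python
# Rough comparison of prompt size: few-shot prompting vs. a fine-tuned model.
# Tokens are approximated as whitespace-separated words (an assumption);
# a real tokenizer would give different absolute numbers.


def approx_tokens(text: str) -> int:
    """Very rough token estimate: one token per whitespace-separated word."""
    return len(text.split())


def build_few_shot_prompt(
    instruction: str, examples: list[tuple[str, str]], task: str
) -> str:
    """Few-shot: the style examples are resent with every single request."""
    parts = [instruction]
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    parts.append(f"Input: {task}\nOutput:")
    return "\n\n".join(parts)


def build_fine_tuned_prompt(task: str) -> str:
    """Fine-tuned: the style lives in the weights, so only the task is sent."""
    return task


# Hypothetical brand-voice examples, invented for this sketch.
examples = [
    ("Title for a post on local SEO audits",
     "Local SEO Audits: Find the Gaps Before Your Competitors Do"),
    ("Title for a post on internal linking",
     "Internal Linking: Build the Paths Your Best Pages Deserve"),
    ("Title for a post on content refreshes",
     "Content Refreshes: Turn Old Posts Into New Traffic"),
]
instruction = "Write a blog title in our brand voice."
task = "Title for a post on keyword clustering"

few_shot_prompt = build_few_shot_prompt(instruction, examples, task)
fine_tuned_prompt = build_fine_tuned_prompt(task)

print("few-shot prompt tokens (approx):", approx_tokens(few_shot_prompt))
print("fine-tuned prompt tokens (approx):", approx_tokens(fine_tuned_prompt))
```

The gap grows with every example you add to the prompt, and you pay for it again on each request.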

Comparing AI Learning Methods for SEO

| Feature | Zero-Shot | Few-Shot | Fine-Tuning |
|---|---|---|---|
| Setup Effort | None | Low | Moderate |
| Accuracy | General | Better | Domain Specialist |
| Token Cost | Low | High (per request) | Lower (per request) |
| Brand Voice | Generic | Mimicked | Inherent |
| Use Case | Brainstorming | One-off tasks | Scalable Systems |

By moving beyond simple prompts, we can build an AI-driven SEO strategy and systems that don’t just generate text but execute a structured growth architecture.

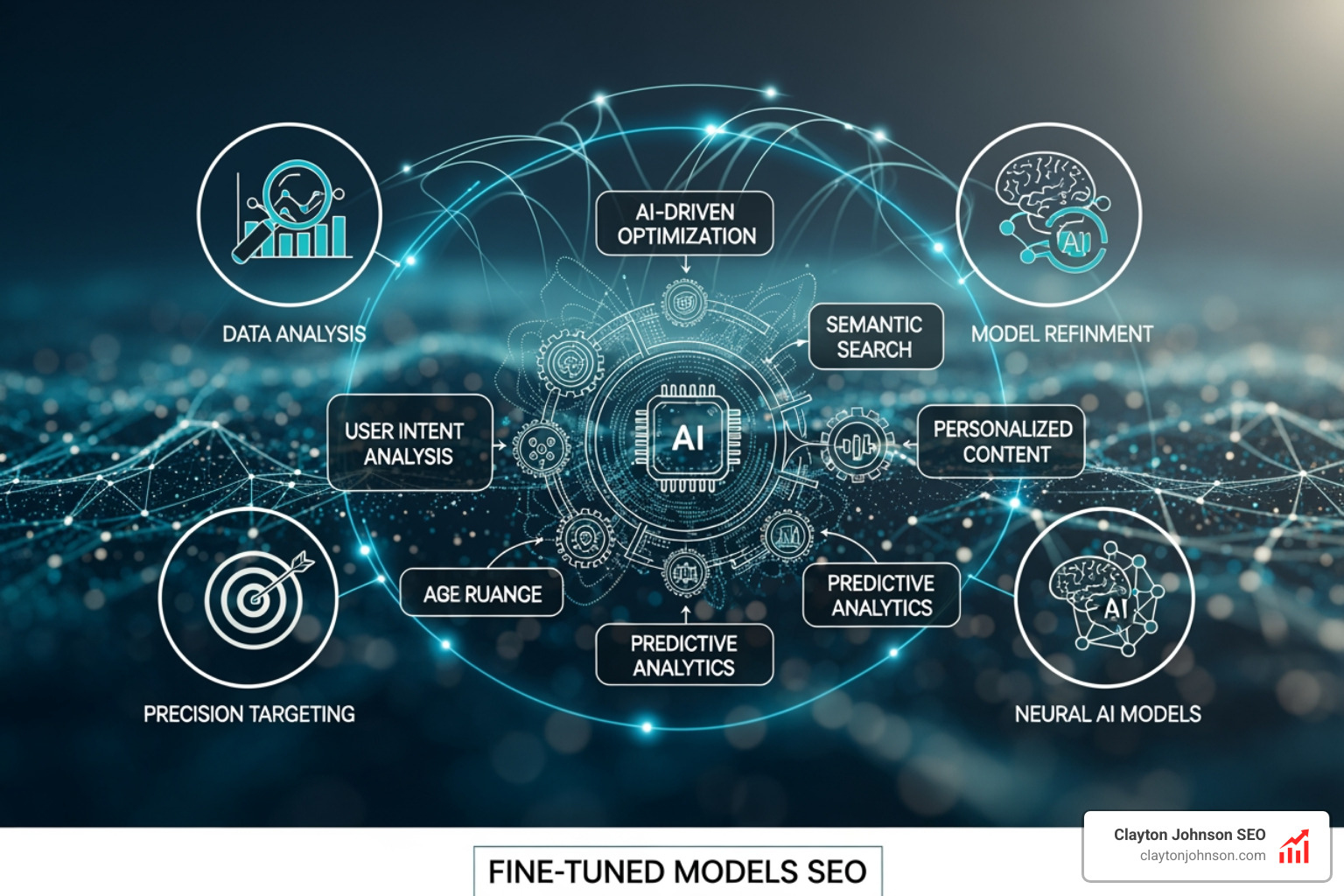

Strategic Applications of Fine-Tuned Models in SEO

Why go through the effort? Because generic AI lacks the “structured growth” mindset. When we apply fine-tuned models to SEO, we are solving specific architectural problems.

- Brand Voice Consistency: We can train a model on your historical best-performing, human-edited content. The result is an AI writer that understands your tone, avoids your “forbidden” words, and uses your specific industry terminology without being told.

- Content Generation at Scale: Instead of massive editing rounds, fine-tuned models produce drafts that are 90% ready. Research shows a 37% reduction in editing time when using specialized models.

- Competitor Analysis: We can fine-tune models to identify “topical authority gaps.” By feeding the model data from top-ranked pages and your own URLs, it can pinpoint exactly what subtopics you’re missing to outrank the competition.

- Keyword Optimization: Generic models often “keyword stuff” or use unnatural phrasing. A fine-tuned model understands semantic density and how to naturally weave in long-tail variations.

- Internal Linking: You can train a model to recognize your site’s taxonomy and suggest dynamic internal links that pass maximum link equity.

For those looking to move fast, Clayton Johnson Content Generation Solutions provide the framework to turn these AI capabilities into an enterprise SEO strategy that actually moves the needle in Minneapolis and beyond.

Building Your Dataset: The Blueprint for Fine-Tuned Models SEO

The secret to a high-performing model isn’t the code—it’s the data. “Garbage in, garbage out” has never been more true than in AI training.

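To start, you need a dataset formatted in JSONL (JSON Lines). Each line in this file is a standalone JSON object holding one training example: a “prompt” (the instruction) and a “completion” (the ideal output). Field names vary by platform; hosted chat models typically expect each example as a list of role-tagged messages instead, so check your provider’s format before exporting.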
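As a minimal sketch of that file format, the snippet below turns a couple of prompt/response pairs into JSONL text. The sample pairs are invented, and the `prompt` and `completion` keys simply follow the description above; chat-style fine-tuning APIs usually want a `messages` list instead, so treat the key names as an assumption to adapt.

```python
import json


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize (prompt, completion) pairs as one JSON object per line."""
    lines = [
        json.dumps({"prompt": prompt, "completion": completion}, ensure_ascii=False)
        for prompt, completion in pairs
    ]
    return "\n".join(lines) + "\n"


# Hypothetical training pairs, invented for illustration.
pairs = [
    (
        "Write a meta description for a page about technical SEO audits.",
        "Find the crawl, speed, and indexing issues holding your site back.",
    ),
    (
        "Write an intro for a guide to internal linking.",
        "Internal links are the paths search engines follow.\nBuild them on purpose.",
    ),
]

jsonl_text = to_jsonl(pairs)
print(jsonl_text)

# To save the dataset, write jsonl_text to a file such as "train.jsonl"
# (the filename is hypothetical).
```

Note that `json.dumps` escapes the newline inside the second completion, so each example still occupies exactly one line of the file.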

Data Quality vs. Quantity

You don’t need millions of rows. In fact, for models like GPT-3.5 Turbo, you can see noticeable improvements with just 50–100 high-quality samples. For more complex tasks like full article generation, aim for 100–1,000 examples.

- Expert Auditing: Every example in your dataset should be “Gold Standard.” Use your best-performing articles that have already proven they can rank and convert.

- Synthetic Data: If you lack examples, you can use a more powerful model (like GPT-4) to generate “ideal” responses based on your top content, which you then use to train a smaller, faster model.

- Formatting: Follow the official Preparing Your Dataset for Fine-Tuning guide to ensure your JSONL structure is flawless.
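Before uploading anything, it helps to run a quick sanity check over the file. The sketch below is one hedged way to do that: it parses each line, rejects records with missing or empty fields, and flags exact duplicates. The function name and the `prompt`/`completion` keys are assumptions; swap in whatever schema your platform requires.

```python
import json

# Assumed schema for this sketch; adjust to your provider's required fields.
REQUIRED_KEYS = ("prompt", "completion")


def validate_jsonl(text: str) -> tuple[list[dict], list[str]]:
    """Return (valid_records, error_messages) for a JSONL string."""
    records: list[dict] = []
    errors: list[str] = []
    seen: set[tuple[str, str]] = set()

    for line_number, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            errors.append(f"line {line_number}: invalid JSON ({exc.msg})")
            continue
        if not isinstance(record, dict):
            errors.append(f"line {line_number}: expected a JSON object")
            continue
        bad_keys = [
            key
            for key in REQUIRED_KEYS
            if not isinstance(record.get(key), str) or not record[key].strip()
        ]
        if bad_keys:
            errors.append(f"line {line_number}: missing or empty {bad_keys}")
            continue
        fingerprint = (record["prompt"], record["completion"])
        if fingerprint in seen:
            errors.append(f"line {line_number}: duplicate example")
            continue
        seen.add(fingerprint)
        records.append(record)

    return records, errors


# Hypothetical sample: one good line, one duplicate, one empty field, one broken line.
sample = "\n".join([
    '{"prompt": "Title for: local SEO audit", "completion": "Local SEO Audit Checklist"}',
    '{"prompt": "Title for: local SEO audit", "completion": "Local SEO Audit Checklist"}',
    '{"prompt": "Write a meta description", "completion": ""}',
    "not json",
])

valid_records, problems = validate_jsonl(sample)
print(len(valid_records), "valid record(s)")
for problem in problems:
    print(problem)
```

A check like this only catches structural problems. Whether an example is truly “Gold Standard” still needs a human editor.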

When your data is clean, finding keywords with AI is easier than you think because the model already understands the intent and context of your niche.

Scaling Content with Fine-Tuned Models SEO

For companies wanting to avoid “vendor lock-in,” open-source models like Llama 3.1 or Mistral are fantastic options. Using techniques like QLoRA (Quantized Low-Rank Adaptation), we keep the base model frozen in a compressed, quantized form and train only small adapter matrices, which amount to a small fraction of the model’s parameters.

This makes training incredibly efficient. You can fine-tune an 8B parameter model on an H100 GPU in about 20 minutes for roughly $2–$3. Once trained, these models are often 10x cheaper and faster to run than proprietary giants, making them ideal for high-volume SEO tasks.
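To put “a small fraction” in perspective, here’s a back-of-the-envelope calculation of how many weights a low-rank adapter actually trains. Everything numeric here is an assumption for illustration: the layer count and projection shapes approximate an 8B Llama-style model, the rank of 16 and the choice of attention projections are common but arbitrary settings, and the total parameter count is rounded.

```python
# Back-of-the-envelope: trainable adapter weights vs. total model size.
# All shapes and counts below are ASSUMED, approximating an 8B Llama-style model.

ASSUMED_LAYERS = 32
ASSUMED_PROJECTIONS = {
    # name: (input dimension, output dimension)
    "q_proj": (4096, 4096),
    "k_proj": (4096, 1024),
    "v_proj": (4096, 1024),
    "o_proj": (4096, 4096),
}
ASSUMED_TOTAL_PARAMS = 8_000_000_000  # rounded
RANK = 16  # hypothetical adapter rank


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """A low-rank adapter adds two matrices: (d_in x rank) and (rank x d_out)."""
    return rank * (d_in + d_out)


def trainable_adapter_params(rank: int = RANK) -> int:
    """Total adapter weights across all layers and targeted projections."""
    per_layer = sum(
        lora_params(d_in, d_out, rank)
        for d_in, d_out in ASSUMED_PROJECTIONS.values()
    )
    return per_layer * ASSUMED_LAYERS


trainable = trainable_adapter_params()
fraction = trainable / ASSUMED_TOTAL_PARAMS
print(f"{trainable:,} trainable adapter weights ({fraction:.2%} of the base model)")
# prints: 13,631,488 trainable adapter weights (0.17% of the base model)
```

Under these assumptions, the training run touches well under 1% as many weights as full fine-tuning would, which is a big part of why it fits on a single GPU.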

Measuring ROI: Costs, Metrics, and Performance

Is it worth the investment? Let’s look at the numbers. While fine-tuning models for SEO requires upfront training costs, the long-term efficiency is where the ROI lives.

- Training Costs: Expect to spend between $20–$40 for an initial training run on platforms like OpenAI or Google Vertex AI.

- Inference Costs: Fine-tuned models often have higher per-token costs (sometimes 3x to 8x higher than base models). However, because the prompts are much shorter (you don’t need to provide examples every time), the total cost per request can actually be lower.

- Performance Metrics:

  - Precision: How many of the generated keywords were actually relevant?

  - Recall: Did the model miss any critical SEO elements you trained it to include?

  - F1 Score: The “harmonic mean” of precision and recall. Aim for a score of 0.8 or higher before moving to production.
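Here’s a small sketch of how those three metrics can be computed for a keyword task, treating the model’s suggested keywords and your hand-labeled relevant keywords as sets. The function name and the sample keywords are invented for illustration.

```python
def precision_recall_f1(
    predicted: set[str], expected: set[str]
) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 for a set of predicted items."""
    true_positives = len(predicted & expected)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(expected) if expected else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical evaluation data: model suggestions vs. an editor's labels.
model_keywords = {
    "local seo audit",
    "seo audit checklist",
    "technical seo audit",
    "cheap backlinks",  # not relevant
}
relevant_keywords = {
    "local seo audit",
    "seo audit checklist",
    "technical seo audit",
    "seo audit cost",
    "seo audit tools",
}

precision, recall, f1 = precision_recall_f1(model_keywords, relevant_keywords)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
# prints: precision=0.75 recall=0.60 f1=0.67
```

In this made-up example the model falls short of the 0.8 target mostly because of recall: it missed two of the five keywords the editor wanted.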

We track these fine-tuning cost considerations closely to ensure that every AI implementation contributes to a compounding growth system rather than just being a shiny new toy.
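The trade-off between higher per-token prices and shorter prompts is easy to check with arithmetic. The sketch below uses entirely hypothetical prices and token counts (a fine-tuned model priced at 3x the base model, a 3,000-token few-shot prompt versus a 200-token fine-tuned prompt); plug in your provider’s real rates before drawing conclusions.

```python
def cost_per_request(
    prompt_tokens: int,
    output_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Cost of one request given token counts and per-1,000-token prices."""
    return (
        prompt_tokens / 1000 * input_price_per_1k
        + output_tokens / 1000 * output_price_per_1k
    )


# HYPOTHETICAL prices in dollars per 1,000 tokens; not any vendor's real rates.
BASE_INPUT, BASE_OUTPUT = 0.001, 0.002
TUNED_INPUT, TUNED_OUTPUT = 0.003, 0.006  # assumed 3x the base model

FEW_SHOT_PROMPT_TOKENS = 3000  # instructions plus examples, resent every time
TUNED_PROMPT_TOKENS = 200      # just the task

# Scenario 1: short output, such as a meta description (about 60 tokens).
base_short = cost_per_request(FEW_SHOT_PROMPT_TOKENS, 60, BASE_INPUT, BASE_OUTPUT)
tuned_short = cost_per_request(TUNED_PROMPT_TOKENS, 60, TUNED_INPUT, TUNED_OUTPUT)

# Scenario 2: long output, such as an article section (about 800 tokens).
base_long = cost_per_request(FEW_SHOT_PROMPT_TOKENS, 800, BASE_INPUT, BASE_OUTPUT)
tuned_long = cost_per_request(TUNED_PROMPT_TOKENS, 800, TUNED_INPUT, TUNED_OUTPUT)

print(f"short output: base ${base_short:.5f} vs fine-tuned ${tuned_short:.5f}")
print(f"long output:  base ${base_long:.5f} vs fine-tuned ${tuned_long:.5f}")
```

With these made-up numbers, the fine-tuned model is cheaper per request when the prompt dominates (short outputs) and more expensive when the output dominates. The savings depend on your own mix of prompt and output length, so it is worth running this with real figures.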

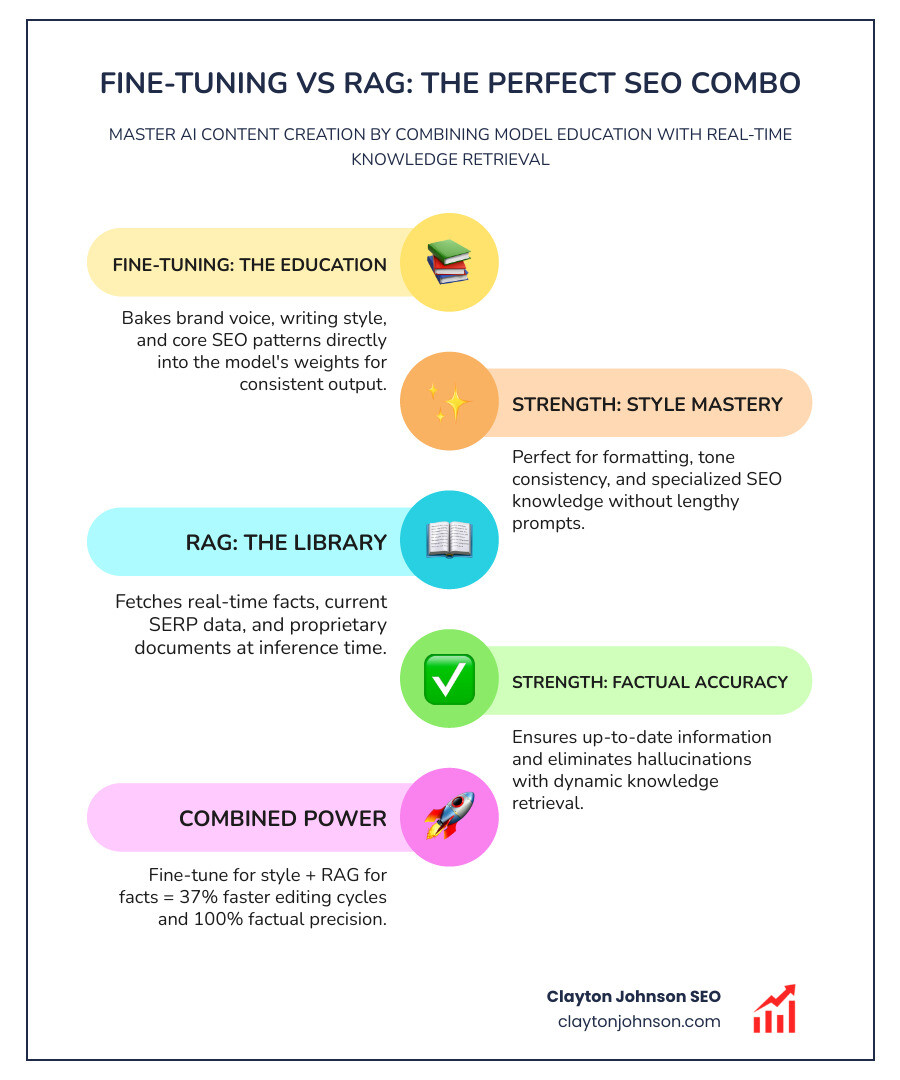

Advanced Workflows: RAG and Model Optimization

The most powerful SEO systems don’t rely on fine-tuning alone. They combine it with Retrieval-Augmented Generation (RAG).

Think of it this way:

- Fine-Tuning is the model’s education (how it speaks, its style, its fundamental SEO knowledge).

- RAG is the model’s library (its ability to look up real-time facts, current SERP data, or specific product specs).

By using Clayton Johnson AI Model Integrations, we can build systems where a fine-tuned model (specialized in your brand voice) pulls real-time data from a Knowledge Graph to write factually grounded, SEO-optimized content. This workflow is essential when you run AI models via API in a professional environment.
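To make the education-versus-library split concrete, here’s a toy sketch of the retrieval half. It is not a production RAG system: a plain Python list stands in for the knowledge source, simple word overlap stands in for a real search index, and the function names and sample facts are invented. The resulting prompt is what you would send to your fine-tuned model; the model call itself is left out because it depends on your provider.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents that share the most words with the query."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc)), doc) for doc in documents]
    scored = [item for item in scored if item[0] > 0]  # drop non-matches
    scored.sort(key=lambda item: item[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(task: str, facts: list[str]) -> str:
    """Combine retrieved facts with the task for the fine-tuned model."""
    fact_lines = "\n".join(f"- {fact}" for fact in facts)
    return f"Use only these facts:\n{fact_lines}\n\nTask: {task}"


# Hypothetical knowledge source; in practice this would be a real data store.
knowledge_base = [
    "The technical SEO audit package costs 1,500 USD and takes two weeks.",
    "Our office is located in Minneapolis.",
    "Content strategy retainers include four articles per month.",
]

task = "Write a paragraph about the price of the technical SEO audit"
facts = retrieve(task, knowledge_base)
prompt = build_grounded_prompt(task, facts)
print(prompt)
```

The retrieval step supplies the facts, while the fine-tuned model supplies the voice. Keeping the two separate means you can update prices or specs without retraining anything.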

Future-Proofing with Fine-Tuned Models SEO and RAG

Google’s spam updates made one thing clear: helpful content wins. Google doesn’t penalize AI content; it penalizes unhelpful, low-effort content.

Fine-tuning helps you stay compliant by ensuring your AI output isn’t generic “slop.” By training on your proprietary data and combining it with dynamic retrieval, you create content that provides real value. This combined approach helps your content survive algorithm shifts because it’s built on a foundation of actual expertise, not just word prediction.

Frequently Asked Questions about Fine-Tuning

How much data is needed for fine-tuning?

For most SEO tasks, you can see a significant difference with 50–100 high-quality samples. If you want the model to master a complex brand voice or a specific content structure (like a 2,000-word guide), aim for 100–300 examples. Diminishing returns typically kick in after 1,000 samples.

Is fine-tuning better than RAG for SEO?

They serve different purposes. Fine-tuning is better for form, style, and behavior (e.g., “always use H2 tags for subheaders” or “write like a Minneapolis local”). RAG is better for facts and real-time data (e.g., “what is the current price of this service?”). For the best SEO results, use them together.

Does fine-tuning help with Google’s spam updates?

Yes. Google’s updates target “scaled content abuse”—which usually means generic, low-quality AI text. Because fine-tuned models are trained on your unique, high-quality data, the output is much more likely to be classified as “helpful” and “original” by Google’s helpful content systems.

Conclusion

The era of “push-button” generic AI is over. To win in today’s search landscape, you need a structured growth architecture.

At Clayton Johnson SEO, we don’t just “do SEO.” We build the infrastructure (the taxonomy-driven systems and AI-augmented workflows) that allows your brand to scale without losing its soul. Fine-tuning models for SEO is a core pillar of that leverage.

By specializing your models, you stop being basic and start building a competitive moat that generic AI simply cannot cross.

Ready to move beyond basic prompts? Explore our AI models and see how Demandflow can transform your growth strategy from a list of tactics into a compounding system.