Which AI platforms work best for your specific needs

Why Knowing How to Compare AI Models Actually Matters

Knowing how to compare AI is the difference between picking a tool that compounds your work and one that quietly wastes your time.

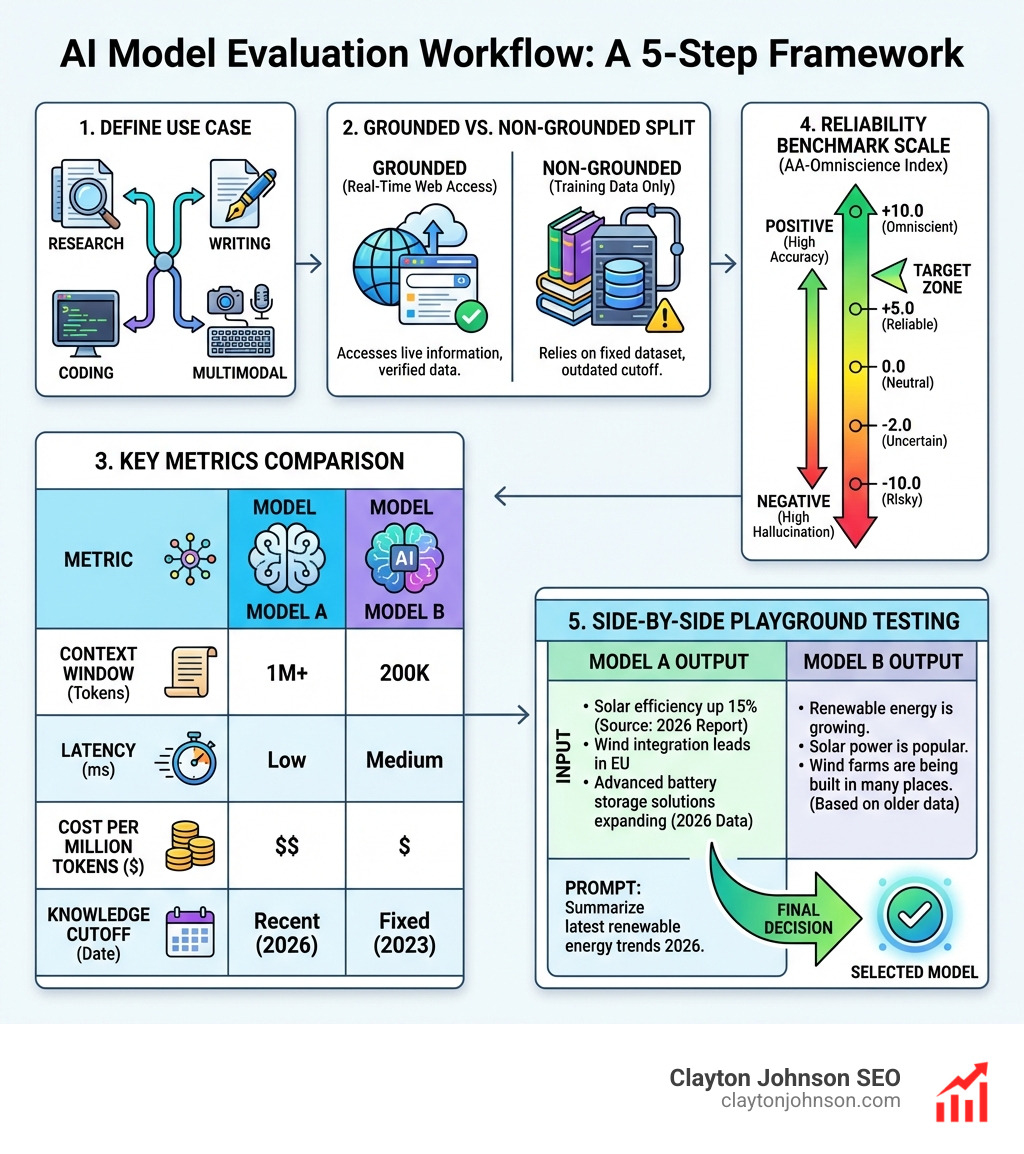

Here is a quick framework to evaluate any AI model:

- Define your use case – research, writing, coding, or multimodal tasks

- Check grounding – does the model use real-time web access or only training data?

- Review key metrics – context window, price per million tokens, latency, and knowledge cutoff

- Look at reliability benchmarks – use independent scores like the AA-Omniscience Index to measure hallucination risk

- Test side-by-side – run your actual prompts in a playground before committing

The short version: match the model to the job, verify with benchmarks, and test before you trust.

There are now dozens of major AI models competing for your attention. Claude, GPT-5, Gemini, Grok, Perplexity – each with different strengths, pricing structures, and reliability profiles. For founders and marketing leaders, picking the wrong one is not just a minor inconvenience. It means slower research, weaker output, and strategic decisions built on hallucinated facts.

The problem is that most comparisons are either too surface-level or buried in technical jargon that does not connect to real decisions.

I am Clayton Johnson, an SEO strategist and growth operator who has built AI-augmented marketing workflows across dozens of growth engagements – and learning how to compare AI models is one of the most practical skills I have applied to drive measurable results. Let me walk you through exactly how I evaluate these tools so you can make smarter, faster decisions for your own stack.


The Framework: How to Compare AI Models Effectively

When we sit down to evaluate a new model, we don’t just ask “is it good?” We look at a specific set of technical and practical levers. The AI landscape moves fast, but the underlying metrics stay the same. To truly understand how to compare AI, you need to look past the marketing hype and focus on the model’s measurable specifications and behavior.

The first thing we look at is the context window. Think of this as the model’s “short-term memory.” A model like Gemini might offer a massive 2 million token context window, allowing you to upload entire codebases or hundreds of PDFs at once. Meanwhile, others might hover around 128k or 200k tokens. If you are summarizing a single email, any model works. If you are analyzing a year’s worth of SEO data, context window is your most important metric.
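A quick way to check whether a document set will fit in a model's context window is to estimate its token count before uploading. The sketch below assumes roughly 4 characters per token, a common rule of thumb for English text. Real tokenizers vary by model, so treat the result as an estimate. The window sizes are the figures mentioned above, and `reserve_for_output` is a hypothetical safety margin for the model's reply.

```python
# Rough context-window fit check.
# ASSUMPTION: ~4 characters per token (rule of thumb for English text);
# real tokenizers differ per model, so this is only an estimate.
CHARS_PER_TOKEN = 4

def estimate_tokens(text: str) -> int:
    """Estimate the token count of a string from its character length."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division

def fits_in_context(documents: list[str], context_window: int,
                    reserve_for_output: int = 8_000) -> bool:
    """Return True if the documents plus an output reserve fit in the window.

    reserve_for_output is a hypothetical margin left for the model's answer.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= context_window

if __name__ == "__main__":
    # Illustrative: a year of daily SEO reports, ~20,000 characters each.
    reports = ["x" * 20_000] * 365
    print(estimate_tokens(reports[0]))          # 5000
    print(fits_in_context(reports, 200_000))    # False
    print(fits_in_context(reports, 2_000_000))  # True
```

At about 1.8 million estimated tokens, the year of reports overflows a 200k window but fits in a 2 million token one. That is exactly the situation where context window becomes the deciding metric.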

Next, we evaluate key performance metrics like latency (how fast the first word appears) and throughput (how fast it writes the rest). For a customer service bot, speed is everything. For a deep strategic analysis, we’re happy to wait an extra ten seconds for a more “intelligent” response. We also track the knowledge cutoff, which tells us when the model’s training ended. If a model was last updated months ago and lacks web access, it won’t know about the latest Google algorithm update.
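You can measure latency and throughput yourself from any streaming response. The sketch below times a stream of text chunks. Time to first token is the delay before the first chunk arrives, and throughput is the number of chunks per second after that. `fake_stream` is a hypothetical stand-in for a provider's streaming API, so swap in your SDK's stream iterator. Also keep in mind that one streamed chunk is not always exactly one token.

```python
import time
from typing import Iterable, Iterator

def measure_stream(stream: Iterable[str]) -> dict:
    """Measure time to first chunk (seconds) and chunks/sec for a streaming reply."""
    start = time.perf_counter()
    first = None
    count = 0
    for _chunk in stream:
        if first is None:
            first = time.perf_counter()  # first visible output
        count += 1
    end = time.perf_counter()
    if first is None:
        return {"ttft_s": None, "chunks": 0, "chunks_per_s": 0.0}
    gen_time = end - first
    # Throughput: chunks after the first one, over the generation window.
    rate = (count - 1) / gen_time if count > 1 and gen_time > 0 else 0.0
    return {"ttft_s": first - start, "chunks": count, "chunks_per_s": rate}

def fake_stream(n_chunks: int, first_delay: float, per_chunk: float) -> Iterator[str]:
    """Hypothetical stand-in for a model's streaming output."""
    time.sleep(first_delay)
    yield "chunk0"
    for i in range(1, n_chunks):
        time.sleep(per_chunk)
        yield f"chunk{i}"

if __name__ == "__main__":
    stats = measure_stream(fake_stream(20, first_delay=0.2, per_chunk=0.01))
    print(f"TTFT: {stats['ttft_s']:.2f}s, throughput: {stats['chunks_per_s']:.0f} chunks/s")
```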

Finally, consider the price per million tokens. In a production environment, costs can scale quickly. We often see models like GPT-5.2 priced around $1.75 per million input tokens, while “mini” or “flash” versions cost a fraction of that. Balancing intelligence vs. cost is the core of any professional AI strategy.
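Per-million-token pricing turns into a monthly budget with simple arithmetic. The sketch uses the $1.75 per million input tokens figure cited above. The output price and both "mini" prices are hypothetical placeholders, so replace them with your provider's current rate card. The workload numbers are illustrative only.

```python
from dataclasses import dataclass

@dataclass
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens

def monthly_cost(p: Pricing, requests: int, in_tokens: int, out_tokens: int) -> float:
    """Estimated monthly spend for a request volume and average tokens per request."""
    total_in = requests * in_tokens
    total_out = requests * out_tokens
    return (total_in * p.input_per_million + total_out * p.output_per_million) / 1_000_000

# Input price from the article; all other prices are HYPOTHETICAL placeholders.
flagship = Pricing(input_per_million=1.75, output_per_million=14.00)
mini = Pricing(input_per_million=0.25, output_per_million=2.00)

if __name__ == "__main__":
    # Illustrative workload: 100k requests/month, 2,000 tokens in, 500 tokens out.
    for name, p in [("flagship", flagship), ("mini", mini)]:
        print(name, monthly_cost(p, 100_000, 2_000, 500))
    # flagship 1050.0
    # mini 150.0
```

Under these assumed prices, the same workload costs seven times more on the flagship tier. That gap is why we run high-volume, low-complexity jobs on smaller models.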

How to Compare AI for Research Accuracy

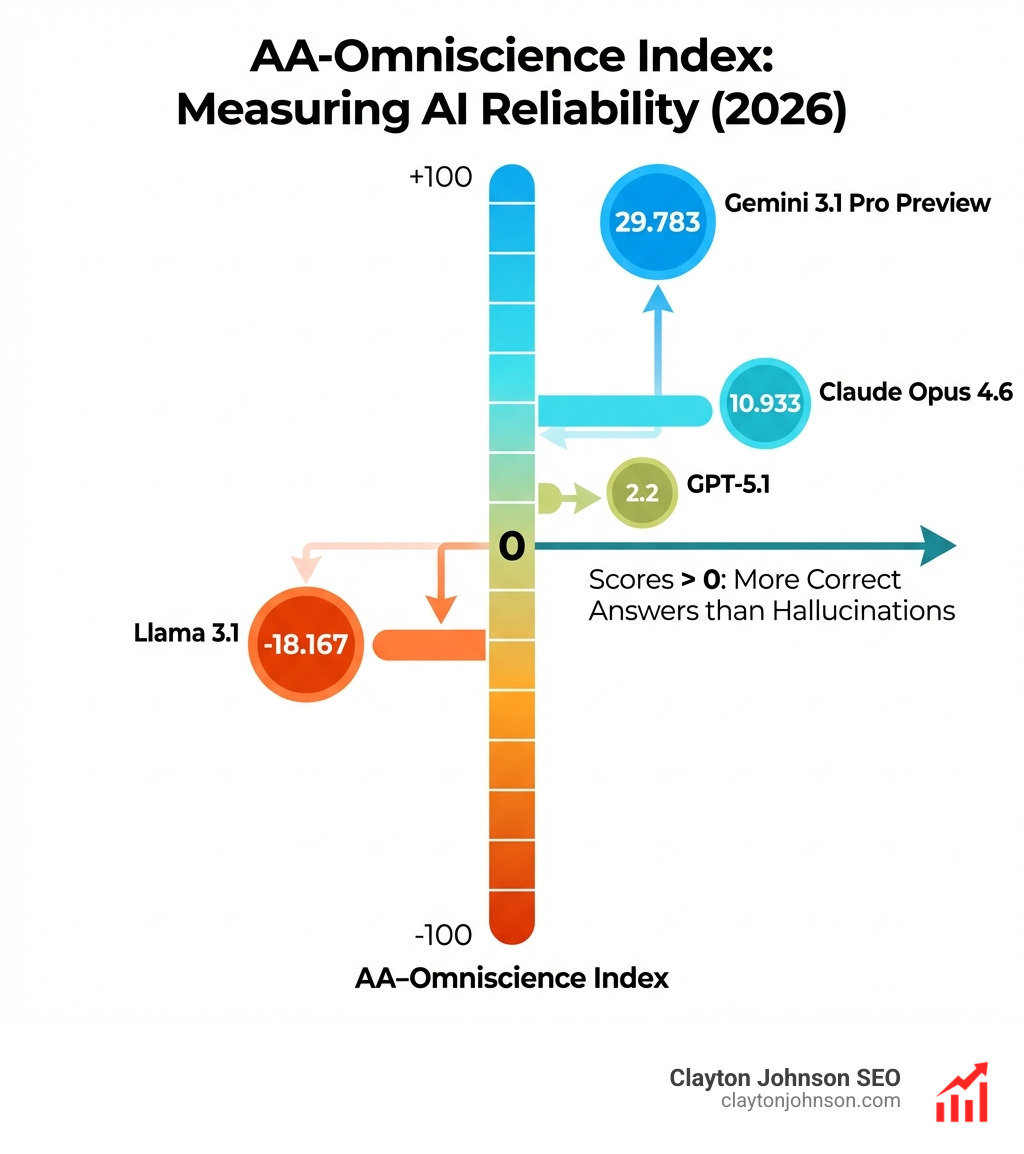

Accuracy is the “make or break” for research. We use the AA-Omniscience Index to cut through the noise. This index measures knowledge reliability on a scale of -100 to 100. It’s a brutal test: models get points for being right, lose points for “hallucinating” (making things up), and aren’t penalized for saying “I don’t know.”
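The scoring described above gives credit for correct answers, a penalty for wrong ones, and nothing for abstentions. Based on that description, the index can be sketched as the net share of correct answers scaled to a -100 to 100 range. This is our reading of the published description, not Artificial Analysis's official code, so check their methodology for the exact weighting.

```python
def omniscience_style_index(correct: int, incorrect: int, abstained: int) -> float:
    """Net-correct score on a -100..100 scale.

    ASSUMPTION: +1 per correct answer, -1 per incorrect (hallucinated) answer,
    0 per "I don't know", normalized by the total number of questions.
    """
    total = correct + incorrect + abstained
    if total == 0:
        raise ValueError("no questions scored")
    return 100 * (correct - incorrect) / total

if __name__ == "__main__":
    # Same 40% accuracy, different honesty about uncertainty (illustrative numbers).
    print(omniscience_style_index(40, 60, 0))   # -20.0: always guesses
    print(omniscience_style_index(40, 20, 40))  # 20.0: abstains when unsure
```

In this example, two models with identical accuracy land on opposite sides of zero. The only difference is whether they admit uncertainty or guess.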

For high-stakes research, we lean toward tools like Perplexity AI for current facts and sources. These “grounded” tools don’t just rely on what they learned during training; they search the live web and provide citations. However, we always teach our team that AI is a supplement, not a substitute. You should still verify critical facts against trusted library databases or primary sources. Even the best grounded tools can occasionally misattribute a quote or cite a low-quality blog post.

How to Compare AI for Developer Workflows

For our developers in Minneapolis and beyond, the criteria for how to compare AI shift toward code logic and multi-file reasoning. A GitHub Copilot model comparison reveals that different engines excel at different coding tasks.

- GPT-4.1: Great for general-purpose coding and writing docstrings.

- GPT-5.2: The powerhouse for complex debugging and agentic tasks.

- Claude Sonnet 4.5: Exceptional for “full-stack” reasoning and legacy code modernization (like turning old COBOL into Node.js).

- GPT-5 mini: Perfect for fast, repetitive tasks like filtering lists or basic syntax help.

The goal isn’t just to generate code; it’s to find a model that understands the architecture of the entire project.
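One way to put these model notes into practice is a small routing table that sends each kind of coding task to the model we'd reach for first. The task categories and the fallback model are hypothetical. The model names simply mirror the list above, and in practice you would pick them in Copilot's model selector or your API client.

```python
from enum import Enum

class CodingTask(Enum):
    DOCSTRINGS = "docstrings"
    COMPLEX_DEBUGGING = "complex_debugging"
    AGENTIC = "agentic"
    LEGACY_MIGRATION = "legacy_migration"
    REPETITIVE = "repetitive"

# Mirrors the model notes above; the mapping itself is an illustrative assumption.
ROUTES = {
    CodingTask.DOCSTRINGS: "GPT-4.1",
    CodingTask.COMPLEX_DEBUGGING: "GPT-5.2",
    CodingTask.AGENTIC: "GPT-5.2",
    CodingTask.LEGACY_MIGRATION: "Claude Sonnet 4.5",
    CodingTask.REPETITIVE: "GPT-5 mini",
}

def pick_model(task: CodingTask, default: str = "GPT-4.1") -> str:
    """Return the preferred model for a task type, else a general-purpose fallback."""
    return ROUTES.get(task, default)

if __name__ == "__main__":
    print(pick_model(CodingTask.LEGACY_MIGRATION))  # Claude Sonnet 4.5
```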

Grounded vs. Non-Grounded: Choosing Your Engine

Understanding the difference between grounded and non-grounded AI is the most important step in learning how to compare AI.

Non-Grounded AI (like ChatGPT or Claude with web search turned off) relies entirely on its training data. These are your “creative geniuses.” They are fantastic for brainstorming, drafting creative copy, and sophisticated reasoning. Because they aren’t distracted by the “noise” of the live web, they often produce more cohesive and stylistically pleasing writing. We recommend ChatGPT for brainstorming and drafting when you need to explore new ideas or move from a blank page to a first draft.

Grounded AI, on the other hand, is connected to the real world. These tools use Retrieval-Augmented Generation (RAG) to pull in external data, such as live search results or your own documents. If you need to know who won the game last night or what a competitor’s stock is trading at today, you need a grounded tool. Claude, known for sophisticated reasoning, often acts as a bridge: its high-level logic can be paired with uploaded documents to “ground” the conversation in your specific data.
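The retrieval step in RAG can be sketched in a few lines. You score your documents against the question, keep the best matches, and paste them into the prompt with source labels so the model answers from them. This toy version ranks documents by word overlap. Production systems typically use embeddings and a vector index instead. The documents and the prompt wording are hypothetical.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase word set used for naive overlap scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return ids of the k documents sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(docs, key=lambda doc_id: len(q & tokenize(docs[doc_id])), reverse=True)
    return ranked[:k]

def build_grounded_prompt(question: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that asks the model to answer only from labeled sources."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(question, docs, k))
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"{context}\n\nQuestion: {question}"
    )

if __name__ == "__main__":
    # Hypothetical internal documents.
    docs = {
        "q3-report": "Organic traffic grew 18 percent in Q3 after the site migration.",
        "brand-guide": "Use sentence case in all headlines.",
        "q2-report": "Organic traffic was flat in Q2.",
    }
    print(build_grounded_prompt("How did organic traffic change in Q3?", docs))
```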

Distinguishing Grounded Tools for Fact-Checking

When you’re in the middle of a deep-dive research project, you need tools that can handle real-time information. Copilot (for real-time information) and Gemini (for integrated search) are the heavy hitters here.

The key to how to compare AI in this category is citation quality. A good grounded tool won’t just give you an answer; it will show you exactly where that answer came from. We look for:

- Direct Links: Can I click the source?

- Contextual Accuracy: Does the source actually say what the AI claims it says?

- Source Authority: Is it citing a peer-reviewed journal or a random Reddit thread?

Beware of “hallucinated citations,” where a model provides a link that looks real but leads to a 404 page. This is why verification steps are essential.
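You can partly automate two of these checks: confirming that each cited link resolves, and confirming that a quoted passage actually appears in the source text. The sketch below uses Python's standard `urllib.request` for the link check. `check_quote` does a simple normalized substring match, which catches fabricated quotes but not subtle misreadings. Judging source authority still needs a human.

```python
import urllib.error
import urllib.request
from typing import Optional

def link_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status of a HEAD request, or None if the URL is unreachable."""
    try:
        req = urllib.request.Request(url, method="HEAD")
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 404 for a hallucinated citation
    except (OSError, ValueError):
        # DNS failure, refused connection, timeout, or malformed URL.
        return None

def normalize(text: str) -> str:
    """Collapse whitespace and case so formatting differences don't cause misses."""
    return " ".join(text.lower().split())

def check_quote(quote: str, source_text: str) -> bool:
    """True if the quoted passage appears (after normalization) in the source."""
    return normalize(quote) in normalize(source_text)
```

Some servers reject HEAD requests with a 405 status. Treat any non-200 result as a reason to check the link by hand, not as proof that the citation is fake.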

The Heavyweights: Claude, GPT, and Gemini Compared

To give you a better sense of the market, let’s look at the “Big Three.” Each has a distinct personality and use case.

| Feature | Claude Opus 4.6 | GPT-5.2 | Gemini 3 Pro |
|---|---|---|---|
| Primary Strength | Creative Reasoning | Coding & Agents | Long Context/Research |
| Context Window (tokens) | 200,000 | 400,000 | 2,000,000 |
| AA-Omniscience Score | 10.933 | 2.2 | 29.783 |
| Best For | Writing/Nuance | Complex Workflows | Massive Document Sets |

We often use NotebookLM for summarizing PDFs because it leverages Gemini’s massive context window to create “source-grounded” summaries. It’s essentially a research assistant that only looks at the files you give it.

On the other hand, we read the GPT-5 system card to understand its safety protocols and “agentic” capabilities. GPT-5.2 is designed to act more like a collaborator that can execute multi-step plans, making it ideal for automating SEO workflows.

Strengths and Weaknesses of Top Models

When we teach our clients how to compare AI, we emphasize that no model is perfect.

- Claude Sonnet: Often cited as the best “all-rounder” for coding and writing balance. It feels more “human” than GPT but can sometimes be overly cautious (refusing to answer prompts it deems “unsafe”).

- GPT-4.1: Highly reliable and fast, but its knowledge cutoff can be a hurdle for current events.

- Gemini Flash: Incredible speed and low cost, but it may sacrifice some depth in complex reasoning tasks.

- Scite Assistant: A specialized tool we love for research summaries because it focuses specifically on scientific citations and veracity.

Technical Benchmarks and the AA-Omniscience Index

If you want to move beyond “vibes” and look at data, you need to follow the leaderboards. In our Minneapolis office, we keep a close eye on several key benchmarks to see who is currently winning the AI arms race.

While many people look at the LMSYS Arena human-preference leaderboard (based on “blind taste tests” by real people), we prefer harder technical data. Artificial Analysis publishes quality benchmarks that show how models stack up on:

- Instruction Following: Did the model actually do what you asked?

- Math and Logic: Can it handle complex equations without “tripping”?

- Coding: Does the code it writes actually pass the benchmark’s test cases?
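Most coding benchmarks score a model by running its generated code against test cases and reporting the share that pass. The sketch below shows the idea with two hypothetical model outputs for one small task. Real benchmarks run many problems inside a sandbox, and this toy harness does not. Never `exec` untrusted model output outside a sandbox.

```python
def run_tests(source: str, func_name: str, cases: list[tuple[tuple, object]]) -> float:
    """Execute candidate code and return the fraction of test cases it passes.

    WARNING: exec runs arbitrary code; only use on trusted input or in a sandbox.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)
        func = namespace[func_name]
    except Exception:
        return 0.0  # code that doesn't load passes nothing
    passed = 0
    for args, expected in cases:
        try:
            if func(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crash counts as a failure
    return passed / len(cases)

# Hypothetical outputs from two models for "turn a page title into a URL slug".
model_a = "def slugify(t):\n    return '-'.join(t.lower().split())"
model_b = "def slugify(t):\n    return t.replace(' ', '-')"

CASES = [(("Hello World",), "hello-world"), (("SEO  Tips",), "seo-tips"), (("a",), "a")]

if __name__ == "__main__":
    print(run_tests(model_a, "slugify", CASES))  # 1.0
    print(run_tests(model_b, "slugify", CASES))  # passes 1 of 3
```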

Measuring Reliability and Hallucination

One of the most striking statistics in the AI world is that over 90% of models have negative AA-Omniscience scores. A negative score means the model gave more wrong answers than right ones: instead of admitting uncertainty, it guessed and hallucinated.

Currently, Gemini 3.1 Pro Preview leads the pack with a score of 29.783, making it one of the few exceptions to the hallucination rule. Claude Opus 4.6 follows with a respectable 10.933. Meanwhile, models like Llama 3.1 405B have scored as low as -18.167. This is why knowing how to compare AI benchmarks is vital: it tells you which models you can trust with your data.

Practical Selection: Speed, Cost, and Multimodal Tasks

For many businesses, the “best” model is simply the one that fits the budget while being “good enough.” This is where we look at the trade-off between a “brain” and a “coin.”

If you are running a high-volume SEO operation, you might use an inference provider like FriendliAI to reduce GPU costs. FriendliAI focuses on optimizing “Time to First Token” (TTFT), which makes applications feel snappier for the end user.

We also have to consider multimodal tasks. Can the model “see” an image and turn it into code? Can it “hear” a meeting recording and summarize it?

- GPT-5.2: Excellent at turning UML diagrams or screenshots into functional code.

- Claude: Great at analyzing complex charts and graphs.

- Gemini: The leader in video understanding and long-form audio analysis.

Side-by-side Testing and Playgrounds

The ultimate way to learn how to compare AI is to do it yourself. We recommend using a Model Playground for real-time comparison. These tools allow you to enter one prompt and see the outputs from three or four different models side-by-side.

This “parallel processing” approach reveals the “personality” of each model. You’ll notice that one might be more concise, another more creative, and a third more prone to listing facts. For our SEO work at Demandflow.ai, we often run the same keyword strategy prompt through multiple models to see which one identifies the most nuanced competitive gaps.
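If you'd rather script the comparison than click through a playground, the pattern is simple. Send one prompt to several models at the same time and collect the answers side by side. The model callables below are hypothetical stubs. In practice, each one would wrap a call to a provider's SDK.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

ModelFn = Callable[[str], str]

def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run the same prompt through every model in parallel; return name -> output."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}

# Hypothetical stubs standing in for real API calls.
stub_models = {
    "model-a": lambda p: f"Concise: 3 gaps for '{p}'",
    "model-b": lambda p: f"Creative: a new angle on '{p}'",
    "model-c": lambda p: f"Factual: 10 listed keywords for '{p}'",
}

if __name__ == "__main__":
    results = compare("Find competitive gaps for 'ai seo tools'", stub_models)
    for name, output in results.items():
        print(f"--- {name} ---\n{output}")
```

Running the calls in threads means the comparison takes about as long as the slowest model rather than the sum of all of them. That matters once each call is a real network request.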

Frequently Asked Questions about AI Comparison

What is the difference between grounded and non-grounded AI?

Grounded AI has access to external data sources (like the web or your own uploaded files) to verify its answers. Non-grounded AI relies solely on the information it was given during its initial training. Grounded is better for facts; non-grounded is often better for creative writing.

Which AI model has the highest knowledge reliability score?

According to recent benchmarks, Gemini 3.1 Pro Preview holds the highest AA-Omniscience Index score (29.783), indicating it is the most reliable at providing correct information while minimizing hallucinations.

How do I choose between a fast model and an intelligent model?

It depends on the volume and complexity of the task. For simple, repetitive tasks like sentiment analysis or basic formatting, choose a “Flash” or “Mini” model for speed and cost-efficiency. For strategic planning, coding complex features, or deep research, choose a “Pro” or “Opus” model.

Conclusion

Learning how to compare AI is not a one-time task; it is an ongoing process of evaluation. At Clayton Johnson SEO, we believe that clarity and structure are the foundations of leverage. Whether we are building a structured SEO strategy or an AI-augmented marketing workflow, we always start by picking the right tool for the job.

The major models (Claude, GPT, and Gemini) each offer unique advantages. By using frameworks like the AA-Omniscience Index and testing models side-by-side in playgrounds, you can stop guessing and start building.

If you’re looking for a structured growth architecture that integrates these advanced AI workflows into a measurable SEO system, that is exactly why we built Demandflow.ai. We don’t just provide tactics; we provide the infrastructure for compounding growth.

Ready to level up your stack? Find the right AI tools for your SEO strategy and start building your own growth engine today.