How to Keep Your Algorithms on Their Best Behavior

Why AI Ethics Principles Matter for Every Business Building With AI

“What are AI ethics principles?” is one of the most important questions any founder or marketing leader can ask. Here is a quick answer:

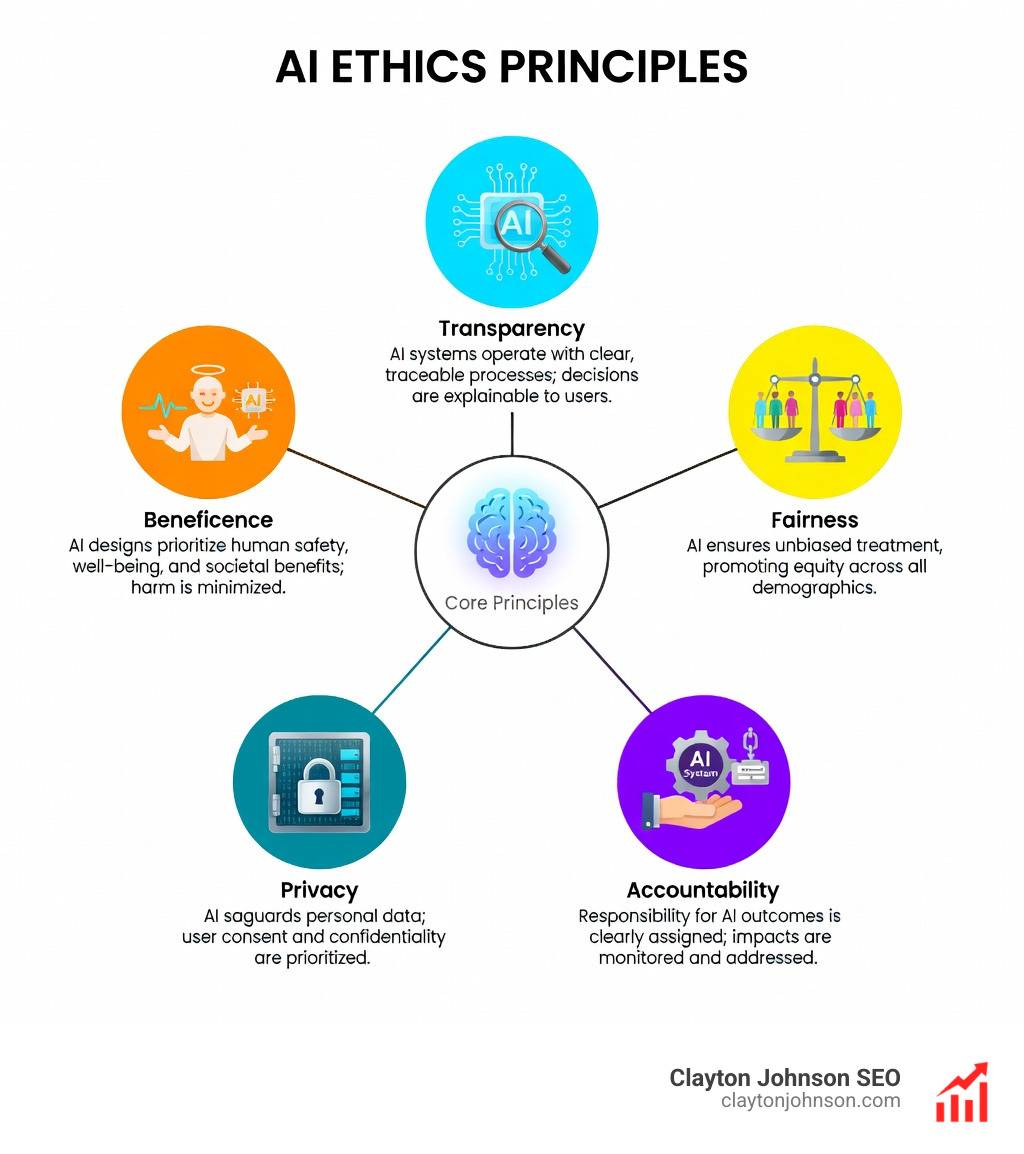

AI ethics principles are a set of guidelines that govern how AI systems are designed, deployed, and managed responsibly. The core principles are:

- Transparency – AI decisions should be explainable and understandable

- Fairness – AI systems must treat all people equitably, without bias

- Accountability – Clear responsibility must exist for AI outcomes

- Privacy – Personal data must be protected throughout the AI lifecycle

- Reliability – AI systems should perform consistently and safely

- Human oversight – Humans must remain in control of consequential decisions

These are not just ideals. They are the operating standards that separate trustworthy AI from dangerous AI.

People have been thinking about these questions for decades, and what was once mostly speculative is now a business-critical reality. Research from the Capgemini Research Institute found that AI creates ethical issues in at least 9 out of 10 businesses. That means if you are building with AI, you are almost certainly navigating an ethical challenge right now – whether you recognize it or not.

The stakes are high. Biased systems cause real harm. Opaque decisions erode trust. And without clear accountability, organizations face serious legal, reputational, and operational risk.

I’m Clayton Johnson, an SEO and growth strategist who works at the intersection of AI systems, structured content architecture, and scalable marketing infrastructure. Understanding AI ethics principles is foundational to building AI workflows that are both effective and defensible. In the sections below, I will break down each principle clearly, show where organizations get it wrong, and give you a practical framework for building AI systems your users and stakeholders can trust.

Defining AI Ethics and Why They Matter

At its heart, AI ethics is a multidisciplinary field focused on ensuring that artificial intelligence respects human values and avoids causing undue harm. We aren’t just talking about robots taking over the world; we are talking about the algorithms that decide who gets a mortgage, which resume moves to the top of the pile, and how your personal data is used to predict your next purchase.

The reason these principles matter so much today is that AI adoption is outstripping our typical safety checks. A recent global study found that 73% of consumers trust content produced by generative AI, yet awareness of risks like misinformation and misuse remains dangerously low. When 9 out of 10 businesses are already hitting ethical roadblocks, we can no longer treat ethics as a “nice-to-have” feature.

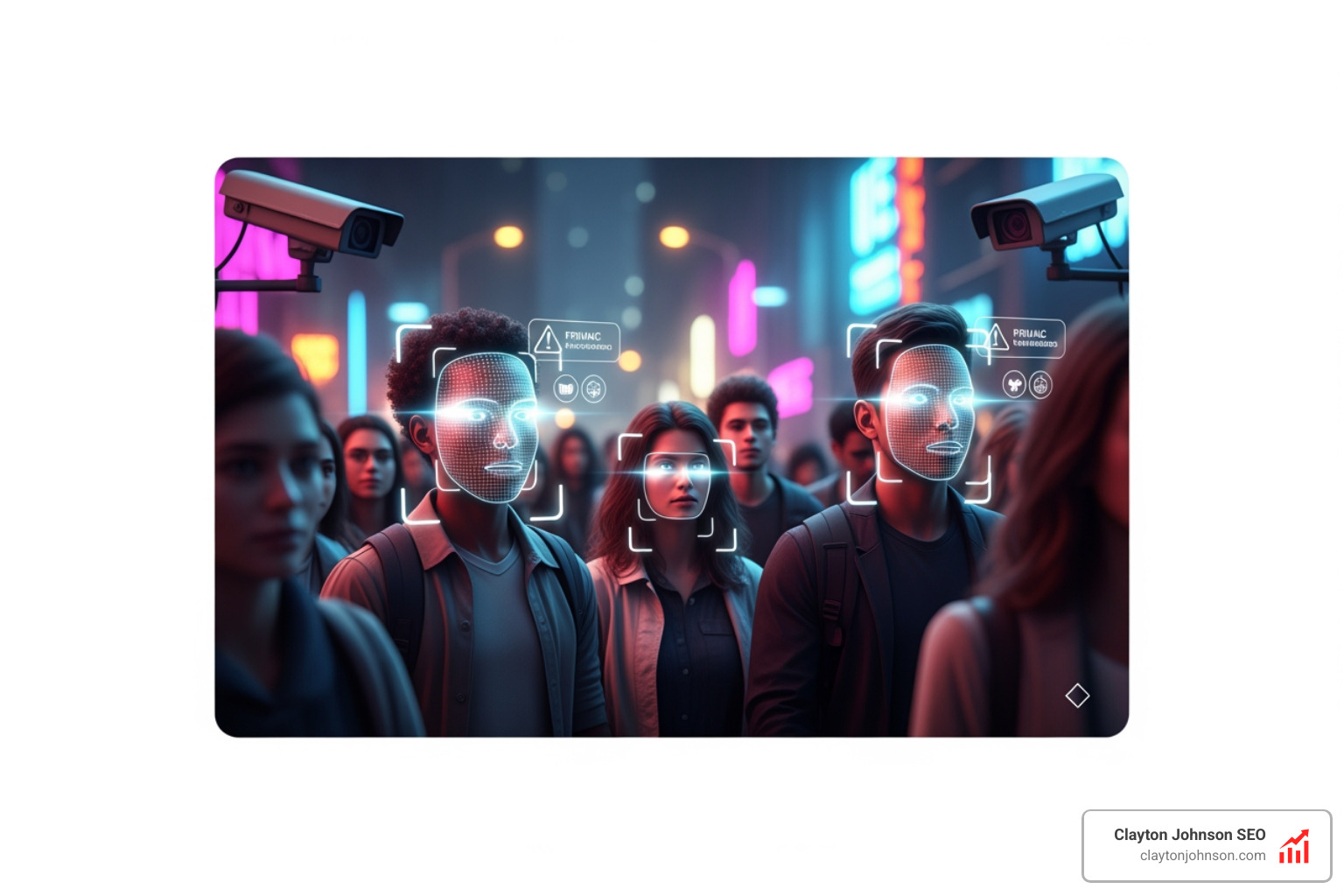

Ethical foundations protect human rights and maintain societal safety. Without them, AI can inadvertently amplify existing inequalities, infringe on privacy through mass surveillance, or even threaten physical safety in sectors like healthcare and autonomous transit. For us, building “trustworthy tech” means moving beyond the hype to ensure that every automated decision is grounded in a moral framework.

What are AI ethics principles?

When we ask, “What are AI ethics principles?”, we are looking for the guardrails of the digital age. These principles act as a compass for developers, helping them navigate the “black box” of machine learning. While different organizations use slightly different wording, the consensus usually lands on several core pillars.

- Transparency: The inner workings of the AI shouldn’t be a secret.

- Fairness: The system shouldn’t have “favorites” based on race, gender, or background.

- Accountability: If the AI breaks something, we need to know who is responsible.

- Privacy: Our data belongs to us, and AI should respect that.

- Reliability: The AI needs to do what it says it will do, every single time.

To see how these fit into broader industry expectations, you can explore current AI governance standards, which help bridge the gap between abstract values and technical requirements.

Transparency and Explainability in Decision-Making

One of the biggest hurdles in AI is the “black box” problem. This happens when an algorithm makes a decision—like denying a loan—but even the developers can’t explain exactly why it made that choice. For AI to be ethical, it needs to be transparent.

Transparency isn’t just about dumping thousands of lines of code onto a public website. Real transparency involves explainability: the ability to provide a human-readable reason for an outcome. For example, if a bank uses AI to screen applications, the customer should receive a clear explanation of the factors that led to a refusal.

We achieve this through:

- Algorithmic Disclosure: Being open about which models are being used.

- Public Education: Helping non-technical users understand how AI impacts their lives.

- Traceability: Keeping a record of the data and processes that led to a specific decision.
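To make explainability concrete, here is a minimal sketch of "reason codes" for the loan example above. It assumes a simple linear scoring model; the feature names, weights, threshold, and reference profile are all hypothetical and chosen only for illustration, not taken from any real lender.

```python
from dataclasses import dataclass

# Hypothetical weights for an illustrative linear credit score.
# Positive weights raise the score; negative weights lower it.
WEIGHTS = {
    "income_to_debt_ratio": 2.0,
    "years_of_credit_history": 0.5,
    "missed_payments_last_year": -1.5,
}
BIAS = -3.0
APPROVAL_THRESHOLD = 0.0

# Hypothetical profile of a comfortably approved applicant, used as the
# baseline that each refused applicant is compared against.
REFERENCE = {
    "income_to_debt_ratio": 2.0,
    "years_of_credit_history": 6.0,
    "missed_payments_last_year": 0.0,
}


@dataclass
class Decision:
    approved: bool
    score: float
    reasons: list[str]  # human-readable factors, most harmful first


def explain_decision(applicant: dict[str, float], top_n: int = 2) -> Decision:
    """Score an applicant and, on refusal, list the factors that hurt most."""
    score = BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)
    approved = score >= APPROVAL_THRESHOLD

    reasons: list[str] = []
    if not approved:
        # Score impact of each feature relative to the reference profile.
        gaps = {
            name: WEIGHTS[name] * (applicant[name] - REFERENCE[name])
            for name in WEIGHTS
        }
        # Ascending sort puts the most negative (most harmful) gaps first.
        worst = sorted(
            (item for item in gaps.items() if item[1] < 0),
            key=lambda item: item[1],
        )
        reasons = [
            f"{name.replace('_', ' ')} is weaker than the reference profile "
            f"(score impact {gap:+.1f})"
            for name, gap in worst[:top_n]
        ]
    return Decision(approved, score, reasons)
```

Calling `explain_decision` with an applicant dictionary returns the outcome together with the top factors behind a refusal, which is the kind of human-readable explanation a customer could actually receive. Real models are rarely this simple, so treat this as a starting point rather than a production approach.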

If you are a researcher or developer, specialized guides can help you train AI responsibly from the ground up.

Accountability and Governance Throughout the Lifecycle

If a self-driving car causes an accident, who is at fault? Is it the programmer, the owner, or the manufacturer? This is the core of the accountability principle.

Accountability means that “AI actors” (the people and companies building these tools) must take responsibility for the system’s impact. This requires a comprehensive approach to AI governance that covers the entire lifecycle, from the first line of code to post-deployment monitoring.

Organizations should implement:

- Audit Trails: Detailed logs of system performance and changes.

- Risk Management: Systematic checks for potential harm at every stage.

- Corporate Responsibility: A culture where ethical failures are treated as seriously as financial ones.
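As one way to picture an audit trail, the sketch below keeps an append-only log in which each entry stores the hash of the entry before it, so later tampering becomes detectable. The `AuditTrail` class and its field names are hypothetical; a real system would also need durable storage and access controls, which this example leaves out.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """Hash an entry's canonical JSON form with SHA-256."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only log where each entry stores the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, event: str, details: dict) -> dict:
        """Append one event, linking it to the entry that came before."""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "event": event,
            "details": details,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        # The digest is computed before the "hash" key is added.
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {key: value for key, value in entry.items() if key != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A team might call `record` whenever a model is retrained, deployed, or overridden by a human reviewer, then run `verify` during an audit to confirm the history is intact.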

Mitigating Algorithmic Bias Through Data Quality

The old saying “garbage in, garbage out” has never been more true than in AI. If you train an AI on biased data, you will get biased results. For example, some hiring algorithms have favored male candidates because they were trained on historical data from an era when men held most of the roles.

Similarly, a University of Washington study found that some AI resume screeners favored White-associated names 85% of the time, while Black-associated names were favored only 9% of the time. We also see this in the justice system, where predictive policing software has led to over-policing in communities of color based on skewed arrest data.

To mitigate this, we must focus on:

- Representative Sampling: Ensuring training data reflects the real-world diversity of the population.

- Inclusive Design: Involving diverse teams in the development process to spot blind spots.

- Ethical Data Sourcing: Respecting consent and privacy when gathering datasets.
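Bias is easier to manage once it is measured. The sketch below computes the selection rate for each group and the ratio between the lowest and highest rates, a figure often compared against the 0.8 "four-fifths" rule of thumb from US employment-selection guidance. The group labels and function names are hypothetical, and a single ratio is only a first screening signal, not proof of fairness.

```python
from collections import Counter
from typing import Iterable


def selection_rates(outcomes: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Return the share of selected candidates per group.

    `outcomes` is a sequence of (group, was_selected) pairs.
    """
    totals: Counter = Counter()
    selected: Counter = Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity
    return min(rates.values()) / highest


# Illustrative data: 5 of 10 selected in one group, 2 of 10 in the other.
sample = (
    [("group_a", True)] * 5 + [("group_a", False)] * 5
    + [("group_b", True)] * 2 + [("group_b", False)] * 8
)
ratio = disparate_impact_ratio(selection_rates(sample))
needs_review = ratio < 0.8  # flag for a closer human look
```

In this made-up sample, the second group is selected far less often than the first, so the system would be flagged for review before anyone relies on its output.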

Global Standards: UNESCO, OECD, and the EU AI Act

Because AI doesn’t stop at national borders, we need international cooperation. Several major frameworks now guide how we think about AI ethics principles on a global scale.

| Framework | Key Focus Areas | Scope |
|---|---|---|
| UNESCO Recommendation | Human rights, dignity, and environmental flourishing | 194 Member States |
| OECD AI Principles | Innovation, trust, and human-centric values | Global standard for policy |
| EU AI Act | Risk-based regulation and strict compliance | European Union (Global influence) |

The UNESCO Recommendation on the Ethics of AI was the first-ever global standard, emphasizing that human rights must be the cornerstone of technology. Meanwhile, the OECD AI Principles provide a roadmap for governments to align their policies with international standards.

For businesses, mastering enterprise AI governance and regulatory standards is essential for staying compliant as these global rules begin to take the shape of local laws, like the EU AI Act.

Practical Steps for Building Ethical AI Systems

Building ethical AI isn’t a one-time task; it’s a continuous process. We recommend a “shift-left” mentality, which means integrating ethical considerations at the very beginning of the design phase rather than trying to fix problems after the product has launched.

Here are the practical steps we take:

- Ethical Audits: Regularly evaluate your AI systems for bias, fairness, and unintended consequences.

- Interdisciplinary Teams: Don’t just leave it to the engineers. Include ethicists, social scientists, and legal experts in your development cycles.

- User Feedback Loops: Create ways for real-world users to report issues or biased outcomes.

- Continuous Monitoring: AI models can “drift” over time as they ingest new data, so they need constant supervision.
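Continuous monitoring can start small. One common way to watch for drift is the Population Stability Index (PSI), which compares how a feature or score was distributed at training time with how it looks in production. The sketch below is a plain-Python version under simple assumptions: equal-width bins, a small floor for empty bins, and the widely quoted rule of thumb that values above roughly 0.25 deserve investigation. The function name and thresholds are illustrative, not a standard API.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one numeric feature; 0.0 means identical bins."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # fall back if all values are equal

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(index, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    baseline_share = proportions(expected)
    current_share = proportions(actual)
    return sum(
        (current - baseline) * math.log(current / baseline)
        for baseline, current in zip(baseline_share, current_share)
    )


# Illustrative data: scores seen at training time vs. scores seen later.
training_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]
drift = population_stability_index(training_scores, production_scores)
needs_investigation = drift > 0.25
```

Running a check like this on a schedule, and routing flagged results to a human reviewer, turns "constant supervision" into something a team can actually operate.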

To get started, establish a clear internal policy for ethical AI implementation. From there, you can focus on building a responsible AI framework that aligns with your specific business goals.

Corporate Case Studies: Google, Microsoft, and IBM

Leading tech companies have already begun operationalizing these principles. While we should always look at corporate claims with a critical eye, their frameworks provide a useful template for others.

- Google: Focuses on socially beneficial AI and avoiding the creation or reinforcement of unfair bias.

- Microsoft: Uses six core principles—accountability, inclusiveness, reliability, fairness, transparency, and privacy—to guide their Azure AI tools.

- IBM: Is often cited for its trustworthy AI initiatives, utilizing a “Responsible Use of Technology” framework to ensure their systems are transparent and robust.

These companies show that ethics can be integrated into the business model, helping to mitigate risk while fostering innovation.

Future Concerns: Deepfakes, Emotional AI, and Automation

As technology evolves, new ethical frontiers are opening up. We are no longer just worried about simple bias; we are facing challenges that could fundamentally alter our reality.

- Deepfakes: The rise of deepfakes poses a massive threat to personal identity and can be used as a weapon for disinformation.

- Emotional AI: Researchers at MIT warn that AI designed to detect and respond to human emotions could lead to unprecedented levels of manipulation.

- Autonomous Weapons: There is a growing global movement among groups like Human Rights Watch to ban autonomous weapons that can make lethal decisions without human intervention.

- Job Displacement: A Goldman Sachs report suggests that AI could expose the equivalent of roughly 300 million full-time jobs to automation, raising questions about economic equity and the future of work.

These issues remind us that AI ethics principles are not static. They must evolve as quickly as the code itself.

Frequently Asked Questions about AI Ethics

What is the difference between ethical AI and responsible AI?

While the terms are often used interchangeably, “Ethical AI” usually refers to the moral philosophy and principles (the “why”), whereas “Responsible AI” refers to the practical application, governance, and frameworks used to implement those principles (the “how”).

Who is accountable for AI failures?

Accountability typically rests with the “AI actors” involved in the system’s lifecycle. This includes the developers who wrote the code, the organizations that deployed it, and the operators managing it. Clear traceability and human oversight are required to ensure that responsibility is never shifted solely to the algorithm.

How does biased data affect AI?

Biased data acts as a flawed foundation. If the data used to train an AI is incomplete or reflects historical prejudices, the AI will learn those patterns and replicate them. This leads to discriminatory outcomes in areas like hiring, lending, and law enforcement, often hitting marginalized communities the hardest.

Conclusion

Understanding AI ethics principles is the first step toward building a sustainable, growth-oriented business in the modern era. At Clayton Johnson SEO, we believe that the most successful companies won’t just be the ones with the fastest AI, but the ones with the most structured growth architecture and the highest levels of trust.

By prioritizing transparency, fairness, and accountability, we can move away from the “move fast and break things” mentality and toward a future where technology empowers everyone. Whether you are building SEO strategy, content architecture, or AI-enhanced execution systems, ethics must be the infrastructure that holds it all together.

For more information on how to manage these systems effectively, visit our guide on AI Governance to see how we help founders and marketing leaders build for the long term.