Chain Reaction: How to Scale Your AI Workflows with Multi-Layer Prompts

Why Single Prompts Break Down at Scale (And What to Do Instead)

Chaining prompts scales AI by breaking complex tasks into focused, sequential steps: each prompt handles one job and passes its output to the next, so the whole system compounds in reliability and quality.

Here’s the core idea in plain terms:

- Decompose — Split a complex task into discrete subtasks

- Sequence — Feed the output of each step as input to the next

- Control — Debug, test, and improve each step independently

- Scale — Handle workflows that would overwhelm any single prompt

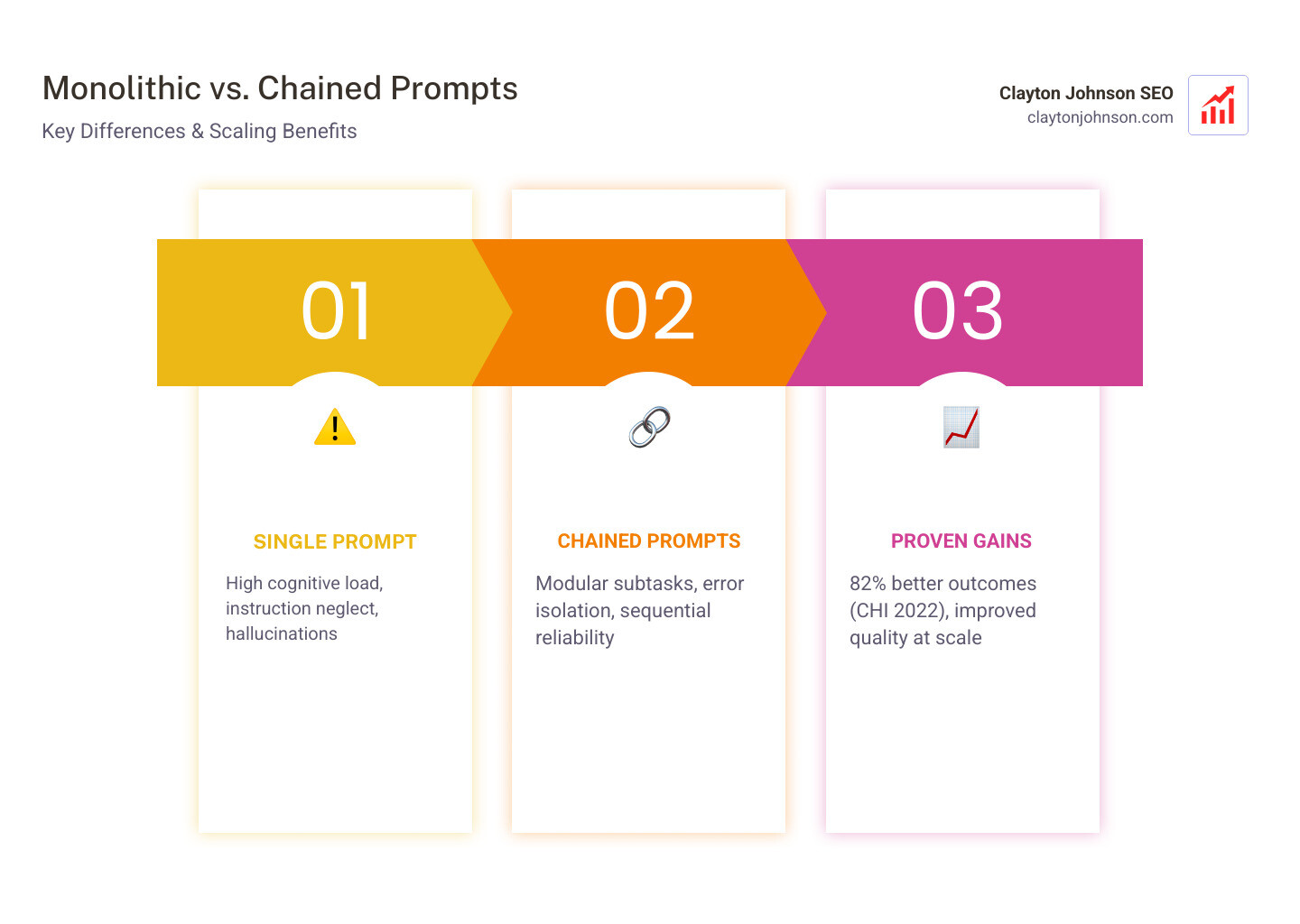

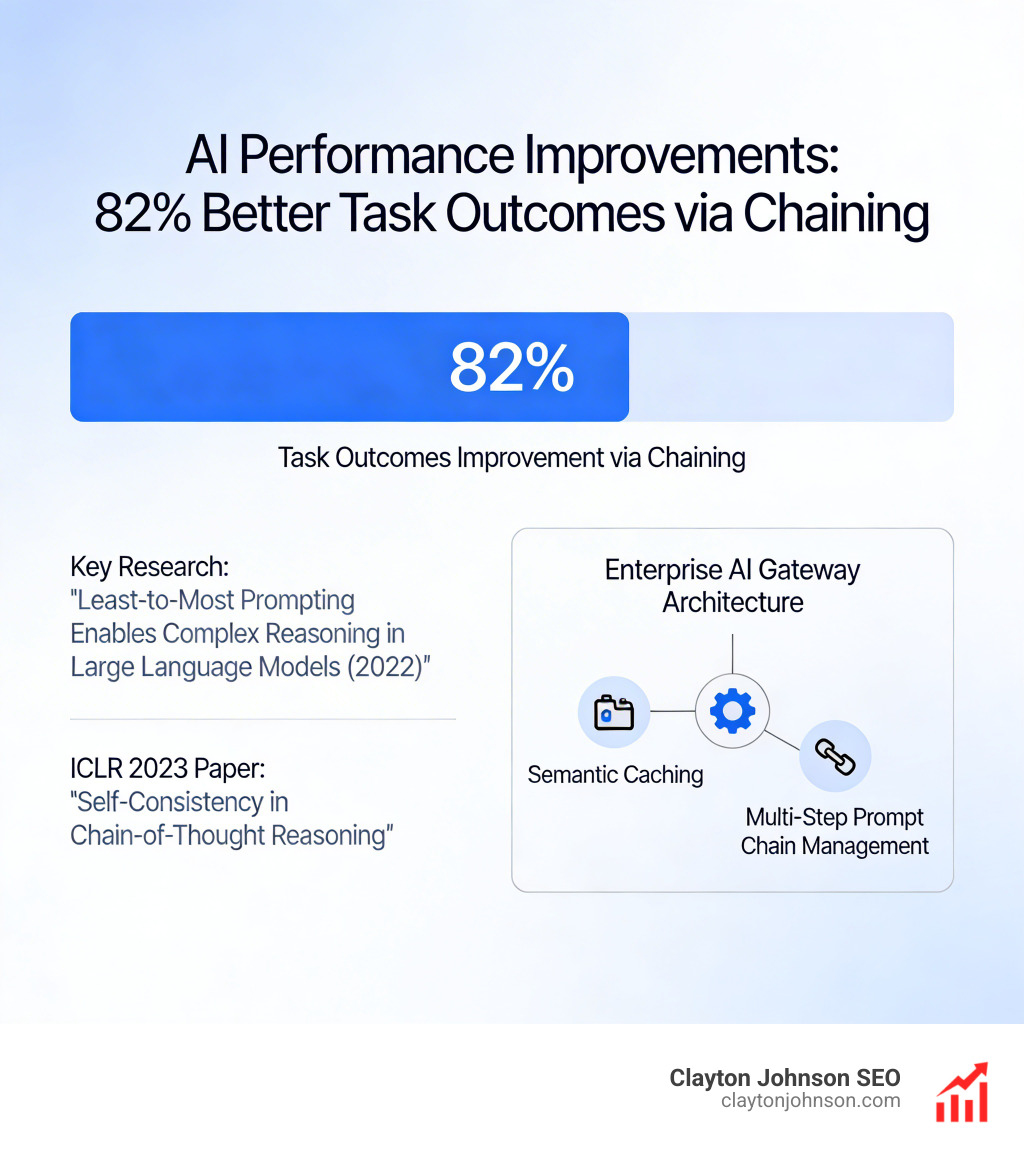

Think about what happens when you hand a single prompt a multi-step task. The model has to summarize, analyze, validate, and format, all at once. Research from CHI 2022 found that chained approaches improved task outcomes 82% of the time compared to single-prompt interfaces. The reason comes down to cognitive load: when a model is asked to do too much at once, it drops instructions, drifts in context, or hallucinates details. Breaking tasks apart reduces all three problems.

This isn’t just a theory. It’s how reliable AI systems get built at scale.

I’m Clayton Johnson — SEO strategist and AI workflow architect. I’ve spent years building AI-augmented marketing systems and structured growth frameworks, and understanding how chaining prompts scales AI is central to every production-grade workflow I design. Let’s walk through exactly how to apply it.

What is Prompt Chaining and How Does It Work?

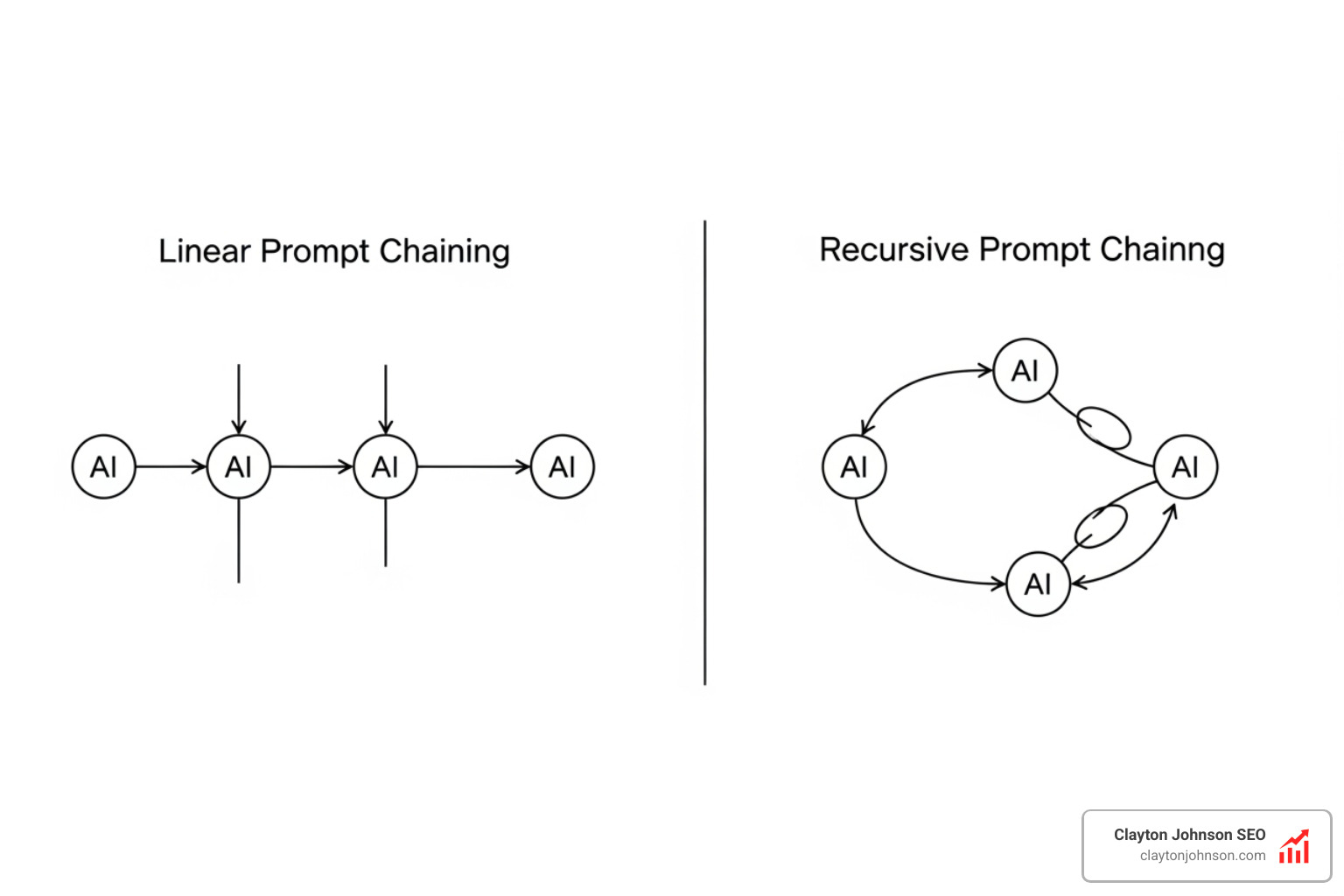

At its heart, prompt chaining is the process of linking multiple Large Language Model (LLM) calls together. Instead of asking an AI to “write a 2,000-word SEO article with research, keywords, and formatting” in one go, we break that massive request into a series of smaller, interconnected prompts.

In a chain, the output of Prompt A becomes the input for Prompt B. This creates a “data contract” between steps. For example, Prompt A might extract key themes from a transcript and output them as a clean JSON list. Prompt B then takes that JSON list and expands each theme into a detailed paragraph. By defining these handoffs, we ensure the AI stays on track.
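Here is a minimal sketch of that data contract in Python. `call_llm` is a hypothetical stand-in for whatever model API you use; it returns canned text so the example runs offline. The point is the handoff: Prompt B only runs on output that has already parsed as a JSON list of strings.

```python
import json


def call_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model API call (canned responses for the demo)."""
    if prompt.startswith("Extract"):
        return '["pricing", "onboarding", "support"]'
    return "Paragraph about: " + prompt.split(":", 1)[1].strip()


def extract_themes(transcript: str) -> list[str]:
    # Prompt A: ask for the themes as a JSON list and nothing else.
    raw = call_llm(f"Extract the key themes as a JSON list of strings:\n{transcript}")
    themes = json.loads(raw)  # raises json.JSONDecodeError if the output is not JSON
    if not isinstance(themes, list) or not all(isinstance(t, str) for t in themes):
        raise ValueError("Prompt A broke the contract: expected a JSON list of strings")
    return themes


def expand_theme(theme: str) -> str:
    # Prompt B: consumes exactly one validated item from Prompt A's output.
    return call_llm(f"Write a detailed paragraph about this theme: {theme}")


themes = extract_themes("...customer call transcript...")
paragraphs = [expand_theme(t) for t in themes]
```

Failing loudly at the handoff is a design choice: a malformed Step A output stops the chain before it can quietly corrupt every step downstream.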

As one guide to prompt chaining techniques explains, this systematic approach decomposes complex operations into sequential, focused subtasks. This is the foundation of building “agentic” workflows: systems that don’t just respond to a query, but execute a plan.

How chaining prompts scales AI through modularity

The secret to scaling isn’t just “more prompts”; it’s modularity. When we build AI workflows using atomic operations, we treat each step like a Lego brick. If the “summarization” step of our chain starts hallucinating, we don’t have to rewrite the entire system. We just fix that one specific prompt.
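One way to sketch the Lego-brick idea: treat every step as a plain function from text to text, and let a tiny runner pass each output into the next step. The step functions below are hypothetical placeholders for real prompts; swapping `summarize_v1` for `summarize_v2` changes one brick without touching the rest of the chain.

```python
from typing import Callable

Step = Callable[[str], str]


def run_chain(steps: list[Step], text: str) -> str:
    """Run each step in order; the output of one step becomes the input of the next."""
    for step in steps:
        text = step(text)
    return text


# Hypothetical steps; in practice each would wrap its own prompt and model call.
def summarize_v1(text: str) -> str:
    return text[:20]  # naive truncation standing in for a weak summarization prompt


def summarize_v2(text: str) -> str:
    return text.split(".")[0] + "."  # an improved "prompt" swapped in later


def to_bullets(text: str) -> str:
    return "- " + text


doc = "Chaining splits work into steps. Each step is small."
before = run_chain([summarize_v1, to_bullets], doc)
after = run_chain([summarize_v2, to_bullets], doc)  # only one brick changed
```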

This modularity allows for better context engineering. By only giving the AI the specific data it needs for a single subtask, we prevent “instruction neglect”—a common issue where models ignore the middle of a long prompt. Organizations like NivaLabs AI emphasize that this approach is foundational for building sophisticated, context-aware AI agents that can think, plan, and act step-by-step.
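A minimal sketch of that kind of context scoping, assuming a shared `state` dictionary that accumulates results across the chain. The field names and the `STEP_INPUTS` mapping are illustrative, not taken from any particular framework; each step’s prompt is built only from the fields it declares.

```python
# Shared state accumulated across the chain (illustrative field names).
state = {
    "transcript": "long raw transcript ...",
    "themes": ["pricing", "support"],
    "brand_voice": "friendly, concise",
    "audience": "small-business owners",
}

# Each step declares the only fields it is allowed to see.
STEP_INPUTS = {
    "draft_intro": ["themes", "audience"],
    "apply_voice": ["brand_voice"],
}


def build_context(step: str, state: dict) -> dict:
    """Return only the slice of state that this step needs."""
    return {key: state[key] for key in STEP_INPUTS[step]}


def build_prompt(step: str, state: dict) -> str:
    ctx = build_context(step, state)
    lines = [f"{key}: {value}" for key, value in ctx.items()]
    return f"Task: {step}\n" + "\n".join(lines)


prompt = build_prompt("draft_intro", state)
```

Here the `draft_intro` prompt never sees the raw transcript, which keeps it short and focused.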

The difference between chaining and chain-of-thought

It’s easy to confuse prompt chaining with “Chain-of-Thought” (CoT) prompting. While they sound similar, they serve different purposes:

- Chain-of-Thought (CoT): This happens within a single prompt. You ask the AI to “think step-by-step” before giving an answer. It’s like a student showing their work on a math test. (You can learn more in our Beginner’s Guide to AI Prompt Engineering.)

- Prompt Chaining: This involves multiple distinct prompts. Each step is a separate API call. This provides much higher transparency because you can see exactly what the AI “thought” at Step 1 before it moved to Step 2.

Chaining is essentially an externalized version of CoT that gives human developers more control and the ability to intervene between steps.
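To make the contrast concrete: with CoT you would send one prompt ending in “think step-by-step” and get the reasoning and the answer back in a single response. With chaining, the intermediate result comes back on its own, so you can inspect or edit it before the next call. The sketch below assumes a hypothetical `call_llm` stub and a `review` callback that could be a human approval step or a simple rule.

```python
from typing import Callable


def call_llm(prompt: str) -> str:
    """Hypothetical model call with canned responses so the sketch runs offline."""
    if prompt.startswith("List the facts"):
        return "Order #123 arrived damaged; customer wants a refund"
    return "Draft reply based on: " + prompt.split("\n", 1)[1]


def run_with_checkpoint(question: str, review: Callable[[str], str]) -> str:
    # Step 1: the intermediate reasoning comes back as its own output.
    facts = call_llm(f"List the facts relevant to: {question}")
    # Between steps, a person or a rule can inspect and edit that output.
    facts = review(facts)
    # Step 2: only the reviewed facts move forward.
    return call_llm(f"Write a reply using these facts:\n{facts}")


# Example review rule: redact the order number before Step 2 sees it.
reply = run_with_checkpoint(
    "What happened with this order?",
    review=lambda facts: facts.replace("#123", "[order id]"),
)
```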

The Research Evidence: Why Chaining Outperforms Monolithic Prompts

Is the extra effort of building a chain actually worth it? The data says yes. A paper published in Findings of ACL 2024 shows that prompt chaining consistently outperforms monolithic prompts for text summarization.

When a single prompt tries to handle drafting, critiquing, and refining all at once, the quality of the final output suffers. Chained refinement—where one prompt drafts and a second, separate prompt critiques—delivers superior results.

| Feature | Monolithic Stepwise Prompt | Chained Refinement |
|---|---|---|
| Accuracy | Lower (Instruction Drift) | Higher (Focused Subtasks) |
| Transparency | Low (Black Box) | High (Step-by-Step Logs) |
| Debuggability | Hard (Must tweak everything) | Easy (Isolate the weak link) |
| Reliability | Variable | Consistent (15.6% improvement) |
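A minimal sketch of the draft-then-critique pattern as three separate calls. `call_llm` is a hypothetical stub with canned responses for each role, and the prompts are illustrative rather than the wording used in the ACL 2024 study.

```python
def call_llm(prompt: str) -> str:
    """Hypothetical model call with canned outputs for each role."""
    if prompt.startswith("Summarize"):
        return "The study tested chaining. It was good."
    if prompt.startswith("Critique"):
        return "Vague: say what improved and how."
    return "The study found chained refinement produced better summaries."


def chained_refinement(source: str) -> dict:
    # Three focused calls instead of one prompt that drafts, critiques, and refines.
    draft = call_llm(f"Summarize the following text:\n{source}")
    critique = call_llm(
        f"Critique this summary against the source.\nSource:\n{source}\nSummary:\n{draft}"
    )
    final = call_llm(
        f"Revise the summary to address the critique.\nSummary:\n{draft}\nCritique:\n{critique}"
    )
    # Returning every intermediate output keeps the chain auditable.
    return {"draft": draft, "critique": critique, "final": final}


result = chained_refinement("...source article...")
```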

How chaining prompts scales AI reasoning quality

Beyond simple text generation, multi-step prompting also improves reasoning quality. On math word-problem benchmarks like GSM8K and SVAMP, self-consistency (a close relative of chaining that samples several reasoning paths and keeps the majority answer) has achieved double-digit accuracy improvements.
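Strictly speaking, self-consistency samples several reasoning paths in parallel rather than chaining them, then keeps the most common final answer. A minimal sketch, where `sample_reasoning` is a hypothetical stand-in for a model call at non-zero temperature and the canned completions are illustrative:

```python
import itertools
from collections import Counter

# Hypothetical sampled completions for one word problem; in practice each
# would come from a separate model call with temperature > 0.
_SAMPLES = itertools.cycle([
    "... so the answer is 18",
    "... so the answer is 18",
    "... so the answer is 26",
    "... so the answer is 18",
    "... so the answer is 9",
])


def sample_reasoning(question: str) -> str:
    return next(_SAMPLES)


def final_answer(completion: str) -> str:
    # Take the text after the last "answer is"; real parsers need to be more robust.
    return completion.rsplit("answer is", 1)[1].strip()


def self_consistency(question: str, n: int = 5) -> str:
    answers = [final_answer(sample_reasoning(question)) for _ in range(n)]
    # Majority vote over final answers, regardless of how each path got there.
    return Counter(answers).most_common(1)[0][0]


print(self_consistency("A word problem ..."))  # prints 18
```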

The paper “Least-to-Most Prompting Enables Complex Reasoning in Large Language Models” found that breaking a problem into its smallest components allowed GPT-3 to achieve near-perfect compositional generalization. This means the AI could solve problems significantly harder than the examples it was originally shown, simply because the chain provided a logical ladder to climb. For more on how sampling diverse reasoning paths leads to more consistent and correct answers, see the ICLR 2023 paper on self-consistency.
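Least-to-most prompting works in two stages: first decompose the problem into simpler subquestions, then answer them in order, feeding each earlier answer into the next prompt. A minimal sketch with hypothetical `decompose` and `answer` functions that return canned text in place of model calls:

```python
def decompose(problem: str) -> list[str]:
    """Stage 1 (hypothetical model call): canned decomposition for the demo problem."""
    return [
        "How long do 3 laps take at 4 minutes each?",
        "How many 2-minute rests are there between 3 laps?",
        "What is the total time?",
    ]


def answer(prompt: str) -> str:
    """Stage 2 (hypothetical model call): canned answers keyed on the last question."""
    last_question = prompt.rsplit("Q: ", 1)[1]
    return {
        "How long do 3 laps take at 4 minutes each?": "12 minutes",
        "How many 2-minute rests are there between 3 laps?": "2 rests, so 4 minutes",
        "What is the total time?": "12 + 4 = 16 minutes",
    }[last_question]


def least_to_most(problem: str) -> str:
    context = f"Problem: {problem}\n"
    result = ""
    for sub in decompose(problem):
        # Each subquestion sees every earlier Q/A pair, so later steps build on earlier ones.
        result = answer(context + f"Q: {sub}")
        context += f"Q: {sub}\nA: {result}\n"
    return result


final = least_to_most("A runner does 3 laps of 4 minutes with 2-minute rests between laps.")
```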

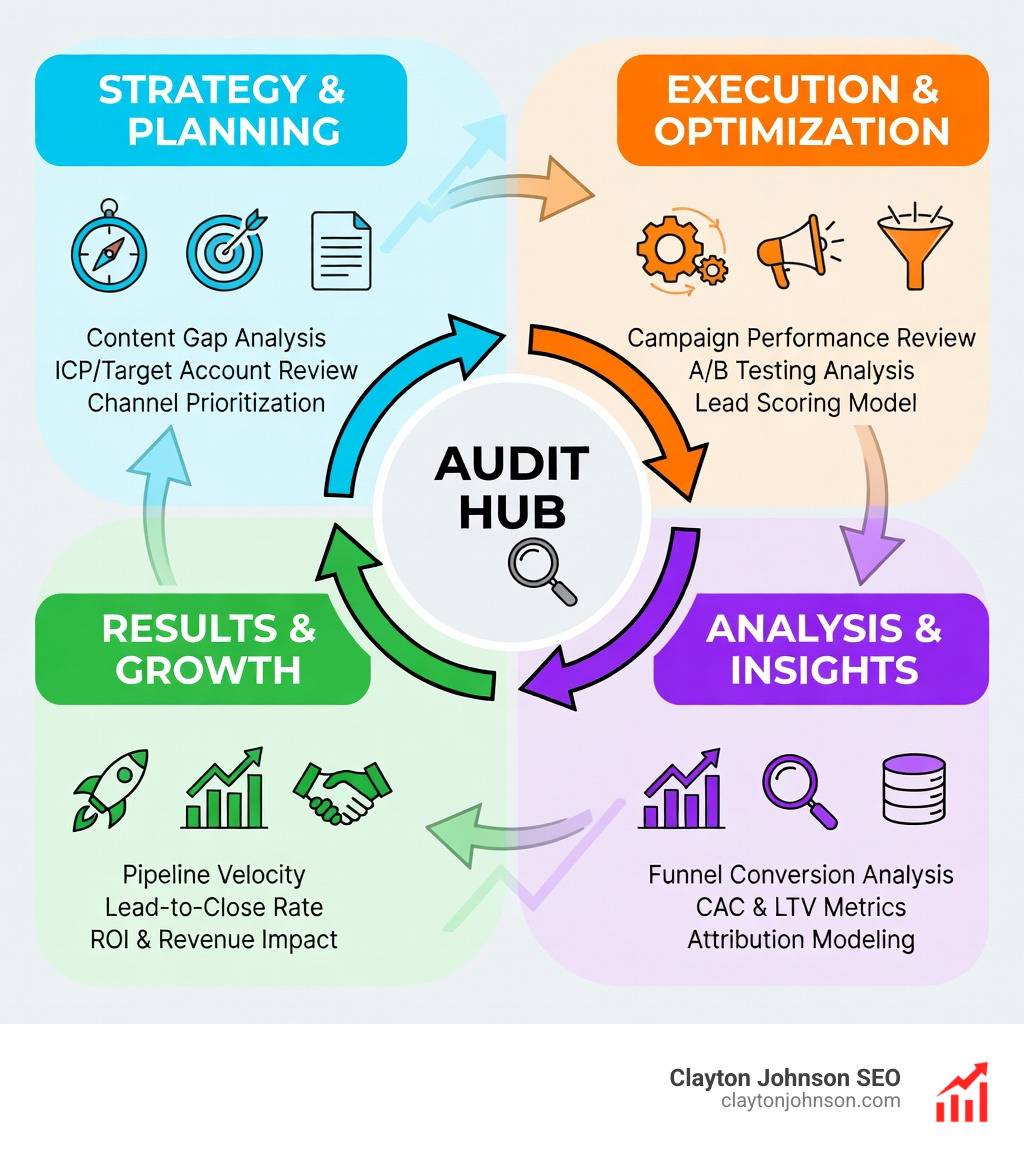

How Chaining Prompts Scales AI: A Production Framework

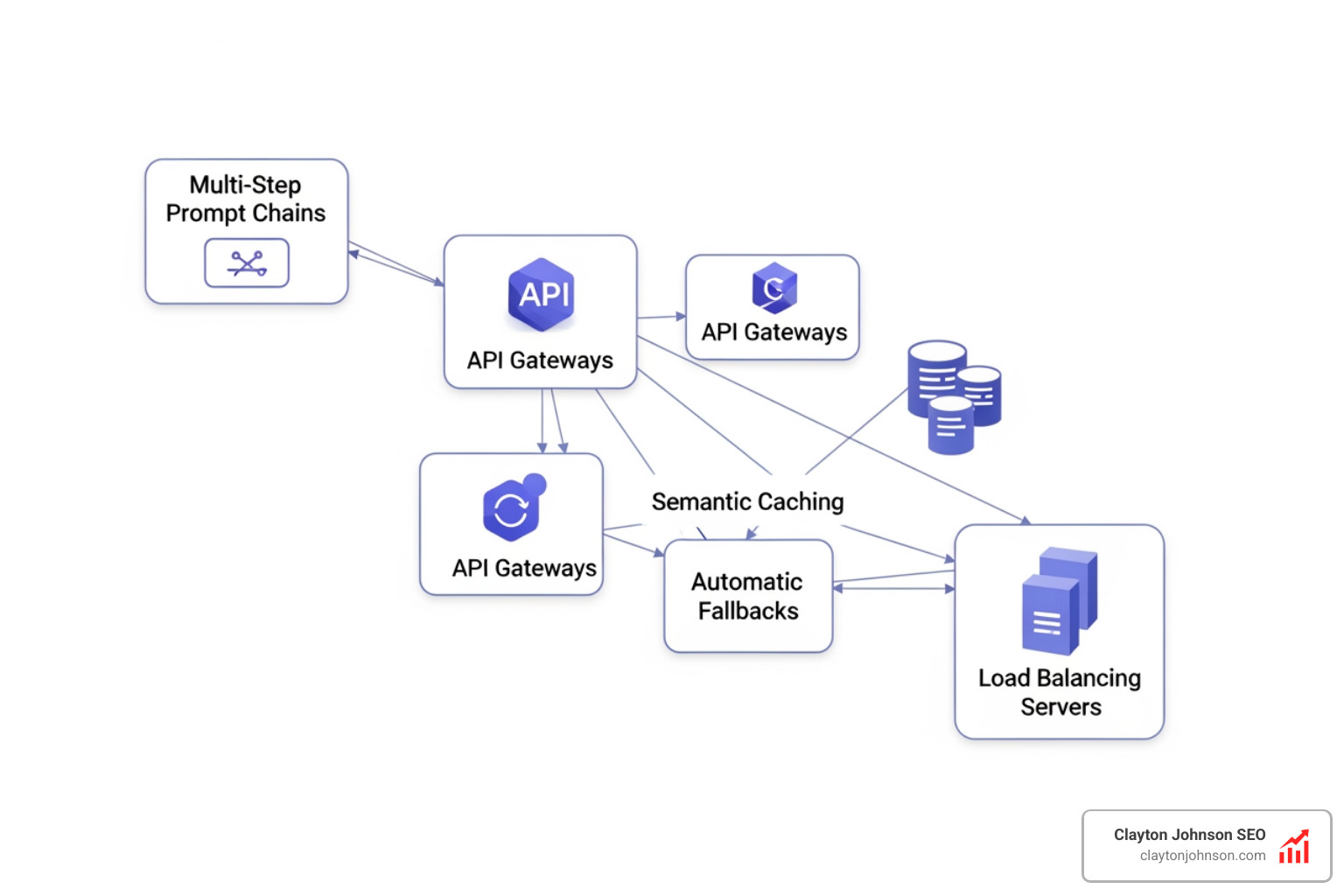

To move from a “cool experiment” to a production-grade system, you need a robust infrastructure. You can’t just string together five API calls and hope for the best. You need an architecture that handles errors, latency, and costs.

A professional setup often involves an AI gateway. This acts as a central hub for your chains, providing features like:

- Semantic caching: If the gateway has already answered the same or a semantically similar request, it serves the cached response, saving you money and time.

- Automatic fallbacks: If your primary model is down or slow, the system automatically switches to a backup provider to keep the chain moving.

- Load balancing: Distributing requests across models or providers so that no single endpoint hits its rate limit.
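To illustrate the caching and fallback ideas, here is a minimal in-process sketch. It is not any particular gateway product’s API: the provider functions are hypothetical, and the cache uses exact matching on a normalized prompt, whereas a real semantic cache would compare embeddings. Load balancing is left out for brevity.

```python
class ProviderError(Exception):
    pass


def primary(prompt: str) -> str:
    raise ProviderError("primary model timed out")  # simulate an outage


def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"


class Gateway:
    def __init__(self, providers):
        self.providers = providers  # tried in order: primary first, then fallbacks
        self.cache: dict[str, str] = {}
        self.calls = 0  # number of provider attempts, including failed ones

    def _key(self, prompt: str) -> str:
        # Exact match after normalizing case and whitespace (not true semantic matching).
        return " ".join(prompt.lower().split())

    def complete(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self.cache:
            return self.cache[key]
        last_error = None
        for provider in self.providers:
            try:
                self.calls += 1
                result = provider(prompt)
                self.cache[key] = result
                return result
            except ProviderError as err:
                last_error = err  # fall through to the next provider
        raise RuntimeError("all providers failed") from last_error


gw = Gateway([primary, backup])
first = gw.complete("Summarize step 1")
second = gw.complete("summarize   STEP 1")  # served from the cache
```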

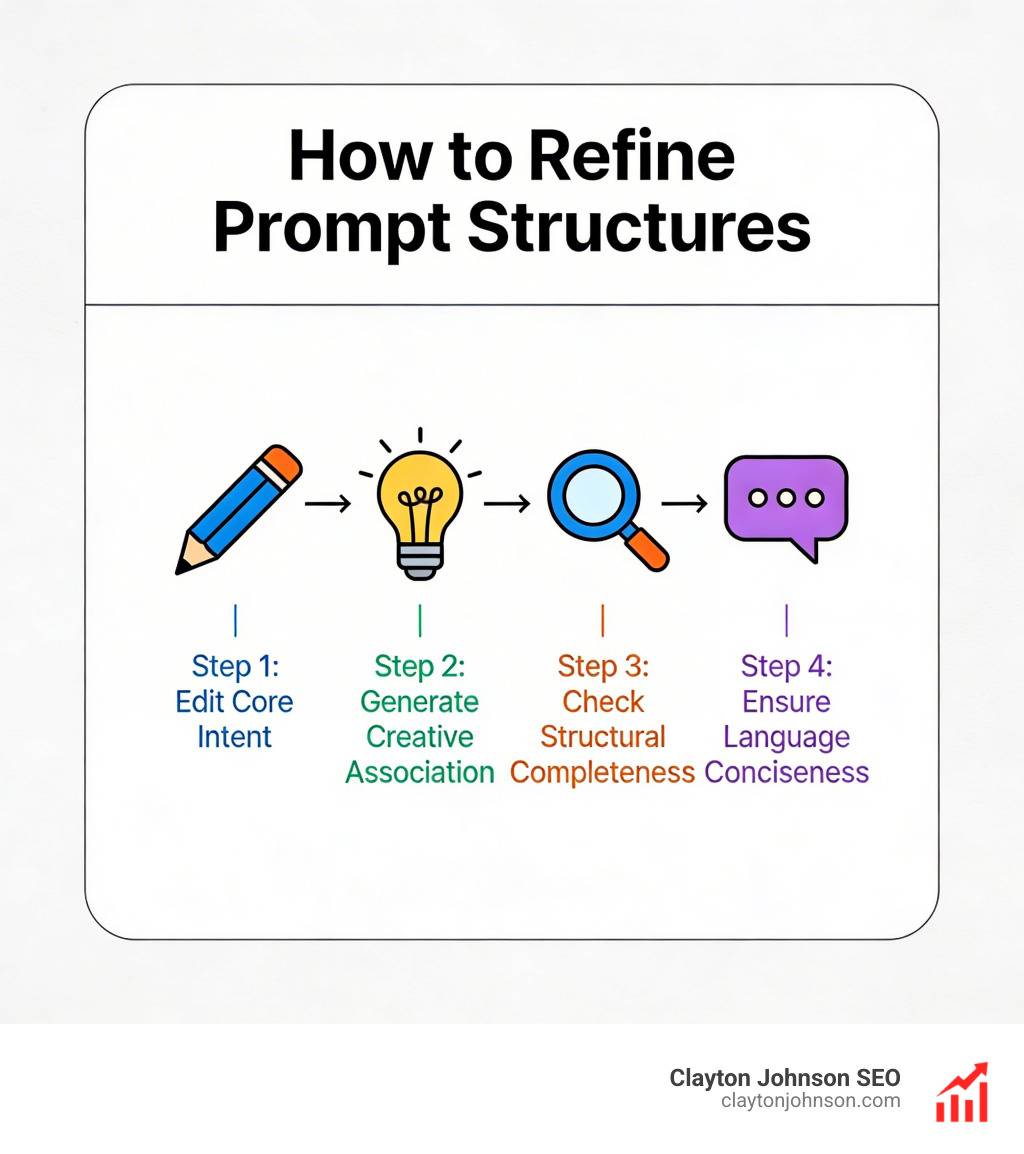

Designing robust data handoffs and XML tags

A challenge in prompt chaining is ensuring Step 2 understands Step 1. We recommend using structured outputs like JSON or XML tags.

In our guide, Stop Yelling at the Bot: A Guide to Better Claude Prompts, we discuss how wrapping each step’s output in XML tags gives the next prompt clearly labeled input to work with.
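A minimal sketch of an XML-tag handoff: Step 1’s prompt is assumed to ask for the result inside `<summary>` tags, and a small parser pulls out only that content before Step 2 runs. The tag name and the sample output are hypothetical.

```python
import re


def extract_tag(text: str, tag: str) -> str:
    """Return the content of the first <tag>...</tag> block, or raise if it is missing."""
    pattern = rf"<{re.escape(tag)}>(.*?)</{re.escape(tag)}>"
    match = re.search(pattern, text, re.DOTALL)
    if match is None:
        raise ValueError(f"step output is missing <{tag}>")
    return match.group(1).strip()


# Hypothetical Step 1 output, including chatty text around the tags.
step1_output = """Sure! Here is the summary you asked for.
<summary>
Customers praised onboarding but found pricing unclear.
</summary>
Let me know if you need anything else."""

summary = extract_tag(step1_output, "summary")
# Step 2 receives only the tagged content, re-wrapped so its prompt can refer to it by name.
step2_prompt = f"<summary>{summary}</summary>\nWrite three headline options based on the summary above."
```

The parser ignores any preamble around the tags, which is one practical reason tagged output hands off more reliably than free text.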

Measuring success with quality and operational metrics

You can’t manage what you don’t measure. When scaling AI workflows, we track four key categories of metrics:

- Quality Metrics: We use “LLM-as-a-judge” systems to evaluate the faithfulness and relevance of outputs. However, research such as “Benchmarking Generation and Evaluation Capabilities of Large Language Models for Instruction Controllable Summarization” warns that AI judges can sometimes misalign with human judgment in specialized fields.

- Structural Metrics: Are the outputs following the required JSON schema?

- Operational Metrics: What is the total latency of the chain? What is the token cost per successful run?

- Reliability Metrics: How often does the chain break? Datasets like InstruSum, an instruction-controllable summarization dataset, can help benchmark your system against known standards.
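A rough sketch of how the structural, operational, and reliability metrics above could be tracked per chain run. Quality scoring with an LLM judge is left out, and the field names and the cost definition (all tokens spent divided by successful runs) are assumptions for illustration.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChainMetrics:
    """Running totals for one chain; quality (LLM-as-a-judge) scores are not tracked here."""
    runs: int = 0
    broken_runs: int = 0          # reliability: the chain produced no output
    schema_valid: int = 0         # structural: output parsed as JSON with the required keys
    total_latency_s: float = 0.0  # operational
    total_tokens: int = 0         # operational

    def record(self, output: Optional[str], latency_s: float, tokens: int,
               required_keys: set[str]) -> None:
        self.runs += 1
        self.total_latency_s += latency_s
        self.total_tokens += tokens
        if output is None:
            self.broken_runs += 1
            return
        try:
            data = json.loads(output)
        except json.JSONDecodeError:
            return  # a structural failure, but not a broken run
        if isinstance(data, dict) and required_keys.issubset(data):
            self.schema_valid += 1

    def summary(self) -> dict:
        ok_runs = self.runs - self.broken_runs
        return {
            "break_rate": self.broken_runs / self.runs,
            "schema_pass_rate": self.schema_valid / self.runs,
            "avg_latency_s": self.total_latency_s / self.runs,
            # All tokens spent, including failed runs, divided by successful runs.
            "tokens_per_successful_run": self.total_tokens / ok_runs if ok_runs else float("inf"),
        }


metrics = ChainMetrics()
metrics.record('{"title": "A", "body": "B"}', 1.5, 900, {"title", "body"})
metrics.record('{"title": "A"}', 0.5, 700, {"title", "body"})  # missing "body"
metrics.record(None, 2.0, 400, {"title", "body"})               # chain broke
report = metrics.summary()
```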

Real-World Use Cases for Scalable Prompt Architectures

How chaining prompts scales AI is best seen in action. Here are three ways we implement this for our clients:

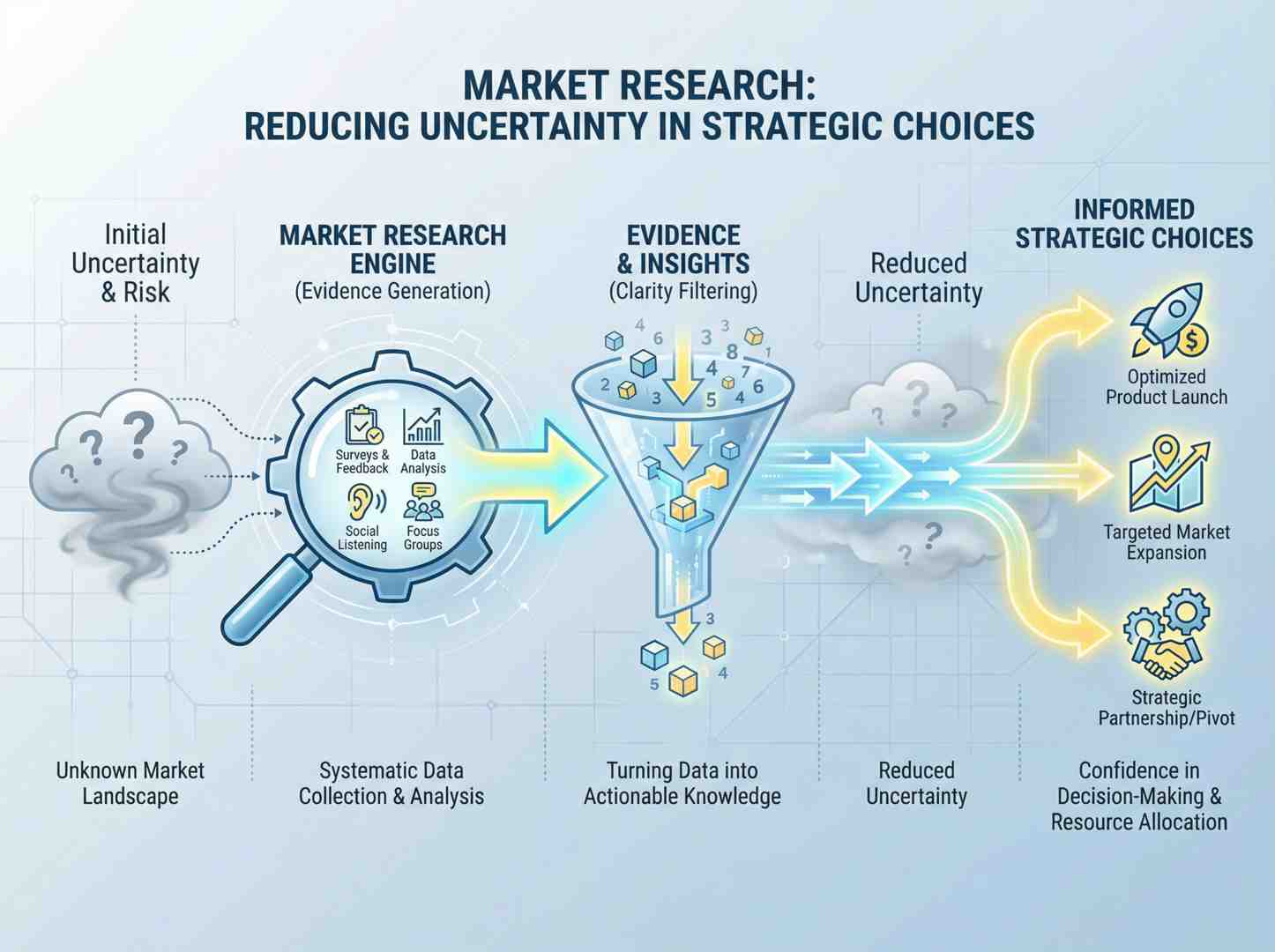

1. Advanced RAG (Retrieval-Augmented Generation)

In a standard RAG setup, the AI just looks at a document and answers a question. In a chained RAG setup, we:

- Step 1: Rewrite the user’s query for better search.

- Step 2: Retrieve relevant documents.

- Step 3: Filter out irrelevant “noise” from the documents.

- Step 4: Generate the answer based only on the filtered facts.

- Step 5: Fact-check the answer against the original source.

This multi-step process can drastically reduce hallucinations. For a deep dive, check out our Ultimate Claude Chain-of-Thought Tutorial.
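The five RAG steps can be sketched end to end. Everything here is a toy: `rewrite_query` and `generate` are hypothetical stand-ins for LLM calls, retrieval is naive keyword overlap over an in-memory `CORPUS` rather than a vector search, and the fact-check is a strict substring test.

```python
# Toy in-memory corpus standing in for a document store.
CORPUS = [
    "The Pro plan includes priority support.",
    "Our office dog is named Biscuit.",
    "Priority support responds within one business day.",
]


def words(text: str) -> set[str]:
    return set(text.lower().replace(".", "").replace("?", "").split())


def rewrite_query(question: str) -> str:
    # Step 1 (hypothetical LLM call): canned rewrite into search terms.
    return "priority support pro plan"


def retrieve(query: str, k: int = 3) -> list[str]:
    # Step 2: rank by keyword overlap; a real system would use vector search.
    return sorted(CORPUS, key=lambda doc: -len(words(query) & words(doc)))[:k]


def filter_noise(docs: list[str], query: str) -> list[str]:
    # Step 3 (could be an LLM relevance check): drop passages sharing no query terms.
    return [doc for doc in docs if words(query) & words(doc)]


def generate(question: str, facts: list[str]) -> str:
    # Step 4 (hypothetical LLM call): here the "answer" just restates the facts.
    return " ".join(facts)


def fact_check(answer: str, facts: list[str]) -> bool:
    # Step 5: every sentence in the answer must appear verbatim in some fact.
    sentences = [s.strip() + "." for s in answer.split(".") if s.strip()]
    return all(any(s in fact for fact in facts) for s in sentences)


def answer_question(question: str) -> str:
    query = rewrite_query(question)
    facts = filter_noise(retrieve(query), query)
    answer = generate(question, facts)
    if not fact_check(answer, facts):
        raise ValueError("answer contains claims not found in the retrieved facts")
    return answer


print(answer_question("Do Pro customers get faster help?"))
```

Each step is separately testable; for instance, you can check that `filter_noise` drops the off-topic passage without running generation at all.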

2. Content Creation Pipelines

Marketing teams use chains to move from a single idea to a full campaign.

- Step 1: Research the topic and extract 10 key statistics.

- Step 2: Draft a blog post outline based on those stats.

- Step 3: Write the blog post sections.

- Step 4: Review the post for SEO optimization and brand voice.

- Step 5: Generate social media snippets and email subject lines.
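A minimal sketch of Steps 2 through 5, assuming Step 1 has already produced the statistics. `call_llm` is a hypothetical stub keyed on a task label in the first line of each prompt. Step 3 fans out into one call per outline section, a common pattern when a single call would otherwise have to write the whole post.

```python
import json


def call_llm(prompt: str) -> str:
    """Hypothetical model call with canned outputs so the sketch runs offline."""
    task, _, payload = prompt.partition("\n")
    if task == "OUTLINE":
        return json.dumps(["Why single prompts break", "Designing handoffs"])
    if task == "SECTION":
        return f"## {payload}\n(section text)"
    if task == "REVIEW":
        return payload  # pretend the SEO and brand-voice review approved the draft as-is
    if task == "SOCIAL":
        return "\n".join(f"Snippet: {line[3:]}" for line in payload.splitlines()
                         if line.startswith("## "))
    raise ValueError(f"unknown task {task}")


def content_pipeline(stats: list[str]) -> dict:
    outline = json.loads(call_llm("OUTLINE\n" + "\n".join(stats)))       # Step 2
    sections = [call_llm("SECTION\n" + heading) for heading in outline]  # Step 3: one call per section
    post = call_llm("REVIEW\n" + "\n\n".join(sections))                  # Step 4
    social = call_llm("SOCIAL\n" + post)                                 # Step 5
    return {"outline": outline, "post": post, "social": social.splitlines()}


result = content_pipeline(["stat A", "stat B"])  # Step 1 (research) assumed done upstream
```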

3. Data Processing and Analysis

Analysts use prompt chaining to turn messy, unstructured data into actionable insights. A chain might extract data from 100 customer reviews, categorize them by sentiment, identify the top three complaints, and then draft a response strategy for the product team. Stop wasting time on manual cleanup: stop yelling at your AI and start prompt engineering by building these automated pipelines.
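A sketch of that review pipeline, assuming a hypothetical `classify` step that would normally be an LLM call returning structured fields; simple keyword rules stand in for it here so the example runs. The aggregation step uses ordinary code rather than another prompt.

```python
from collections import Counter

COMPLAINT_WORDS = ("slow", "broken", "expensive")


def classify(review: str) -> dict:
    """Hypothetical per-review LLM call; keyword rules stand in for it here."""
    text = review.lower()
    complaints = [w for w in COMPLAINT_WORDS if w in text]
    sentiment = "negative" if complaints else "positive"
    return {"sentiment": sentiment, "complaints": complaints}


def top_complaints(reviews: list[str], n: int = 3) -> list[tuple[str, int]]:
    records = [classify(r) for r in reviews]                          # extract + categorize
    negative = [r for r in records if r["sentiment"] == "negative"]
    counts = Counter(c for r in negative for c in r["complaints"])  # aggregate in plain code
    return counts.most_common(n)


reviews = [
    "App is slow on login",
    "Love the design",
    "Slow sync and broken export",
    "Too expensive for what it does",
    "Export is broken again",
    "Slow, slow, slow",
]

# Final step: the prompt that would ask a model to draft the response strategy.
strategy_prompt = "Draft a response strategy for these complaints: " + ", ".join(
    f"{complaint} ({count})" for complaint, count in top_complaints(reviews)
)
```

Counting in code rather than asking a model to count keeps the “top three” step deterministic; only the final strategy draft needs the model again.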

Frequently Asked Questions about Prompt Chaining

When should teams use prompt chaining over single prompts?

You should switch to chaining when a single prompt results in “instruction neglect” (the AI ignores part of your request) or when you need high reliability. If your task has three or more distinct steps, such as research, drafting, and formatting, it’s time to chain.

What are the main trade-offs regarding latency and cost?

The primary trade-off is that chaining is slower and more expensive. Every “link” in the chain is a new API call, which adds latency and consumes tokens. However, you can mitigate this by using faster, cheaper models (like GPT-4o-mini or Claude Haiku) for the simple steps and saving the “heavyweight” models for the complex reasoning stages.
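The trade-off is easy to estimate with back-of-the-envelope arithmetic. The prices, latencies, and token counts below are hypothetical placeholders, not real figures for GPT-4o-mini, Claude Haiku, or any other model; substitute your provider’s numbers.

```python
# Hypothetical per-1K-token prices and per-call latencies for two model tiers.
MODELS = {
    "small": {"usd_per_1k_tokens": 0.001, "latency_s": 0.5},
    "large": {"usd_per_1k_tokens": 0.010, "latency_s": 2.0},
}

# Each step: (name, estimated tokens, needs heavyweight reasoning?)
STEPS = [
    ("extract", 2000, False),
    ("classify", 1000, False),
    ("analyze", 3000, True),
    ("format", 1000, False),
]


def estimate(steps, route) -> tuple[float, float]:
    """Return (total cost in USD, total sequential latency in seconds) for one chain run."""
    cost = latency = 0.0
    for _name, tokens, complex_step in steps:
        model = MODELS[route(complex_step)]
        cost += tokens / 1000 * model["usd_per_1k_tokens"]
        latency += model["latency_s"]  # steps run one after another, so latencies add
    return cost, latency


all_large = estimate(STEPS, lambda complex_step: "large")
routed = estimate(STEPS, lambda complex_step: "large" if complex_step else "small")
```

With these made-up numbers, routing the three simple steps to the small model cuts the estimated cost from about $0.07 to about $0.034 per run and the sequential latency from 8 to 3.5 seconds.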

How do platforms like Maxim AI and Voiceflow support chaining?

Platforms like Maxim AI provide a “data engine” for experimentation, allowing you to simulate and test chains under different conditions. Voiceflow and LangGraph enable “cyclical” chains, where the AI can loop back and refine its work until it meets a certain quality threshold. These tools make it much easier to visualize and debug the flow of information.
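The loop-until-good-enough idea can be sketched without any platform. This is not Voiceflow’s or LangGraph’s API: `draft`, `score`, and `refine` are hypothetical stand-ins for a generation call, an LLM-as-a-judge call, and a revision call, with toy implementations so the loop runs. The `max_loops` cap keeps a hard-to-satisfy judge from running up unbounded cost.

```python
def draft(topic: str) -> str:
    """Hypothetical generation call."""
    return f"A short note on {topic}."


def score(text: str) -> float:
    """Hypothetical judge call; this toy version simply rewards longer text."""
    return min(1.0, len(text) / 80)


def refine(text: str) -> str:
    """Hypothetical revision call; this toy version appends a detail."""
    return text + " More detail."


def refine_until(topic: str, threshold: float = 0.9, max_loops: int = 5) -> tuple[str, int]:
    text = draft(topic)
    loops = 0
    # Loop back to refinement until the judge is satisfied or the budget runs out.
    while score(text) < threshold and loops < max_loops:
        text = refine(text)
        loops += 1
    return text, loops


text, loops = refine_until("prompt chaining")
```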

Conclusion

Scaling AI isn’t about finding a “magic” prompt that solves everything. It’s about building structured growth architecture. Just as we build SEO systems with a clear taxonomy and hierarchy, we build AI workflows with modular, chained prompts that provide clarity, leverage, and compounding growth.

At Demandflow, we believe that the companies that win with AI won’t just be the ones using the biggest models—they’ll be the ones with the best execution systems. By mastering how chaining prompts scales AI, you move from unpredictable “magic” to a reliable, industrial-grade marketing machine.

Ready to build your own AI-enhanced execution system? Explore our artificial intelligence and prompt engineering resources and start turning your AI experiments into a scalable chain reaction.