The Law of the Land for Artificial Intelligence

Navigating the Future of Artificial Intelligence: Regulatory Guidelines

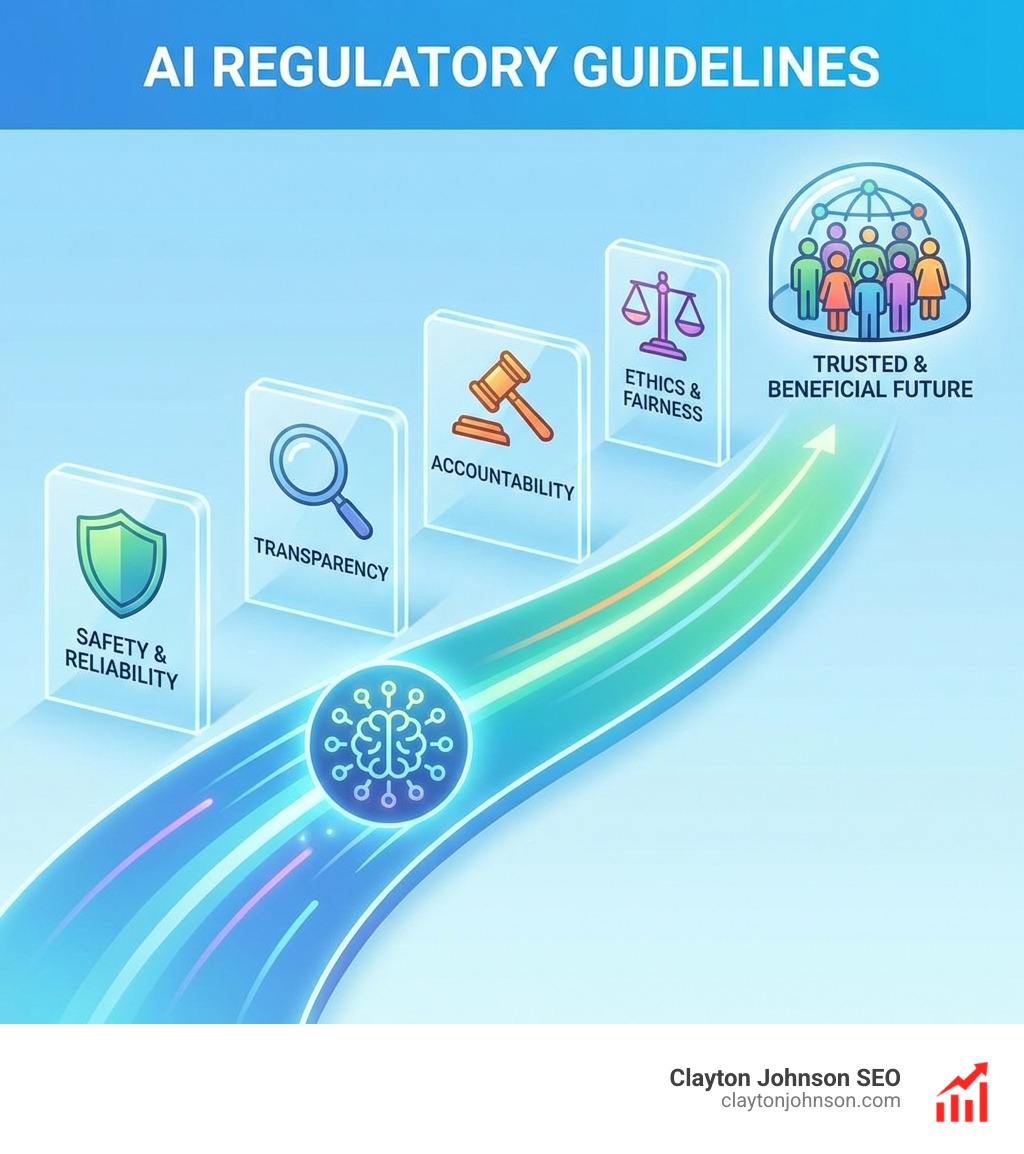

What are AI regulatory guidelines? They are a comprehensive set of rules, principles, and frameworks designed to govern the development, deployment, and use of artificial intelligence technologies. These guidelines aim to ensure that AI systems are developed responsibly, ethically, and safely, while also fostering innovation.

- Ethical Principles: Foundations rooted in human rights, fairness, transparency, and accountability.

- Risk-Based Approaches: Categorizing AI systems by their potential for harm to apply appropriate oversight.

- Legal Frameworks: Binding laws enacted by governments and international bodies to enforce compliance.

- Voluntary Standards: Industry-led best practices and codes of conduct that promote responsible AI.

The rapid evolution of AI, particularly with advanced capabilities like Large Language Models, has amplified the need for structured oversight. This isn’t just about preventing harm; it’s about building public trust and creating a stable environment for innovation.

I’m Clayton Johnson, an SEO strategist and growth operator, and my work consistently involves navigating the complex landscape of AI regulatory guidelines to help businesses build resilient digital infrastructure. Understanding these frameworks is crucial for engineering scalable traffic systems and developing structured strategic frameworks that drive measurable business outcomes.

Defining the Framework: What are AI regulatory guidelines?

When we ask, “What are AI regulatory guidelines?” we are looking at the guardrails for the most transformative technology of our era. These aren’t just technical suggestions; they are the evolving “Law of the Land” that dictates how machines can interact with human society. At their heart, these guidelines shift the focus from “can we build it?” to “should we build it, and how do we keep it safe?”

Most modern frameworks rely on a risk-based approach. This means that a spam filter in your inbox doesn’t face the same scrutiny as an AI system used to diagnose cancer or drive a semi-truck. The goal is to match the level of oversight to the potential for real-world harm.

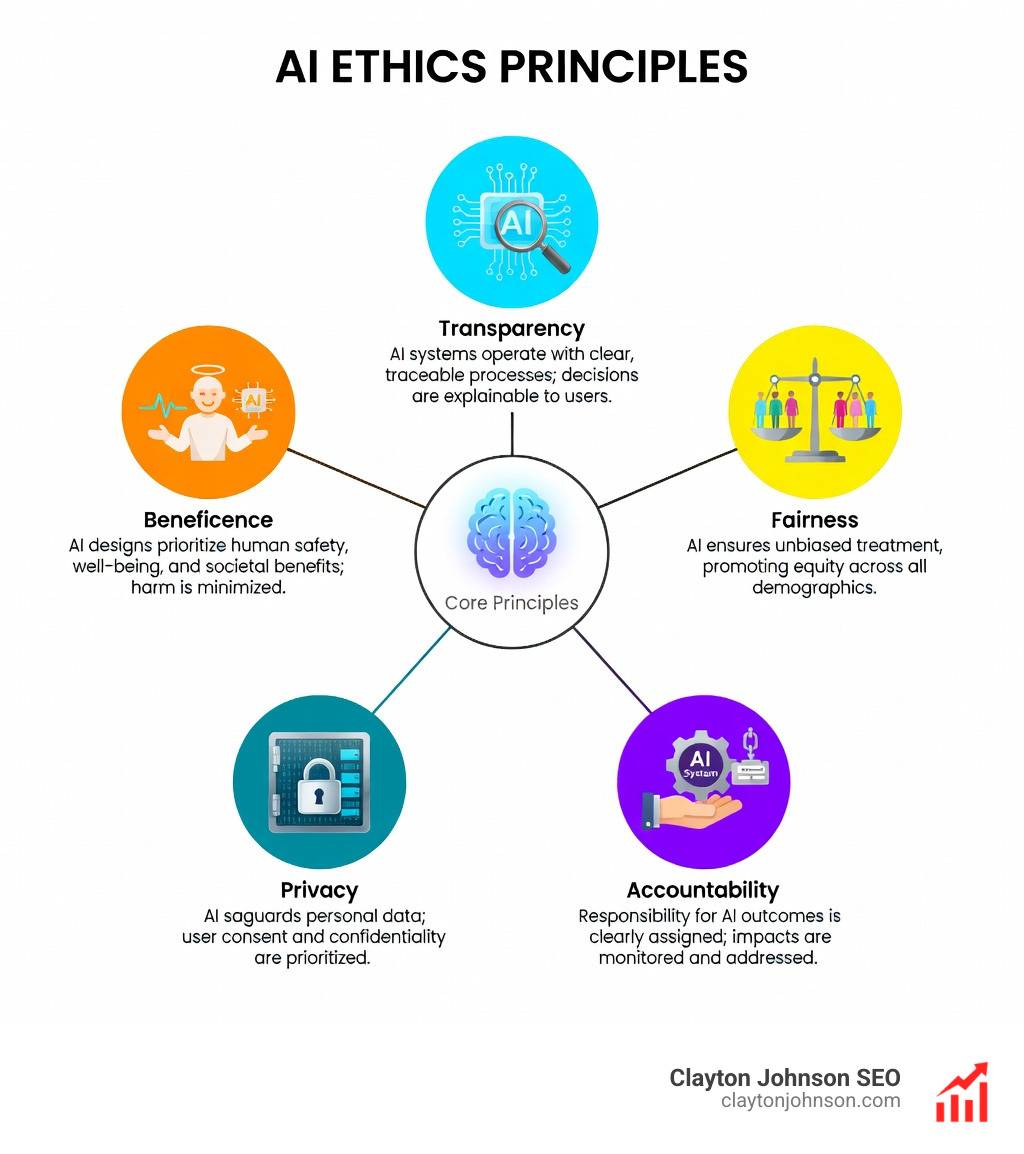

Key pillars of these guidelines include:

- Transparency: Knowing when you are interacting with an AI and understanding how it was trained.

- Fairness: Ensuring the system doesn’t discriminate based on race, gender, or disability.

- Explainability: The ability for humans to understand why an AI made a specific decision.

To achieve this, the Ethical Guidelines for Trustworthy AI emphasize that AI should be lawful, ethical, and robust.

Soft Law vs. Hard Law: A Comparison

| Feature | Soft Law (Guidelines/Standards) | Hard Law (Regulations/Acts) |
|---|---|---|
| Enforcement | Voluntary; based on reputation | Mandatory; legally binding |
| Flexibility | High; easy to update as tech evolves | Lower; requires legislative process |
| Penalties | None (typically market-driven) | Heavy fines and legal sanctions |
| Examples | NIST AI RMF, OECD Principles | EU AI Act, State Privacy Laws |

Core Principles of Trustworthy Systems

For any AI system to be considered “trustworthy” under modern guidelines, it generally must respect several core principles:

- Human Agency and Oversight: AI should support human autonomy, not replace it. Humans should always have the “kill switch.”

- Technical Robustness and Safety: Systems must be resilient against hacking and perform reliably under stress.

- Privacy and Data Governance: AI shouldn’t be a vacuum for personal data without consent.

- Societal and Environmental Well-being: We must consider the carbon footprint of training massive models and the impact on the labor market.

The Necessity of Formal Oversight

Why can’t we just let the tech companies police themselves? While many companies have internal ethics boards, formal oversight is necessary to prevent a “race to the bottom” where safety is sacrificed for speed.

Scientific research on global AI governance suggests that without central standards, we risk systemic biases that could exclude entire populations from financial or medical services. Formal guidelines provide the public trust necessary for AI to be integrated into critical infrastructure like power grids and transportation.

Global Perspectives on AI Governance

The world is not a monolith when it comes to AI. Different regions have adopted philosophies that reflect their cultural and political values. Research on AI-deploying organizations shows that companies operating internationally face a complex “patchwork” of rules.

- The European Union: Takes a “rights-first” approach, focusing on protecting the individual from state or corporate overreach.

- China: Focuses on “state-led” development, with specific rules on synthetic content (deepfakes) and algorithm filing to ensure social stability.

- United States: Historically a “market-led” environment, though this is shifting toward more structured oversight through Executive Orders and state-level privacy acts.

- Canada: Its Digital Charter aims to balance innovation with a strong emphasis on data privacy and individual rights.

Understanding the EU AI Act and its Risk Tiers

The EU AI Act is currently the most comprehensive “hard law” in existence. It categorizes AI into four distinct risk tiers:

- Unacceptable Risk: These are banned. Think social scoring by governments or real-time facial recognition in public spaces for general surveillance.

- High Risk: Systems used in education, employment (resume screening), or critical infrastructure. These must pass rigorous testing and documentation phases.

- Transparency Risk: Systems like chatbots or deepfakes. Users must be informed they are interacting with AI.

- Minimal Risk: Most AI applications, like video games or spam filters, fall here and face no new rules.
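
The four tiers above can be sketched as a simple lookup that an internal triage tool might use. This is only an illustration: the `EXAMPLE_TIERS` mapping, the `triage` function, and the use-case labels are hypothetical names built from the examples in the list, and real classification under the Act requires legal analysis, not a dictionary.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    TRANSPARENCY = "transparency"
    MINIMAL = "minimal"


# Hypothetical mapping, taken only from the examples in the list above.
EXAMPLE_TIERS = {
    "government social scoring": RiskTier.UNACCEPTABLE,
    "resume screening": RiskTier.HIGH,
    "critical infrastructure control": RiskTier.HIGH,
    "customer chatbot": RiskTier.TRANSPARENCY,
    "deepfake generator": RiskTier.TRANSPARENCY,
    "spam filter": RiskTier.MINIMAL,
    "video game ai": RiskTier.MINIMAL,
}

# One-line summary of what each tier implies, paraphrasing the list above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: "banned; do not deploy",
    RiskTier.HIGH: "rigorous testing and documentation before release",
    RiskTier.TRANSPARENCY: "tell users they are interacting with AI",
    RiskTier.MINIMAL: "no new rules",
}


def triage(use_case: str) -> str:
    """Return the tier and its headline obligation for a known example use case."""
    tier = EXAMPLE_TIERS.get(use_case.strip().lower())
    if tier is None:
        # Anything not in the example table is escalated rather than guessed.
        return "unclassified: needs legal review"
    return f"{tier.value}: {OBLIGATIONS[tier]}"
```

Defaulting unknown use cases to "needs legal review" rather than to the minimal tier is a deliberate choice here: a missing entry should never read as a clean bill of health.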

Violating these rules isn’t just a slap on the wrist; for the most serious violations, such as deploying a banned system, financial penalties can reach up to 7% of a company’s global annual turnover.
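
To make the scale concrete, here is a minimal sketch of the penalty ceiling for the most serious violations. It assumes the Act's "fixed amount or percentage of turnover, whichever is higher" structure with a fixed amount of EUR 35 million; the function name `max_fine_eur` and its parameters are hypothetical, and the figures should be checked against the current legal text before relying on them.

```python
def max_fine_eur(global_turnover_eur: int,
                 percent: int = 7,
                 fixed_amount_eur: int = 35_000_000) -> int:
    """Upper bound on a fine: the higher of a fixed amount or a share of turnover.

    Integer arithmetic is used so the result is exact for whole-euro inputs.
    """
    turnover_based = global_turnover_eur * percent // 100
    return max(fixed_amount_eur, turnover_based)


# A company with EUR 2 billion in turnover is bounded by the percentage;
# a company with EUR 100 million in turnover is bounded by the fixed amount.
large_company_cap = max_fine_eur(2_000_000_000)
small_company_cap = max_fine_eur(100_000_000)
```

This is a ceiling, not a prediction: regulators set the actual amount case by case, and lower caps apply to less serious violations.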

The US Landscape: Federal and State AI regulatory guidelines

In the US, the approach is more fragmented. Since there is no single federal AI law, we are seeing a “patchwork” of state-level actions.

- Federal Actions: Federal agencies now report nearly 2,000 AI use cases. Guidelines from the White House emphasize safety testing and “red-teaming” (trying to break the system) before release.

- The State Patchwork: States like Minnesota are focusing on specific harms. Collectively, state legislatures have introduced over 1,000 bills to address issues such as AI in elections and consumer privacy.

- Consumer Protection: Agencies like the FTC are using existing laws to crack down on AI-driven discrimination in hiring or lending.

Specialized Oversight for Large Language Models (LLMs)

Large Language Models (LLMs) like those powering ChatGPT or Gemini present unique challenges. Because they are “general purpose,” they can be used for everything from writing poetry to creating phishing emails. This versatility makes them harder to regulate than a narrow AI used for one specific task.

The UNESCO recommendations on AI ethics highlight the need for these massive models to be transparent about the data they use, especially concerning copyrighted material.

Compliance Standards for LLM AI regulatory guidelines

To keep LLMs in check, emerging guidelines focus on:

- Data Provenance: Proving where training data came from and respecting “opt-out” requests from creators.

- Model Card Reporting: A “nutrition label” for AI that lists a model’s strengths, weaknesses, and known biases.

- Watermarking: Ensuring that AI-generated images or text can be identified by other machines to prevent the spread of misinformation.

- Compute Thresholds: The EU and US are looking at “compute thresholds,” measured in the total number of floating-point operations (FLOPs) used to train a model. If a model exceeds the threshold, it is presumed to pose a “systemic risk” and requires extra security audits.
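
A compute threshold check can be sketched in a few lines. Two assumptions are baked in and should be treated as such: the 10^25 FLOPs figure is the EU AI Act's presumption threshold for general-purpose models as we understand it, and the "6 × parameters × training tokens" formula is a widely used rule of thumb for dense transformer training compute, not a legal measurement method. The function names are hypothetical.

```python
# Assumed EU AI Act presumption threshold (total training FLOPs, not FLOPs per second).
EU_SYSTEMIC_RISK_FLOPS = 1e25


def estimate_training_flops(n_parameters: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate: roughly 6 FLOPs per parameter per training token."""
    return 6.0 * n_parameters * n_tokens


def presumed_systemic_risk(n_parameters: float,
                           n_tokens: float,
                           threshold: float = EU_SYSTEMIC_RISK_FLOPS) -> bool:
    """True if the estimated training compute exceeds the threshold."""
    return estimate_training_flops(n_parameters, n_tokens) > threshold


# Illustrative (made-up) model sizes:
mid_sized = presumed_systemic_risk(n_parameters=70e9, n_tokens=2e12)    # about 8.4e23 FLOPs
frontier = presumed_systemic_risk(n_parameters=1e12, n_tokens=1e13)     # about 6e25 FLOPs
```

Under these assumptions the 70-billion-parameter example falls below the threshold while the trillion-parameter example exceeds it. A real assessment would use the developer's measured compute rather than an estimate.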

Open Source vs. Closed Source Governance

One of the biggest debates in AI regulation is whether AI code should be open or closed.

- Open Source Proponents: Argue that transparency allows the global community to find and fix security flaws. It prevents “corporate control” by a few tech giants.

- Closed Source Proponents: Argue that keeping the code secret prevents malicious actors from “un-censoring” the AI to create bioweapons or malware.

Current guidelines often provide exemptions for open-source models, provided they don’t meet the “systemic risk” threshold.

The Strategic Balance: Innovation vs. Regulation

The central tension in AI policy is the “innovation vs. regulation” debate. If the rules are too strict, startups may flee to more permissive jurisdictions. If they are too loose, we risk a catastrophic safety failure. Scientific research on AI and society suggests that well-designed regulation actually helps innovation by providing a clear set of rules that reduces legal uncertainty.

Arguments for Robust Oversight

- Existential and Safety Risks: Ensuring that highly autonomous systems don’t act in ways that harm human life.

- Bias Prevention: Preventing AI from reinforcing historical prejudices in hiring or policing.

- National Security: Protecting critical infrastructure from AI-enhanced cyberattacks.

- Public Trust: People won’t use AI if they don’t believe it’s safe and fair.

Challenges to Implementation

- The Pacing Problem: Technology moves at the speed of light; the legislative process moves at the speed of… well, government.

- Regulatory Capture: The fear that big tech companies will write the rules to favor themselves and block smaller competitors.

- Technical Complexity: Most lawmakers aren’t computer scientists, making it difficult to write accurate technical standards.

Best Practices for Organizational Compliance

For organizations looking to stay ahead of the curve, compliance shouldn’t be an afterthought. Following the latest research on AI procurement, we recommend a proactive approach.

Building a Compliance Roadmap

- Inventory Your AI: You can’t regulate what you don’t track. List every AI tool your company uses, from chatbots to predictive analytics.

- Conduct Impact Assessments: For any “high-impact” system, document how it makes decisions and what data it uses.

- Establish Human Oversight: Ensure there is a “human in the loop” for any decision that affects a person’s life or livelihood.

- Continuous Monitoring: AI “drifts” over time. Regularly test your systems for new biases or security vulnerabilities.
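
The four roadmap steps above can be sketched as a small inventory record plus a gap check. Everything here is illustrative: the `AISystem` fields, the `compliance_gaps` function, and the 90-day review interval are hypothetical choices, not requirements drawn from any specific regulation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AISystem:
    """One row in the AI inventory (step 1)."""
    name: str
    purpose: str
    high_impact: bool
    impact_assessment_done: bool = False   # step 2
    human_in_the_loop: bool = False        # step 3
    last_reviewed: Optional[date] = None   # step 4


def compliance_gaps(system: AISystem,
                    today: date,
                    review_interval_days: int = 90) -> List[str]:
    """List the roadmap steps this system has not yet satisfied."""
    gaps = []
    if system.high_impact and not system.impact_assessment_done:
        gaps.append("missing impact assessment")
    if system.high_impact and not system.human_in_the_loop:
        gaps.append("no human in the loop")
    # Every system, high impact or not, needs a periodic monitoring review.
    overdue = (system.last_reviewed is None
               or today - system.last_reviewed > timedelta(days=review_interval_days))
    if overdue:
        gaps.append("monitoring review overdue")
    return gaps


inventory = [
    AISystem("support chatbot", "customer support", high_impact=False,
             last_reviewed=date(2025, 1, 1)),
    AISystem("resume screener", "hiring", high_impact=True),
]
report = {s.name: compliance_gaps(s, today=date(2025, 2, 1)) for s in inventory}
```

Even a spreadsheet with these columns covers the same ground; the point is that each system has a named owner-visible record and a review date, so drift and missing assessments surface on a schedule rather than after an incident.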

Future Developments in AI regulatory guidelines

The next phase of AI governance will likely focus on international harmonization. We are already seeing the G7 and the UN work toward a “Global Digital Compact” to ensure that AI rules are similar across borders. This reduces the burden on companies and prevents a “race to the bottom” in safety standards.

Frequently Asked Questions about AI Regulation

How do AI guidelines affect small businesses?

Most guidelines, including the EU AI Act, have “proportional” rules for SMEs and startups. This means smaller companies face lower fines and have access to “regulatory sandboxes”—safe environments where they can test new AI tools under the guidance of regulators without fear of immediate penalties.

What happens if a company violates the EU AI Act?

The consequences are severe. Companies can be fined millions of euros or a percentage of their total global turnover. Beyond the money, they may be forced to withdraw their AI product from the market entirely, which can be devastating for a tech firm.

Is there a global standard for AI safety?

Not yet, but we are close. The OECD AI Principles serve as the most widely accepted “soft law” global benchmark, endorsed by over 40 countries. Organizations like the G7 are also working on a voluntary “Code of Conduct” for developers of the most powerful AI systems.

Conclusion

At Clayton Johnson and Demandflow.ai, we believe that clarity and structure are the foundations of leverage. Just as a business needs a structured growth architecture to scale, the AI industry needs structured regulatory guidelines to evolve safely.

Most companies don’t lack the tactics to use AI; they lack the structured growth architecture to do it responsibly. By aligning your SEO and AI execution systems with these emerging global standards, you aren’t just staying compliant—you are building an authority-building ecosystem that is resilient, ethical, and prepared for compounding growth.

The thesis is simple: Clarity → Structure → Leverage → Compounding Growth. Understanding the “Law of the Land” for AI is the first step toward achieving that clarity.