Building a Responsible AI Framework for Your Organization

Why AI Ethics Guidelines Are the Foundation of Every Responsible AI Strategy

How to develop AI ethics guidelines is one of the most important questions any organization building or deploying AI needs to answer — and the stakes are real.

Here is a quick overview of how to get started:

- Define your core values — anchor everything in human rights, fairness, and transparency

- Assess organizational readiness — evaluate your technical capacity, culture, and gaps

- Identify ethical risks — map potential harms across your AI lifecycle

- Draft actionable policies — translate principles into concrete rules and procedures

- Establish governance structures — set up ethics committees, oversight roles, and accountability chains

- Embed ethics into every stage — from data collection to deployment and monitoring

- Review and iterate — continuously audit systems and update guidelines as AI evolves

The risks of skipping this process are not abstract. A healthcare algorithm was investigated for allegedly prioritizing less sick white patients over sicker Black patients. A financial algorithm faced scrutiny for giving men higher credit limits than women. A social media platform exposed the personal data of over 50 million users. These are not edge cases — they are predictable outcomes when AI scales without ethical guardrails.

As UNESCO put it when releasing the first-ever global standard on AI ethics, getting AI governance right is “one of the most consequential challenges of our time.” That standard now applies to all 194 UNESCO member states — a signal of just how seriously the world is taking this issue.

The good news: ethical AI is not reserved for large enterprises with dedicated compliance teams. There is a clear, structured path any organization can follow.

I’m Clayton Johnson, an SEO strategist and growth systems architect who works at the intersection of AI-assisted workflows, structured content strategy, and scalable marketing infrastructure — and understanding how to develop AI ethics guidelines has become central to building AI systems that organizations can actually trust and defend. In the sections below, I’ll walk you through a practical framework you can apply regardless of your company’s size or technical depth.


Why Your Organization Needs to Prioritize AI Governance

When we talk about AI, we often focus on the “magic”—the ability to generate content, predict market trends, or automate complex workflows. But as we scale these solutions, we are also scaling our risks. Without a structured growth architecture, AI can quickly become a liability rather than an asset.

Navigating Reputational and Legal Risks

The headlines are full of cautionary tales. For instance, Los Angeles sued a major tech firm for allegedly misappropriating data collected through a weather app. When a bot “goes rogue,” it isn’t just a technical glitch; it’s a brand crisis. Public trust is fragile, and once lost, it is incredibly difficult to rebuild.

The Problem of Algorithmic Bias

One of the most insidious risks is bias. A well-known ProPublica investigation found that a recidivism algorithm used in courts was nearly twice as likely to incorrectly flag Black defendants as high-risk compared to white defendants. This type of algorithmic bias can creep into hiring tools, credit scoring, and even medical diagnostics if we aren’t careful.

Ensuring Regulatory Compliance

Governments are moving fast. From the EU AI Act to regional standards in Singapore and Canada, the message is clear: transparency and accountability are no longer optional. At Demandflow, we see AI ethics not as a hurdle, but as the foundation of a high-performance SEO strategy and a sustainable growth operating system.

How to Develop AI Ethics Guidelines: A Step-by-Step Framework

Developing these guidelines doesn’t happen in a vacuum. It requires a multidisciplinary approach that brings together policy, technology, and social advocacy.

1. Multi-Stakeholder Engagement

We shouldn’t leave ethics solely to the developers. A diverse team—including legal experts, data scientists, marketers, and even end-users—ensures that blind spots are identified early. If your entire development team shares the same background, you’re likely to bake those shared biases right into the code.

2. Risk Assessment and Policy Drafting

Before writing a single line of code, we must ask: What is the goal? Is AI the best tool for this? What happens if it fails? We recommend using the UNESCO Recommendation on the Ethics of AI as a North Star. It emphasizes that human rights and dignity must be the cornerstone of every framework.

3. Establishing Governance Structures

Who is held accountable when things go wrong? You need clear lines of responsibility. This might mean appointing a Head of AI Ethics or forming an internal review board. Without “teeth,” an ethics framework is just a piece of paper.

Assessing Organizational Readiness for AI Ethics Guidelines

Before you jump into implementation, you need to know where you stand. UNESCO’s Readiness Assessment Methodology (RAM) was designed to help member states gauge their preparedness, and its questions about technical capacity and cultural alignment adapt well to a single organization.

- Gap Analysis: Do you have the data literacy required to spot bias?

- Resource Allocation: Are you willing to invest in the auditing tools and personnel needed for oversight?

- Cultural Alignment: Is your leadership committed to “Ethics by Design,” or are they just looking for a “Responsible AI” badge to stick on the website?

Drafting Actionable Policies for AI Ethics Guidelines

High-level principles are great, but your team needs rules they can follow.

- Proportionality: Ensure the AI’s use is justified by the benefits and that the risks are minimized.

- Safety Protocols: What are the “emergency stops”? For voice-controlled systems, this could be literal vocal commands to halt physical movement.

- Documentation: Every model should have a “paper trail.” This includes documenting data provenance, training methods, and intended use cases.
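One way to make that paper trail concrete is a small structured record kept alongside each model. The sketch below is only an illustration: the `ModelRecord` class, its field names, and the example values are hypothetical, not a standard schema.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import List


@dataclass
class ModelRecord:
    """Hypothetical documentation record for one model (illustrative fields)."""
    name: str
    version: str
    intended_use: str
    data_sources: List[str]
    training_method: str
    known_limitations: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Return the names of required fields that were left empty."""
        required = ["name", "version", "intended_use",
                    "data_sources", "training_method"]
        return [f for f in required if not getattr(self, f)]

    def to_json(self) -> str:
        """Serialize the record so it can be stored next to the model."""
        return json.dumps(asdict(self), indent=2)


# Example values are made up for illustration.
record = ModelRecord(
    name="loan-screening",
    version="0.1.0",
    intended_use="Rank applications for manual review; not for automatic denial.",
    data_sources=["internal applications table (placeholder)"],
    training_method="gradient-boosted trees",
    known_limitations=["Not validated for applicants under 21"],
)
print(record.to_json())
```

A release checklist could refuse to ship any model whose `missing_fields()` list is not empty.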

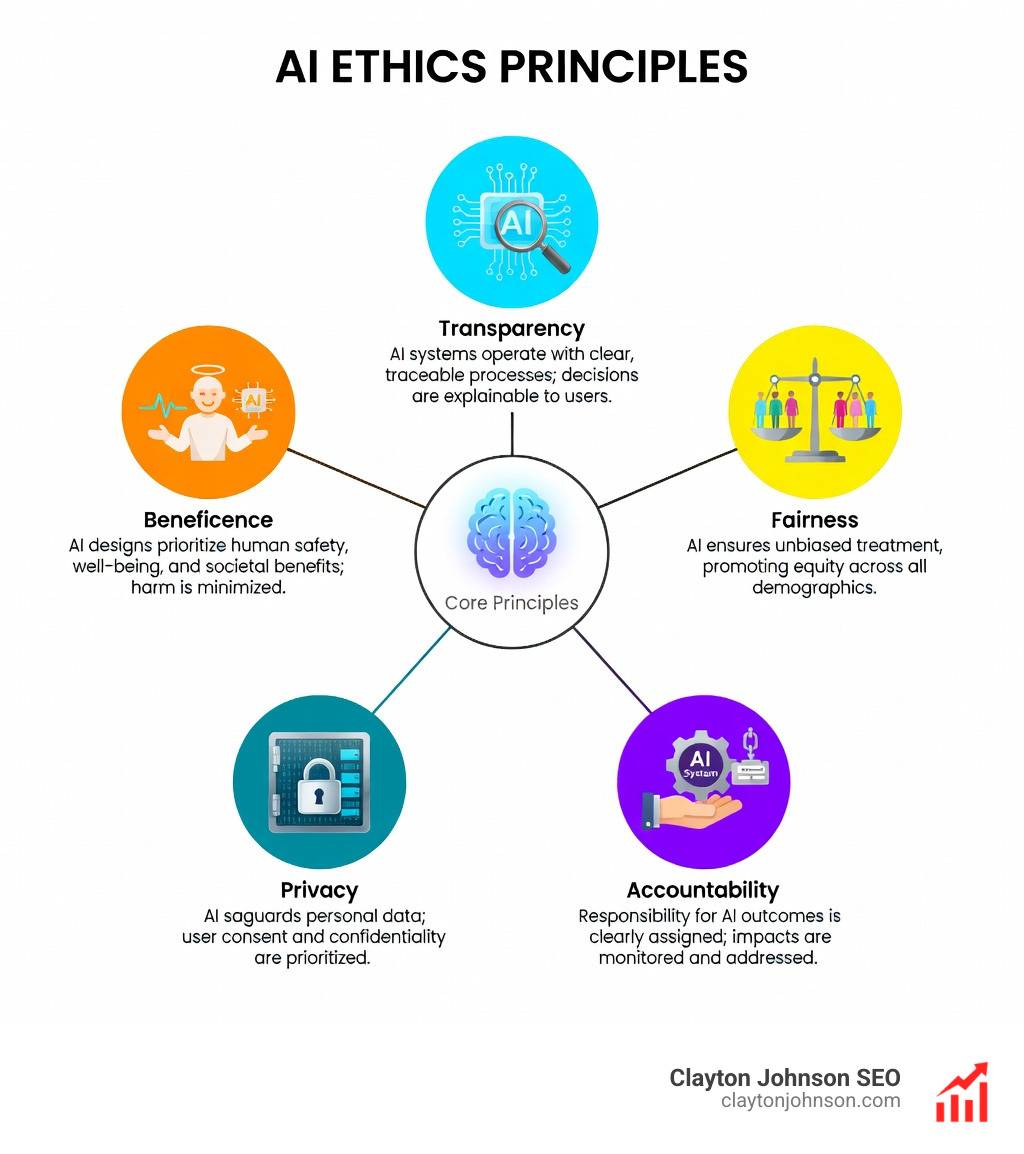

Core Principles for Building Trustworthy AI Systems

To build systems that people actually trust, we focus on several key pillars. While “Ethical AI” often refers to the philosophical “why,” “Responsible AI” is the tactical “how.”

| Feature | Ethical AI | Responsible AI |
|---|---|---|
| Focus | Abstract principles and values | Practical application and compliance |
| Goal | Societal well-being and human rights | Accountability, safety, and fairness |
| Execution | Philosophical frameworks | Governance, audits, and technical mitigations |

Integrating Transparency and Explainability

The “black box” problem is one of AI’s biggest hurdles. If an AI denies a loan application, the user has a right to know why. We use techniques like SHAP or LIME to make model decisions more interpretable.
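SHAP and LIME are separate libraries with their own APIs, which are not reproduced here. To show the idea behind them, the sketch below hand-computes per-feature contributions for a hypothetical linear credit score. For a linear model with independent features, the contribution `weight * (value - average value)` coincides with the SHAP value. All feature names, weights, and baseline values are made-up assumptions.

```python
# Hypothetical linear scoring model: score = BIAS + sum(weight * feature).
WEIGHTS = {"income_k": 0.5, "debt_ratio": -40.0, "years_employed": 2.0}
BIAS = 10.0
# Assumed average feature values over a reference population.
BASELINE = {"income_k": 60.0, "debt_ratio": 0.3, "years_employed": 5.0}


def score(features):
    """Linear score for one applicant."""
    return BIAS + sum(WEIGHTS[k] * features[k] for k in WEIGHTS)


def contributions(features):
    """Per-feature contribution relative to the baseline applicant."""
    return {k: WEIGHTS[k] * (features[k] - BASELINE[k]) for k in WEIGHTS}


applicant = {"income_k": 40.0, "debt_ratio": 0.5, "years_employed": 1.0}
contrib = contributions(applicant)

# The contributions add up to the gap between this applicant and the baseline.
gap = score(applicant) - score(BASELINE)

# List the factors from most negative to most positive.
for name, value in sorted(contrib.items(), key=lambda kv: kv[1]):
    print(f"{name}: {value:+.1f}")
```

An explanation of this kind lets you tell a declined applicant which factors pulled the score down most, instead of only reporting the final number.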

Transparency also means being honest about when a user is interacting with a bot. Whether it’s a chatbot or an AI-generated image, clear disclosure builds trust. We also advocate for the use of model cards, which act like nutrition labels for AI, explaining how a model was built and where it might struggle.

Ensuring Fairness and Inclusivity

Fairness isn’t just about avoiding “bad” data; it’s about actively seeking diverse data. If a facial recognition system is trained on only one demographic, it will perform poorly on others. We must test our software for mislabeling and poor correlations across different groups, specifically looking at protected characteristics like race, gender, and age.
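A minimal sketch of such a test is to compute the false positive rate for each group and compare them. The records, group labels, and the 1.25 ratio threshold below are illustrative assumptions, not a legal or statistical standard.

```python
from collections import defaultdict


def false_positive_rates(records):
    """records: iterable of (group, predicted_positive, actually_positive)."""
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_pos[group] += 1
    return {g: false_pos[g] / negatives[g] for g in negatives}


def max_disparity(rates):
    """Ratio of the highest to the lowest group rate."""
    lo, hi = min(rates.values()), max(rates.values())
    if hi == 0:
        return 1.0  # no false positives anywhere, so no disparity
    return float("inf") if lo == 0 else hi / lo


# Made-up evaluation data: (group, model flagged?, truly positive?)
sample = (
    [("A", True, False)] * 2 + [("A", False, False)] * 8
    + [("B", True, False)] * 4 + [("B", False, False)] * 6
    + [("A", True, True)] * 5 + [("B", True, True)] * 5
)
rates = false_positive_rates(sample)
needs_review = max_disparity(rates) > 1.25  # assumed review threshold
print(rates, needs_review)
```

False positive rate is only one of several fairness measures. Which one matters depends on the harm you are trying to avoid.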

Operationalizing Ethics Throughout the AI Lifecycle

Ethics shouldn’t be an afterthought or a final “check” before launch. It must be woven into the entire development workflow.

The Design Phase

This is where we apply “Ethics by Design.” We anticipate potential misuses—like a chatbot being used for scams—and build in mitigations from day one. We also look at environmental sustainability. Tools like the Data Carbon Ladder help us estimate the CO2 footprint of our projects.

Development and Deployment

During development, we draw on Microsoft’s approach to AI ethics, which emphasizes reliability and safety. Once deployed, the work isn’t over. We need continuous monitoring to catch “model drift,” where the AI’s performance degrades as real-world data diverges from the data it was trained on.
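One common way to watch for drift is the Population Stability Index (PSI), which compares the distribution of a score or feature at training time with what the model sees in production. PSI is brought in here for illustration; it is not something this framework prescribes. The bin edges, sample data, and the 0.2 alert threshold (a widely quoted rule of thumb) are assumptions.

```python
import math


def bin_shares(values, edges):
    """Share of values per bin. Bins are [e0, e1), [e1, e2), ... and the last
    bin includes its upper edge. Values outside the edges are ignored; at
    least one value is assumed to fall inside them."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            upper_ok = v <= edges[i + 1] if is_last else v < edges[i + 1]
            if edges[i] <= v and upper_ok:
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total for c in counts]


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bins.
    Empty bins are floored at eps to keep the logarithm defined."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum(
        (max(ai, eps) - max(ei, eps)) * math.log(max(ai, eps) / max(ei, eps))
        for ei, ai in zip(e, a)
    )


# Made-up model scores at training time and in production.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.8, 0.7]

drift = psi(training_scores, live_scores, edges)
if drift > 0.2:  # assumed alert threshold
    print("Drift alert: review the model before trusting new predictions")
```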

Best Practices for Ethical Data Management

Data is the fuel for AI, and if the fuel is contaminated, the engine will fail.

- Data Minimisation: Only collect what you absolutely need. As the ICO notes, this reduces both privacy risks and environmental impact.

- Anonymization: Use synthetic data or anonymized sets whenever possible to protect individual privacy.

- Provenance Tracking: Know where your data came from. Using datasheets for datasets helps record the “biography” of your data, including any known biases.
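The first two practices can be sketched in a few lines: keep only an allow-list of fields and replace the direct identifier with a keyed hash. Note that keyed hashing is pseudonymization rather than full anonymization, since whoever holds the key can re-link records. The field names, `ALLOWED_FIELDS`, and the key below are hypothetical.

```python
import hashlib
import hmac

ALLOWED_FIELDS = {"age_band", "region", "purchase_total"}  # assumed allow-list
KEY = b"example-key"  # placeholder; a real key belongs in a secrets manager


def pseudonymize(identifier: str, key: bytes) -> str:
    """Keyed SHA-256 hash (HMAC) of an identifier, hex-encoded."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimise(record: dict, key: bytes) -> dict:
    """Drop fields outside the allow-list and swap the email for a pseudonym."""
    slim = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    slim["subject_id"] = pseudonymize(record["email"], key)
    return slim


# Made-up input record.
raw = {
    "email": "jane@example.com",
    "full_name": "Jane Doe",
    "age_band": "30-39",
    "region": "MN",
    "purchase_total": 129.5,
}
clean = minimise(raw, KEY)
print(sorted(clean))
```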

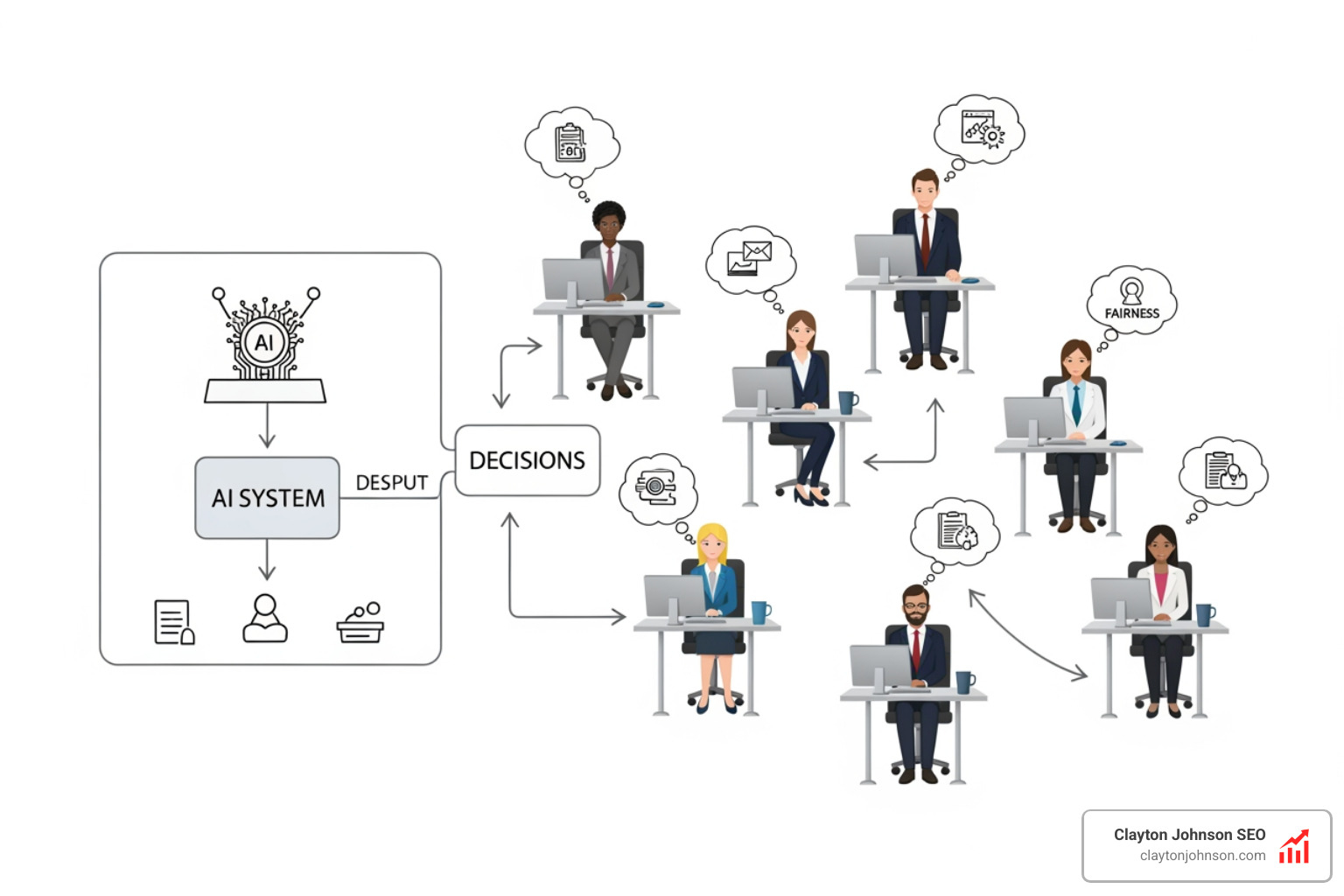

Establishing Human Oversight and Accountability

A computer should never make a final management decision. We believe in the “Human-in-the-Loop” (HITL) model. This means having a human review high-stakes decisions—like medical diagnoses or legal judgments—before they are finalized.
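A minimal sketch of how such a review gate might be wired in, assuming each automated decision carries a category and a confidence score. The `Decision` type, the `HIGH_STAKES` set, and the 0.9 confidence floor are hypothetical choices for illustration.

```python
from dataclasses import dataclass

HIGH_STAKES = {"medical_diagnosis", "legal_judgment", "loan_denial"}  # assumed
CONFIDENCE_FLOOR = 0.9  # assumed threshold


@dataclass
class Decision:
    kind: str
    model_output: str
    confidence: float


def route(decision: Decision) -> str:
    """Return 'human_review' or 'auto_approve' for a model decision."""
    if decision.kind in HIGH_STAKES:
        return "human_review"  # high-stakes decisions always get a reviewer
    if decision.confidence < CONFIDENCE_FLOOR:
        return "human_review"  # low-confidence outputs are escalated too
    return "auto_approve"


print(route(Decision("medical_diagnosis", "benign", 0.99)))
print(route(Decision("email_tagging", "newsletter", 0.95)))
```

The design choice here is that confidence never overrides stakes: a high-stakes decision goes to a person even when the model is very sure.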

We also need clear decommissioning protocols. When a system is no longer meeting its ethical standards or its purpose has expired, we must have a plan to take it offline and securely delete the associated data.

Frequently Asked Questions about AI Ethics

What is the difference between ethical AI and responsible AI?

Ethical AI is the philosophical foundation—it’s about defining the values (like fairness and dignity) that AI should uphold. Responsible AI is the practical implementation of those values through governance, technical tools, and legal compliance. You need both to succeed.

How can small businesses implement AI ethics without a large budget?

You don’t need a massive team to be ethical. Start by using open-source tools for bias detection and following established frameworks like the UNESCO guidelines. Focus on transparency—be honest with your customers about how you use AI. Simple steps like data minimisation also cost nothing but significantly reduce your risk.

What are the most common types of bias in AI algorithms?

- Historical Bias: When the data reflects existing societal prejudices.

- Representation Bias: When certain groups are under-represented in the training data.

- Measurement Bias: When the metrics used to train the AI are flawed or inconsistent.

- Aggregation Bias: When a one-size-fits-all model is applied to diverse groups, washing out important differences.

Conclusion

At Demandflow, we believe that clarity leads to structure, and structure leads to leverage. Learning how to develop AI ethics guidelines isn’t just about avoiding lawsuits; it’s about building a structured growth architecture that lasts. When your AI is transparent, fair, and accountable, it becomes a powerful engine for compounding growth.

By prioritizing human-centric technology and robust governance, you ensure that your innovation serves your mission without compromising your values. If you’re ready to build a more trustworthy AI ecosystem, explore our resources on AI Governance to see how we can help you scale responsibly.