New AI Models Are Worse at SEO and Here is the Proof

Newer AI Models Are Getting Worse at SEO Tasks — Here’s the Data

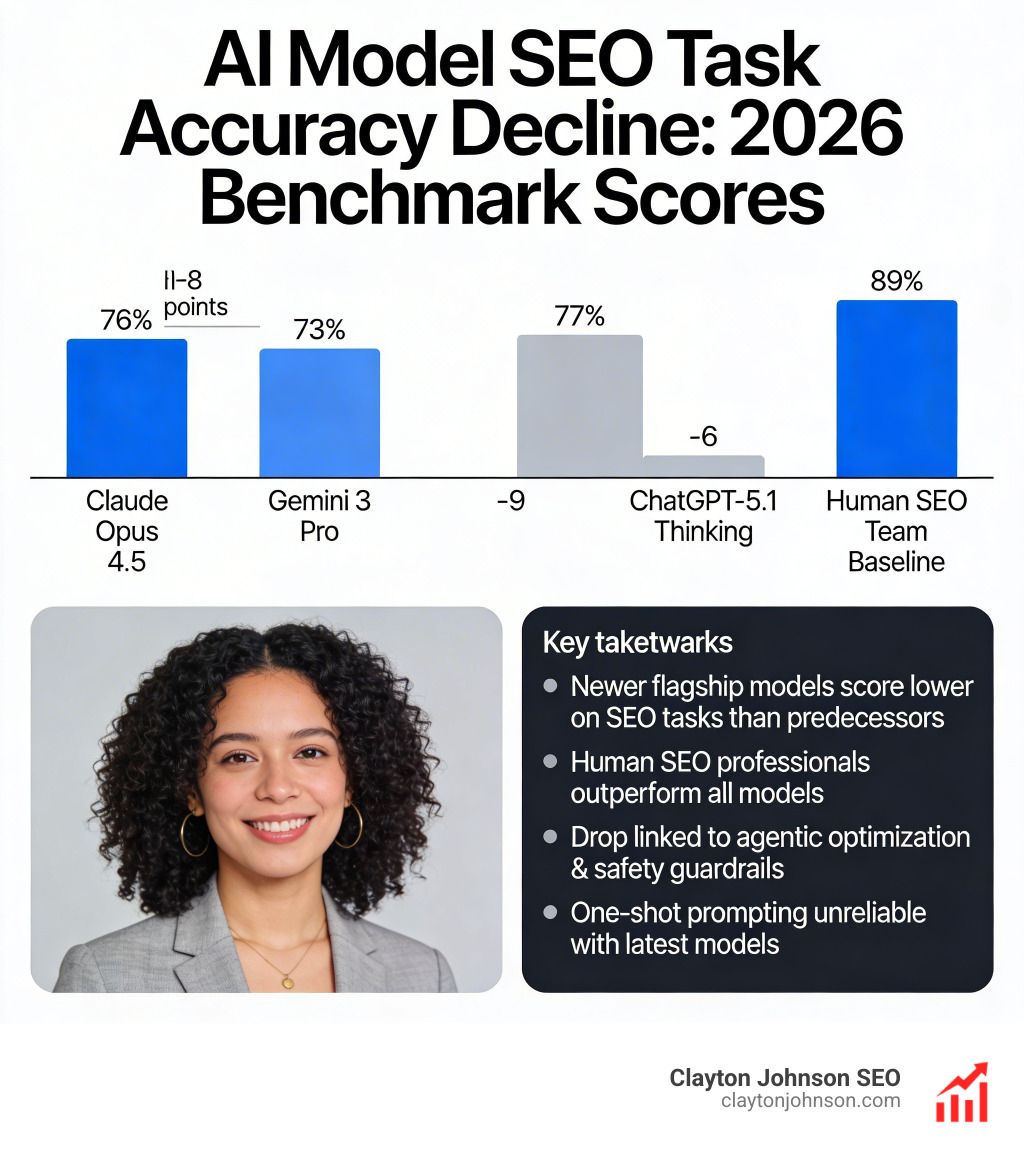

AI model benchmarks SEO performance has dropped sharply across the latest flagship models. Here is a quick snapshot of where the top models currently stand:

| AI Model | SEO Task Accuracy | Change |

|---|---|---|

| Claude Opus 4.5 | 76% | -8 points |

| Gemini 3 Pro | 73% | -9 points |

| ChatGPT-5.1 Thinking | 77% | -6 points |

| Human SEO Team | 89% | Baseline |

Key takeaways at a glance:

- Newer flagship models score lower on SEO tasks than their predecessors

- Human SEO professionals still outperform every model tested

- The drop is linked to agentic optimization and safety guardrails — not model intelligence

- One-shot prompting no longer works reliably with the latest models

Most marketers assume newer AI means better SEO output. The data says the opposite.

A benchmark study analyzing over 184,000 queries across 20 LLM models found a consistent pattern: the latest flagship releases from Anthropic, Google, and OpenAI all regressed on SEO task accuracy compared to their previous versions. The gap between human SEO expertise and AI output is widening, not closing.

This is not a glitch. It is a structural shift in how these models are built — and it has real consequences for anyone using AI in their SEO workflow.

I’m Clayton Johnson, an SEO strategist with deep experience building AI-augmented marketing systems and tracking AI model benchmarks SEO performance across real client workflows. This guide breaks down the data, explains what is driving the regression, and shows you how to adapt.

AI model benchmarks SEO definitions:

- How to assess LLM scalability enterprise

- How test enterprise models

- Model selection SEO optimization

The Regression of Flagship AI Model Benchmarks SEO

In technology, we are conditioned to believe that “newer equals better.” However, recent data from the Previsible AI SEO Benchmark has shattered this myth. We are seeing a statistically significant regression in how flagship models handle standard SEO tasks, such as technical audits, keyword intent classification, and on-page optimization logic.

The numbers tell a sobering story. Claude Opus 4.5, once the gold standard for nuanced reasoning, saw its SEO task accuracy drop from 84% to 76%. Gemini 3 Pro followed a similar trajectory, falling 9% in performance compared to the 2.5 Pro version. Even the highly anticipated “Thinking” models from OpenAI have shown a 6% decline in accuracy for SEO-specific workflows.

This regression is particularly visible in technical SEO and strategy categories. While LLMs still perform relatively well in creative content generation (averaging 85% accuracy), they struggle with complex e-commerce SEO logic, where scores have plummeted to an average of 63%. For practitioners relying on these tools for site migrations or technical troubleshooting, this 20-point gap between content and technical execution is a dangerous trap.

Why Newer Models Struggle with AI Model Benchmarks SEO

If the models are getting “smarter” in general, why are they getting worse at SEO? The answer lies in three specific areas: safety guardrails, agentic optimization, and reasoning drift.

- Safety Guardrails Overload: Newer models are heavily tuned to avoid “harmful” content. Unfortunately, technical SEO tasks—like requesting a crawl of a site or analyzing robots.txt files—can sometimes trigger these safety filters. Models may refuse to perform a technical audit because the request resembles a cybersecurity probe.

- The Agentic Gap: Modern LLMs are being optimized to function as “agents” that perform multi-step tasks rather than “chatbots” that answer one-shot prompts. This shift means they often overthink simple SEO questions, leading to what we call “reasoning drift.” Instead of giving a direct answer, the model might hallucinate complex scenarios that don’t apply.

- Probabilistic Errors: At their core, LLMs are probabilistic. They guess the next best word. In SEO, where a single character in a canonical tag or a trailing slash in a URL matters, “guessing” leads to failure. This is why we often see better results when we compare the major AI models and find that older, more stable versions sometimes outperform the latest “thinking” models.

Benchmarking AI Search Visibility and Brand Mentions

While accuracy in performing SEO tasks is down, the importance of appearing in AI search results is at an all-time high. We are no longer just optimizing for Google’s blue links; we are optimizing for “Share of Model” (SoM).

Research into brand mention rates shows a massive disparity between platforms. Microsoft Copilot currently leads the pack with a 95.29% competitor mention rate, meaning if a user asks about a product category, Copilot is almost guaranteed to cite specific brands. On the other end of the spectrum, models like Claude and DeepSeek often provide zero-citation responses, even when they mention brand names.

Perplexity has emerged as a powerhouse for commercial visibility, citing business and commercial sites in 77.6% of its responses. For brands, this means that even if traditional Google CTR is dropping due to AI Overviews, visibility within the “answer engine” can compensate—if you are the one being cited.

Impact of Query Intent on AI Model Benchmarks SEO

Not all queries are treated equally by AI models. The density of competition changes based on the user’s intent:

- BOFU (Bottom of Funnel): Queries with high purchase intent (e.g., “best CRM for small business”) trigger 2.5x more competitor mentions than awareness queries. The average BOFU response mentions 4.78 competitors, creating a “battlefield” where brand positioning is critical.

- TOFU (Top of Funnel): Awareness queries are less crowded, averaging only 1.92 mentions.

Interestingly, we’ve found that Claude’s concise nature makes it a favorite for users seeking quick answers, but its lack of citations makes it harder for SEOs to track ROI. Meanwhile, Google AI Overviews provide highly detailed responses (averaging over 6,000 characters), which can cut traditional CTR by 15-20% but offer more opportunities for “source” placement.

Essential SEO Performance Benchmarks for the AI Era

To survive the shift in AI model benchmarks SEO, we must maintain a dual-track strategy. We need to hit traditional technical targets while simultaneously preparing for AI-driven discovery.

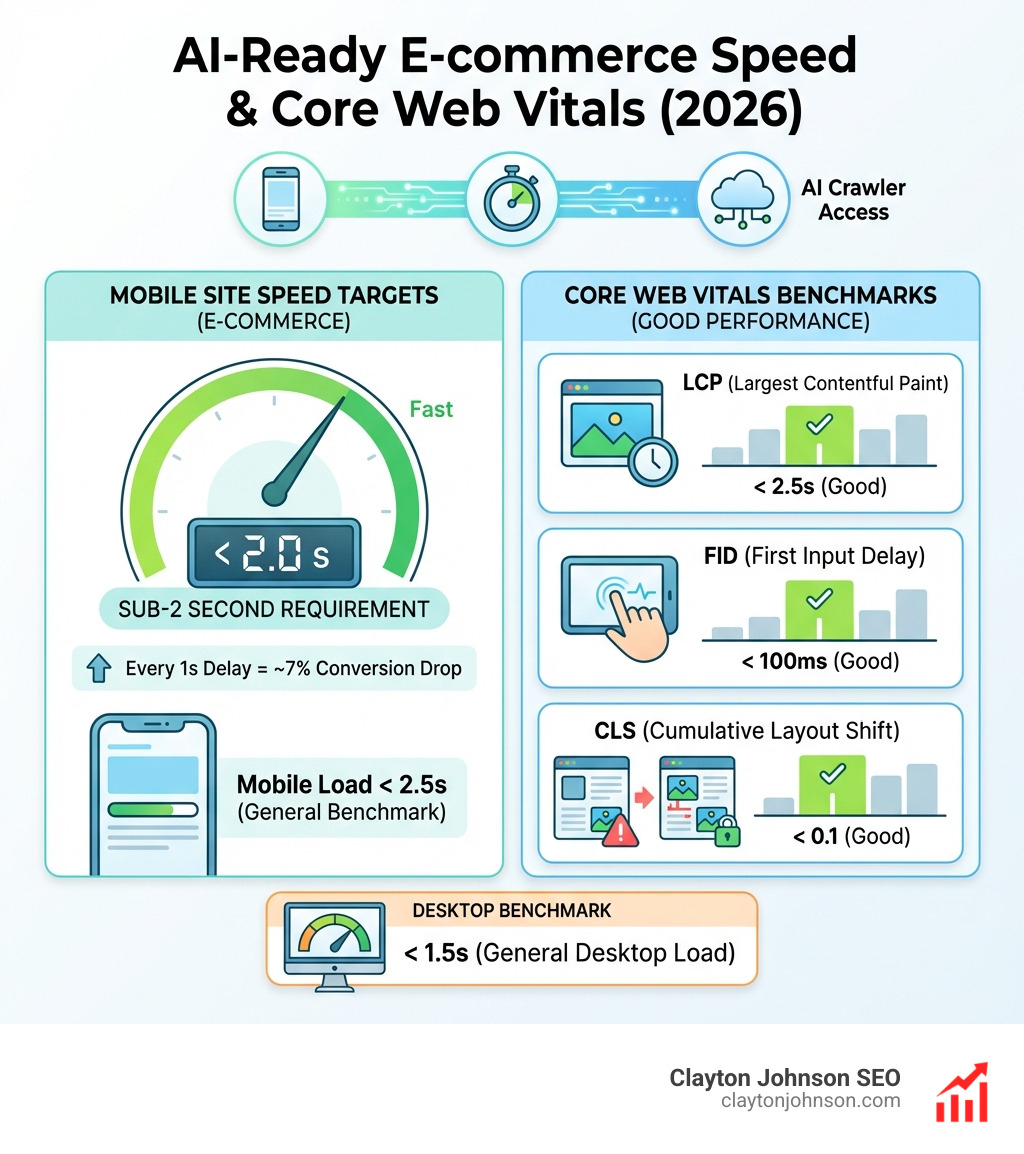

Site speed remains the “silent killer” of SEO. Even in an AI world, if a model’s crawler (like GPTBot) cannot efficiently access your site, you won’t be cited. Current benchmarks demand a mobile site speed of less than 2.5 seconds and desktop speeds under 1.5 seconds, adhering to Google’s Core Web Vitals guidelines. For e-commerce, the threshold is even tighter—sub-2 seconds is the requirement to prevent a 7% drop in conversions for every second of delay.

Leading vs Lagging SEO KPIs

We categorize SEO metrics into two buckets: what happened (lagging) and what is happening (leading).

- Leading KPIs (The Inputs): These are metrics we can control today. They include web performance scores, the growth of your organic keyword footprint, and the health of your technical SEO (crawlability and indexability).

- Lagging KPIs (The Results): These include organic traffic, engaged sessions, and total backlink growth.

In our experience, a healthy competitive site should aim for 15-20% annual backlink growth. However, quality now trumps quantity. With the rise of AI-generated content “flooding” the web, search engines are placing higher value on authentic discussions from forums like Reddit and high-authority industry resources.

Top AI SEO Tools for Tracking Generative Visibility

Tracking your rankings in a world of generative search requires a new toolkit. Traditional rank trackers that only look at positions 1-10 are no longer sufficient. You need tools that can monitor your brand’s “Share of Model” and sentiment within AI-generated answers.

Platforms like SE Ranking and Search Atlas have integrated AI-powered analytics that show how often your brand appears in generative results. For enterprise-level tracking, tools like SE Visible and Atomic AGI provide deep dives into how different LLMs view your brand compared to competitors.

Content Optimization and AI-Powered Platforms

The goal isn’t just to write content; it’s to build a “structured growth architecture.” This is where AI writing assistants like Jasper or Clearscope come in, helping to structure data in a way that AI models can easily digest.

When choosing a tool, we recommend using a model matchmaker guide to align the specific AI model with your task. For example, while GPT-4o-mini is excellent for diverse sourcing and keyword research, Claude 3.5 Sonnet remains superior for maintaining a consistent brand voice in long-form content.

Frequently Asked Questions about AI SEO Performance

Why are newer AI models performing worse on SEO tasks?

Newer models are being optimized for deep reasoning and safety rather than one-shot accuracy. This “agentic optimization” means they often over-complicate simple SEO requests or refuse them entirely due to strict safety guardrails. To fix this, we recommend moving away from raw prompts and using “contextual containers” like Custom GPTs that are pre-loaded with your brand’s SEO rules.

How do AI Overviews affect traditional CTR benchmarks?

AI Overviews typically cut CTR for the top organic positions by 15-20%. Because the AI provides the answer directly on the search results page, users have less incentive to click through. To combat this, SEOs must focus on “zero-click” optimization—ensuring their brand is cited within the AI Overview itself.

Which AI model is currently best for SEO content generation?

Claude 3.5 Sonnet and GPT-4o are currently the top performers for content. They strike the best balance between SEO logic and natural language. However, adding an “SEO Expert” persona to your prompts can improve performance across all models by an average of 2.8%.

Conclusion

The data is clear: the era of relying on “out-of-the-box” AI for high-level SEO strategy is over. As flagship models regress in their ability to handle technical SEO tasks, the value of human expertise and structured strategy has never been higher.

At Demandflow, we believe that most companies don’t lack tactics—they lack a structured growth architecture. We help founders and marketing leaders move beyond the “chaos of prompts” into a system driven by clarity and leverage. Whether you are navigating the complexities of different AI models or trying to scale your organic traffic by 250%, the key is to build a foundation that compounds over time.

Don’t let your SEO strategy be a victim of AI reasoning drift. It is time to treat your growth like infrastructure.