Don’t Overpay for Intelligence: How to Choose Cost Effective LLMs

Why Paying More for AI Doesn’t Mean Getting More

Choosing cost-effective LLMs is one of the most important decisions you can make for your AI budget, and most teams get it wrong by defaulting to the most expensive model available.

Here is a quick framework to guide your selection:

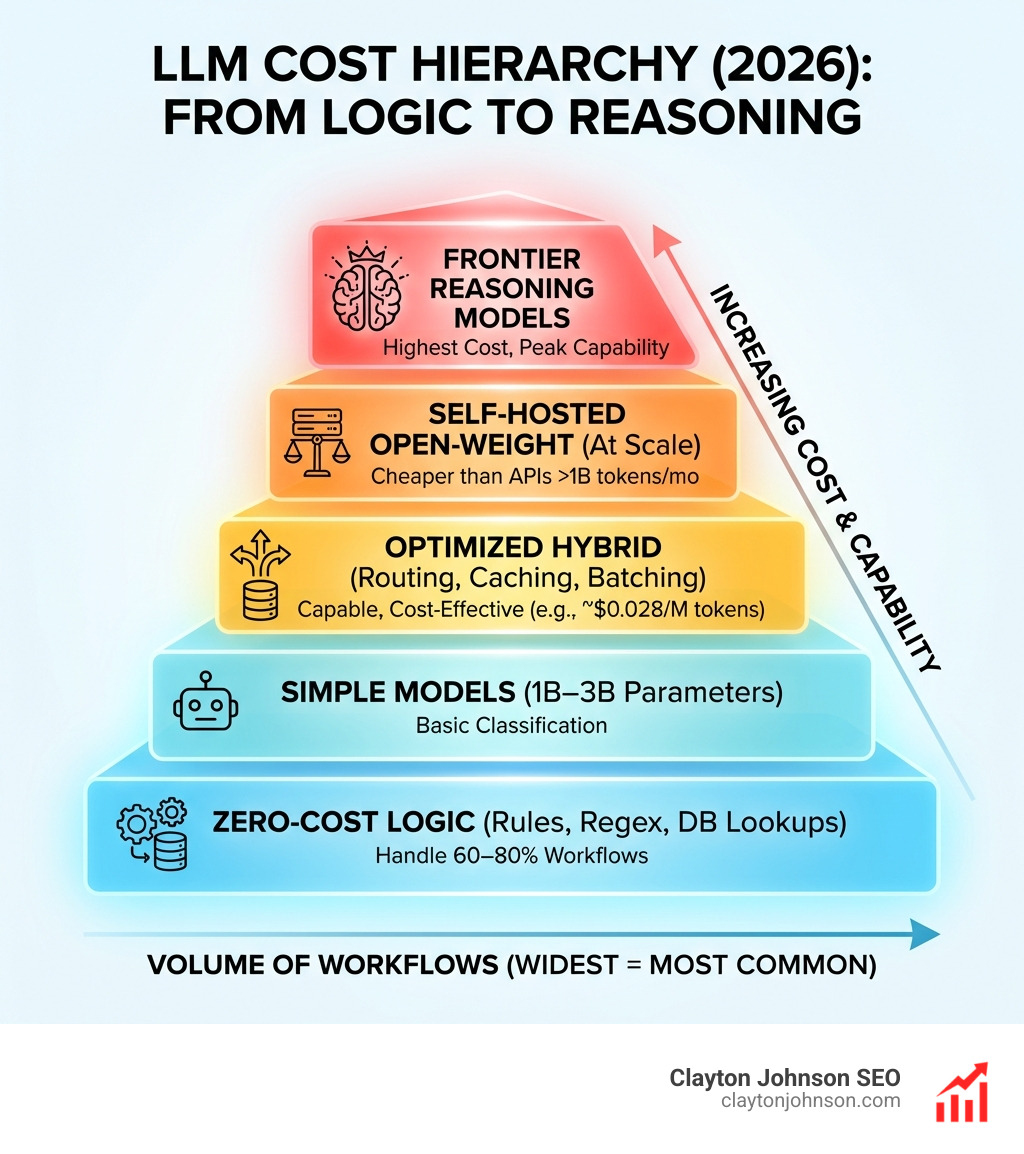

- Start without an LLM: Rules, regex, and database lookups handle 60–80% of workflows at zero cost

- Match model size to task complexity: Simple classification needs a 1B–3B model, not GPT-4

- Compare price-to-performance ratios: DeepSeek V3 delivers near-frontier quality at roughly $0.14 per million input tokens

- Apply caching and batching: Prompt caching alone can cut costs by up to 90% on repeated context

- Use tiered routing: Route simple queries to small models, escalate only when needed

- Monitor continuously: Regular audits uncover 30–50% savings through simple configuration changes

- Evaluate self-hosting at scale: Open-weight models like Llama become cheaper than APIs above ~1 billion tokens per month

The gap between smart and naive LLM usage is dramatic. A naive GPT-4o setup running 10,000 interactions per month costs roughly $75. An optimized hybrid architecture handling the same workload costs under $1. That is not a small efficiency gain — that is a structural advantage.

LLM pricing has dropped roughly 90% since the first generation of frontier models launched. What was once a $30-per-million-token decision now has capable alternatives under $0.10. The market has matured, but most teams haven’t updated their selection logic to match.

I’m Clayton Johnson, an SEO strategist and growth systems architect who has spent years building AI-augmented marketing workflows, including cost-optimized LLM stacks, for founders and marketing leaders at the $500K–$20M ARR stage. My work on choosing cost-effective LLMs is grounded in real production architectures, not theoretical benchmarks. In the sections ahead, we’ll break down exactly how to build a model selection strategy that compounds your efficiency over time.

The Economics of Intelligence: How to Choose Cost-Effective LLMs

When we talk about choosing cost-effective LLMs, we aren’t just looking for the lowest price tag. We are looking for the best “intelligence per dollar.” In the early days of generative AI, pricing was high and options were few. Today, the landscape is a hyper-competitive market where prices change almost monthly.

To master the economics of AI, we have to look beyond the headline price. Most providers use token-based billing, where you are charged for both the “input” (the prompt you send) and the “output” (the response the AI generates). Crucially, output tokens often cost 3x to 10x more than input tokens. If your application generates long-form blog posts, your costs will scale much faster than if you are just generating short SEO meta descriptions.
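
To make the input/output asymmetry concrete, here is a minimal sketch of token-based billing. The `Pricing` class, the `request_cost` helper, and the prices are all assumptions chosen for illustration (a hypothetical model whose output tokens cost 4x its input tokens), not any provider’s real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD (illustrative values, not real quotes)."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request under token-based billing."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


# Hypothetical model whose output tokens cost 4x its input tokens.
example = Pricing(input_per_million=0.50, output_per_million=2.00)

# Same 400-token prompt, very different output lengths.
meta_description = request_cost(example, input_tokens=400, output_tokens=60)
blog_post = request_cost(example, input_tokens=400, output_tokens=2_000)

print(f"meta description: ${meta_description:.5f} per request")
print(f"blog post:        ${blog_post:.5f} per request")
```

With these assumed numbers, the long-form request costs more than ten times the short one even though the prompt is identical, because the bill is dominated by output tokens.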

Another factor is the context window. This is the amount of information the model can “remember” or process in a single go. While massive context windows (up to 2 million tokens) are impressive, they come with a “long-context penalty” on some platforms: prompts above a certain length are billed at a higher per-token rate, so the more data you stuff into the prompt, the more each token in that request can cost.

For a deeper dive into managing these expenses at an organizational level, check out The Complete Guide to LLM Cost Optimization or our own framework on how to assess LLM scalability without breaking the bank.

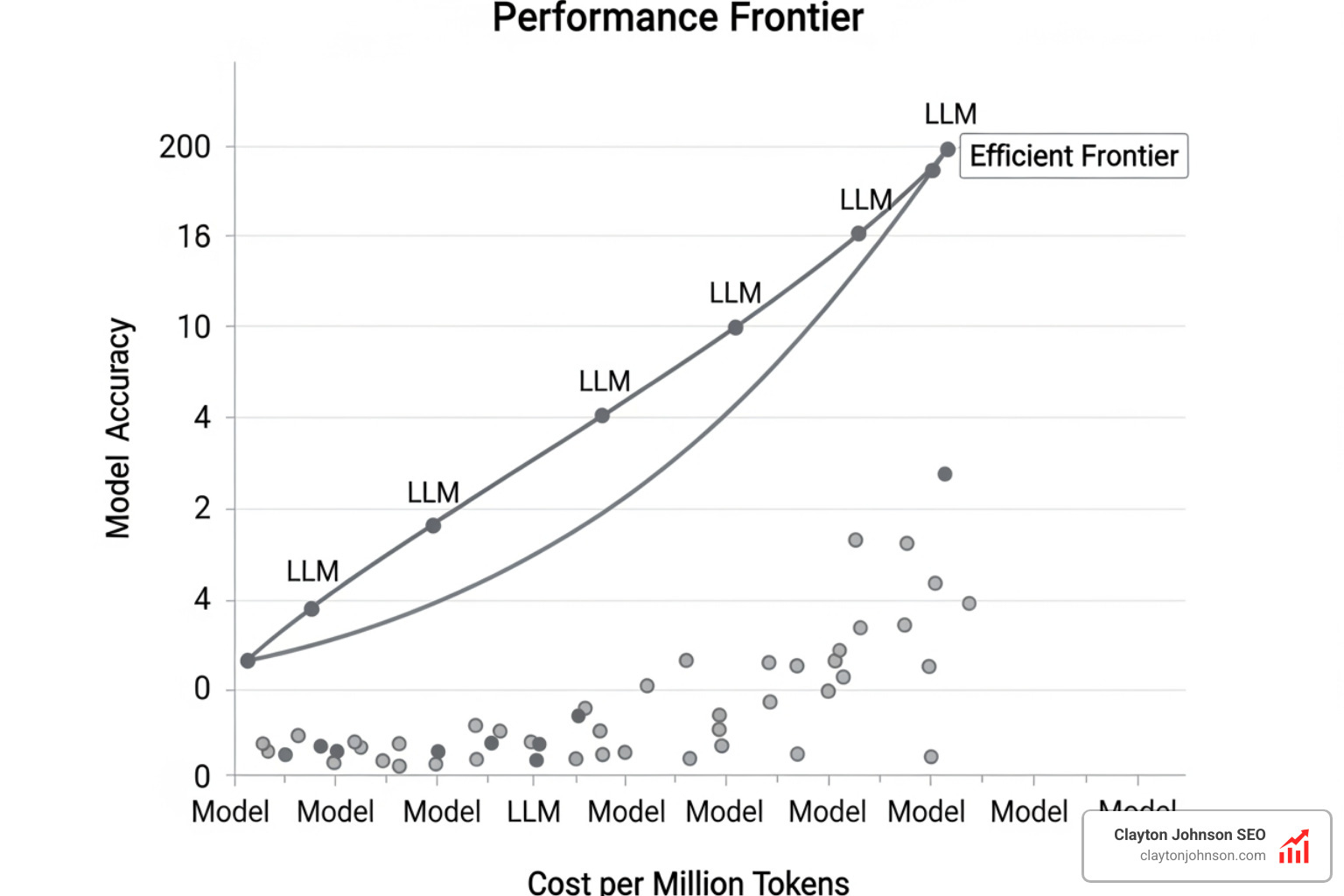

Evaluating Price-to-Performance Ratios

In the current market, “mini” models are the unsung heroes of ROI. Models like GPT-4o-mini, Gemini Flash, and Claude Haiku offer performance that rivals the giants of just a few years ago but at a fraction of the cost.

For example, DeepSeek V3 has sent shockwaves through the industry by offering near-GPT-4 quality for roughly $0.14 per million input tokens. To put that in perspective, using a flagship model for basic tasks is like hiring a PhD to file your taxes when a simple software program would do. We recommend keeping an eye on the Cost Efficiency Leaderboard to see which models are currently winning the value war.

Understanding Hidden Context Costs

We often see developers make the mistake of “prompt inflation.” This happens when you send the same 5,000-word background document with every single query. Without optimization, you are paying for those 5,000 tokens every time you ask the AI a simple question.

This is where Retrieval-Augmented Generation (RAG) becomes a cost-saving tool. Instead of sending the whole library, you only send the specific “snippets” the AI needs to answer the question. It’s the difference between buying the whole bookstore and just checking out the one page you need. You can learn how to build these efficient systems in the ultimate guide to modular RAG pipeline components.
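
As a rough illustration of the idea, the sketch below picks the most relevant chunk for a question and compares the size of the resulting prompt with sending everything. It is a toy: the keyword-overlap ranking in `top_snippets` is a stand-in we made up for a real embedding search, and the three-line `knowledge_base` is invented sample data.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_snippets(question: str, chunks: list, k: int = 1) -> list:
    """Rank chunks by shared words with the question (toy stand-in for embedding search)."""
    question_words = tokenize(question)
    ranked = sorted(
        chunks,
        key=lambda chunk: len(question_words & tokenize(chunk)),
        reverse=True,
    )
    return ranked[:k]


# Invented sample data standing in for a much larger background document.
knowledge_base = [
    "Refund policy: customers can request a refund within 30 days of purchase.",
    "Shipping: orders ship within 2 business days from our warehouse.",
    "Support hours: the help desk is open Monday to Friday, 9am to 5pm.",
]

question = "How many days do I have to request a refund?"
snippets = top_snippets(question, knowledge_base, k=1)

# Word counts are used here as a crude proxy for token counts.
full_prompt_words = sum(len(chunk.split()) for chunk in knowledge_base)
rag_prompt_words = sum(len(chunk.split()) for chunk in snippets)

print(snippets[0])
print(f"context size: {rag_prompt_words} words instead of {full_prompt_words}")
```

The saving scales with the size of the library: the retrieved context stays roughly constant while the “send everything” prompt grows with every document you add.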

Benchmarking for Real-World Value

How do we know if a model is actually “good enough”? We use benchmarks. But be careful — public benchmarks like MMLU can be “gamed” by model trainers. For real-world value, we prefer benchmarks that reflect how humans actually interact with AI.

- HumanEval+: This measures how well an LLM can solve actual coding problems. It’s a great proxy for logic and reasoning.

- Chatbot Arena: This is a “blind taste test” where users vote on which AI gave a better answer. It’s widely considered the gold standard for “vibes” and general helpfulness.

- SWE-bench: This tests how well a model can resolve real GitHub issues, which is the ultimate test for software engineering tasks.

By looking at Chatbot Arena user-based rankings, we can see that mid-tier models often rank surprisingly close to premium ones, which suggests that choosing cost-effective LLMs often leads you away from the most expensive names.

Matching Use Cases to Cost-Effective LLMs

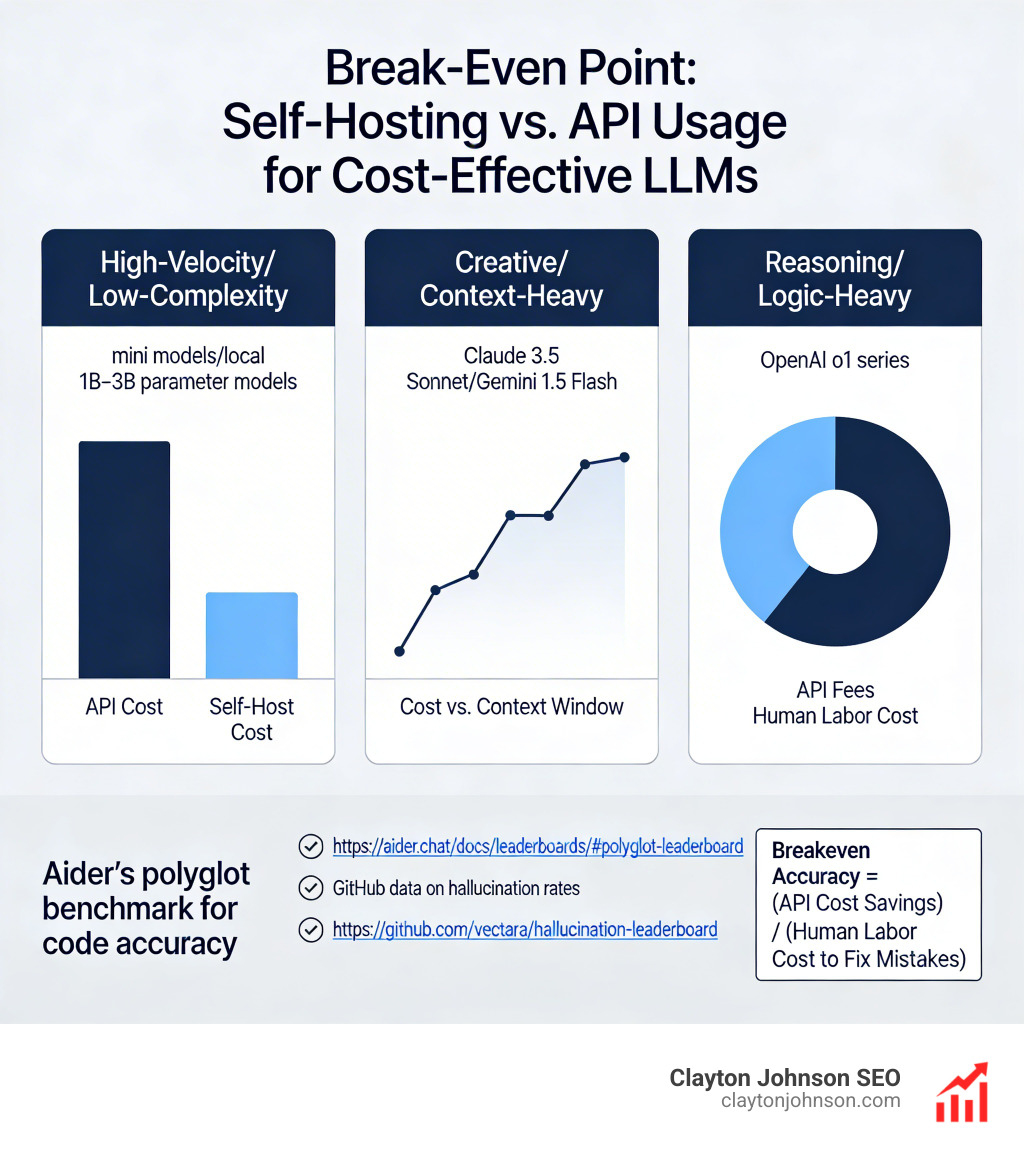

We find that tasks generally fall into three buckets:

- High-Velocity/Low-Complexity: Tasks like sentiment analysis, language translation, or basic classification. These should almost always go to “mini” models or even local 1B–3B parameter models.

- Creative/Context-Heavy: Writing blog posts or analyzing long documents. Here, models like Claude 3.5 Sonnet or Gemini 1.5 Flash excel because they balance creativity with large context windows.

- Reasoning/Logic-Heavy: Complex coding, math, or multi-step strategic planning. This is the only place where we justify the cost of “Reasoning” models (like the OpenAI o1 series).

For developers, Aider’s polyglot benchmark for code accuracy is a fantastic resource for matching specific programming languages to the most efficient model.

Identifying Hallucination Rates and Accuracy Targets

A “cheap” model is very expensive if it gives you the wrong answer 20% of the time. In Minneapolis, businesses we work with prioritize reliability. If you are using AI for customer support, a hallucination (making things up) can lead to lost revenue or legal headaches.

We use the GitHub data on hallucination rates to identify which models stay grounded in facts. Interestingly, some budget models now have lower hallucination rates than older flagship models. We always calculate a “breakeven accuracy.” If a model saves you $100 in API fees but costs you $200 in human labor to fix its mistakes, it’s not cost-effective.
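
One way to sketch the “breakeven accuracy” calculation is to add the human cost of fixing mistakes to the API bill. The function names and every number below are assumptions for illustration; they are chosen so the cheap model saves $100 in API fees but adds $200 in correction labor, mirroring the example above.

```python
def total_monthly_cost(api_cost: float, requests: int,
                       error_rate: float, fix_cost_per_error: float) -> float:
    """API spend plus the human labor needed to correct wrong answers."""
    return api_cost + requests * error_rate * fix_cost_per_error


def breakeven_error_rate(cheap_api_cost: float, premium_api_cost: float,
                         premium_error_rate: float, requests: int,
                         fix_cost_per_error: float) -> float:
    """Highest error rate at which the cheap model still costs no more overall."""
    api_savings = premium_api_cost - cheap_api_cost
    return premium_error_rate + api_savings / (requests * fix_cost_per_error)


# Assumed workload: 10,000 requests per month, $2.00 of labor per wrong answer.
REQUESTS = 10_000
FIX_COST = 2.00

premium = total_monthly_cost(api_cost=150.0, requests=REQUESTS,
                             error_rate=0.02, fix_cost_per_error=FIX_COST)
cheap = total_monthly_cost(api_cost=50.0, requests=REQUESTS,
                           error_rate=0.03, fix_cost_per_error=FIX_COST)
limit = breakeven_error_rate(cheap_api_cost=50.0, premium_api_cost=150.0,
                             premium_error_rate=0.02, requests=REQUESTS,
                             fix_cost_per_error=FIX_COST)

print(f"premium model total: ${premium:.2f}")
print(f"cheap model total:   ${cheap:.2f}")
print(f"cheap model breaks even at an error rate of {limit:.1%}")
```

Under these assumptions the cheap model only pays off if its error rate stays at or below the breakeven value; at 3% it ends up costing more overall despite the lower API bill.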

Advanced Optimization: Cutting Costs by 90%

If you really want to win at choosing cost-effective LLMs, you have to move beyond just picking a model. You need to optimize the way you use it.

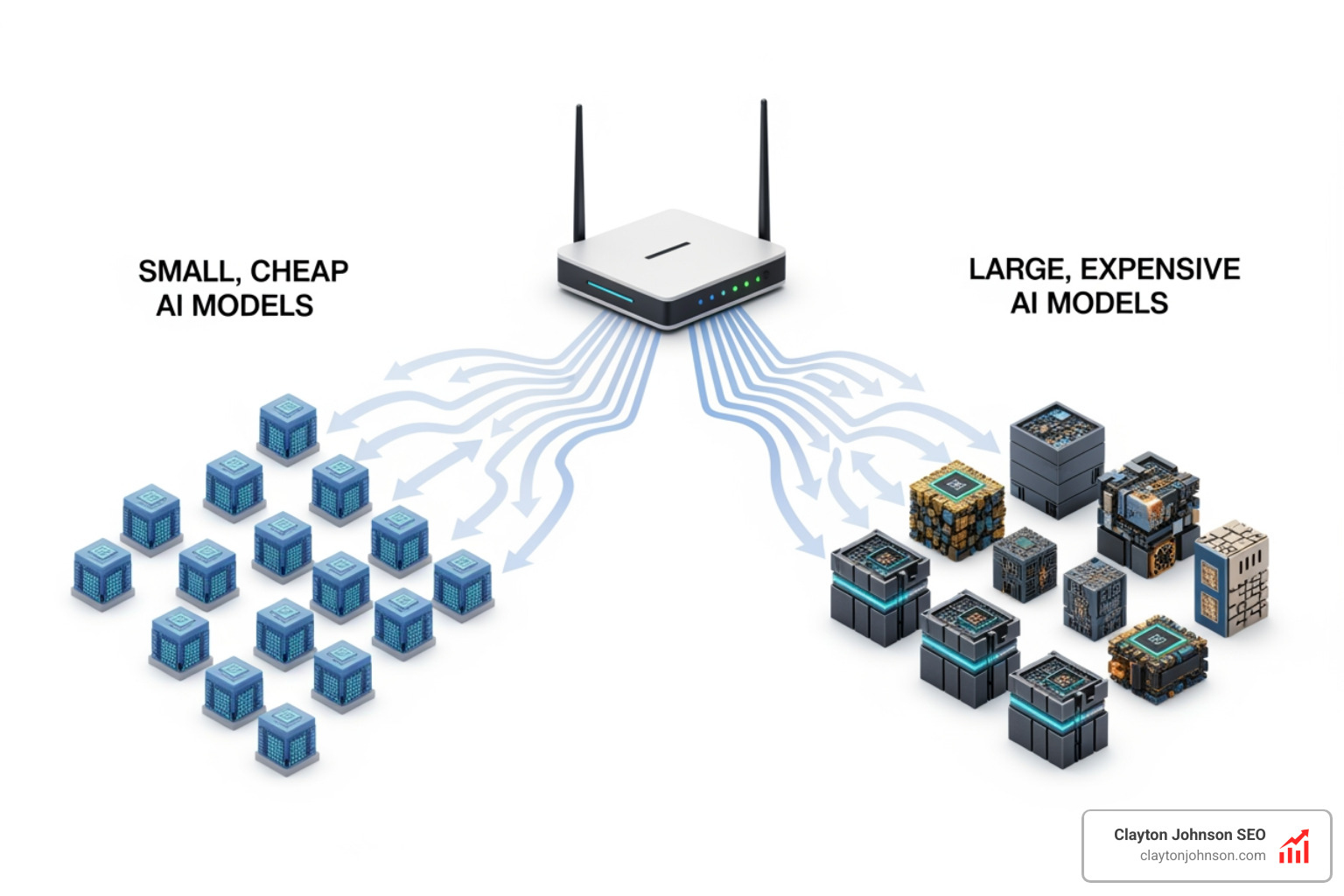

Tiered Architectures for Cost-Effective LLMs

The most sophisticated setups we build use a “Router” architecture. Imagine a receptionist at a hospital. If you have a scratch, they send you to a nurse (a small, cheap model). If you have a broken leg, they send you to a doctor (a medium model). Only if you need heart surgery do they call in the specialist (the expensive frontier model).

By using a tiny 1B model as a “classifier” to decide where a query should go, we’ve seen companies reduce their AI bill by 90% without any drop in quality. You can find inference platforms that won’t make you grow a beard waiting for these complex routings to execute.
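
The routing idea can be sketched in a few lines. This is a simplified illustration, not a production router: `classify_complexity` uses hand-written keyword rules as a stand-in for the small classifier model, and the tier names and handler functions are hypothetical placeholders for real model calls.

```python
from typing import Callable, Dict, Tuple

# Surface cues that we assume signal a reasoning-heavy request.
REASONING_CUES = ("prove", "debug", "step by step", "architecture", "strategy")


def classify_complexity(query: str) -> str:
    """Toy stand-in for the small classifier model: pick a tier from surface cues."""
    text = query.lower()
    if any(cue in text for cue in REASONING_CUES):
        return "frontier"
    if len(text.split()) > 40:
        return "medium"
    return "small"


def route(query: str, handlers: Dict[str, Callable[[str], str]]) -> Tuple[str, str]:
    """Send the query to the handler for its tier and return (tier, response)."""
    tier = classify_complexity(query)
    return tier, handlers[tier](query)


# Placeholder handlers; in practice each would call a different model.
handlers = {
    "small": lambda q: f"[small model] {q}",
    "medium": lambda q: f"[medium model] {q}",
    "frontier": lambda q: f"[frontier model] {q}",
}

for query in ("What are your opening hours?",
              "Debug this race condition step by step"):
    tier, response = route(query, handlers)
    print(f"{tier}: {response}")
```

The design point is that the classification step must be far cheaper than the models it protects; a rule set or a tiny model both qualify, as long as misrouted queries can still be escalated.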

Leveraging Structured Outputs and Batching

Two technical tricks can save you a fortune:

- Prompt Caching: Platforms like Anthropic and OpenAI now offer discounts (up to 90%) if you send the same “context” repeatedly. If your system prompt is 2,000 words long, you only pay the full price once; every subsequent use is pennies.

- Batching: If you don’t need an answer right now (e.g., you’re processing 10,000 SEO descriptions overnight), use the Batch API. OpenAI, for example, offers a 50% discount for tasks that can wait 24 hours.

For more on this, see the OpenAI guide to structured outputs to ensure your AI doesn’t waste tokens on “fluff” and gives you exactly the JSON data you need.
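
To see how the two discounts change a monthly bill, here is a back-of-the-envelope sketch. It is deliberately simplified: the price and token counts are invented, the 90% and 50% discounts are the “up to” figures quoted above, and it ignores details such as cache-write surcharges, cache expiry, and output tokens.

```python
def monthly_input_cost(requests: int, shared_tokens: int, unique_tokens: int,
                       price_per_million: float,
                       cache_discount: float = 0.0,
                       batch_discount: float = 0.0) -> float:
    """Estimate monthly input spend in USD.

    shared_tokens: context repeated in every request (e.g. the system prompt).
    unique_tokens: tokens that differ per request (e.g. the user question).
    cache_discount: fraction taken off the shared tokens when they are cached.
    batch_discount: fraction taken off the whole bill for batch processing.
    """
    shared_billed = shared_tokens * (1 - cache_discount)
    total = requests * (shared_billed + unique_tokens) * price_per_million / 1_000_000
    return total * (1 - batch_discount)


# Assumed workload: 10,000 requests, 3,000 shared + 200 unique tokens each,
# at a hypothetical $1.00 per million input tokens.
workload = dict(requests=10_000, shared_tokens=3_000, unique_tokens=200,
                price_per_million=1.00)

baseline = monthly_input_cost(**workload)
with_caching = monthly_input_cost(**workload, cache_discount=0.90)
with_batching = monthly_input_cost(**workload, batch_discount=0.50)

print(f"baseline:      ${baseline:.2f}")
print(f"with caching:  ${with_caching:.2f}")
print(f"with batching: ${with_batching:.2f}")
```

Caching helps most when the shared context dwarfs the per-request text, which is why long system prompts and RAG contexts benefit so much. Whether the two discounts can be combined depends on the provider, so the sketch prices them separately.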

Self-Hosting vs. API: Finding the Break-Even Point

At some point, paying for every token feels like paying for every drop of water from a faucet. You might wonder: “Should I just buy the well?”

Self-hosting open-weight models like Llama 3.1 or Qwen 2.5 is becoming a viable option for businesses in Minnesota with high data volumes. When you host your own model, you pay for the hardware (the GPU) and the electricity, but the “tokens” are free.

Break-Even Volume Analysis

The magic number is usually around 1 billion tokens per month. If you are doing less than that, the convenience and low overhead of an API usually win (see the ultimate guide to running AI models via API).

However, if you are a high-volume agency, self-hosting can drop your costs from thousands of dollars to a few hundred. Plus, you get total data privacy. Tools like Ollama (with its local model library) and LM Studio (for offline AI) make it incredibly easy to test these models on your own machine before committing to a server cluster.
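
The break-even point is simple arithmetic once you fix two numbers: what the self-hosted setup costs per month regardless of usage, and what the API charges per million tokens. Both figures below are assumptions picked so the result lands near the 1 billion token mark; your own hardware, staffing, and API prices will move it.

```python
def break_even_tokens_per_month(fixed_monthly_cost: float,
                                api_price_per_million: float) -> float:
    """Monthly token volume at which a fixed-cost server matches the API bill."""
    if api_price_per_million <= 0:
        raise ValueError("api_price_per_million must be positive")
    return fixed_monthly_cost / api_price_per_million * 1_000_000


def cheaper_option(monthly_tokens: float, fixed_monthly_cost: float,
                   api_price_per_million: float) -> str:
    """Return 'self-host' or 'api', whichever is cheaper at this volume."""
    api_cost = monthly_tokens / 1_000_000 * api_price_per_million
    return "self-host" if fixed_monthly_cost < api_cost else "api"


# Assumed figures: $1,500/month for GPU hosting and upkeep,
# versus a blended API price of $1.50 per million tokens.
FIXED_COST = 1_500.0
API_PRICE = 1.50

threshold = break_even_tokens_per_month(FIXED_COST, API_PRICE)
print(f"break-even volume: {threshold:,.0f} tokens per month")

for volume in (200_000_000, 3_000_000_000):
    print(f"{volume:,} tokens -> {cheaper_option(volume, FIXED_COST, API_PRICE)}")
```

This treats self-hosted tokens as free at the margin, which is only true while the hardware has spare capacity; once you need a second server, the fixed cost steps up and the threshold moves with it.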

Hardware and Deployment Complexity

Don’t underestimate the “hidden” costs of self-hosting. You need engineers to maintain the servers, and GPUs like the H100 are expensive and hard to find. For most Minneapolis-based companies, we recommend starting with a “Managed Inference” provider like Groq or Together AI. They host the open-source models for you and charge a tiny fee per token, giving you the best of both worlds.

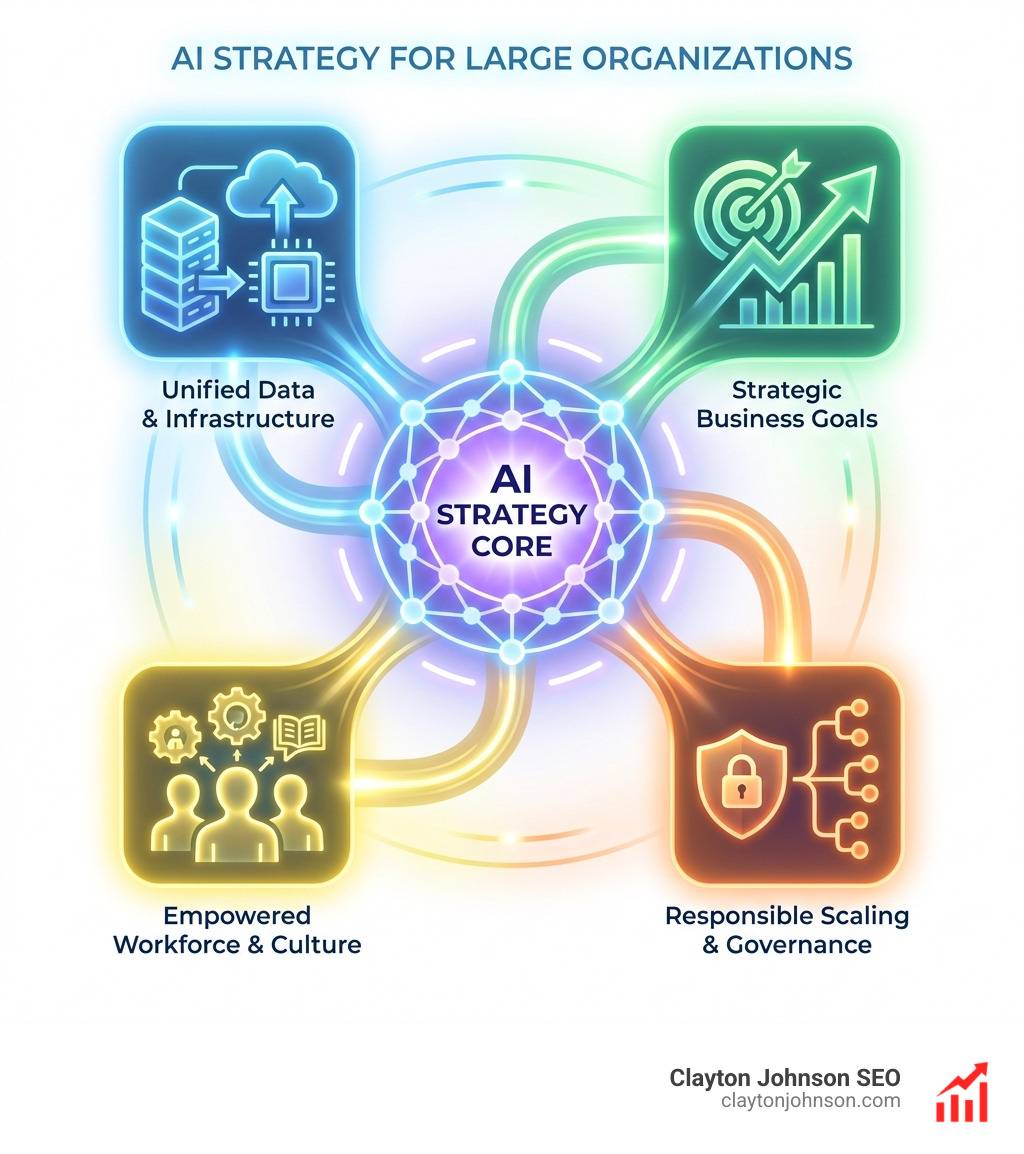

We’ve detailed these trade-offs in our guide on why you need an open source AI platform and AI infrastructure best practices for smart organizations.

Common Pitfalls in AI Model Selection

We see the same mistakes over and over again. The biggest one? The “GPT-4 for Everything” Mistake.

Using a frontier model to summarize a 200-word email is like using a Ferrari to drive across your driveway. It’s overkill, and it’s expensive.

The “GPT-4 for Everything” Mistake

Before you reach for an LLM, ask: “Can a regular computer program do this?”

- Classification: Can a list of keywords or a Regex pattern identify the topic?

- Data Extraction: Can a standard database lookup find the customer’s phone number?

- Formatting: Can a simple script change the date format?

If the answer is yes, the cost is $0. We call this “Tier 0” of the AI hierarchy. For a look at how we personally rank these models, see my completely subjective comparison of major AI models.
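
Here is what “Tier 0” can look like in practice: a minimal sketch of the three checks above using only the Python standard library. The keyword list, the in-memory `CUSTOMERS` dictionary (standing in for a database table), and the sample record are all invented for illustration.

```python
import re
from datetime import datetime
from typing import Optional

# Classification: a keyword pattern instead of a model call.
BILLING_PATTERN = re.compile(r"\b(invoice|refund|charge|billing)\b", re.IGNORECASE)


def classify_topic(message: str) -> str:
    """Label a message 'billing' if it contains a billing keyword, else 'other'."""
    return "billing" if BILLING_PATTERN.search(message) else "other"


# Data extraction: a plain lookup (this dict stands in for a database table).
CUSTOMERS = {"c-1001": {"name": "Ada", "phone": "555-0100"}}


def customer_phone(customer_id: str) -> Optional[str]:
    """Return the stored phone number, or None if the customer is unknown."""
    record = CUSTOMERS.get(customer_id)
    return record["phone"] if record else None


# Formatting: a date conversion with the standard library.
def reformat_date(us_date: str) -> str:
    """Convert MM/DD/YYYY to ISO YYYY-MM-DD."""
    return datetime.strptime(us_date, "%m/%d/%Y").strftime("%Y-%m-%d")


print(classify_topic("Please refund my last order"))
print(customer_phone("c-1001"))
print(reformat_date("03/07/2025"))
```

Anything these deterministic checks cannot handle can still fall through to a model, so the rules act as a free first filter, not a replacement for the whole stack.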

Monitoring and Continuous Re-evaluation

The AI world moves fast. A model that was the “value leader” last month might be overpriced today. We recommend a quarterly audit of your AI stack.

- Cost Attribution: Do you know which specific feature is costing you the most?

- Anomaly Detection: Did an “infinite loop” in your code just cost you $500 in one hour?

- Quarterly Audits: Is there a new “mini” model that can replace your current “medium” model?

Staying on top of the AI ecosystem is the only way to ensure your growth remains profitable.
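
The first two audit questions, cost attribution and anomaly detection, can be answered from a basic usage log. The sketch below assumes a log format we made up (one dictionary per request with `feature` and `cost_usd` keys) and uses a simple “several times the median hour” rule as the anomaly threshold; real monitoring tools offer more robust methods.

```python
from collections import defaultdict
from statistics import median


def cost_by_feature(usage_log: list) -> dict:
    """Sum spend per product feature from per-request log entries."""
    totals = defaultdict(float)
    for entry in usage_log:
        totals[entry["feature"]] += entry["cost_usd"]
    return dict(totals)


def spending_anomalies(hourly_costs: list, multiplier: float = 5.0) -> list:
    """Return indexes of hours whose spend exceeds multiplier x the median hour."""
    baseline = median(hourly_costs)
    return [hour for hour, cost in enumerate(hourly_costs)
            if cost > multiplier * baseline]


# Invented sample data.
usage_log = [
    {"feature": "chat", "cost_usd": 1.25},
    {"feature": "summarize", "cost_usd": 0.50},
    {"feature": "chat", "cost_usd": 0.75},
]
hourly_costs = [2.0, 2.5, 1.5, 500.0, 2.0]

per_feature = cost_by_feature(usage_log)
flagged_hours = spending_anomalies(hourly_costs)

print(per_feature)
print(f"hours to investigate: {flagged_hours}")
```

Using the median as the baseline keeps a single runaway hour from inflating the threshold, which is exactly the situation a runaway loop creates.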

Frequently Asked Questions about Cost-Effective LLMs

What are the best budget models under $0.10 per million tokens?

Currently, DeepSeek V3 and GPT-4o-mini are the heavyweight champions of budget AI. If you need extreme speed, Llama 3.1 8B running on Groq is nearly unbeatable for real-time applications; check Groq’s pricing and speed figures for current numbers.

How much can prompt caching actually save?

On platforms like Anthropic, prompt caching can save you up to 90% on input tokens. This is massive for RAG systems where you are searching through the same large “knowledge base” for every user query. You can see the full breakdown on the Anthropic pricing details page.

Should I use multiple providers in production?

Yes! We call this “Multi-LLM Redundancy.” Not only does it allow you to route tasks to the cheapest capable model, but it also protects you if one provider goes down. Platforms like Together AI give you access to dozens of different models through a single interface.
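
A minimal sketch of the fallback half of this pattern is shown below. The provider functions are hypothetical stubs (one always fails, one echoes the prompt) standing in for real API clients, and the list order is assumed to run from cheapest to most expensive.

```python
from typing import Callable, List, Tuple

Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary_stub(prompt: str) -> str:
    """Stub that simulates an outage at the cheapest provider."""
    raise ConnectionError("simulated outage")


def backup_stub(prompt: str) -> str:
    """Stub that simulates a healthy second provider."""
    return f"echo: {prompt}"


providers = [("primary", primary_stub), ("backup", backup_stub)]
result = complete_with_fallback("hi", providers)
print(result)
```

Ordering the list by price means the expensive provider is only billed when the cheaper ones are unavailable, so redundancy does not have to raise your normal running costs.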

Conclusion

At the end of the day, choosing cost-effective LLMs is about leverage. At Clayton Johnson SEO, we believe that AI should be a force multiplier for your business, not a drain on your resources.