Neural Search 101: How It Works and Why It Wins

How Neural Networks Search — And Why It Changes Everything

How neural networks search has become one of the central questions in modern information retrieval, and the answer is reshaping how people find information online.

Here’s the short answer:

- Encode — The system converts your query and all indexed content into numerical vectors (embeddings) using deep neural networks (DNNs).

- Match — Instead of matching keywords, it measures semantic similarity between your query vector and content vectors.

- Rank — A scoring layer (often a cross-encoder) re-ranks results by contextual relevance, not just keyword frequency.

- Return — The most semantically relevant results surface — even if they share zero words with your query.

This means a search for “drama exploring modern parenting challenges” can surface the right film without those exact words appearing anywhere in its description.

Traditional keyword search breaks down on queries like that. Neural search doesn’t.
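To make the failure concrete, here is a minimal keyword scorer that counts the distinct query terms found in a document. The film description is invented for illustration. Real keyword engines add stemming, stop-word handling, and weighting schemes such as BM25, but the core limitation holds: with no shared terms, the score is zero.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphabetic terms."""
    return set(re.findall(r"[a-z]+", text.lower()))

def keyword_score(query: str, document: str) -> int:
    """Count how many distinct query terms also appear in the document."""
    return len(tokenize(query) & tokenize(document))

# Hypothetical film description that shares no words with the query.
film = "A mother and father struggle to raise their teenage son in a digital age."
query = "drama exploring modern parenting challenges"

print(keyword_score(query, film))  # 0: no overlapping terms, so the film is never retrieved
```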

The gap matters more than most founders realize. Only 15% of B2C customers use site search — but that group drives 45% of online revenue. When your search returns the wrong results, you’re not just losing clicks. You’re losing conversions.
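Taken at face value, those two figures imply a large per-customer gap. If 15% of customers produce 45% of revenue, the other 85% produce the remaining 55%, so average revenue per customer in each group compares as follows:

```latex
\frac{\text{revenue per searcher}}{\text{revenue per non-searcher}}
= \frac{0.45 / 0.15}{0.55 / 0.85}
= \frac{3}{0.647}
\approx 4.6
```

In other words, the average site searcher is worth roughly 4.6 times as much as the average non-searcher, assuming both percentages describe the same customer base.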

I’m Clayton Johnson, an SEO and growth strategist who has spent years mapping how AI-powered systems — including neural search — reshape discoverability and demand architecture. Understanding how neural networks search is central to building content systems that compound rather than stagnate. In the sections below, I’ll break down exactly how the mechanism works and where it creates the most strategic leverage.


What is Neural Search?

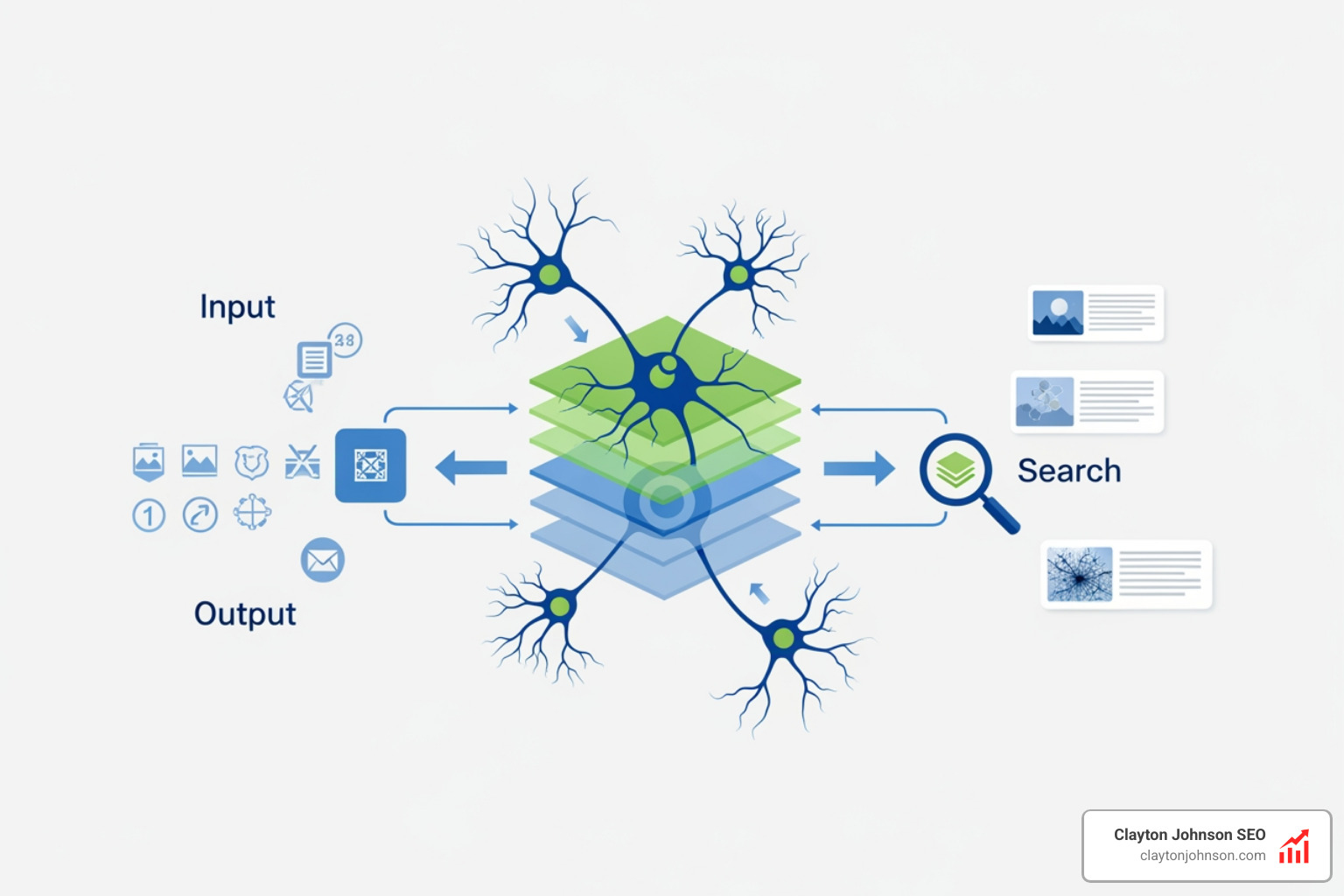

At its core, neural search is a method of information retrieval that uses Deep Neural Networks (DNNs) to handle the entire search process. Unlike traditional methods that rely on matching strings of text, neural search focuses on understanding the “why” and “what” behind a query.

By leveraging advanced AI models, these systems can interpret the contextual meaning of data. Think of it as moving from a librarian who only looks at book titles to one who has actually read every page and understands the themes within.

Neural search achieves this through vector embeddings. It takes unstructured data (a messy product description, a raw image, or a complex user query) and transforms it into a point in a high-dimensional vector space, where items with related meanings sit close together. This lets the system make human-like judgments about relevance. If you search for “something to keep me warm in a blizzard,” the neural network can infer that you need a “heavy-duty parka,” even if neither “warm” nor “blizzard” appears in the product title, because it has learned the relationship between extreme cold and protective gear.
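The idea of "closeness" between points can be made concrete with cosine similarity, a common way to compare embeddings. In this sketch the three-dimensional vectors are hand-written assumptions: a trained encoder would learn hundreds of dimensions, and the axis labels exist only to make the example readable.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings. Axis labels are for readability only:
# [cold, protection, summer]
query_vec = [0.9, 0.8, 0.0]  # "something to keep me warm in a blizzard"
catalog = {
    "heavy-duty parka": [0.8, 0.9, 0.1],
    "linen beach shirt": [0.0, 0.1, 0.9],
}

for title, vec in catalog.items():
    print(f"{title}: {cosine_similarity(query_vec, vec):.2f}")
```

The parka scores about 0.99 and the beach shirt about 0.07, even though neither title shares a word with the query.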

How Neural Networks Search: The Core Mechanism

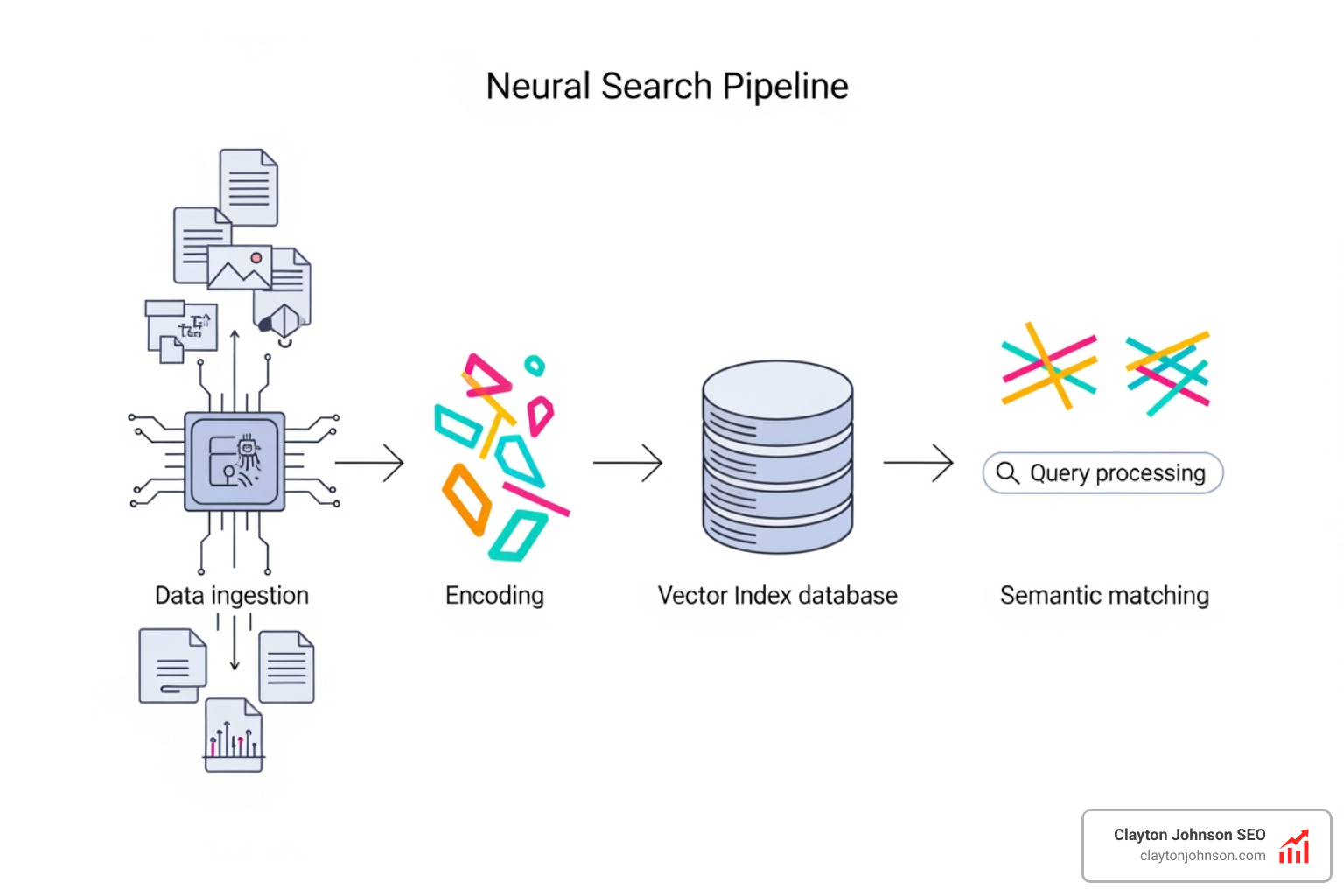

To understand how neural networks search, we have to look at the pipeline. It isn’t just a simple lookup; it’s a sophisticated translation process.

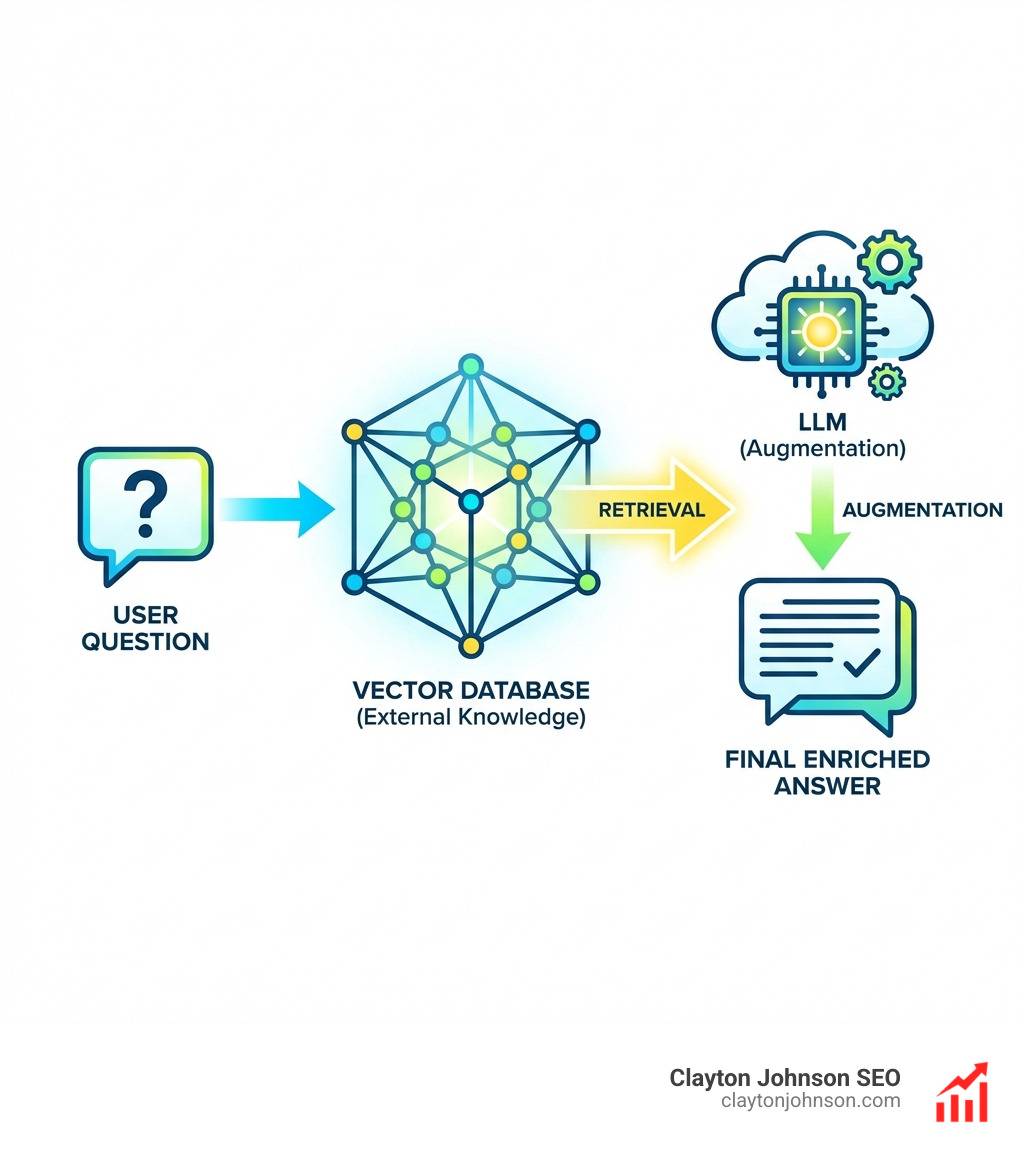

- Data Encoding: Every piece of content (text, image, or video) is passed through a neural network that “encodes” it. This creates a dense vector, a fixed-length list of numbers, that represents the semantic essence of the item.

- Indexing: These vectors are stored in a specialized index. Unlike a keyword index that lists where words appear, this index maps where meanings sit in a multi-dimensional space.

- Query Encoding: When a user types a query, that query is encoded by the same (or a compatible) neural network. This ensures the query and the data are “speaking the same language.”

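- Semantic Matching: The system calculates the similarity (or distance) between the query vector and the content vectors, typically with cosine similarity or a dot product. Because queries and content are encoded independently, content vectors can be computed ahead of time. Research on Dense Passage Retrieval shows that this “bi-encoder” approach allows for fast and accurate retrieval across massive datasets.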
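The four stages above map onto a small bi-encoder pipeline. This is a minimal sketch under stated assumptions: `encode` is a hypothetical stand-in that averages hand-written word vectors (a production system would call a trained neural encoder), and the "index" is a plain list scanned exhaustively rather than a specialized vector index.

```python
import heapq
import math

# Hypothetical word vectors standing in for a trained encoder's knowledge.
WORD_VECTORS = {
    "laptop": [0.9, 0.1, 0.0], "notebook": [0.8, 0.2, 0.0], "computer": [0.9, 0.0, 0.1],
    "battery": [0.3, 0.9, 0.0], "charger": [0.2, 0.8, 0.1], "power": [0.2, 0.9, 0.0],
    "tent": [0.0, 0.1, 0.9], "camping": [0.0, 0.0, 1.0], "outdoor": [0.1, 0.0, 0.8],
}

def encode(text: str) -> list[float]:
    """Stand-in encoder: average the known word vectors, then L2-normalize."""
    vectors = [WORD_VECTORS[w] for w in text.lower().split() if w in WORD_VECTORS]
    if not vectors:
        return [0.0, 0.0, 0.0]
    mean = [sum(col) / len(vectors) for col in zip(*vectors)]
    norm = math.sqrt(sum(x * x for x in mean))
    return [x / norm for x in mean]

# 1. Data encoding and 2. indexing: done once, offline, for every document.
documents = ["notebook computer", "portable power charger", "outdoor camping tent"]
index = [(doc, encode(doc)) for doc in documents]

def search(query: str, k: int = 2) -> list[tuple[float, str]]:
    # 3. Query encoding with the same encoder used for the documents.
    q = encode(query)
    # 4. Semantic matching: the dot product of unit vectors is their cosine similarity.
    scored = ((sum(a * b for a, b in zip(q, vec)), doc) for doc, vec in index)
    return heapq.nlargest(k, scored)

print(search("laptop battery"))
```

The query "laptop battery" shares no words with any document, yet the notebook and the charger come back ahead of the tent.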

How neural networks search using semantic matching

The real magic happens during the matching and ranking phase. While a bi-encoder is great for finding a broad set of candidates, neural search often employs cross-encoders for the final selection.

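A cross-encoder, such as Jina AI’s reranker, looks at the query and the potential result simultaneously. Instead of comparing two precomputed vectors, it reads the pair together and outputs a relevance score. That joint comparison is more accurate but too slow to run against every item in an index, which is why it is applied only to the shortlist the bi-encoder returns. This ranking step helps ensure that the results aren’t just “nearby” in a mathematical sense, but are truly contextually relevant to the user’s specific intent. It pushes down “false positives” that might share similar words but carry different meanings.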
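The two-stage pattern (cheap retrieval for recall, expensive pairwise scoring for precision) can be sketched as follows. Both scorers are hypothetical stand-ins: `first_stage_score` plays the order-blind bi-encoder, and `joint_score` imitates a cross-encoder only in the sense that it inspects the query and document together and cares about word order. A real reranker is a trained transformer, not a hand-written rule.

```python
from typing import Callable

Scorer = Callable[[str, str], float]

def tokens(text: str) -> list[str]:
    return text.lower().split()

def first_stage_score(query: str, doc: str) -> float:
    """Cheap, order-blind stand-in for the bi-encoder stage: shared-term count."""
    return float(len(set(tokens(query)) & set(tokens(doc))))

def joint_score(query: str, doc: str) -> float:
    """Hypothetical cross-encoder stand-in: counts query word pairs that
    appear adjacent and in the same order in the document."""
    q, d = tokens(query), tokens(doc)
    doc_pairs = set(zip(d, d[1:]))
    return float(sum(pair in doc_pairs for pair in zip(q, q[1:])))

def retrieve_then_rerank(query: str, corpus: list[str], retrieve: Scorer,
                         rerank: Scorer, shortlist_size: int = 3, k: int = 2) -> list[str]:
    # Stage 1: score every document cheaply and keep only a shortlist.
    ranked = sorted(corpus, key=lambda doc: retrieve(query, doc), reverse=True)
    shortlist = ranked[:shortlist_size]
    # Stage 2: run the expensive pairwise scorer on the shortlist only.
    return sorted(shortlist, key=lambda doc: rerank(query, doc), reverse=True)[:k]

corpus = [
    "man bites dog in city park",
    "dog bites man near school",
    "dog park opens downtown",
    "cat naps all day",
]
print(retrieve_then_rerank("dog bites man", corpus, first_stage_score, joint_score))
```

Stage 1 alone ties the first two documents, since both contain all three query words; the second stage promotes the one whose meaning actually matches the query.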

Why neural networks search more effectively for e-commerce

In e-commerce, how neural networks search is the difference between a sale and a bounce. Traditional search often fails on long-tail queries—those specific, conversational searches like “blue dress for a summer wedding that isn’t too formal.”

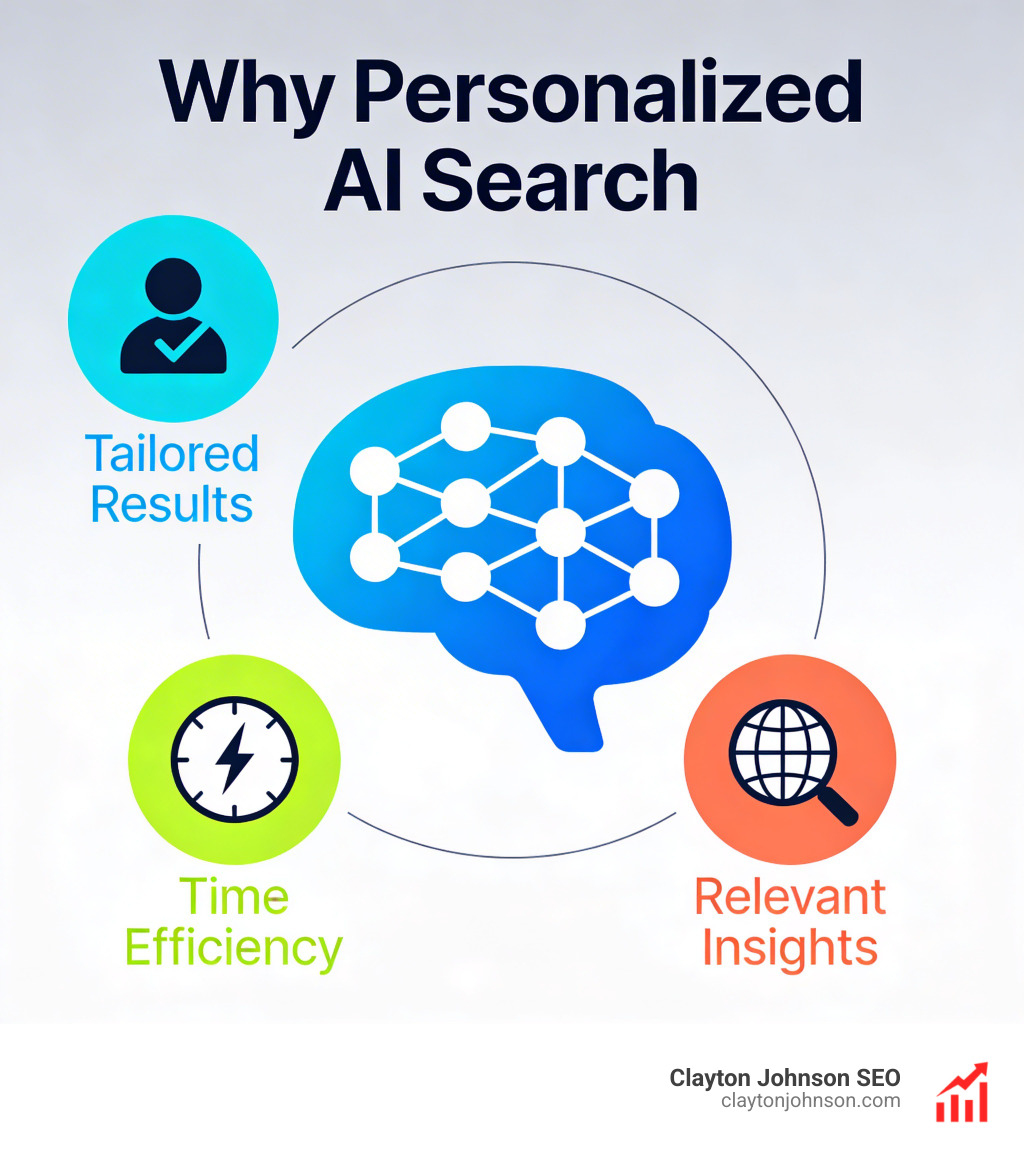

Neural search understands the user intent behind these phrases. According to Think With Google image statistics, 50% of online shoppers say images directly influence their purchasing decisions. Neural search also supports multimodal queries: a user can upload a photo of a dress they like and find similar items in your catalog based on style, pattern, and cut.

This level of personalization and accuracy directly impacts conversion rates. When users find exactly what they want on the first try, revenue growth follows. In fact, research shows that while only 15% of B2C customers use site search, they drive nearly half of the total revenue. Making that search “smarter” is high-leverage growth architecture.

Neural Search vs. Traditional Keyword and Vector Methods

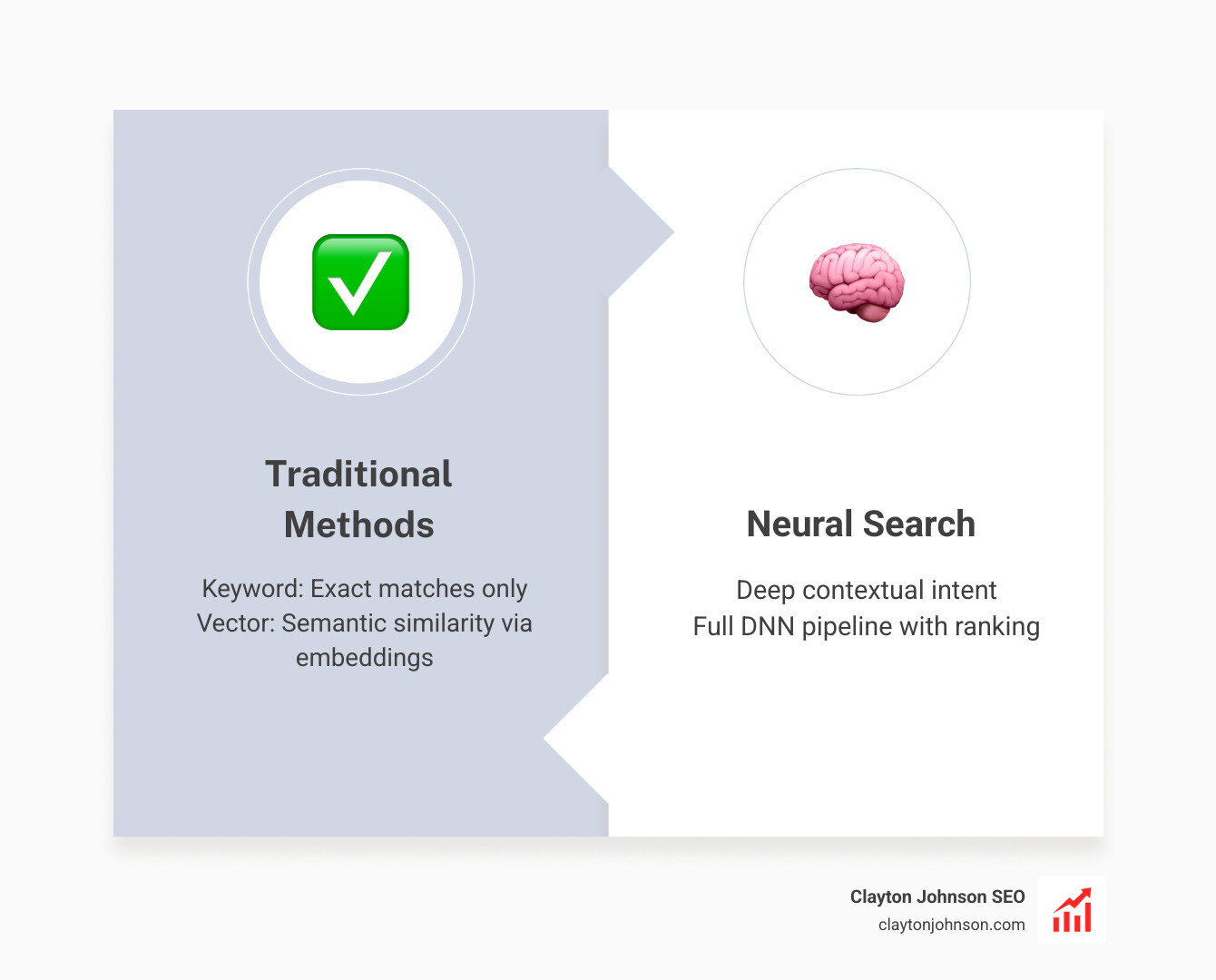

| Feature | Keyword Search | Vector Search | Neural Search |
|---|---|---|---|
| Primary Mechanism | Exact token matching | Mathematical embeddings | Full DNN pipeline |
| Understanding | Zero (literal only) | Semantic similarity | Deep contextual intent |
| Data Types | Text only | Multimodal (mostly) | True Multimodal |
| Maintenance | High (manual synonyms) | Moderate | Low (self-learning) |

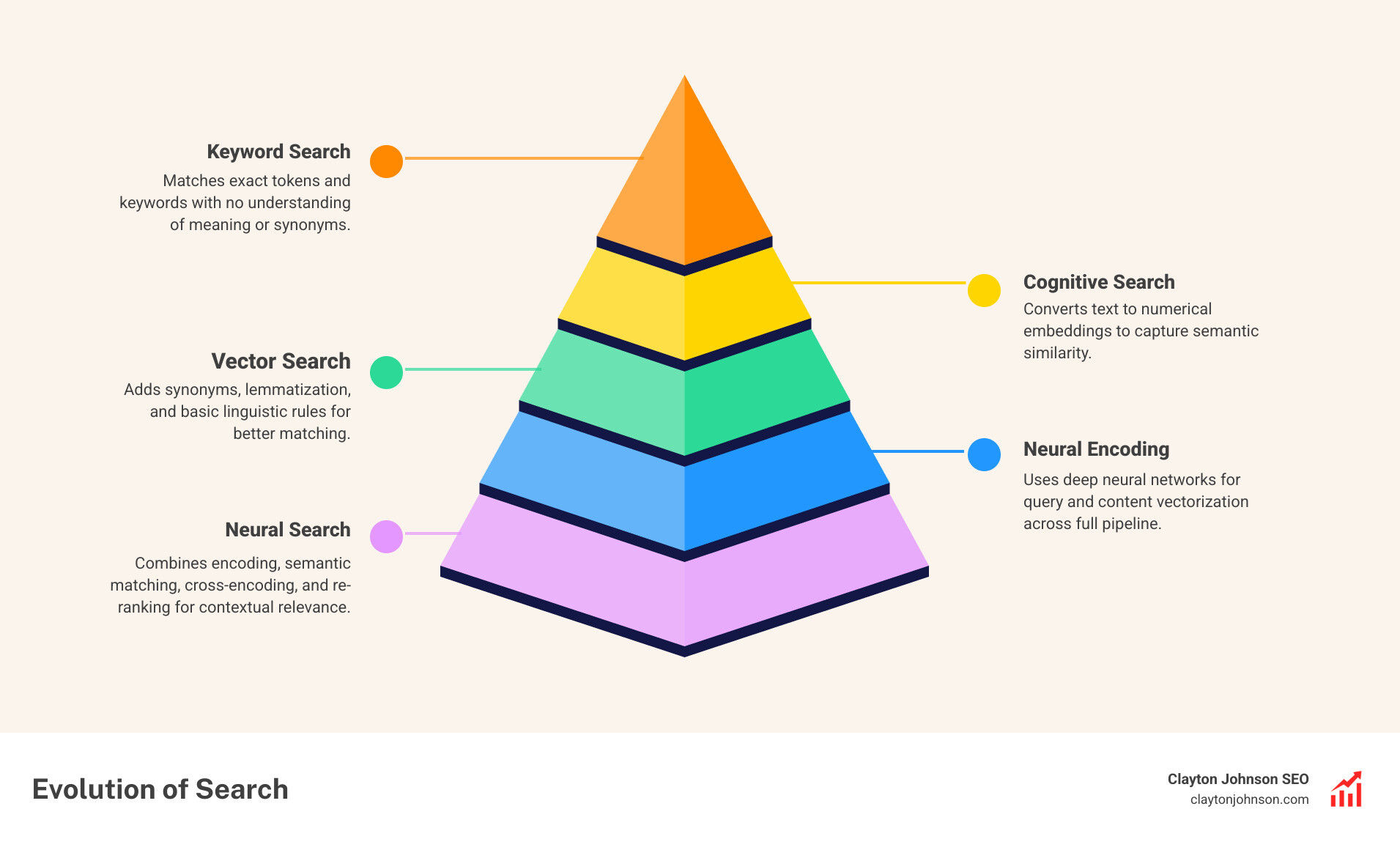

We often see founders confused by these terms. Let’s clarify. Keyword search is what we’re all used to—it’s fast but “dumb.” If you don’t use the exact word, you don’t get the result.

Vector search was a major leap forward: it represents content as embeddings so that related items sit close together in a vector space. However, “pure” vector search usually relies on separate, non-neural algorithms (like Approximate Nearest Neighbor, or ANN, search) to find the closest vectors quickly.
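Approximate Nearest Neighbor search trades a little accuracy for a lot of speed by not comparing the query against every stored vector. One classic family of ANN methods, shown here purely as an illustration rather than as what any particular vector database uses, is locality-sensitive hashing with random hyperplanes: vectors pointing in similar directions tend to land in the same bucket, so a query is compared only against its own bucket. The class and the random 16-dimensional "embeddings" are hypothetical.

```python
import math
import random
from collections import defaultdict

Vector = list[float]

def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

class RandomHyperplaneLSH:
    """Approximate nearest-neighbor index using random-hyperplane hashing."""

    def __init__(self, dim: int, n_planes: int = 6, seed: int = 0):
        rng = random.Random(seed)
        # Each hyperplane (given by a Gaussian normal vector) splits space in two.
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_planes)]
        self.buckets: dict[tuple[int, ...], list[tuple[str, Vector]]] = defaultdict(list)

    def _key(self, vec: Vector) -> tuple[int, ...]:
        # One bit per plane: which side of that plane the vector falls on.
        return tuple(int(sum(v * p for v, p in zip(vec, plane)) >= 0) for plane in self.planes)

    def add(self, item_id: str, vec: Vector) -> None:
        self.buckets[self._key(vec)].append((item_id, vec))

    def query(self, vec: Vector, k: int = 3) -> list[str]:
        # Only the query's own bucket is searched, so true neighbors that hashed
        # elsewhere can be missed: that is the "approximate" part.
        candidates = self.buckets.get(self._key(vec), [])
        ranked = sorted(candidates, key=lambda item: cosine(vec, item[1]), reverse=True)
        return [item_id for item_id, _ in ranked[:k]]

# Usage with random stand-in embeddings.
data_rng = random.Random(42)
vectors = {f"item-{i}": [data_rng.gauss(0.0, 1.0) for _ in range(16)] for i in range(1000)}
index = RandomHyperplaneLSH(dim=16)
for item_id, vec in vectors.items():
    index.add(item_id, vec)
print(index.query(vectors["item-7"], k=3))
```

With 6 planes there are at most 64 buckets, so each query scans roughly 1/64 of the data. Production systems typically add more hash tables or use other structures, such as graph-based HNSW indexes, to recover neighbors a single table misses.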

Neural search is the most advanced. It uses deep neural networks throughout the pipeline, from encoding to reranking. It doesn’t just find “related” things; it understands semantic nuances. It’s the difference between “cognitive search,” which might use a dictionary to find synonyms, and a neural system that learns from data, without being told, that “connection issues” and “session timeouts” often describe the same problem. Many modern systems use a hybrid search approach, combining the precision of keyword matching with the semantic understanding of neural networks to get the best of both worlds.
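One common way to implement the hybrid approach is Reciprocal Rank Fusion (RRF), which merges a keyword ranking and a neural ranking using only each document's position in each list. RRF is named here as one illustrative fusion method, not as what any specific product uses; the constant `k = 60` is the default suggested in the original RRF paper, and both input rankings are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Score each document by the sum of 1 / (k + rank) over every ranking it appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical result lists for the query "session timeouts".
keyword_ranking = ["doc-session-timeout", "doc-login-page", "doc-pricing"]
neural_ranking = ["doc-connection-issues", "doc-session-timeout", "doc-vpn-setup"]

print(reciprocal_rank_fusion([keyword_ranking, neural_ranking]))
```

The document both retrievers agree on rises to the top, and the neural-only match ("connection issues") still makes the fused list. Because RRF uses ranks rather than raw scores, it sidesteps the fact that keyword scores and embedding similarities live on different, incomparable scales.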

Real-World Applications: From E-commerce to Healthcare

Neural search isn’t just theoretical; it’s already powering the tools we use daily.

- Google Shopping: Google uses generative AI and neural search to summarize key product factors and provide personalized recommendations that go far beyond simple filters.

- Healthcare Transformation: Companies like Deep6 AI use neural search to analyze patient histories and genomic data. This helps identify clinical trial candidates in minutes instead of months.

- Multimodal Image Search: Industrial companies use search engines powered by convolutional neural networks (CNNs) to let workers snap a photo of a part and instantly find its manual and stock levels in a massive database.

- ML Model Retrieval: Platforms like Hugging Face use hybrid systems to help developers find the right machine learning model among thousands by understanding the context of the developer’s project.

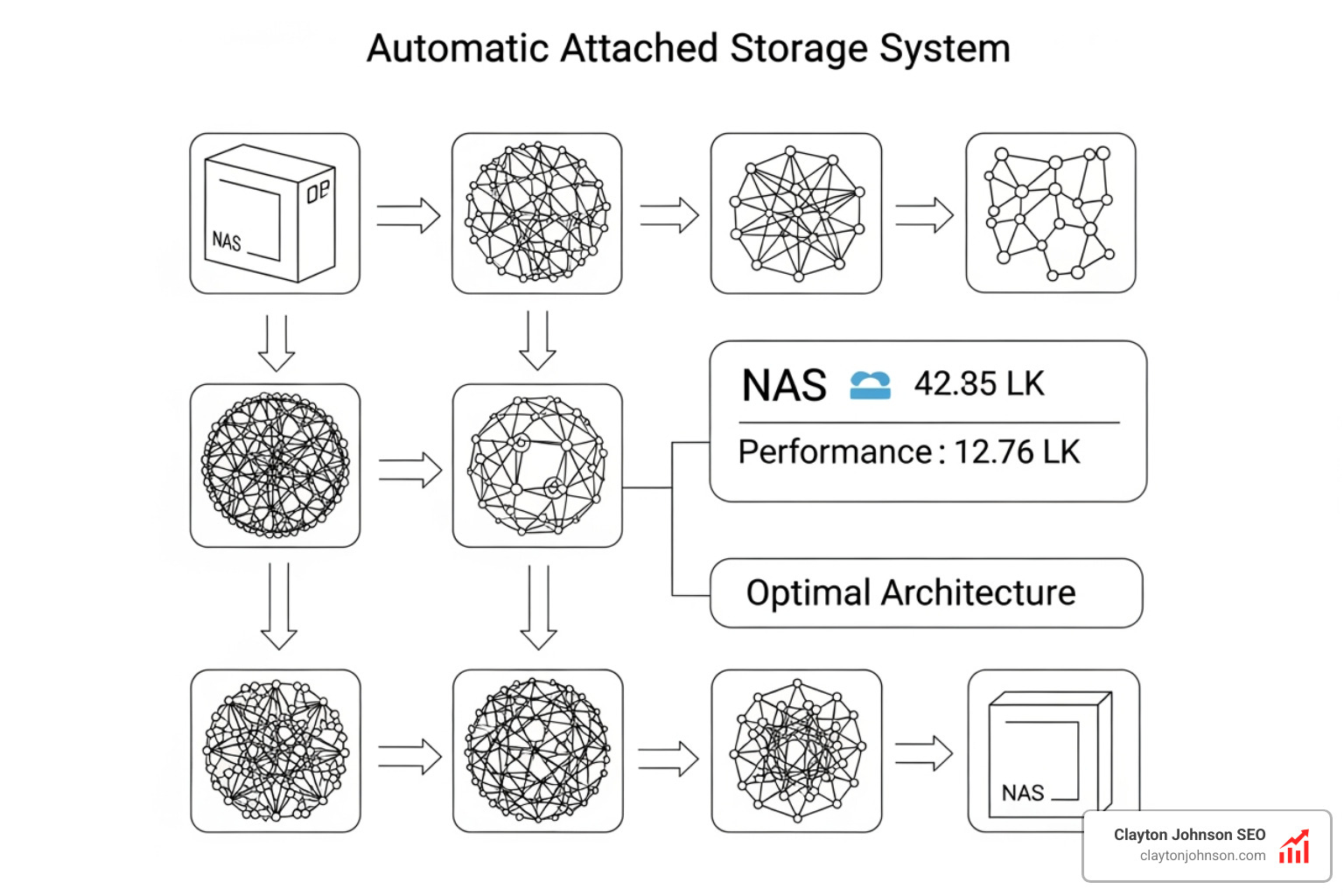

Neural Architecture Search (NAS) vs. Neural Search

It’s easy to get these two confused because they share a name, but they serve very different purposes.

Neural search is about finding information using a network.

Neural Architecture Search (NAS) is about finding the network itself.

NAS is a form of automated design. Instead of a human engineer spending weeks testing different layers and nodes, NAS uses algorithms (often Reinforcement Learning) to automatically discover the most efficient model design.
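The loop at the heart of NAS (define a search space, propose candidate architectures, evaluate them, keep the best) fits in a few lines. This sketch swaps in random search, a simple and widely used NAS baseline, instead of reinforcement learning. The search space and the `proxy_score` function are made-up assumptions: a real system would train each candidate (or a cheap approximation of it) and measure accuracy and latency.

```python
import math
import random

# Hypothetical search space: an architecture is one choice per dimension.
SEARCH_SPACE: dict[str, list[int]] = {
    "depth": [2, 4, 8, 16],       # number of layers
    "width": [32, 64, 128, 256],  # units per layer
    "kernel": [3, 5, 7],          # convolution kernel size
}

def sample_architecture(rng: random.Random) -> dict[str, int]:
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}

def proxy_score(arch: dict[str, int]) -> float:
    """Made-up stand-in for 'train the candidate and measure it': rewards
    capacity with diminishing returns and subtracts a compute-cost penalty."""
    capacity = math.log2(arch["depth"] * arch["width"] * arch["kernel"])
    cost = arch["depth"] * arch["width"] * arch["kernel"] ** 2 / 10_000
    return capacity - cost

def random_search(trials: int, seed: int = 0):
    """Sample candidates, evaluate each one, and return the best found."""
    rng = random.Random(seed)
    history = []
    for _ in range(trials):
        arch = sample_architecture(rng)
        history.append((arch, proxy_score(arch)))
    best_arch, best_score = max(history, key=lambda pair: pair[1])
    return best_arch, best_score, history

best, score, history = random_search(trials=30)
print(best, round(score, 2))
```

Reinforcement-learning, evolutionary, and gradient-based NAS methods replace `sample_architecture` with a strategy that learns from earlier scores, but the propose-evaluate-keep loop stays the same.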

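Scientific research on Neural Architecture Search has led to breakthroughs like NASNet and EfficientNet, whose baseline architecture was discovered by automated search rather than designed by hand. These models set state-of-the-art accuracy-to-efficiency results on their benchmarks when they were published, largely because the search could explore far more design options than a human team could test manually. While NAS is a “meta-learning” tool for developers rather than a retrieval method, it can help produce the efficient models that search systems build on.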

The Future of AI-Powered Information Retrieval

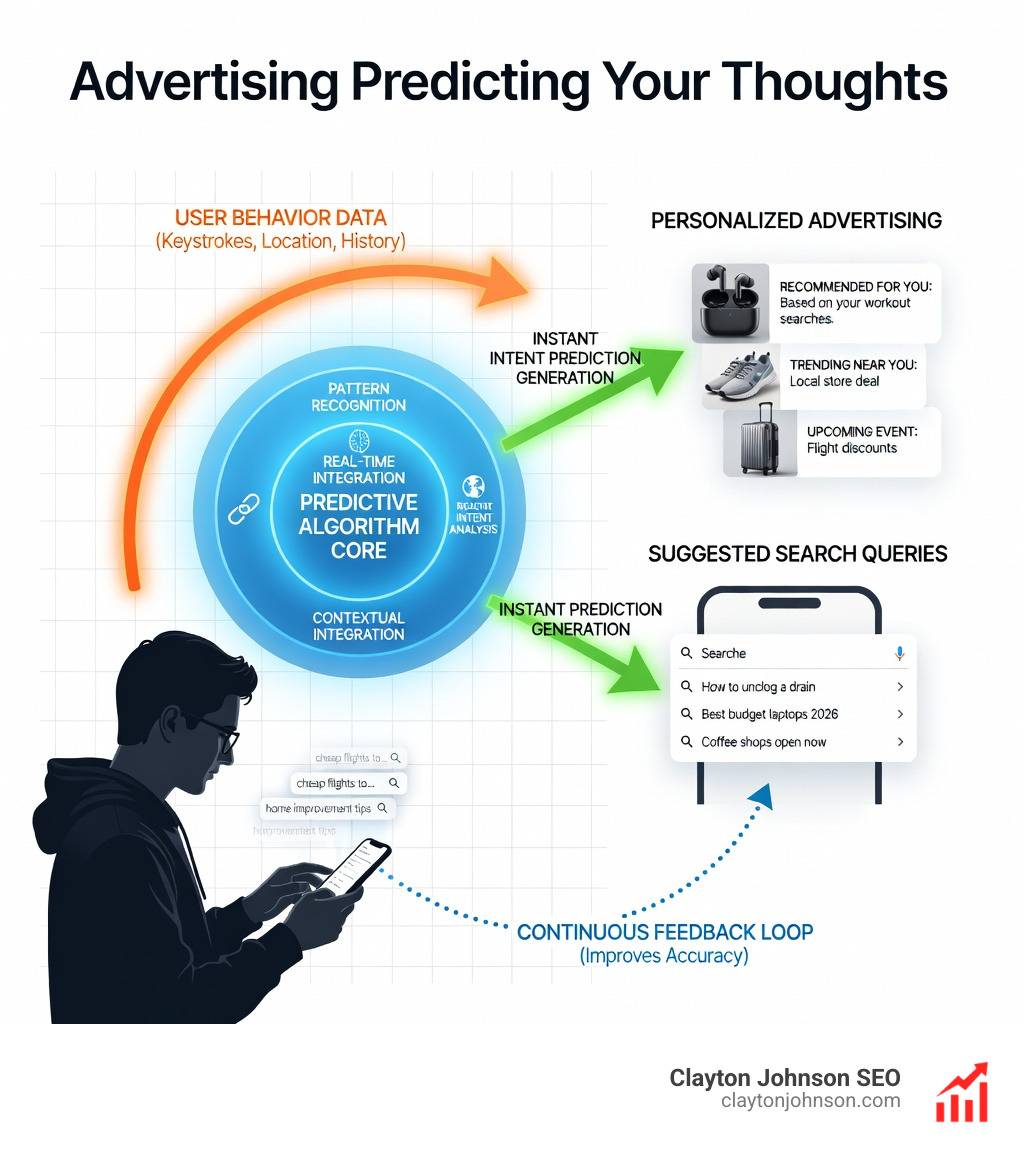

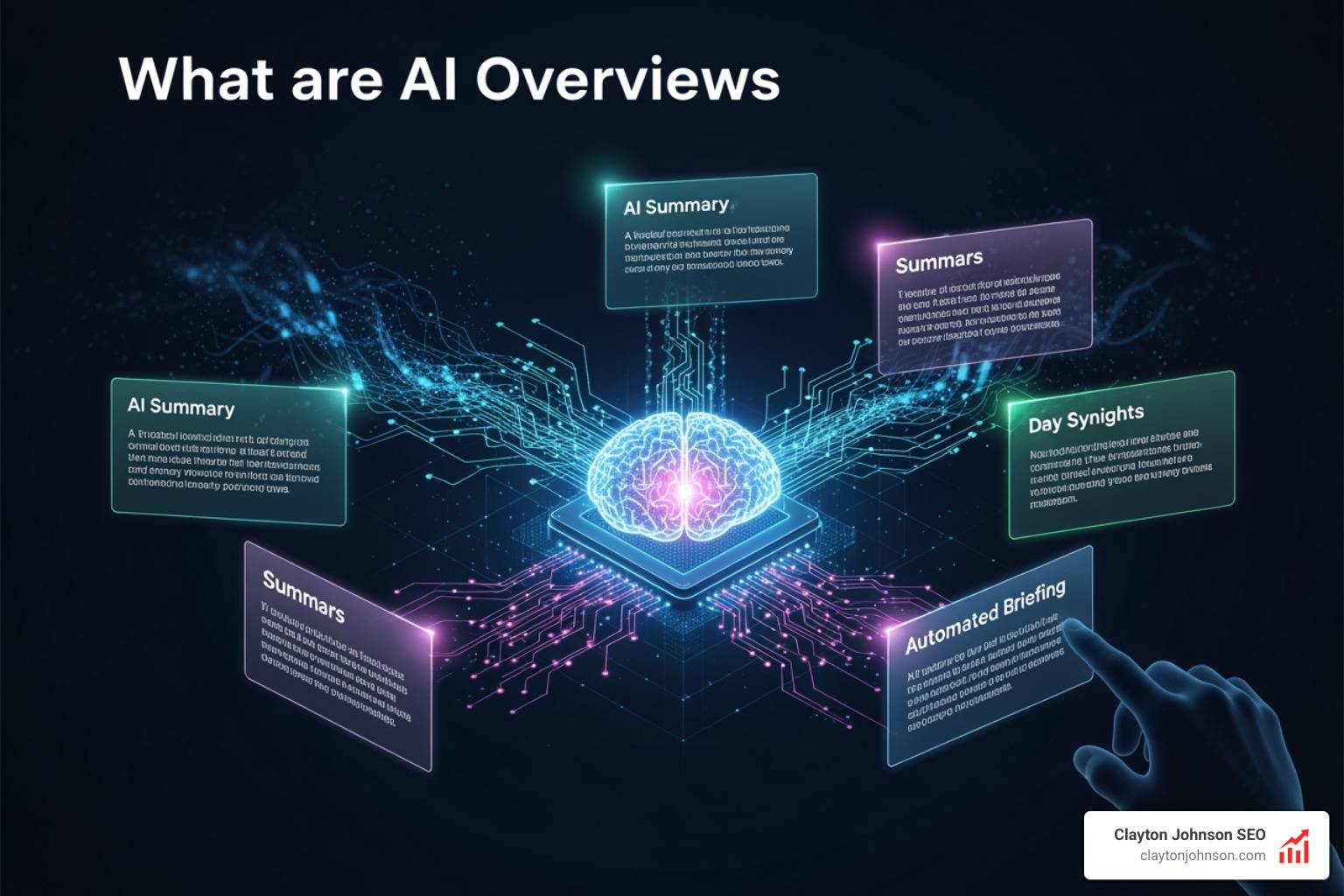

The future of how neural networks search is moving toward end-to-end discovery. Researchers are also exploring whether neural networks can learn their own data structures and algorithms instead of relying only on hand-designed ones.

Imagine a search engine that doesn’t just use a pre-built index but invents the most efficient way to store and retrieve your specific company data. This is called algorithmic discovery. When paired with Generative AI, search moves from “finding a document” to “synthesizing an answer.”
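A concrete, far more modest research thread in this direction is the learned index: instead of a hand-built structure such as a B-tree, a model learns where each key sits in sorted data. The sketch below fits a simple linear regression (not a neural network) to predict a key's position, records the model's worst-case error, and then searches only inside that error window. The `LearnedIndex` class is hypothetical and illustrates the idea only; it is not how any production search engine stores data.

```python
import bisect
from typing import Optional

class LearnedIndex:
    """Toy learned index: a linear model predicts where a key sits in sorted
    data, and a recorded error bound limits how far a lookup must search."""

    def __init__(self, keys: list[float]):
        if not keys:
            raise ValueError("keys must be non-empty")
        self.keys = sorted(keys)
        n = len(self.keys)
        # Fit position = slope * key + intercept by ordinary least squares.
        mean_k = sum(self.keys) / n
        mean_p = (n - 1) / 2
        var_k = sum((k - mean_k) ** 2 for k in self.keys)
        cov = sum((k - mean_k) * (p - mean_p) for p, k in enumerate(self.keys))
        self.slope = cov / var_k if var_k else 0.0
        self.intercept = mean_p - self.slope * mean_k
        # The worst miss on the stored keys bounds every later lookup window.
        self.max_error = max(abs(self._predict(k) - p) for p, k in enumerate(self.keys))

    def _predict(self, key: float) -> int:
        return round(self.slope * key + self.intercept)

    def lookup(self, key: float) -> Optional[int]:
        """Return the position of `key`, or None if it is not stored."""
        guess = self._predict(key)
        lo = max(0, guess - self.max_error)
        hi = min(len(self.keys), guess + self.max_error + 1)
        i = bisect.bisect_left(self.keys, key, lo, hi)
        return i if i < hi and self.keys[i] == key else None

# Usage: squared keys are nonlinear, so the model's error (and window) is nonzero.
keys = [x * x for x in range(1000)]
index = LearnedIndex(keys)
print(index.max_error, index.lookup(144), index.lookup(145))
```

Each lookup touches at most `2 * max_error + 1` slots, and the better the model fits the data's distribution, the smaller that window gets; the original research proposal uses a hierarchy of models rather than a single line.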

Continuous learning will also play a massive role. Future search systems won’t just be trained once; they are likely to use reinforcement learning to improve every time a user clicks (or doesn’t click) on a result. The system becomes a living “growth architecture” that gets smarter the more it’s used.
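A minimal version of learning from clicks is a multi-armed bandit, one of the simplest reinforcement-learning settings. In this sketch, all names and click probabilities are hypothetical: an epsilon-greedy policy decides which of several results to show first for one query, usually picking the result with the best observed click-through rate but occasionally exploring another.

```python
import random

class EpsilonGreedyRanker:
    """Learns which result to show first for one query from click feedback."""

    def __init__(self, results: list[str], epsilon: float = 0.1, seed: int = 0):
        self.results = results
        self.epsilon = epsilon  # fraction of traffic spent exploring
        self.shows = {r: 0 for r in results}
        self.clicks = {r: 0 for r in results}
        self.rng = random.Random(seed)

    def click_rate(self, result: str) -> float:
        shows = self.shows[result]
        return self.clicks[result] / shows if shows else 0.0

    def choose_top_result(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.results)       # explore: try any result
        return max(self.results, key=self.click_rate)  # exploit: best so far

    def record(self, result: str, clicked: bool) -> None:
        self.shows[result] += 1
        self.clicks[result] += int(clicked)

# Simulated users with hidden, made-up click probabilities per result.
true_ctr = {"guide-a": 0.05, "guide-b": 0.30, "guide-c": 0.10}
ranker = EpsilonGreedyRanker(list(true_ctr))
user_rng = random.Random(1)
for _ in range(5000):
    shown = ranker.choose_top_result()
    ranker.record(shown, clicked=user_rng.random() < true_ctr[shown])

print(max(ranker.results, key=ranker.click_rate), ranker.shows)
```

Real systems must also correct for position bias: users click top-ranked results more often regardless of quality, so raw clicks overstate the value of whatever is already shown first.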

Frequently Asked Questions about Neural Search

What is the difference between neural search and vector search?

While vector search uses mathematical embeddings to find similarity, neural search uses Deep Neural Networks for the entire pipeline, including the final ranking and reranking. Neural search is generally more “intelligent” regarding context, while pure vector search is often focused on mathematical distance.

How does neural search improve e-commerce revenue?

By understanding user intent and supporting multimodal inputs (like images), neural search reduces “null results.” When users find what they need quickly—even with vague or long-tail queries—bounce rates drop and conversion rates climb. Since site searchers often drive 45% of revenue, even a small improvement in accuracy has a massive impact on the bottom line.

What are the computational costs of neural networks?

They are significant. DNNs require substantial processing power, usually supplied by GPUs, a market in which NVIDIA holds a 90% share. High-end GPUs can cost over $2,500 each, and AI engineers command salaries 30% higher than traditional roles. However, for most businesses, the “cost” is managed by using optimized models or third-party API providers that handle the heavy lifting.
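To give a sense of scale, the memory needed just to hold the embeddings follows from simple arithmetic. The figures are illustrative assumptions, not numbers from any particular deployment: N = 10 million documents, d = 768 dimensions (a common size for BERT-base-style encoders), and b = 4 bytes per 32-bit float.

```latex
\text{index size} = N \times d \times b
= 10^{7} \times 768 \times 4\ \text{bytes}
= 3.072 \times 10^{10}\ \text{bytes}
\approx 30.7\ \text{GB}
```

That figure excludes any ANN index overhead; techniques such as vector quantization exist largely to shrink it.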

Conclusion

Understanding how neural networks search is no longer just for data scientists—it’s a requirement for any founder or marketing leader building a structured growth architecture.

At Clayton Johnson and Demandflow.ai, we believe that tactics aren’t enough. You need infrastructure. By integrating neural search principles into your authority-building ecosystems, you move from chasing keywords to owning the semantic space. This is how you build AI-enhanced execution systems that don’t just work today but compound over time.

Ready to evolve your search strategy? It’s time to leverage AI search for compounding growth and turn your information retrieval into a competitive advantage.