A Comprehensive List of Current AI Governance Standards

Why AI Governance Standards Matter for Every Organization Using AI

AI governance standards are the frameworks, principles, and regulations that guide how artificial intelligence systems are developed, deployed, monitored, and held accountable.

Here is a quick overview of the most widely recognized AI governance standards today:

| Standard / Framework | Origin | Binding? | Key Focus |
|---|---|---|---|
| NIST AI Risk Management Framework (AI RMF) | United States | Voluntary | Risk management across the AI lifecycle |
| EU AI Act | European Union | Legally binding | Risk-based classification of AI systems |
| OECD AI Principles | International (47 countries) | Non-binding | Human-centric, trustworthy AI development |
| UNESCO AI Ethics Framework | Global (UN member states) | Voluntary | First global AI ethics standard |
| G7 Code of Conduct for Advanced AI | G7 Nations | Voluntary | Foundation models and generative AI |
| UK Pro-Innovation AI Framework | United Kingdom | Non-statutory | Flexible, context-driven governance |
| Canada AI and Data Governance Standardization Collaborative | Canada | Voluntary | Standardization for domestic and global markets |

AI is moving fast. Very fast.

And most organizations are deploying AI systems without a clear governance structure in place. That creates real risk — legal, ethical, reputational, and operational.

The challenge is that the landscape of AI governance is fragmented. There are international principles, regional regulations, voluntary frameworks, and sector-specific standards — all evolving simultaneously.

As NIST puts it, the goal of AI governance is to “better manage risks to individuals, organizations, and society associated with artificial intelligence.” That is a simple statement. But executing it across a real organization, with real AI systems, is anything but simple.

The stakes are high across every industry — from healthcare and finance to transportation and public services. And as the EU AI Act demonstrates, governance is increasingly becoming a legal requirement, not just a best practice.

I’m Clayton Johnson, an SEO strategist and systems thinker who works at the intersection of AI, structured content architecture, and scalable growth frameworks. Tracking the evolution of AI governance standards is central to how I advise organizations building AI-integrated operations. This guide breaks down every major framework you need to know, in plain language.

AI governance standards terms simplified:

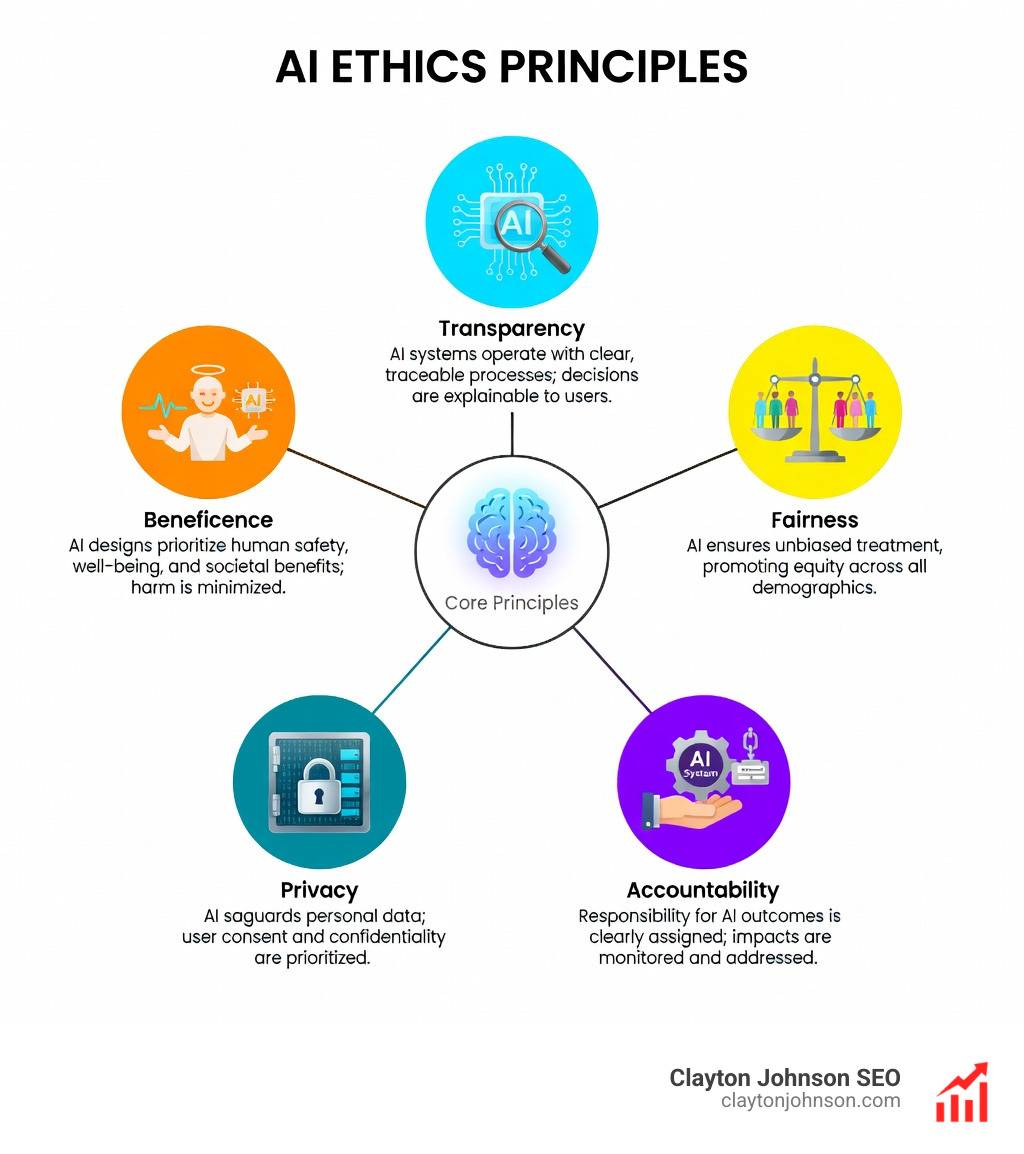

Core Principles of Global AI Governance Standards

Before we dive into the specific “rulebooks,” we need to understand the underlying values. Most global AI governance standards are built upon a shared set of core principles. Think of these as the “commandments” for robots (and the humans who build them).

1. Human Oversight

AI shouldn’t run on autopilot without a “kill switch.” Standards like the EU AI Act and the NIST AI RMF emphasize that humans must maintain meaningful control. This ensures that if a model starts hallucinating or making biased loan approvals, a person can intervene.

2. Transparency and Explainability

If an AI denies your mortgage application, you deserve to know why. Transparency means being open about how a system was built, while explainability focuses on making the “black box” of AI logic understandable to non-technical users.

3. Accountability

Who is responsible when an AI makes a mistake? Accountability ensures there is a clear chain of command. Organizations must define who owns the AI system’s outcomes, from the data scientists to the C-suite.

4. Safety and Robustness

AI systems must be secure and resilient against attacks (like prompt injection) and function reliably under stress. This is especially critical in “high-stakes” sectors like healthcare.

5. Fairness and Non-Discrimination

Algorithms can inherit human biases. Governance standards require proactive testing to ensure AI doesn’t discriminate based on race, gender, or age. Building a responsible AI framework requires setting these fairness metrics before a single line of code is deployed.
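To make “setting fairness metrics” concrete, here is a minimal sketch of two commonly used checks: the gap in approval rates between groups and the ratio of the lowest rate to the highest. The group labels, sample data, and function names are illustrative assumptions, not something any of the standards above prescribes.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the approval rate per group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. Group labels are illustrative.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group approval rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical loan decisions: group A approved 8 of 10, group B 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = selection_rates(sample)
gap = demographic_parity_difference(rates)
ratio = disparate_impact_ratio(rates)
```

Which threshold counts as acceptable is a policy decision for your review board; the code only produces the numbers to compare against it.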

6. Privacy and Data Protection

AI is fueled by data. Standards mandate that this data is collected ethically and stored securely, often aligning with existing laws like GDPR.

7. Proportionality

Not every AI needs the same level of red tape. A chatbot that summarizes internal meeting notes requires less oversight than an AI used for surgical assistance. Governance should be “risk-based”—matching the level of control to the potential for harm.

| Principle | NIST AI RMF Focus | EU AI Act Focus | OECD Principles Focus |
|---|---|---|---|
| Human Agency | Human-AI configuration risks | Mandatory for high-risk systems | Human-centric values |
| Transparency | Documentation and metadata | Disclosure of AI-generated content | Openness about datasets |
| Safety | Resilience and security | Prohibiting “unacceptable” risks | Robustness across lifecycle |
| Fairness | Bias measurement and mitigation | Algorithmic discrimination audits | Inclusive growth and equity |

Major International AI Governance Frameworks

Because AI doesn’t stop at national borders, international bodies have stepped in to create “connective tissue” between different countries’ rules.

OECD AI Principles

The OECD AI Principles represent the first intergovernmental standard for trustworthy AI. Adopted by 47 adherents (including nearly all of Europe and North America), these principles focus on inclusive growth, human-centric values, and accountability. In May 2024, they were updated to specifically address the rise of generative AI and misinformation.

UNESCO Ethics Framework

The UNESCO framework is the first global standard on AI ethics voluntarily adopted by UN member states. It places a unique emphasis on environmental sustainability and gender equality—areas often overlooked by more technical frameworks.

G7 Code of Conduct

Established by the world’s largest economies, the G7 Code of Conduct provides voluntary best practices for developers of foundation models. It focuses on “frontier AI”—the most powerful systems that pose the greatest potential risks to global security.

For more on how these high-level principles translate to corporate policy, check out our Enterprise AI strategy 101.

Regional and National Regulatory Standards

While international frameworks provide the “vibe,” regional laws provide the “teeth.”

The EU AI Act: The Gold Standard

The European Union’s AI Act is the world’s first comprehensive, legally binding AI law. It uses a tiered, risk-based system:

- Unacceptable Risk: Banned (e.g., social scoring by governments).

- High Risk: Strict controls (e.g., AI in recruitment or healthcare).

- Limited/Minimal Risk: Light or no added obligations (e.g., transparency notices for chatbots; no new requirements for spam filters).
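One way to bring the tiers above into an internal intake process is a simple lookup, sketched below. The use-case labels, the action wording, and the choice to treat unknown use cases as high risk until reviewed are all assumptions for illustration; real classification under the EU AI Act needs legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED_OR_MINIMAL = "limited_or_minimal"


# Hypothetical internal use-case labels, based on the examples in the list.
USE_CASE_TIERS = {
    "government_social_scoring": RiskTier.UNACCEPTABLE,
    "recruitment_screening": RiskTier.HIGH,
    "healthcare_support": RiskTier.HIGH,
    "spam_filter": RiskTier.LIMITED_OR_MINIMAL,
}

# Illustrative internal actions per tier, not legal text.
TIER_ACTIONS = {
    RiskTier.UNACCEPTABLE: "do not deploy",
    RiskTier.HIGH: "strict controls and review before deployment",
    RiskTier.LIMITED_OR_MINIMAL: "light-touch: transparency notice where applicable",
}


def triage(use_case):
    """Return (tier, action). Unknown use cases default to HIGH pending review."""
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return tier, TIER_ACTIONS[tier]


tier, action = triage("recruitment_screening")
```

Defaulting unknown use cases to the high tier is a conservative design choice: nothing slips through simply because nobody has classified it yet.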

NIST AI Risk Management Framework (United States)

The NIST AI Risk Management Framework (AI RMF) is the primary standard in the U.S. While voluntary, it is widely adopted by enterprises because of its practical, four-function structure: Govern, Map, Measure, and Manage. You can download the AI RMF 1.0 to see the full technical breakdown.

Canada’s Standardization Collaborative

Canada is taking a unique approach through the AI and Data Governance Standardization Collaborative. This initiative focuses on helping small-to-medium enterprises (SMEs) scale their AI responsibly while ensuring indigenous leadership is represented in the digital economy.

U.S. State-Level Regulation

In the absence of a single federal law, states like Colorado and California are leading the way.

- Colorado AI Act (CAIA): Prohibits algorithmic discrimination in high-risk systems like housing and insurance.

- California Automated Decision Systems Accountability Act: Seeks transparency for AI used in consequential employment decisions.

Implementing these rules is part of scaling AI for modern organizations. It’s about building the infrastructure for growth before the regulators come knocking.

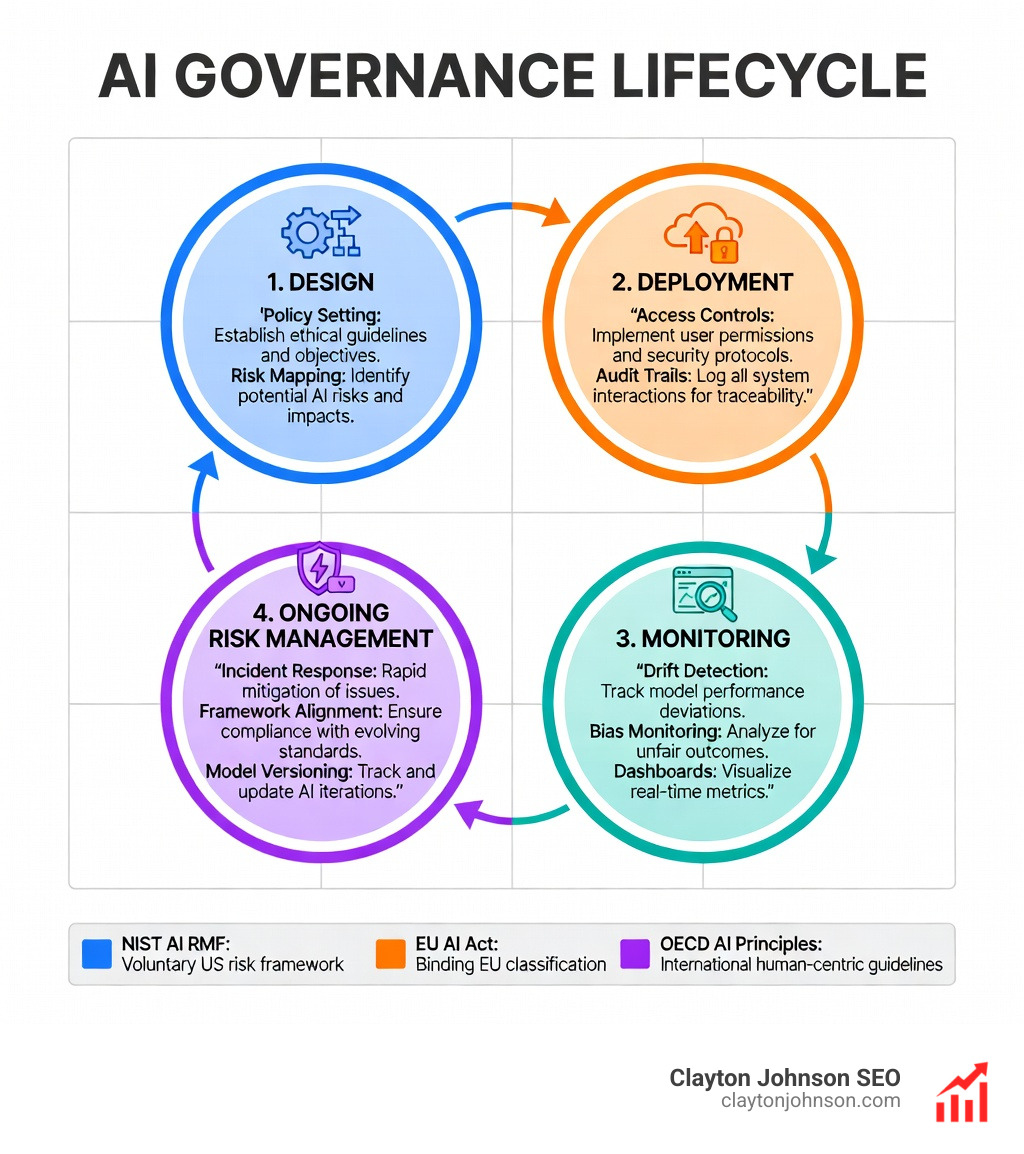

Operationalizing AI Governance Standards in the Enterprise

Governance is just a word until you put it into your workflow. At Demandflow, we believe in “structured growth architecture.” Applying that to AI means moving from theory to a “Governance Operating System.”

The NIST AI RMF provides the best roadmap for this:

- Govern: Establish the culture. Who is in charge? What are our risk tolerances?

- Map: Identify the risks. Where is this AI being used? What data is it touching?

- Measure: Use metrics. How often does the model fail? Is there a bias in the output?

- Manage: Take action. If the model drifts, how do we retrain it?
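As a rough sketch of how the four functions can show up in one record, the entry below keeps the owner and tolerance (Govern), the usage and data (Map), the observed failure rate (Measure), and the follow-up actions (Manage) side by side. The field names and the example values are hypothetical; NIST does not define a schema like this.

```python
from dataclasses import dataclass, field


@dataclass
class RiskEntry:
    system: str                 # Map: where the AI is used
    data_touched: list          # Map: what data it touches
    owner: str                  # Govern: who is in charge
    max_failure_rate: float     # Govern: agreed risk tolerance
    observed_failure_rate: float = 0.0            # Measure: latest metric
    actions: list = field(default_factory=list)   # Manage: follow-ups

    def review(self):
        """Manage: record an action when the measured rate exceeds tolerance.

        Returns True when the system is within tolerance, False otherwise.
        """
        if self.observed_failure_rate > self.max_failure_rate:
            self.actions.append("escalate to owner and schedule retraining")
            return False
        return True


# Hypothetical entry for an internal support chatbot.
entry = RiskEntry(
    system="support_chatbot",
    data_touched=["support tickets"],
    owner="head_of_support",
    max_failure_rate=0.02,
)
entry.observed_failure_rate = 0.05
within_tolerance = entry.review()
```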

To support this, organizations should implement AI infrastructure best practices, such as:

- Internal Review Boards: Cross-functional teams (legal, tech, ethics) that approve new AI projects.

- Audit Trails: Keeping a “black box” flight recorder of every decision the AI makes.

- Versioning: Tracking changes to models so you can “roll back” if something goes wrong.
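The audit trail and versioning ideas can be combined in a small, tamper-evident log, sketched here under stated assumptions: each record stores the model version alongside the decision and is chained to the previous record with a SHA-256 hash. The class name, field names, and example model version are hypothetical, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """In-memory decision log where each record hashes the one before it."""

    def __init__(self):
        self.records = []

    @staticmethod
    def _digest(record):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in record.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def log(self, model_version, inputs, decision):
        """Append a record; `inputs` must be JSON-serializable."""
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "decision": decision,
            "prev_hash": self.records[-1]["hash"] if self.records else "",
        }
        record["hash"] = self._digest(record)
        self.records.append(record)
        return record

    def verify(self):
        """Return True if no record was altered, removed, or reordered."""
        prev_hash = ""
        for record in self.records:
            if record["prev_hash"] != prev_hash:
                return False
            if record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True


trail = AuditTrail()
trail.log("credit-model-1.2.0", {"income": 52000}, "approve")
trail.log("credit-model-1.2.0", {"income": 18000}, "refer_to_human")
intact = trail.verify()
```

Because every record carries `model_version`, you can tell which decisions were made by a model you later rolled back.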

Implementing AI Governance Standards for Generative AI

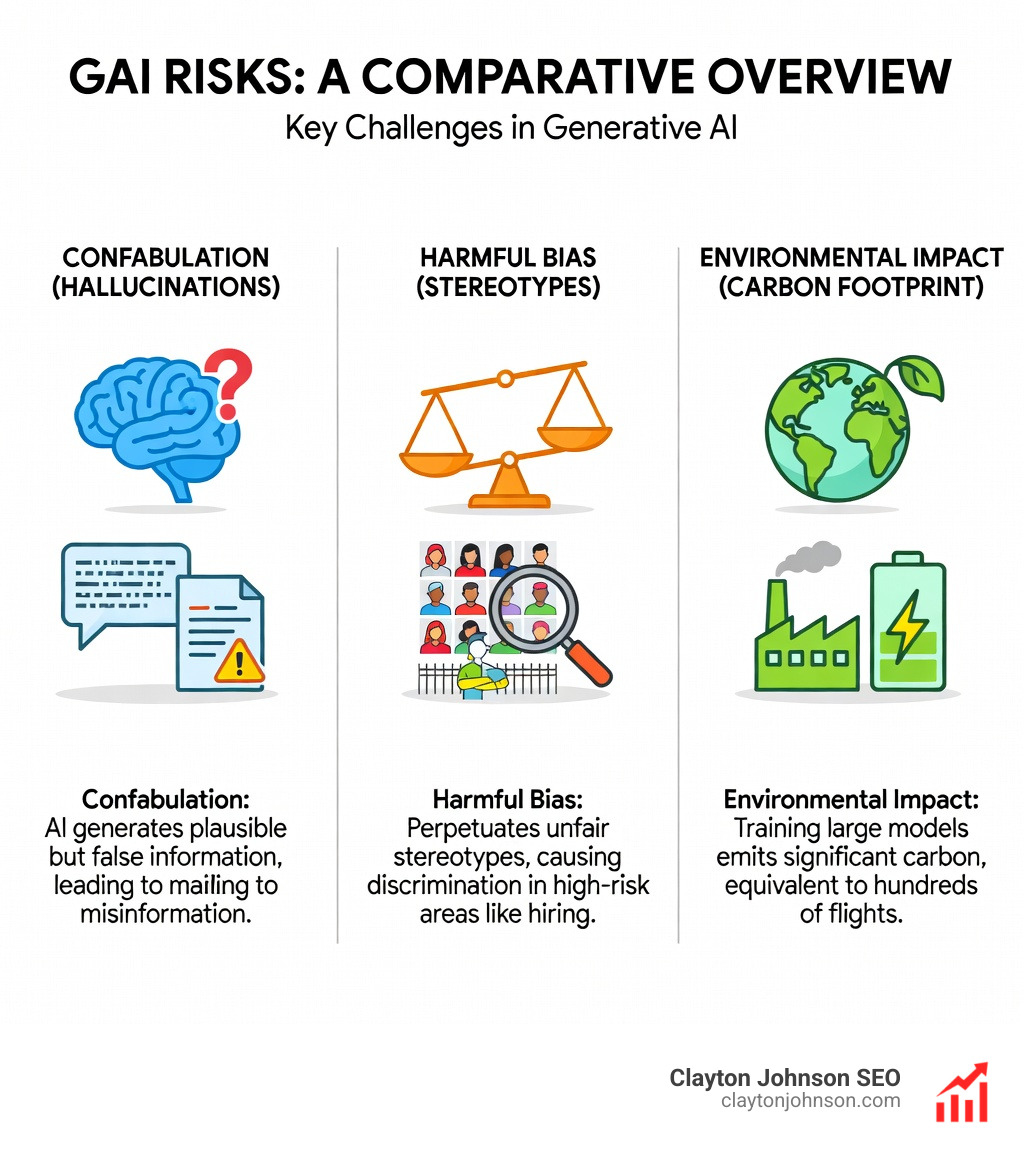

Generative AI (GAI) brings its own set of headaches. NIST released the Generative AI Profile (NIST AI 600-1) in July 2024 to address these.

Key GAI Governance Actions:

- Content Provenance: Using watermarking and metadata to show if a video or image was AI-generated.

- Adversarial Red-Teaming: Hiring “ethical hackers” to try and trick your AI into saying something dangerous or biased.

- Environmental Tracking: Training one large transformer model can emit as much carbon as 300 round-trip flights between NY and SF. Governance now includes reporting on the energy cost of your AI.
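For the content provenance item above, a very small sketch of the metadata side is a sidecar record that ties an AI-generated flag to a hash of the exact file. This is not watermarking and not an implementation of any provenance standard; the function names, fields, and generator label are assumptions for illustration.

```python
import hashlib


def make_provenance_record(content: bytes, generator: str) -> dict:
    """Build a sidecar record declaring the content as AI-generated."""
    return {
        "sha256": hashlib.sha256(content).hexdigest(),
        "ai_generated": True,
        "generator": generator,  # hypothetical internal model label
    }


def matches_record(content: bytes, record: dict) -> bool:
    """Check that the content is byte-for-byte what the record describes."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


image = b"placeholder bytes standing in for a generated image"
record = make_provenance_record(image, "internal-image-model")
unchanged = matches_record(image, record)
```

A plain hash only proves the file has not changed since the record was made; it does not survive resizing or re-encoding, which is where watermarking comes in.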

You can view the AI RMF Playbook for specific “how-to” steps on managing these GAI risks.

Best Practices for Maintaining AI Governance Standards

Once you’ve built the system, you have to keep it running. Don’t let your governance gather dust on a shelf.

- Visual Dashboards: Real-time views of model health and compliance status.

- Drift Detection: Automated alerts when a model’s performance starts to degrade because the real world has changed.

- Incident Response Playbooks: Pre-defined steps for what to do if your AI leaks data or produces harmful content.

- Open-Source Compatibility: Using tools that work across different platforms to avoid “vendor lock-in.”
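Drift detection in particular is easy to prototype. The sketch below uses the population stability index (PSI), one common way to compare a feature's current distribution against its training-time baseline. The bin count, the 0.2 alert threshold (a widely used rule of thumb), and the sample data are assumptions, not values taken from any framework in this guide.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two non-empty samples of a numeric feature.

    Bins are equal-width over the baseline's range; values outside
    that range are counted in the first or last bin.
    """
    lo = min(baseline)
    hi = max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[max(0, min(bins - 1, index))] += 1
        # Floor at a tiny value so empty bins do not cause log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_alert(psi, threshold=0.2):
    """Return True when the PSI exceeds the chosen alert threshold."""
    return psi > threshold


# Hypothetical model scores at training time versus this week.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
```

In practice you would run a check like this on a schedule and route any alert into the incident response playbook.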

For a deeper dive into these systems, see our AI governance page.

Frequently Asked Questions about AI Governance

What is the difference between AI ethics and AI governance?

AI ethics is the philosophy (the “should we?”), while AI governance is the implementation (the “how do we ensure we do?”). Ethics provides the principles; governance provides the policies, tools, and oversight to enforce them.

Is the NIST AI Risk Management Framework legally binding?

No, the NIST AI RMF is currently voluntary. However, many U.S. government agencies and large corporations are making it a requirement for their vendors. Adopting it now is a smart way to prepare for future regulations.

How do international frameworks promote global interoperability?

Frameworks like the OECD AI Principles create a “common language.” By agreeing on definitions (like what an “AI system” actually is), countries make it easier for an AI system built in Canada to be legally and safely used in the EU or the U.S.

Conclusion

The era of “move fast and break things” in AI is over. Today, the organizations that win are those that move fast with AI governance standards as their foundation.

At Clayton Johnson SEO and Demandflow, we help founders and marketing leaders build “structured growth architecture.” We believe that clarity leads to structure, and structure leads to compounding growth. AI is the ultimate leverage—but only if you have the guardrails in place to keep it on track.

Whether you are aligning with the NIST AI RMF or preparing for the EU AI Act, the goal is the same: building trust through transparency.

Ready to build a structured AI governance system that scales? Let’s get to work.