A Comprehensive Guide to AI Governance

What Is AI Governance (And Why It Matters for Your Organization)

AI governance is the structured set of policies, principles, and practices that guide how organizations develop, deploy, and oversee artificial intelligence systems responsibly and ethically.

Here is a quick breakdown:

| Element | What It Means |

|---|---|

| Policies & Procedures | Rules that define how AI is built and used |

| Ethical Guidelines | Standards for fairness, transparency, and accountability |

| Regulatory Compliance | Adherence to laws like the EU AI Act or NIST RMF |

| Risk Management | Identifying and mitigating harms before they happen |

| Oversight Mechanisms | Human checks on AI decision-making |

In short: AI governance is how organizations make sure AI systems are safe, fair, legal, and trustworthy.

An estimated 78% of businesses globally are now using AI in at least one function. That number is climbing fast. And yet, most organizations are building AI systems without a clear governance structure in place.

That creates real risk.

Without governance, AI systems can produce biased outputs, expose sensitive data, violate regulations, and erode user trust — often before anyone notices. As the EU AI Act, NIST AI Risk Management Framework, and OECD AI Principles make clear: structured oversight is no longer optional. It is foundational.

The challenge? Legislation often lags behind technology. That means organizations cannot wait for regulators to catch up. The window to build responsible AI systems — before compliance becomes enforcement — is right now.

AI governance is not just a legal checkbox. It is a strategic asset. Organizations that build governance into their AI systems early avoid costly retrofits, build stakeholder trust faster, and compete with more confidence in regulated markets.

I’m Clayton Johnson, an SEO strategist and growth operator who works at the intersection of AI-assisted workflows, structured content systems, and scalable marketing architecture — and AI governance has become a critical lens through which I evaluate every AI integration strategy I build or recommend. In the sections below, I’ll walk you through the core principles, global frameworks, implementation steps, and risk strategies you need to govern AI responsibly and effectively.

AI Governance word list:

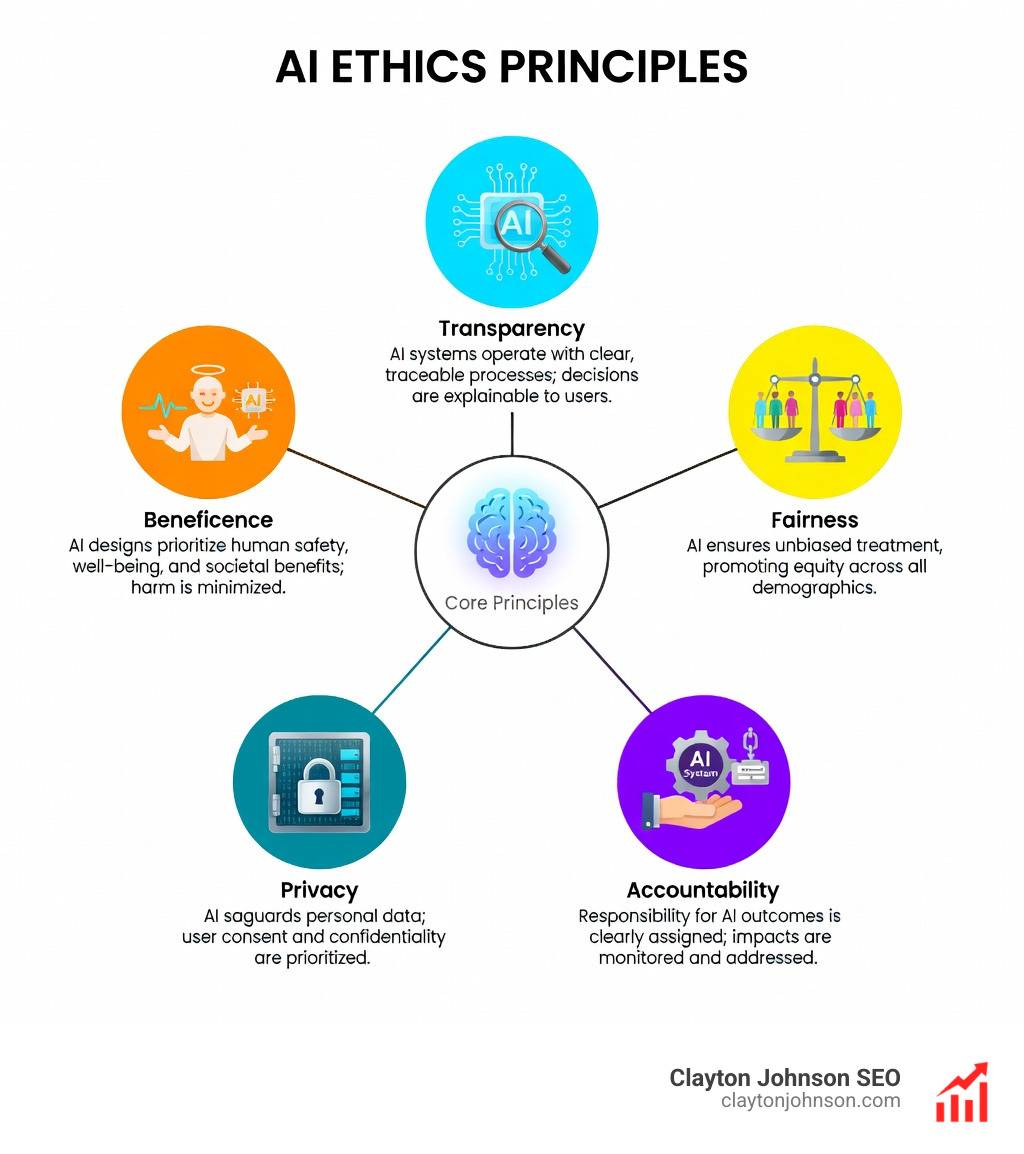

Core Principles of Ethical AI

At the heart of any AI governance strategy are the ethical pillars that ensure technology serves humanity rather than exploiting it. We believe that ethics shouldn’t just be a handbook on a shelf; it must be baked into the code and the culture of the organization.

Transparency and Explainability

Transparency is the cornerstone of trust. If a bank denies a loan or a hospital suggests a treatment based on an AI model, the “why” matters. We must move away from “black-box” models where decisions are made in a vacuum. Techniques such as execution graphs and traceable reasoning chains allow both technical and non-technical stakeholders to understand the logic behind an output.

Accountability and Human Oversight

Who is responsible when an AI makes a mistake? Effective governance mandates clear lines of accountability. This involves establishing a formal structure with designated roles like Data Stewards and AI Compliance Managers. Furthermore, maintaining “human-in-the-loop” systems ensures that high-impact decisions always have a human safety valve.

Fairness and Algorithmic Bias

AI models are only as good as the data they consume. If training data contains historical prejudices, the AI will amplify them. We prioritize fairness by using metrics like the disparate impact ratio to detect and mitigate bias. This is a core part of building a responsible AI framework that upholds individual rights.

Data Privacy and Environmental Sustainability

The UNESCO Recommendation on the Ethics of Artificial Intelligence highlights often-overlooked areas: gender equality and environmental impact. AI data centers are energy-hungry, potentially requiring 327 GW by 2030. Responsible governance includes “Green-in-AI” practices—designing energy-efficient models—to ensure our technological progress doesn’t come at the expense of the planet.

Global AI Governance Frameworks and Regulations

Navigating the landscape of AI governance can feel like trying to read a map while the terrain is still shifting. However, several major frameworks have emerged as the “North Stars” for global compliance.

| Framework | Nature | Key Focus |

|---|---|---|

| EU AI Act | Legally Binding | Risk-based tiers (Unacceptable to Minimal) |

| NIST AI RMF | Voluntary/Standard | Practical risk management (Govern, Map, Measure, Manage) |

| OECD Principles | International Guideline | Trustworthy AI and international cooperation |

| UK Framework | Non-Statutory | Pro-innovation, context-driven approach |

The European Union’s AI Act

The European Union’s AI Act is the world’s first comprehensive, legally binding AI law. It uses a risk-based classification system. Systems deemed an “unacceptable risk” (like social scoring) are banned, while “high-risk” applications in healthcare or recruitment face strict controls. Failure to comply can result in risk significant fines.

The NIST AI Risk Management Framework (AI RMF)

In the United States, the NIST AI Risk Management Framework (AI RMF) is widely adopted for its flexibility. It doesn’t tell you what to build, but how to manage the risks of what you are building through four functions: Govern, Map, Measure, and Manage.

International and Regional Efforts

The OECD AI Principles provide a global standard for trustworthy AI, while the UK pro-innovation AI framework focuses on a lighter-touch, non-statutory approach to foster growth. For businesses operating globally, staying aligned with these standards is essential for enterprise AI success.

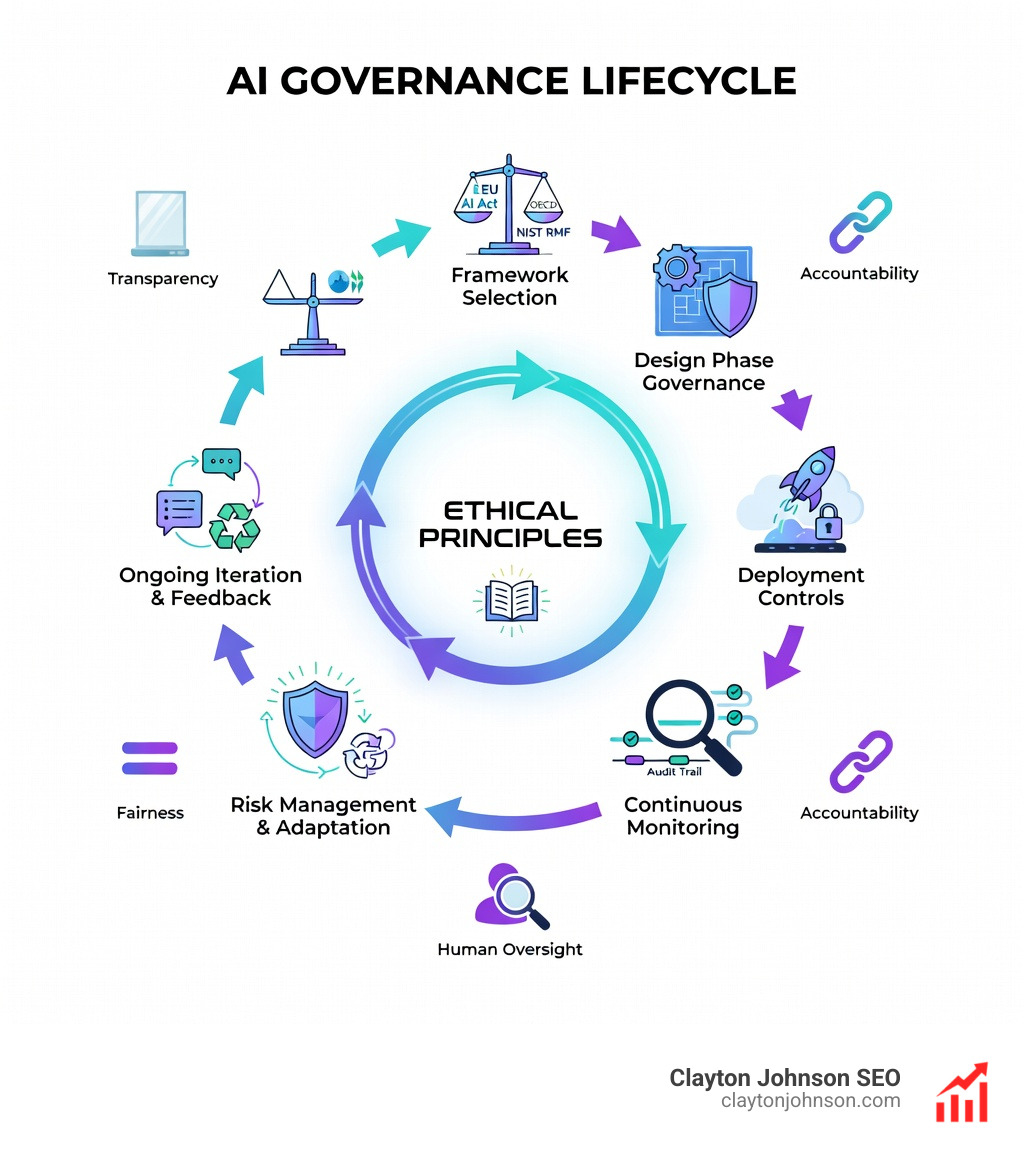

Implementing Governance Across the Lifecycle

Effective AI governance is not a one-time audit; it is a continuous thread that runs through the entire development pipeline.

The Design Phase

Governance starts before the first line of code is written. Organizations should establish internal review boards to assess the impact of proposed models. This is where we align the project with our broader enterprise AI strategy. We also evaluate the “data-hungry” nature of the model against scientific research on AI energy footprints, ensuring the project is sustainable.

Deployment and Secure Environments

When scaling AI strategically, where you host your model matters. Sensitive applications should often live in private clouds or on-premises environments to maintain data sovereignty.

Continuous Monitoring and Audit Trails

Once a model is live, the work has just begun. AI models can experience “drift”—a degradation in performance as real-world data changes. We implement automated monitoring for:

- Bias and Fairness: Real-time checks for discriminatory outputs.

- Performance Metrics: Accuracy, precision, and recall.

- Audit Trails: Traceable logs of every decision the AI makes for future review.

Ensuring Transparency and Explainability in AI Governance

The “black-box” problem is a major hurdle for stakeholder trust. To solve this, we use “model cards”—standardized documents that explain a model’s purpose, limitations, and training data. Providing Visibility into AI Agents through confidence scores and natural language explanations ensures that users aren’t just following an algorithm blindly, but are informed participants in the process.

Adapting AI Governance for Agentic Systems

The rise of AI agents—systems that can perform multi-step tasks autonomously—introduces new headaches. How do you govern a system that books a flight, fills out a form, and completes a purchase without a human clicking “OK” at every step?

Research on AI Agent Governance suggests we need three pillars:

- Inclusivity: Ensuring diverse voices shape agent behavior.

- Visibility: Clear identifiers so we know when we are interacting with an agent.

- Liability: Legal frameworks like Law-Following AI that ensure agents obey human laws and provide redress when things go wrong.

Managing Risks and Security

Securing an AI system is vastly different from securing traditional software. You aren’t just protecting against SQL injections; you’re protecting against prompt injections and model poisoning.

Threat Modeling with MITRE ATLAS

We use MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems) to map out potential attacks. This matrix helps us identify how an adversary might try to “fool” a model or extract sensitive training data.

Red Teaming and Adversarial Testing

The best way to find a hole is to try to break in. “Red teaming” involves ethical hackers intentionally trying to trigger biased, harmful, or insecure outputs from an AI. This proactive approach is one of our AI infrastructure best practices.

Secure AI Architecture

According to research on AI security challenges, a multilayered approach is essential. This includes:

- Adversarial Training: Training the model on “malicious” inputs so it learns to ignore them.

- Homomorphic Encryption: Allowing the AI to process data without ever seeing the raw, unencrypted information.

- Incident Response: A clear plan for what happens if an AI system is compromised or begins behaving erratically.

Frequently Asked Questions about AI Governance

What is the difference between AI ethics and AI governance?

Think of AI ethics as the “why” and AI governance as the “how.” Ethics involves the philosophical principles (e.g., “We should be fair”), while governance provides the actual structure, policies, and enforcement mechanisms to make those principles a reality (e.g., “We will run a bias audit every 30 days”).

How does the EU AI Act affect non-European companies?

Much like GDPR, the EU AI Act has “extraterritorial effect.” If your AI system is used within the EU or its output is used there, you must comply, regardless of where your company is headquartered. Ignoring this can lead to massive fines and being barred from one of the world’s largest markets.

What are the first steps for a business to implement AI governance?

- Inventory Your AI: Map out every place AI is currently being used in your organization.

- Risk Assessment: Categorize those uses based on potential impact (Low, Medium, High).

- Establish a Committee: Create a cross-functional team (Legal, Tech, Ethics) to oversee the process.

- Adopt a Framework: Start with a recognized standard like the NIST AI RMF to guide your initial policies.

Conclusion

The rapid evolution of artificial intelligence is both a massive opportunity and a significant responsibility. At Clayton Johnson SEO, we see AI governance not as a barrier to innovation, but as the “structured growth architecture” that makes sustainable innovation possible.

Most companies don’t lack the tactics to build AI; they lack the infrastructure to build it right. By implementing the principles and frameworks discussed in this Comprehensive Guide to AI Governance, you aren’t just checking a compliance box—you are future-proofing your organization.

Through our work with Demandflow.ai, we help founders and marketing leaders build this exact type of structured leverage. Whether it’s through taxonomy-driven SEO systems or AI-augmented workflows, the goal remains the same: Clarity → Structure → Leverage → Compounding Growth.

If you are ready to build authority and visibility across both traditional search and the new generative AI landscape, we are here to help you navigate that journey with a strategy that is as responsible as it is effective.