Lego for LLMs: Modular RAG Pipeline Components Explained

Why Modular RAG Pipeline Components Are the Foundation of Reliable AI Systems

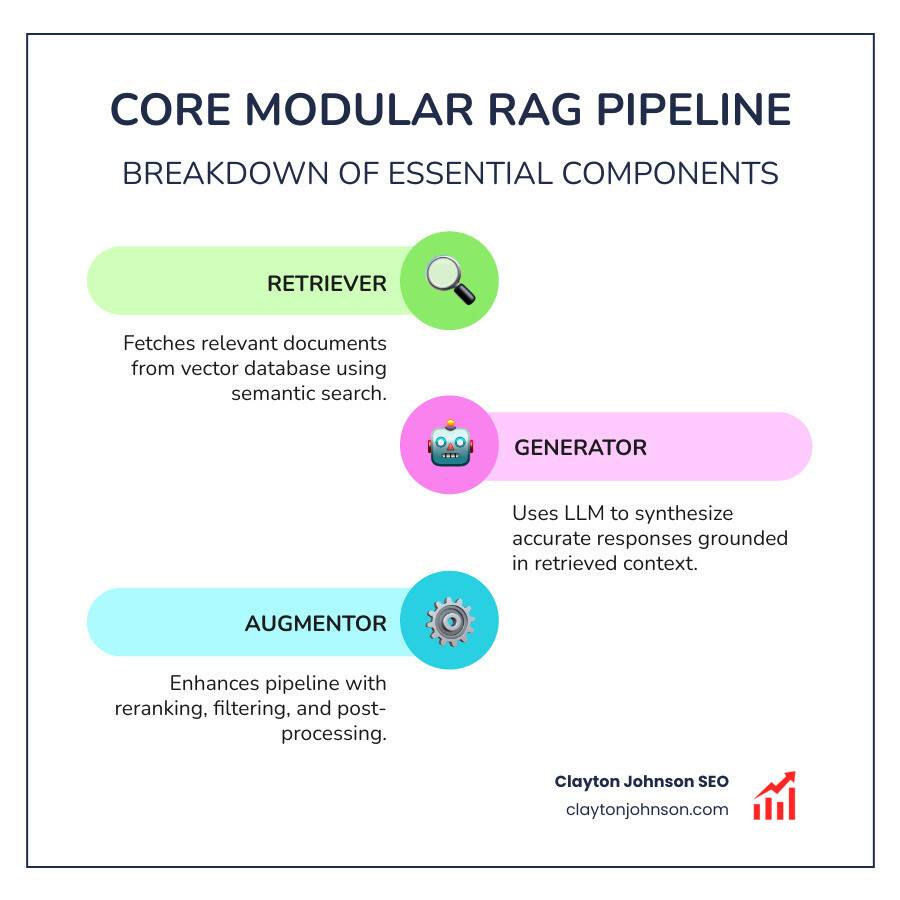

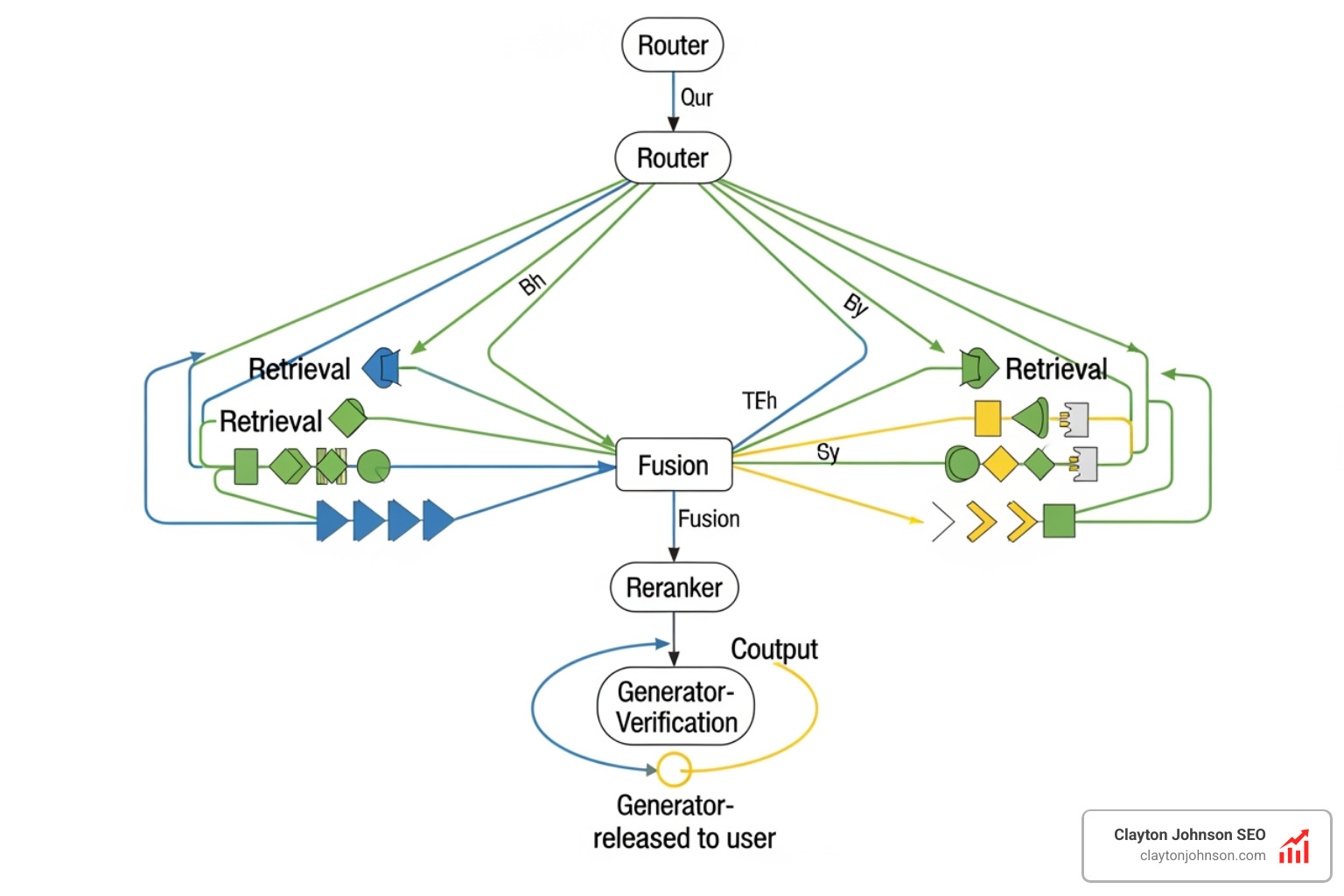

Modular RAG pipeline components are the independent, swappable building blocks that make Retrieval-Augmented Generation (RAG) systems flexible, scalable, and production-ready. Here is a quick breakdown of the core components:

| Component | Function |

|---|---|

| Retriever | Fetches relevant documents from a vector database using dense, sparse, or hybrid search |

| Generator | Uses an LLM to produce a grounded response from retrieved context |

| Router | Directs queries to the right retrieval path based on intent or complexity |

| Fusion | Combines results from multiple retrievers into a single ranked output |

| Scheduler | Controls workflow sequencing, iteration, and conditional execution |

Large language models (LLMs) are powerful — but they have a fundamental problem. Their knowledge is frozen at training time. They hallucinate. They cannot access your internal data.

RAG fixes this by connecting LLMs to external knowledge sources at inference time. Instead of retraining the model, you retrieve relevant documents and inject them as context before the model generates a response.

But basic RAG has its own limits. A rigid, monolithic pipeline breaks the moment your data changes, your use case shifts, or one component underperforms. That is where modular RAG pipeline components come in.

Modular RAG breaks the pipeline into independent, interchangeable pieces — similar to LEGO blocks. Each module handles one job. You can swap, test, or upgrade any piece without rebuilding the whole system. This is the architecture that separates experimental RAG prototypes from production-grade AI systems.

I’m Clayton Johnson, an SEO strategist and growth systems architect who works at the intersection of AI-augmented workflows and structured content architecture — including the design and evaluation of modular RAG pipeline components for scalable knowledge systems. This guide gives you the engineering clarity to build RAG pipelines that actually hold up under real-world conditions.

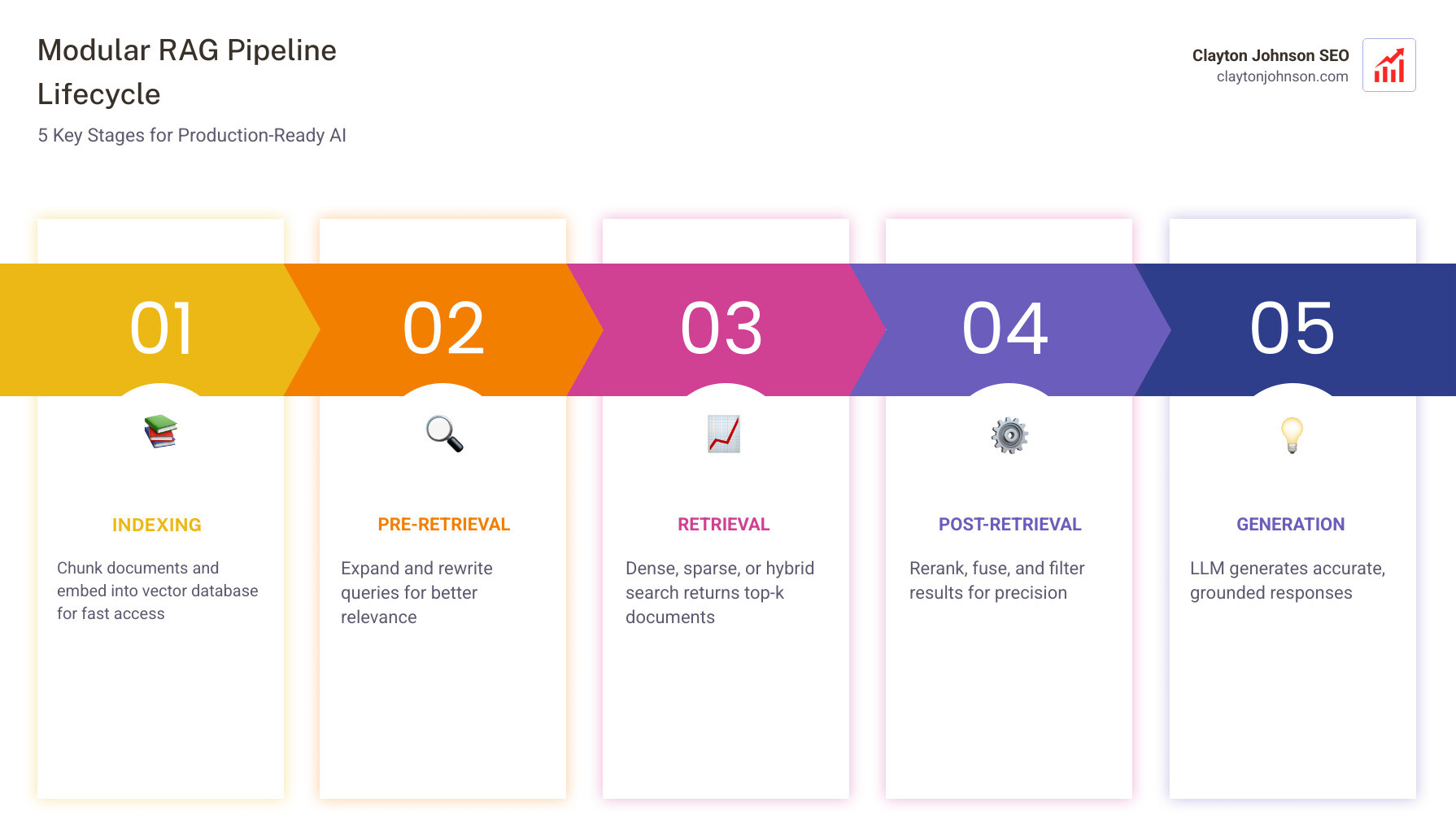

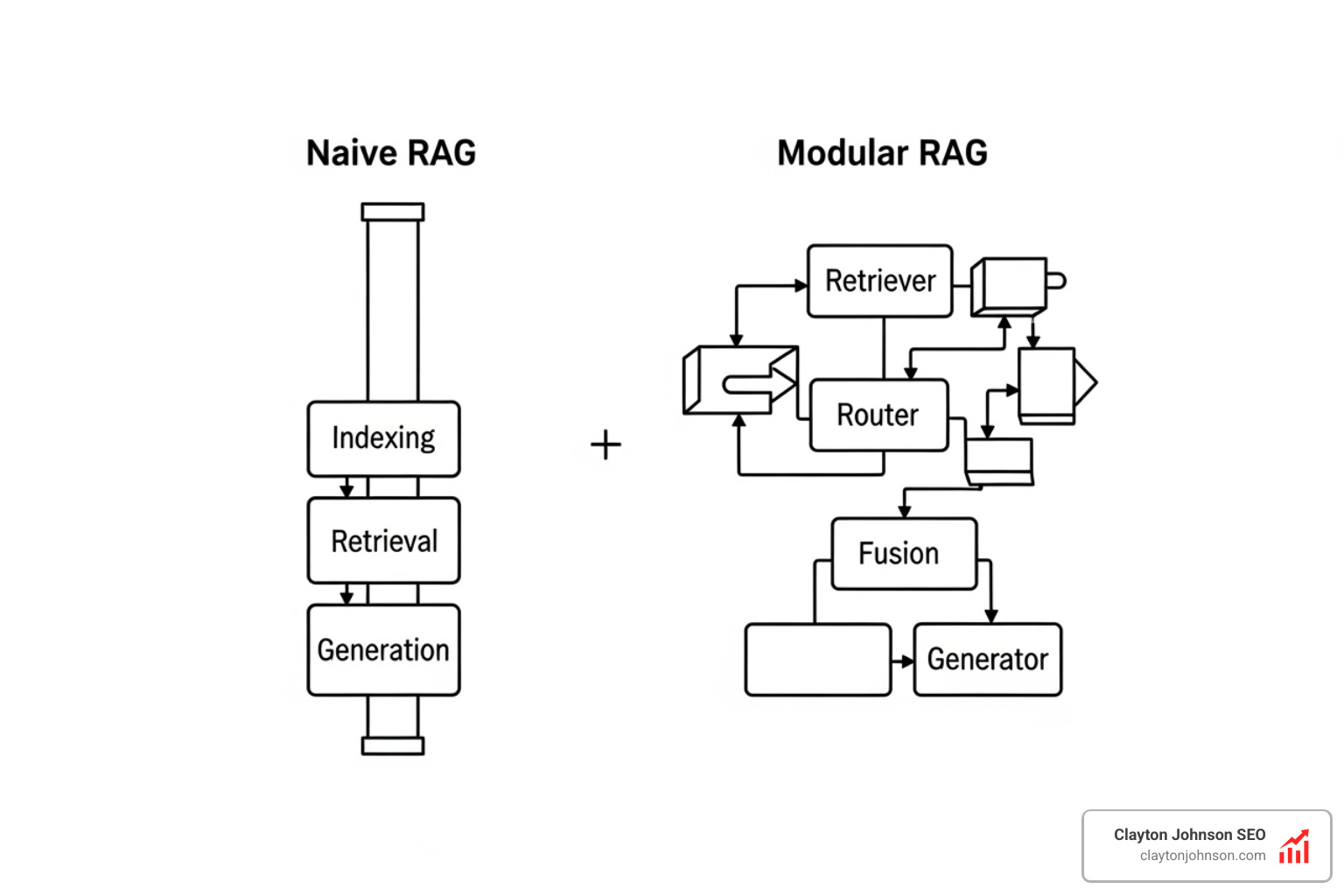

From Monolithic to Modular: The Evolution of RAG

In the early days of AI implementation, most teams started with what we call “Naive RAG.” It was a simple, linear process: take a user query, find a few matching documents in a vector store, and shove them into a prompt. While this works for basic internal FAQs, it quickly falls apart when faced with complex, multi-step questions or low-quality data.

The problem with monolithic pipelines is their rigidity. If you want to change your embedding model, you often have to rewrite the entire ingestion and retrieval logic. If your retriever starts returning irrelevant “noise,” your generator begins to hallucinate, and you have no clear way to isolate the failure.

This is why the industry has shifted toward the Modular RAG: Transforming RAG Systems into LEGO-like Reconfigurable Frameworks paradigm. By treating modular RAG pipeline components as independent services, we gain three massive advantages:

- Reconfigurability: We can swap a dense retriever for a hybrid one or change our LLM from a generalist model to a domain-specific one without breaking the system.

- Maintainability: Each module can be version-controlled, tested, and debugged in isolation.

- Interpretability: We can see exactly which module failed. Did the retriever fail to find the data, or did the generator fail to reason through it?

At Demandflow.ai, we believe that clarity leads to structure, and structure leads to leverage. Building a modular system is the ultimate expression of “structured growth architecture.” It allows your AI systems to grow and adapt alongside your business.

Core modular rag pipeline components and Their Functions

To build a high-performing system, we need to understand the individual “microservices” that make up the pipeline. Using frameworks like FlashRAG: A Modular Toolkit for Efficient Retrieval-Augmented Generation, developers can now manage these components with standardized interfaces, ensuring that the data flow between them is seamless.

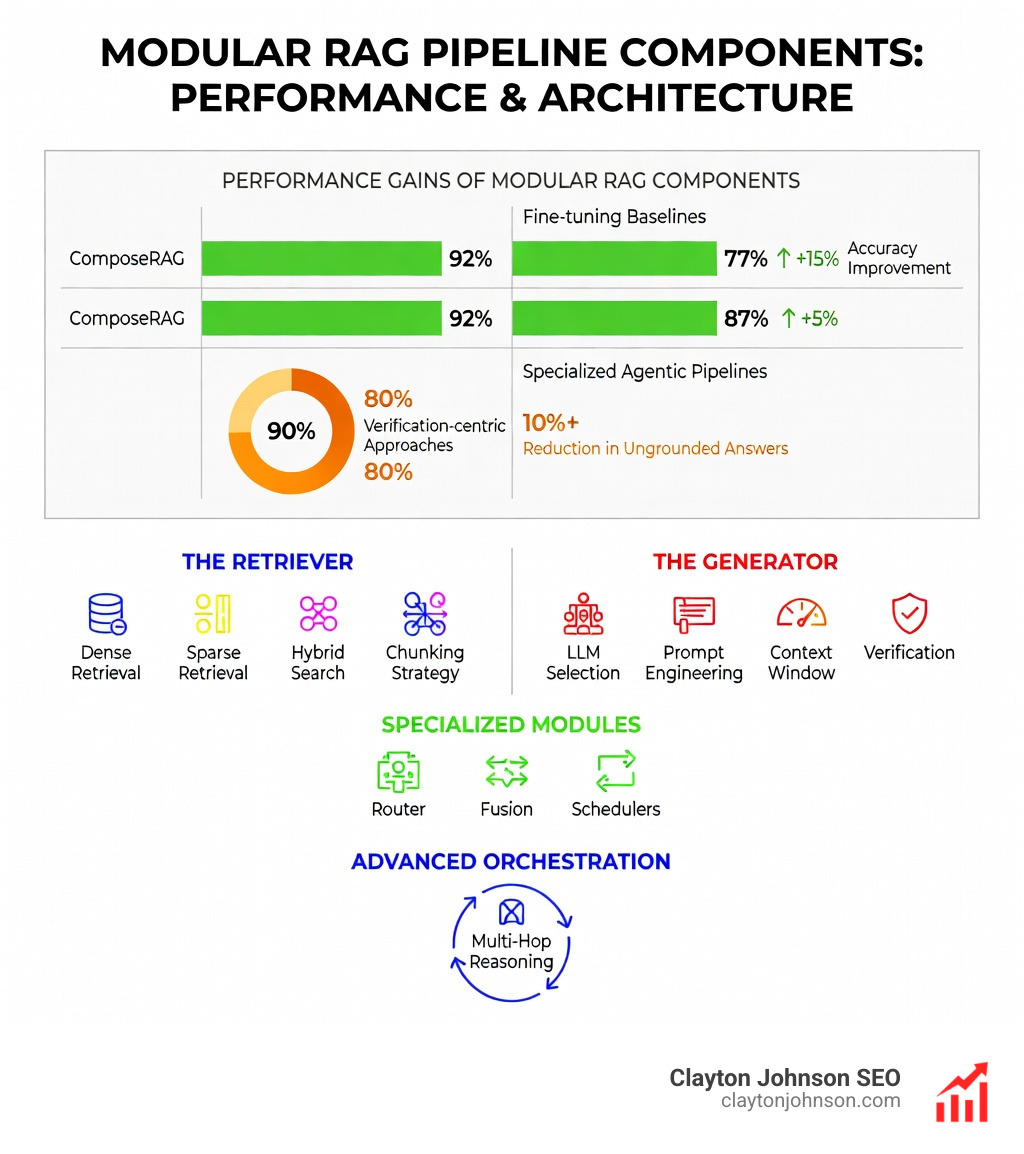

Designing the Retriever as a modular rag pipeline component

The retriever is the “researcher” of your system. Its job is to dive into your unstructured data and bring back the most relevant nuggets of information. In a modular setup, the retriever isn’t just a single search function; it’s a collection of strategies:

- Dense Retrieval: Uses vector embeddings to find documents based on semantic meaning. This is great for finding “concepts.”

- Sparse Retrieval: Uses traditional keyword matching (like BM25). This is essential for finding specific names, product IDs, or technical terms.

- Hybrid Search: Combines both dense and sparse methods to get the best of both worlds.

- Vector Databases: Tools like FAISS, Pinecone, and Milvus act as the storage layer, allowing for lightning-fast similarity searches across millions of document chunks.

The secret sauce here is the chunking strategy. We don’t just dump whole PDFs into the database. We split them into manageable pieces (e.g., 512 tokens) so the LLM gets exactly what it needs without being overwhelmed by “context noise.”

The Role of the Generator in modular rag pipeline components

If the retriever is the researcher, the generator is the “writer.” It takes the retrieved context and the user’s original question and synthesizes an answer.

In a modular architecture, the generator is decoupled from the rest of the system. This allows us to perform:

- LLM Selection: We might use a small, fast model for simple summaries and a large, reasoning-heavy model (like GPT-4 or Claude) for complex analysis.

- Prompt Engineering: We can swap out different system prompts for different tasks (e.g., “be concise” vs. “provide a detailed technical breakdown”).

- Context Window Management: The generator module ensures we don’t exceed the model’s token limit, effectively “compressing” or selecting only the most vital parts of the retrieved data.

- Hallucination Mitigation: By adding a verification step within the generator module, we can force the model to cite its sources, ensuring every claim is backed by the retrieved text.

Specialized Modules: Routers, Fusion, and Schedulers

This is where modular RAG pipeline components truly shine. Advanced systems use “middle-man” modules to handle logic that naive systems ignore.

- Routers: A router acts as a traffic controller. It analyzes the user’s intent. Is this a simple greeting? Send it straight to the LLM. Is it a complex legal question? Route it to the high-precision vector index.

- Fusion: Sometimes one search isn’t enough. Fusion modules perform multiple searches (perhaps using different query rewrites) and use techniques like Reciprocal Rank Fusion (RRF) to merge the results into a single, high-quality list.

- Schedulers: Schedulers manage the “looping” logic. If the initial retrieved data doesn’t fully answer the question, the scheduler can trigger another round of retrieval.

Advanced Orchestration and Flow Patterns

Once you have your modular RAG pipeline components ready, the next step is deciding how they talk to each other. This is called orchestration. We’ve moved far beyond simple linear flows into more sophisticated patterns.

Multi-Hop Reasoning and Query Decomposition

Complex questions often require multiple steps. For example, “How does the revenue of Company A compare to the industry average in the Midwest?” requires finding Company A’s revenue, then finding the industry average, then performing a comparison.

Frameworks like ComposeRAG: Modular Multi-Hop QA use a “Question Decomposition” module to break that big question into three smaller ones. The system then “hops” through the data, using the answer from the first hop to inform the search for the second.

Self-Reflection and Correction Loops

One of the most exciting trends in modular RAG is the “Self-Correction” loop. After the generator produces an answer, an Answer Verification module checks it against the retrieved documents. If the answer contains a claim that isn’t supported by the data, the scheduler sends the query back to the retriever to find better evidence. This “verification-centric” approach has been shown to reduce ungrounded answers by over 10% in low-quality retrieval settings.

Best Practices for Deploying Modular RAG in Production

Building a modular RAG system in a lab is one thing; keeping it running in a production environment like Minneapolis is another. At Clayton Johnson SEO, we focus on the “infrastructure” of growth, and that applies to AI pipelines too.

Version Control and Index Management

You wouldn’t deploy code without Git, so don’t deploy vector indexes without versioning. Tools like lakeFS allow you to treat your vector embeddings and preprocessed data like code. You can create branches for new index versions, test them, and roll back if the performance drops. This is critical for maintaining a “structured growth architecture” where every change is measurable.

Security and Privacy

When dealing with enterprise data, security is non-negotiable.

- RBAC (Role-Based Access Control): Ensure that the retriever only accesses documents the user is authorized to see.

- Data Encryption: Use TLS 1.3 for data in transit and AES-256 for data at rest.

- GDPR Compliance: Ensure your modular pipeline can handle “right to be forgotten” requests by easily purging specific data from your vector stores.

Monitoring and Optimization

You can’t improve what you don’t measure. We recommend using evaluation frameworks like RAGAS or TruLens. These tools look at the “RAG Triad”:

- Context Relevance: Did the retriever find the right stuff?

- Faithfulness: Is the answer derived only from the retrieved context?

- Answer Relevance: Does the answer actually address the user’s query?

To keep costs down and performance up, consider quantization for your embedding models and caching (using Redis) for frequently asked questions. For deeper technical implementation details, we always refer to the latest technical documentation on RAG deployment.

Frequently Asked Questions about Modular RAG

What is the difference between modular RAG and naive RAG?

Naive RAG is a rigid, three-step linear process (Retrieve -> Augment -> Generate). It is easy to build but hard to scale or debug. Modular RAG decomposes the pipeline into independent, swappable components like routers, rerankers, and verifiers. This allows for much higher flexibility, better accuracy, and easier maintenance in production environments.

How does modular RAG improve accuracy in multi-hop QA?

Multi-hop questions are too complex for a single search. Modular RAG uses “Question Decomposition” modules to break complex queries into sub-questions. It then uses a “Scheduler” to iteratively retrieve information for each sub-question, “hopping” through the knowledge base until it has enough context to provide a complete, verified answer. Research shows this can improve accuracy by up to 15% over monolithic baselines.

Which tools are best for building modular RAG systems?

For orchestration, LangChain and LlamaIndex are the industry standards, offering extensive libraries of pre-built modules. For retrieval, FAISS, Pinecone, and Milvus are top-tier vector databases. If you are looking for a more “LEGO-like” framework specifically designed for modularity, check out FlashRAG or Haystack. For production versioning of your data, lakeFS is a must-have.

Conclusion

The shift toward modular RAG pipeline components represents a maturing of the AI industry. We are moving away from “magic box” solutions and toward structured, engineered systems that provide predictable value.

At Demandflow.ai, we know that tactics without architecture are just noise. Whether we are building taxonomy-driven SEO systems or AI-augmented marketing workflows, the goal is always the same: create a foundation that allows for compounding growth.

Modular RAG is that foundation for the AI era. It gives you the clarity to see how your data is being used, the structure to swap out underperforming parts, and the leverage to scale your knowledge across your entire organization.

If you are a founder or marketing leader looking to move beyond AI experiments and start building “structured growth infrastructure,” we are here to help. From competitive positioning models to the implementation of advanced AI execution systems, our mission is to turn your data into your greatest competitive advantage.

Ready to build your own high-performance AI architecture? Build your structured growth infrastructure with us today.