Traffic Strategy Frameworks: Navigating the Road to High Performance

Why Scalable Traffic Strategies Matter More Than Ever

Scalable traffic strategies are the systems and architectural decisions that allow your digital infrastructure to handle growth—from hundreds to millions of users—without breaking, slowing down, or burning your budget. At their core, these strategies combine:

- Architectural foundations: Horizontal scaling, stateless services, and asynchronous processing

- Infrastructure automation: Auto scaling, load balancing, and API gateways

- Database optimization: Read replicas, caching, sharding, and connection pooling

- Marketing diversification: Multi-channel traffic sources across organic, paid, referral, and direct

- Data-driven prediction: Analytics frameworks for congestion forecasting and pattern recognition

The stakes are high. According to industry data, online sales are expected to reach $8.1 trillion by 2026. Yet 88% of consumers won’t return after a negative site experience, and 53% of mobile visits are abandoned if pages take longer than 3 seconds to load. Meanwhile, businesses that fail to scale proactively face what researchers call “the critical challenge: high traffic congestion” that can turn viral success into catastrophic outages.

The landscape is shifting fast. In 2007, five mobile phone manufacturers controlled 90% of industry profits—until Apple’s iPhone “burst onto the scene and began gobbling up market share.” The lesson? Scale now trumps differentiation as a strategic imperative. Modern platform businesses achieve this through network effects and architectural resilience, not just faster servers.

But scaling isn’t just about infrastructure. It’s about building systems—from API architecture to marketing channels—that grow efficiently. Research on urban mobility changes shows that quick wins can achieve 3-4% modal shift in under a year, representing 25% of total goals. Similarly, digital twin simulations have saved organizations over $1 billion in infrastructure investments while reducing response times by 35%. The same principle applies to web traffic: proactive, multi-layered strategies prevent failure before it happens.

The challenge is complexity. Traditional path-search algorithms and reinforcement learning methods “struggle to scale to city-wide networks due to the nonlinear, stochastic, and coupled dynamics” of traffic systems—whether physical or digital. Your API faces similar challenges when trying to route millions of requests through evolving congestion patterns.

This guide synthesizes research from academic traffic modeling, cloud infrastructure best practices, and proven marketing frameworks to give you a complete playbook. We’ll cover everything from designing stateless microservices to predicting congestion with shape-based analytics, from AWS Auto Scaling configurations to diversifying your traffic sources beyond single-channel dependency.

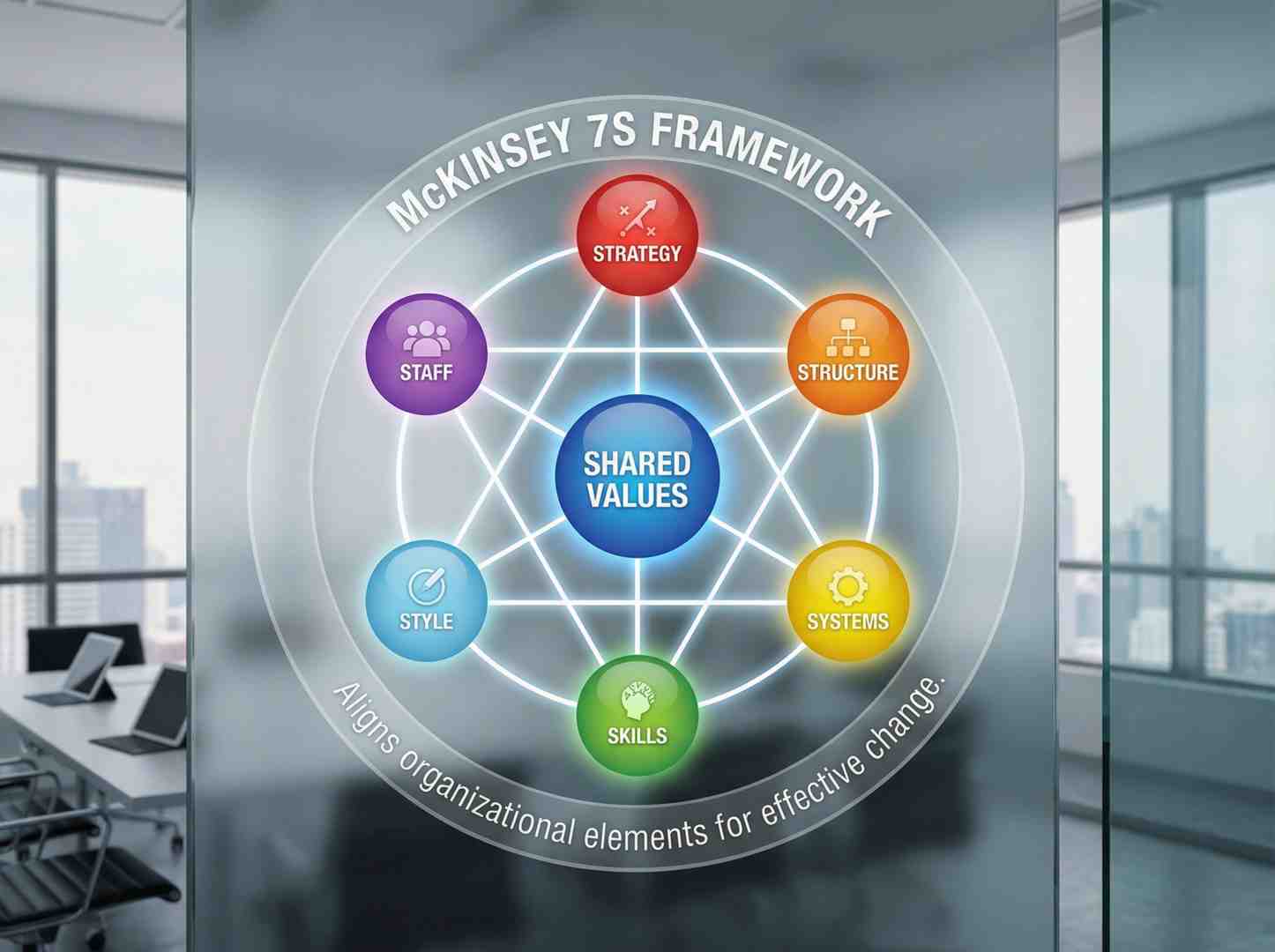

I’m Clayton Johnson, and I’ve spent years building scalable traffic strategies for companies across industries—turning fragmented marketing efforts into coherent growth engines through search intent, structured content, and measurable systems. My work focuses on the intersection of technical SEO, strategic positioning, and AI-augmented workflows that compound over time.

The Foundation of Scalable Traffic Strategies: Architectural Principles

Before we throw more servers at a problem, we need to look at the blueprint. If your application’s architecture is a monolithic “spaghetti” mess, even the most expensive cloud setup won’t save you. Building scalable traffic strategies starts with the code and how services communicate.

We often see founders waiting for their API to break before they think about scaling. That’s a recipe for disaster. Effective high traffic API scaling requires a proactive mindset: assume growth will happen. This begins with a deep market analysis to understand your potential load and user behavior patterns.

| Feature | Horizontal Scaling (Scale Out) | Vertical Scaling (Scale Up) |
|---|---|---|
| Method | Adding more machines to the pool | Adding more power (CPU/RAM) to one machine |
| Limits | Virtually limitless | Limited by hardware capacity |
| Cost | Pay-as-you-go, highly efficient | Exponentially expensive at high tiers |
| Availability | High (redundancy) | Low (single point of failure) |
| Complexity | Higher (requires load balancing) | Lower (simple hardware swap) |

Horizontal vs. Vertical Scaling: Why “Scaling Out” Wins

Vertical scaling is like buying a bigger truck to move more boxes. It works for a while, but eventually, you can’t buy a bigger truck. Horizontal scaling is like hiring a whole fleet of smaller trucks. For modern, cloud-native systems, “scaling out” is the foundation of resilience. It allows for resource pooling and ensures that if one server goes down, the others pick up the slack. This redundancy is what keeps your site alive during a surprise traffic surge.

Designing Stateless Services for Seamless Expansion

If a server “remembers” a user’s session locally, that user is pinned to that server. This is called “stateful” design, and it’s the enemy of horizontal scaling. Stateless services are a non-negotiable prerequisite: session data lives in a shared store (such as a database or cache) rather than on any one server. When a service is stateless, any server in your fleet can handle any request.

This architectural flexibility allows load balancers to distribute traffic evenly without worrying about breaking a user’s session. It makes your system “liquid”—able to flow into new resources as they are added and contract when demand drops.
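To make the idea concrete, here is a minimal sketch of two interchangeable servers sharing one session store. The class names (`SharedSessionStore`, `StatelessServer`) are hypothetical, and the in-memory dictionary only stands in for an external store such as Redis or a database.

```python
from dataclasses import dataclass, field


@dataclass
class SharedSessionStore:
    """Hypothetical stand-in for an external session store (in-memory only)."""
    _data: dict = field(default_factory=dict)

    def load(self, session_id: str) -> dict:
        # Create the session on first access, then return the same dict after.
        return self._data.setdefault(session_id, {})


@dataclass
class StatelessServer:
    """Keeps no per-user state of its own; everything lives in the store."""
    name: str
    store: SharedSessionStore

    def add_to_cart(self, session_id: str, item: str) -> list:
        session = self.store.load(session_id)
        cart = session.setdefault("cart", [])
        cart.append(item)
        return list(cart)


store = SharedSessionStore()
server_a = StatelessServer("a", store)
server_b = StatelessServer("b", store)

# The same user hits two different servers and still sees one cart.
server_a.add_to_cart("sess-1", "book")
cart = server_b.add_to_cart("sess-1", "pen")
```

Because neither server holds the cart itself, a load balancer is free to send each request to whichever instance is available.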

Asynchronous Processing for Long-Running Tasks

Nothing kills API performance faster than a request that hangs while waiting for a heavy task (like generating a PDF or processing a large image) to finish. Instead of making the user wait, we use asynchronous processing.

By using message queues, your API can return a “202 Accepted” status immediately, telling the client, “I’ve got this, I’ll let you know when it’s done.” This decouples tasks from the main request-response cycle, significantly reducing latency and protecting your system reliability during spikes.
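The pattern above can be sketched with nothing but the standard library. This is an illustration under simplifying assumptions: the in-process `queue.Queue` stands in for a real message broker, and `handle_request`, `worker`, and the `rendered:` placeholder result are hypothetical names, not part of any framework.

```python
import queue
import threading
import uuid

jobs = queue.Queue()   # stand-in for a message broker
results = {}           # stand-in for a results table the client can poll


def handle_request(document: str):
    """Enqueue the heavy work and answer immediately with 202 Accepted."""
    job_id = str(uuid.uuid4())
    jobs.put((job_id, document))
    return 202, {"job_id": job_id, "status": "queued"}


def worker() -> None:
    """Background consumer that does the slow work outside the request cycle."""
    while True:
        job_id, document = jobs.get()
        results[job_id] = f"rendered:{document}"  # placeholder for PDF/image work
        jobs.task_done()


threading.Thread(target=worker, daemon=True).start()

status, body = handle_request("invoice-42")
jobs.join()  # only for the demo: wait until the worker has finished
```

In a real deployment the client would poll a status endpoint or receive a webhook instead of the demo’s `jobs.join()` call.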

Infrastructure and Database Scaling: Managing High-Load Systems

Once the architecture is sound, we move to the infrastructure. In Minneapolis, we know that road systems need to adapt to rush hour; your digital infrastructure is no different. We use SEO & content marketing to drive the traffic, but your infrastructure must be ready to receive it. This is where conversion optimization meets technical performance.

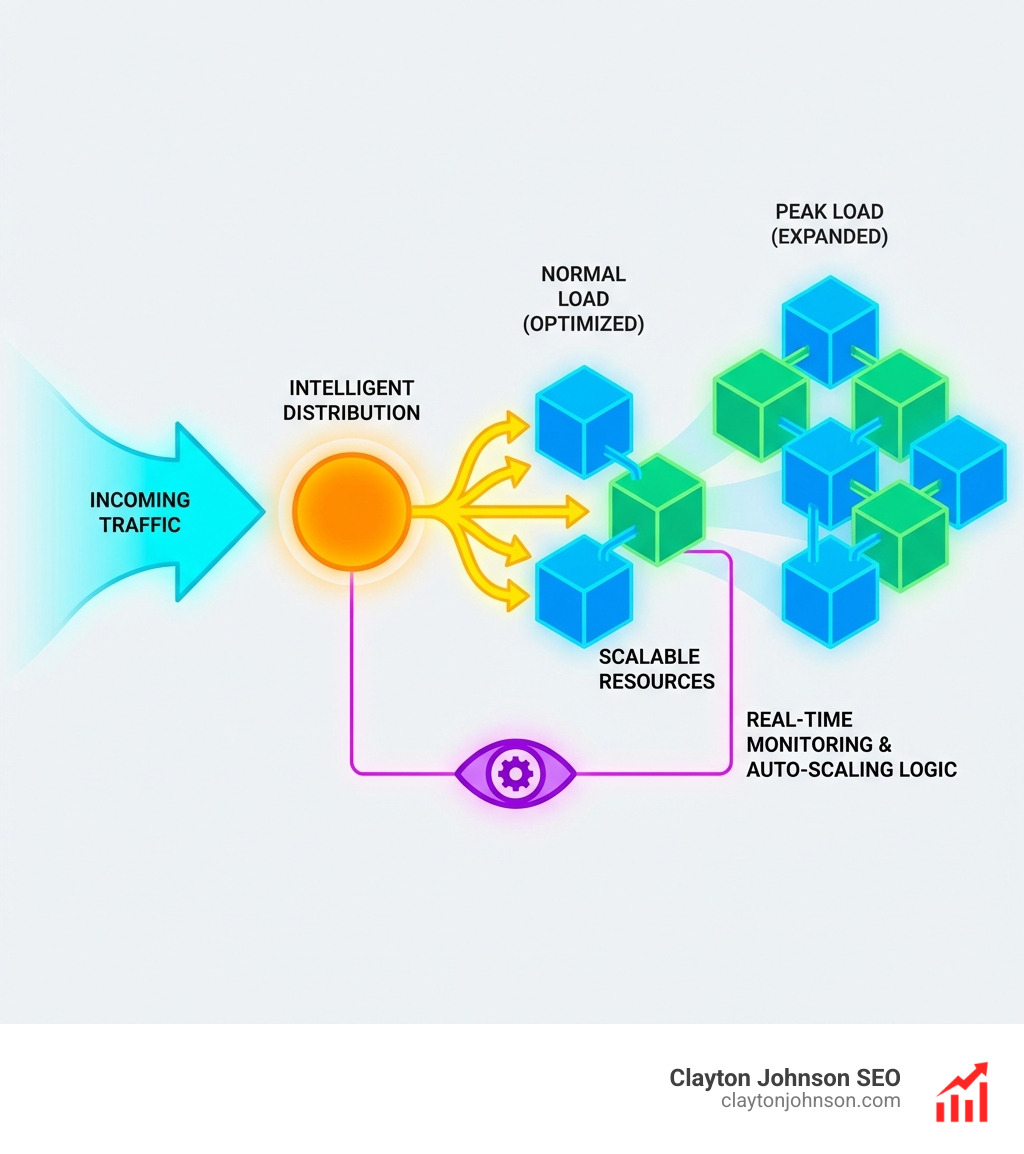

Implementing Scalable Traffic Strategies via AWS Auto Scaling

AWS Auto Scaling is the gold standard for dynamic traffic management. It allows you to maintain application availability by automatically adding or removing EC2 instances based on real-time demand.

We recommend target tracking policies. For example, you can set a policy to “keep average CPU utilization at 50%.” If traffic spikes and average CPU climbs to 70%, AWS launches more instances to bring the average back toward the target. Conversely, during low-traffic periods, it terminates unneeded instances to save costs. The result is a “pay-per-use” model for high-performance systems.
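For intuition only, the proportional reasoning behind a target tracking policy can be sketched as below. This is not AWS’s actual algorithm, which also involves alarms, warm-up periods, and cooldowns. The function name `desired_capacity` and its parameters are assumptions made for this illustration.

```python
import math


def desired_capacity(current_instances: int, current_metric: float,
                     target_metric: float, min_size: int = 1,
                     max_size: int = 20) -> int:
    """Simplified proportional rule: scale the fleet by metric / target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    proposed = math.ceil(current_instances * current_metric / target_metric)
    # Clamp to the group's configured bounds.
    return max(min_size, min(max_size, proposed))


# 4 instances at 70% average CPU with a 50% target: the fleet should grow.
scale_out = desired_capacity(4, 70.0, 50.0)
# 4 instances at 20% average CPU: the fleet can shrink, but not below min_size.
scale_in = desired_capacity(4, 20.0, 50.0, min_size=2)
```

The clamp matters in practice: the minimum protects availability during quiet periods, and the maximum caps spend during an unexpected surge.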

API Gateways and Load Balancing: Your First Line of Defense

An API gateway acts as a sophisticated traffic cop. It’s your first line of defense against congestion. Key functions include:

- Load Balancing: Using algorithms like Round-Robin (even distribution) or Least Connections (routing to the least busy server).

- Edge Caching: Storing frequent responses closer to the user to reduce backend load.

- Rate Limiting: Protecting your system by capping requests (e.g., 100 requests per minute per user) to prevent abuse or accidental denial-of-service.
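The two balancing algorithms and the rate limit named above can be sketched in a few lines. This is a minimal illustration: the server names, connection counts, and the `FixedWindowLimiter` class are hypothetical, and production gateways typically use more refined limiters (sliding windows or token buckets).

```python
import itertools
from collections import defaultdict

servers = ["app-1", "app-2", "app-3"]

# Round-Robin: hand out servers in a fixed rotation.
_rotation = itertools.cycle(servers)


def pick_round_robin() -> str:
    return next(_rotation)


# Least Connections: choose the server with the fewest open connections.
active_connections = {"app-1": 12, "app-2": 3, "app-3": 7}


def pick_least_connections() -> str:
    return min(active_connections, key=active_connections.get)


class FixedWindowLimiter:
    """Allow at most `limit` requests per user in each fixed time window."""

    def __init__(self, limit: int = 100, window_seconds: int = 60) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self.counts = defaultdict(int)

    def allow(self, user_id: str, now: float) -> bool:
        window = int(now // self.window_seconds)
        key = (user_id, window)
        if self.counts[key] >= self.limit:
            return False
        self.counts[key] += 1
        return True


first_four = [pick_round_robin() for _ in range(4)]
least_busy = pick_least_connections()

# A tiny limit makes the behaviour easy to see: 2 requests per 60 seconds.
limiter = FixedWindowLimiter(limit=2, window_seconds=60)
decisions = [limiter.allow("u1", now=t) for t in (0.0, 1.0, 2.0, 61.0)]
```

The third request is rejected because it falls in the same window as the first two; the fourth lands in the next window and is allowed again.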

Read Replicas, Sharding, and Caching Strategies

Your database is often the first thing to “cry” under heavy load. To scale it before it fails, consider these tactics:

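- Read Replicas: Create copies of your database that stay in sync through replication. Route all “write” operations to the primary and “read” operations to replicas, keeping in mind that replicas can lag slightly behind the primary.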

- Connection Pooling: Don’t let 10,000 users each open their own database connection. Use a pool (like pg-pool for Node.js) to share a limited set of open connections.

- Sharding and Partitioning: Break large tables into smaller, more manageable pieces. Sharding distributes data across different servers (e.g., by User ID), while partitioning divides data within a single server (e.g., by Sale Date).

- In-Memory Caching: Use Redis or Memcached to store the results of expensive queries. This is significantly faster than hitting the disk every time.
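Two of the tactics above, read/write routing and in-memory caching, can be sketched as follows. Everything here is a simplified assumption: `QueryRouter` and `CacheAside` are hypothetical names, routing by the first SQL keyword is deliberately naive, and the dictionary cache stands in for Redis or Memcached.

```python
import itertools


class QueryRouter:
    """Send SELECTs to replicas in rotation and everything else to the primary."""

    def __init__(self, primary: str, replicas: list) -> None:
        self.primary = primary
        self._replicas = itertools.cycle(replicas)

    def target_for(self, sql: str) -> str:
        parts = sql.split()
        verb = parts[0].upper() if parts else ""
        return next(self._replicas) if verb == "SELECT" else self.primary


class CacheAside:
    """Check the cache first; on a miss or expiry, load and store the value."""

    def __init__(self, loader, ttl_seconds: float = 30.0) -> None:
        self.loader = loader
        self.ttl_seconds = ttl_seconds
        self._entries = {}  # key -> (stored_at, value)

    def get(self, key: str, now: float):
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            return entry[1]
        value = self.loader(key)
        self._entries[key] = (now, value)
        return value


router = QueryRouter("primary", ["replica-1", "replica-2"])
targets = [router.target_for(q) for q in (
    "SELECT * FROM users",
    "UPDATE users SET name = 'x'",
    "select 1",
    "INSERT INTO users VALUES (1)",
)]

loader_calls = []


def slow_query(key: str) -> str:
    loader_calls.append(key)  # record how often the "database" is hit
    return f"row-for-{key}"


cache = CacheAside(slow_query, ttl_seconds=30.0)
values = [cache.get("user:1", now=t) for t in (0.0, 10.0, 45.0)]
```

The second lookup is served from the cache; the third arrives after the 30-second TTL and goes back to the loader, so the expensive query runs twice instead of three times.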

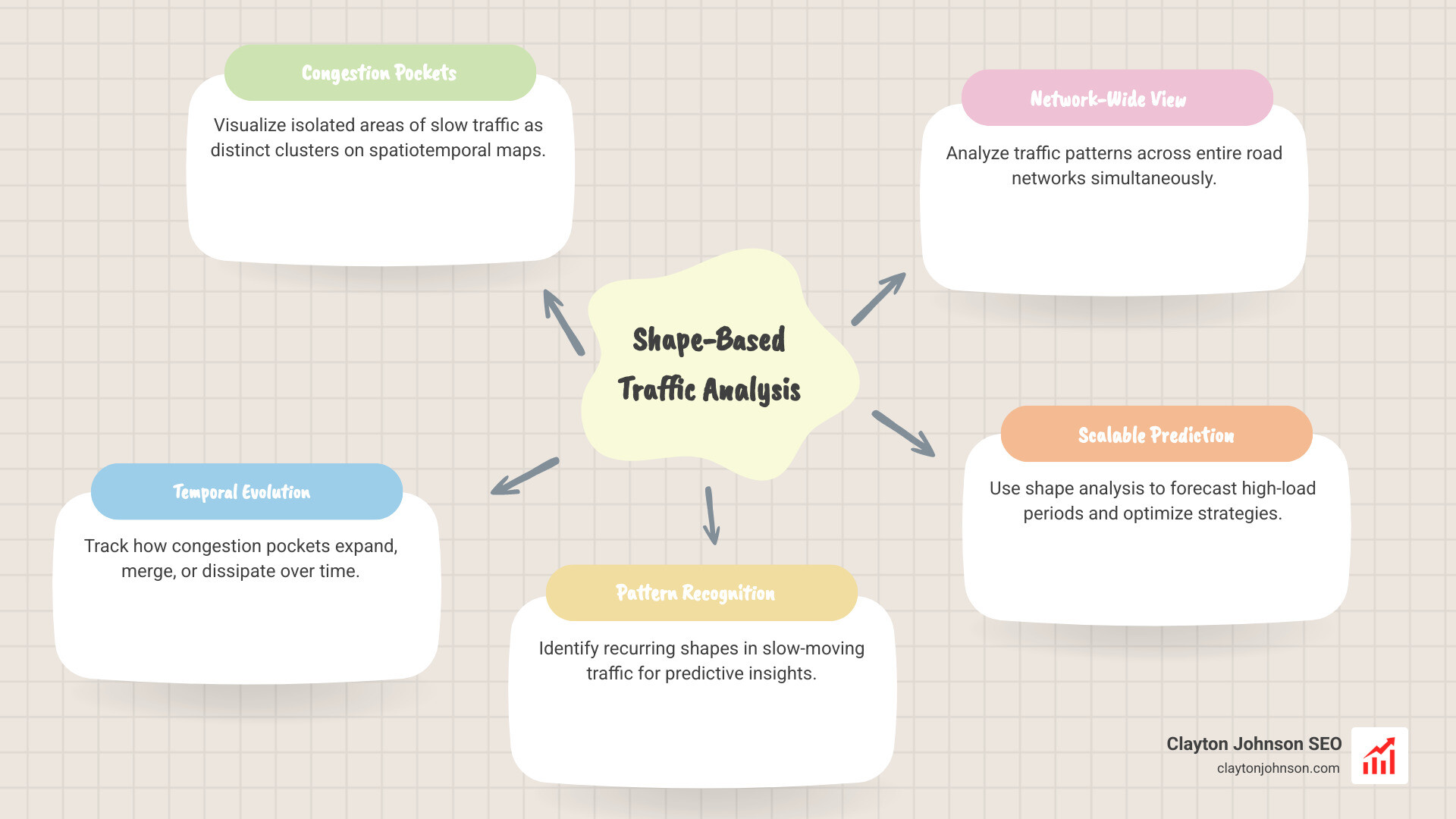

Advanced Analytics: Shape-Based Analysis for Traffic Prediction

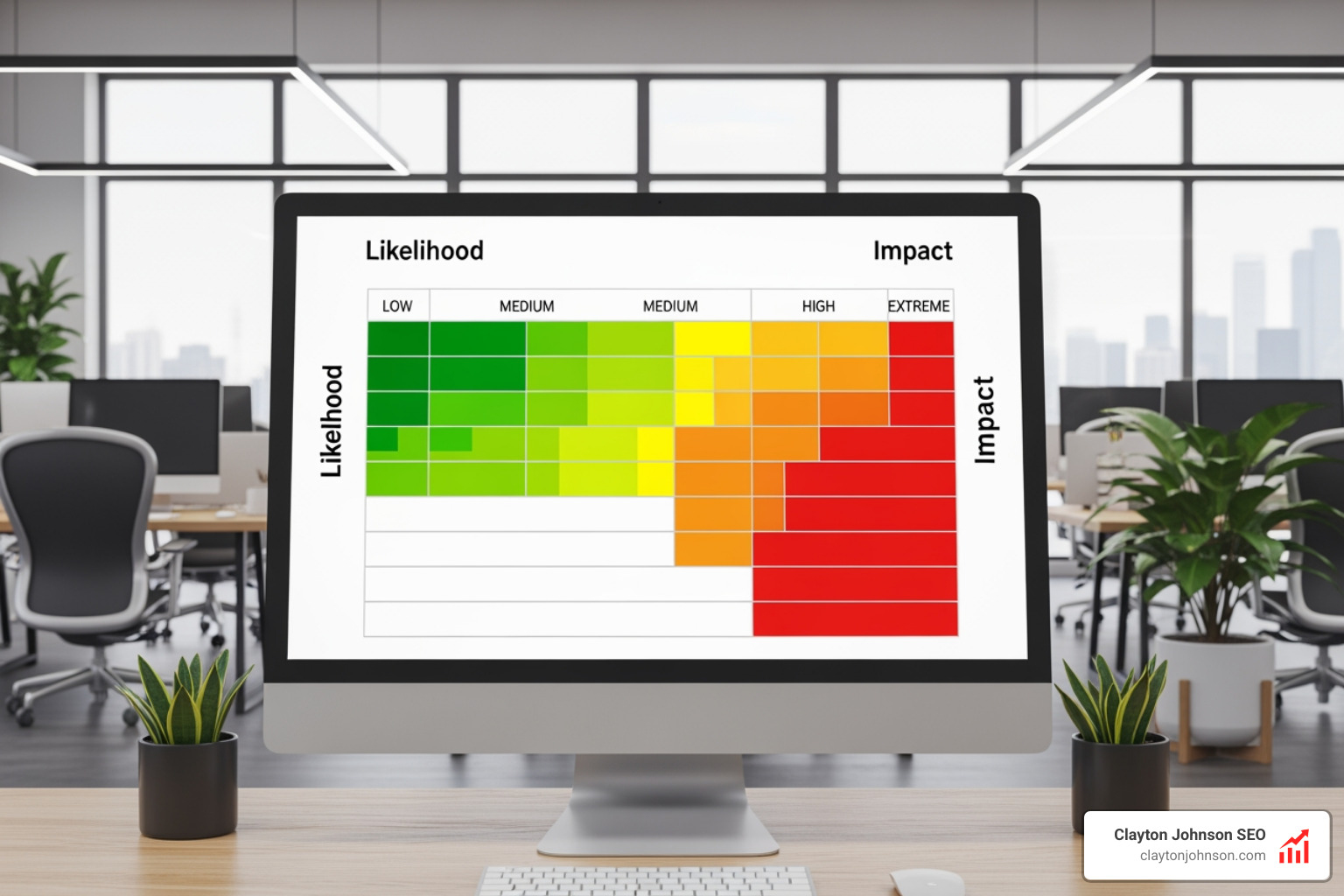

Scaling isn’t just about reacting; it’s about predicting. Recent research has shown that we can use computer vision techniques to understand traffic dynamics. By analyzing “congestion pockets” in 3D spatiotemporal maps, we can represent complex network behavior with incredible accuracy.

Our team uses analytics & data to find these patterns in your digital traffic. Just as researchers found that 95% of traffic shape variations can be represented by just 2 base shapes, we look for the “base shapes” of your user behavior to optimize your systems.

Compact Feature Vectors and Dimensionality Reduction

One of the biggest problems in large-scale analysis is the sheer volume of data. Scientific research on shape-based traffic prediction highlights a breakthrough: 99% dimensionality reduction. By reducing a daily feature vector from 9,984 dimensions to just 40, researchers achieved a 97% reduction in complexity while maintaining 93% classification accuracy. This means we can process massive amounts of data (millions of points per day) in a fraction of the time it used to take.
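As a quick check on the headline figure, the reduction implied by the two dimension counts quoted above works out as follows (this uses only those two numbers and says nothing about how the separate complexity figure was measured):

```latex
1 - \frac{40}{9984} \approx 1 - 0.0040 = 0.996 \quad (\text{about } 99.6\%)
```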

Predicting Congestion with Statistical Shape Models

Using these compact vectors, we can apply Statistical Shape Models (SSM) to predict travel times and system delays. In urban studies, this method achieved an 8% Mean Absolute Percentage Error (MAPE), a 44% improvement over traditional consensus methods. For your business, this means predicting exactly when your API will hit a bottleneck and scaling before the user experiences a lag.
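For readers unfamiliar with the metric, MAPE is the average of the absolute errors expressed as a percentage of the actual values. A minimal sketch follows; the function name `mape` and the sample response times are made up for illustration and are not data from the cited research.

```python
def mape(actual: list, predicted: list) -> float:
    """Mean Absolute Percentage Error, in percent."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


# Illustrative response times in milliseconds (invented for this example).
actual_ms = [10.0, 20.0, 40.0]
predicted_ms = [11.0, 18.0, 40.0]
score = mape(actual_ms, predicted_ms)
```

Lower is better: a forecast with an 8% MAPE is, on average, off by 8% of the true value.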

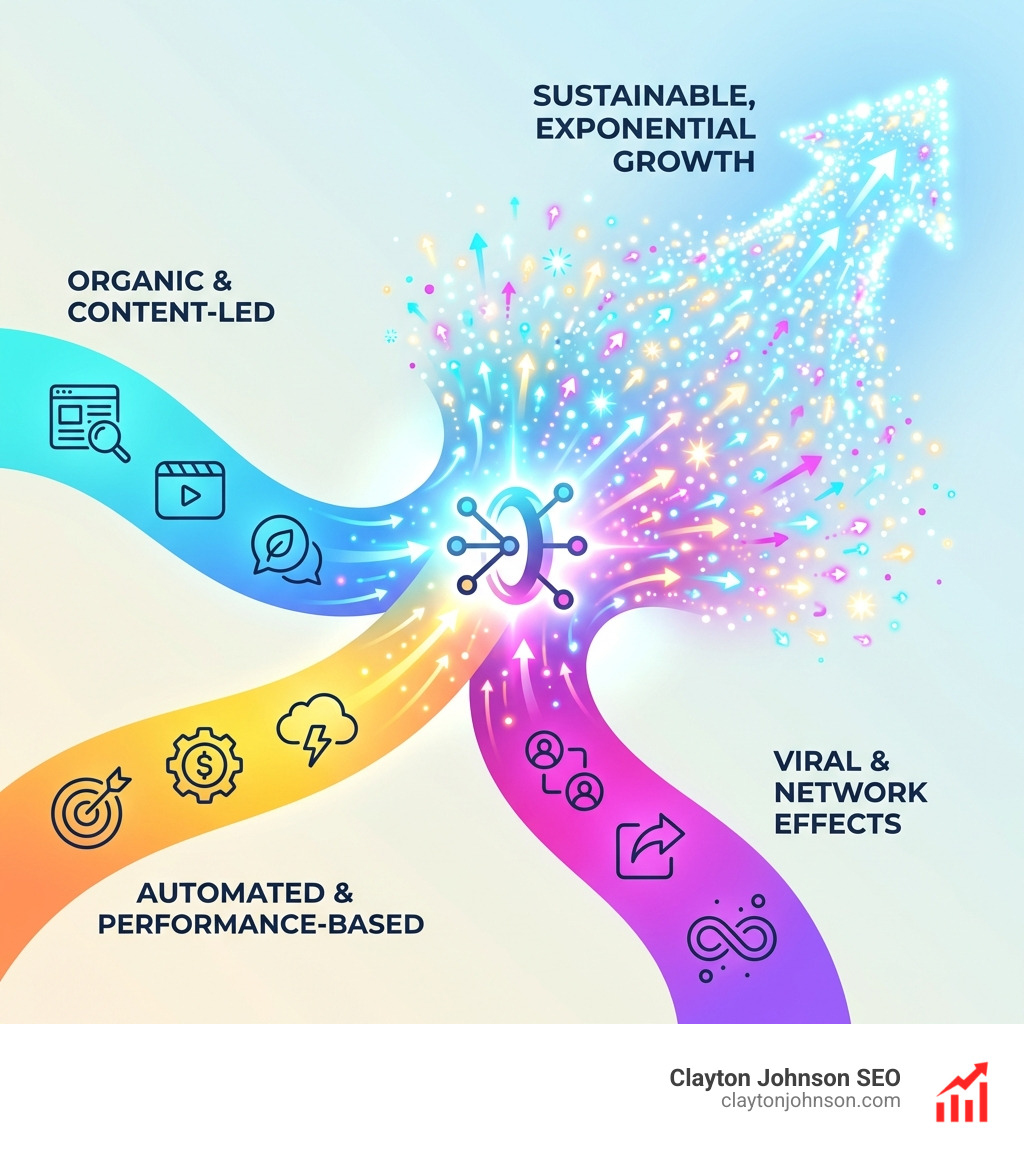

Marketing Frameworks for Scalable Traffic Strategies

You can have the most resilient infrastructure in the world, but it doesn’t matter if no one is visiting. At Clayton Johnson, we focus on the digital marketing pillars that ensure your growth is sustainable and diversified.

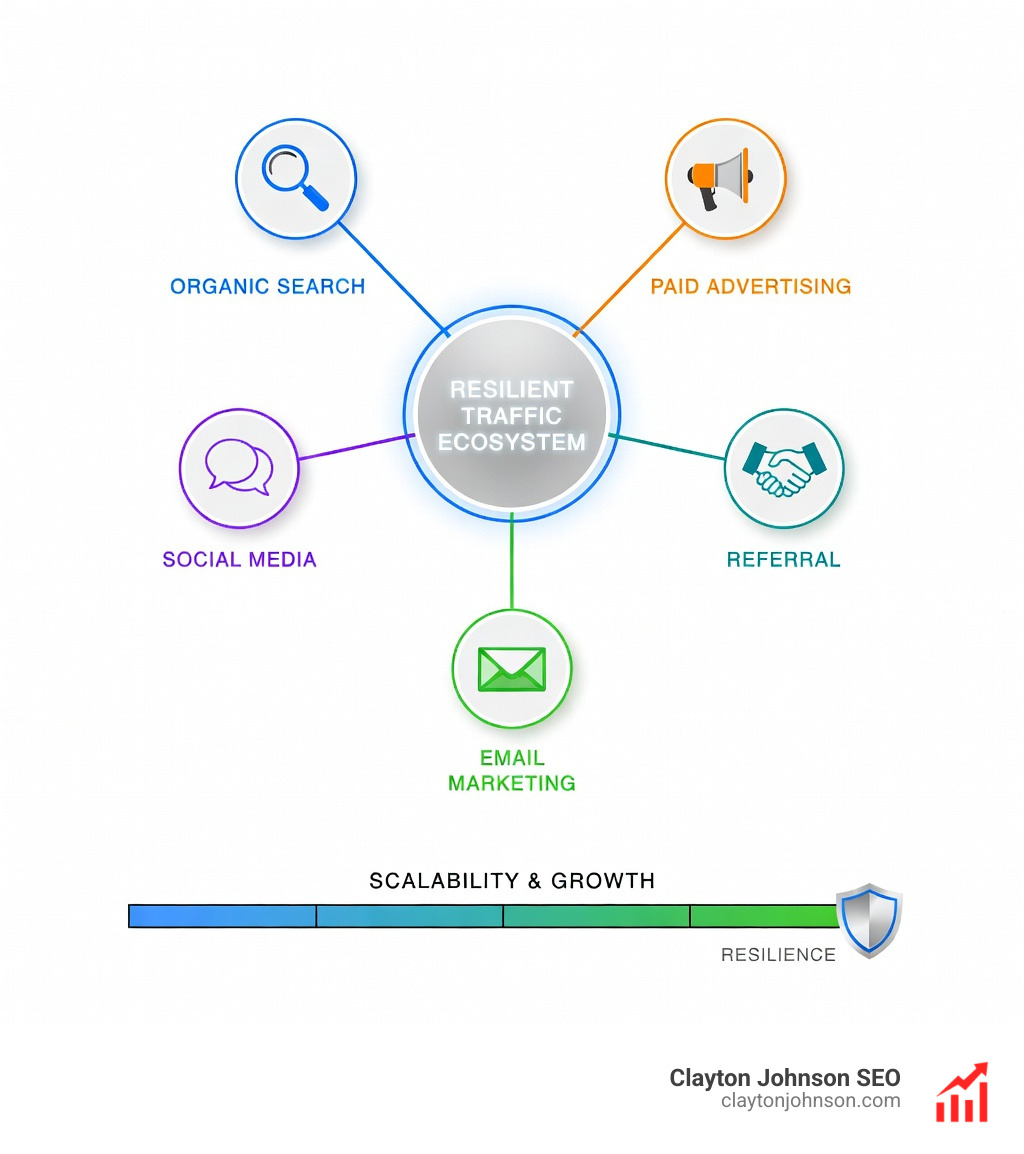

Diversifying Channels to Avoid Single-Source Dependency

Relying on a single traffic source—like just Google Ads or just one social platform—is risky. If an algorithm changes, your business could vanish overnight. Diversifying traffic sources is essential for long-term stability.

- Direct Traffic: Built through strong brand identity and memorable URLs.

- Referral Traffic: Generated through partnerships and guest blogging on high-authority sites.

- Organic Search: The “slow-burn” engine that provides the highest ROI over time.

- Paid Social and Retargeting: Quick ways to scale, provided you monitor conversion rates closely.

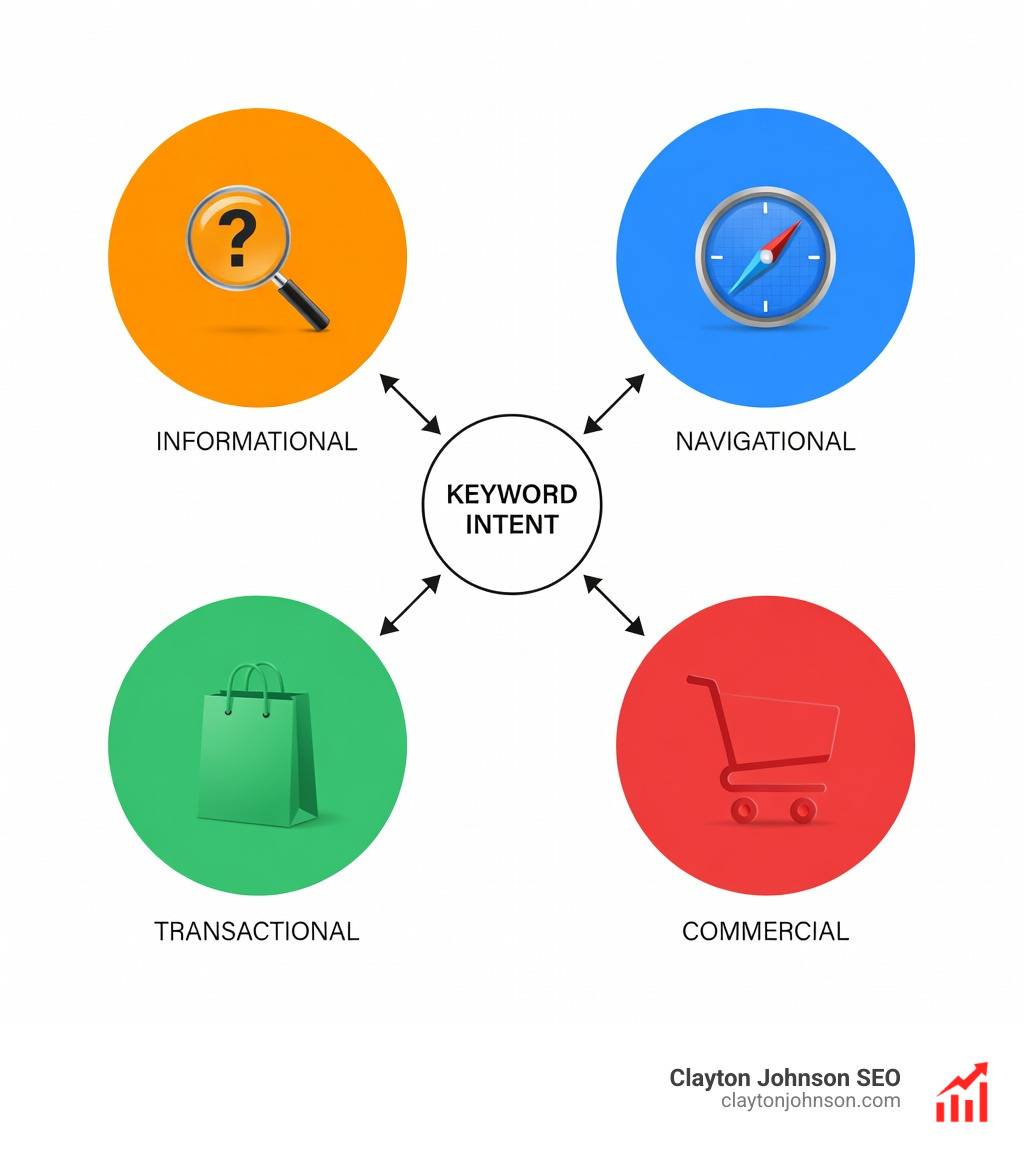

Scaling Organic Reach with Long-Tail SEO and Linkable Content

To scale organic traffic without a massive budget, we focus on long-tail keywords. These are more specific, less competitive, and often have higher intent. We also create “linkable assets”—reports, infographics, or tools—that other sites naturally want to reference. This builds your domain authority and drives consistent referral traffic.

Frequently Asked Questions about Scalable Traffic Strategies

What is the difference between horizontal and vertical scaling?

Vertical scaling (scaling “up”) involves adding more power (RAM, CPU) to your existing server. Horizontal scaling (scaling “out”) involves adding more servers to your pool. Horizontal scaling is preferred for modern systems because it offers better redundancy and virtually limitless growth.

Why are stateless services essential for high-traffic APIs?

Stateless services don’t store user session data on the server itself. This means any server in a cluster can handle any incoming request. This is vital for load balancing and auto-scaling, as it allows traffic to be shifted between servers seamlessly without interrupting the user experience.

How do read replicas help overcome database bottlenecks?

In most applications, users read data far more often than they write it. Read replicas are copies of your primary database that handle “read” (SELECT) queries. By offloading these queries to replicas, you free up the primary database to handle “writes” (INSERT, UPDATE, DELETE), significantly improving overall performance.

Conclusion

Building scalable traffic strategies is not a one-time task; it’s an ongoing commitment to excellence in both architecture and marketing. From the initial API design to the advanced analytics that predict your next traffic spike, every layer must be built for growth.

At Clayton Johnson, we help founders and marketing leaders in Minneapolis and beyond navigate this complexity. Whether you need a robust SEO strategy, a more efficient content system, or a complete growth framework, we provide the tools and expertise to ensure your road to high performance is clear.

Don’t wait for your systems to break. Start building your scalable future today.