Beyond the Hype: A Guide to Real-World Enterprise AI Tools

Enterprise AI Tools Are Reshaping How Businesses Operate

Enterprise AI tools are software platforms that help organizations automate workflows, analyze data, and build intelligent applications at scale. Here are the most widely adopted categories:

- Productivity AI – Microsoft 365 Copilot, Google Gemini Enterprise

- Customer Support AI – Salesforce Einstein, Intercom, Ada

- Developer Tools – GitHub Copilot, JetBrains AI Assistant

- Data and Analytics – Power BI Copilot, Databricks, DataRobot

- Content and Marketing – Writer AI, Jasper, Copy.ai

- Security AI – CrowdStrike Falcon, Darktrace, Splunk AI

- Workflow Automation – Workato, MuleSoft, Automation Anywhere

- Specialized Platforms – Vertex AI, Cohere, C3 AI

The gap between AI early adopters and everyone else is growing fast. According to OpenAI’s enterprise research, frontier firms use AI at twice the intensity of the median organization — and that gap is compounding.

Project data shows where the effort is going. An analysis of more than 530 real enterprise generative AI projects found that 49% involve customer support. Customer issue resolution is the single most common application, appearing in 35% of projects, followed by marketing content creation, IT software development, and R&D prototyping.

Yet most organizations are still figuring out which tools actually deliver results — and which ones just generate demos that fall apart in production.

The challenge is not a shortage of options. It is knowing what to build on, what to buy, and what to avoid entirely.

I’m Clayton Johnson, an SEO and growth strategist working at the intersection of AI-augmented workflows, content architecture, and scalable marketing systems. Evaluating enterprise AI tools for real operational leverage is central to how I help founders and marketing leaders build structured growth engines. In this guide, I break down the platforms, infrastructure requirements, and real-world ROI evidence you need to make a confident decision.

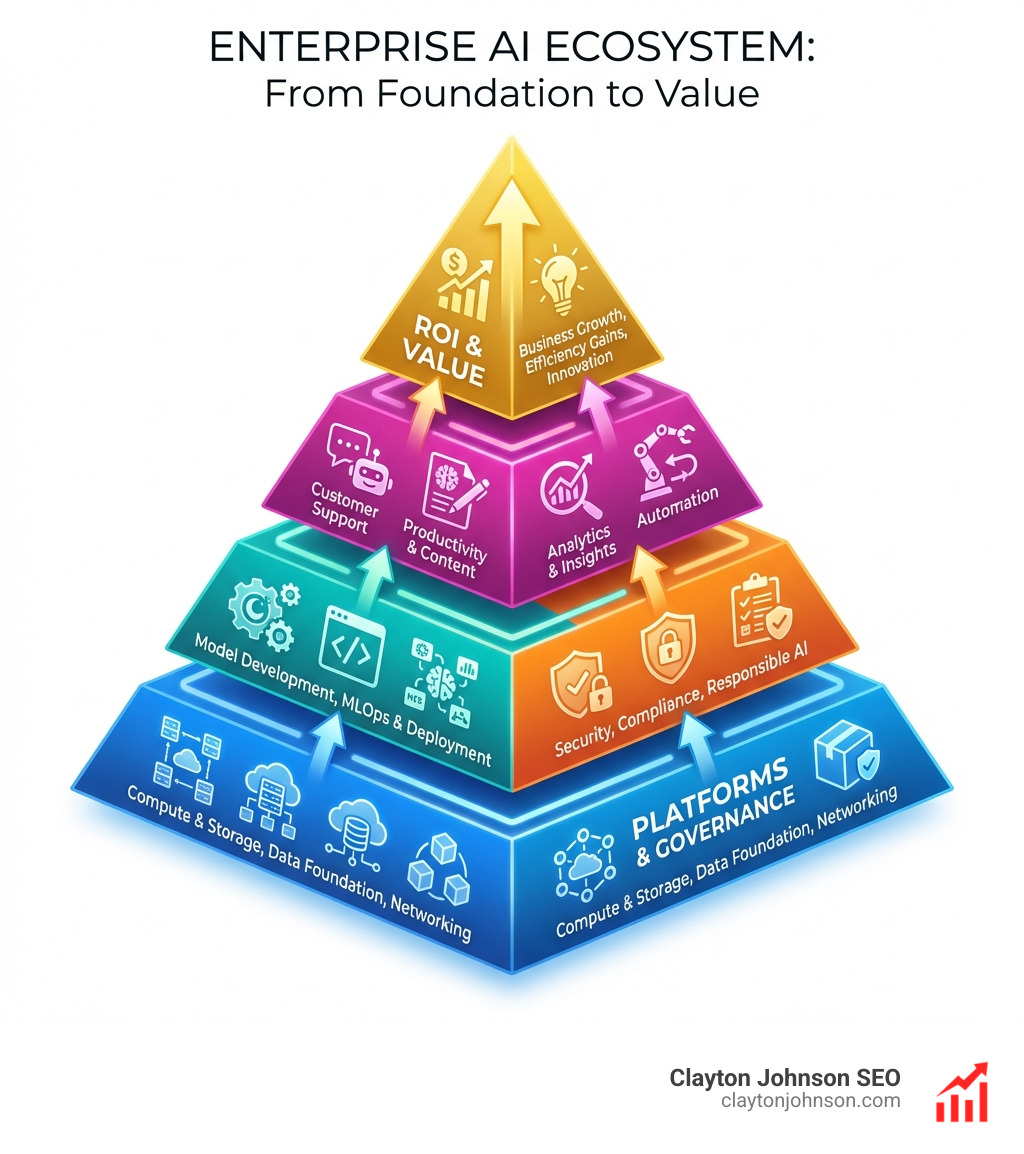

The Infrastructure of Successful Enterprise AI Tools

Before we can talk about the flashy applications that generate poetry or code, we have to talk about the “plumbing.” Successfully deploying enterprise AI tools requires more than just a subscription to a chatbot. It requires a robust backend that ensures data is clean, models are reliable, and security is airtight.

Many organizations in the Minneapolis area and beyond start by building data engineering pipelines. These pipelines handle the heavy lifting of moving data—whether through streaming or batch processing—into environments like data warehouses or a data mesh. Without this foundation, your AI is essentially a genius with no memory.

To keep things running smoothly, we look to established AI infrastructure best practices, starting with MLOps and LLMOps (Machine Learning Operations and Large Language Model Operations). Think of these as the factory assembly lines for AI: they automate the lifecycle of a model from training to deployment, so that updates don’t break the system.

A central model registry is another “must-have.” It acts as a library where every version of an AI model is stored, tracked, and governed. This prevents different departments from accidentally using outdated or unapproved versions of a tool.
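
To make the registry idea concrete, here is a minimal in-memory sketch in Python. It is an illustration only, not how any particular vendor implements a registry. The class names, the `approved` flag, and the artifact paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelVersion:
    name: str
    version: int
    artifact_uri: str        # hypothetical location of the stored model file
    approved: bool = False   # flipped only after a governance review


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)  # model name -> list of ModelVersion

    def register(self, name: str, artifact_uri: str) -> ModelVersion:
        """Store a new version; numbering starts at 1 and increases by 1."""
        history = self.versions.setdefault(name, [])
        model_version = ModelVersion(name, len(history) + 1, artifact_uri)
        history.append(model_version)
        return model_version

    def approve(self, name: str, version: int) -> None:
        """Mark one stored version as approved for use."""
        self.versions[name][version - 1].approved = True

    def latest_approved(self, name: str) -> ModelVersion:
        """Return the newest approved version, or raise if none is approved."""
        for model_version in reversed(self.versions.get(name, [])):
            if model_version.approved:
                return model_version
        raise LookupError(f"no approved version of {name!r}")
```

If two versions of a hypothetical `support-bot` model are registered and only the first is approved, `latest_approved("support-bot")` returns version 1. Departments that always ask the registry for the latest approved version cannot pick up an unreviewed update by accident.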

Centralized Data Governance

One of the biggest hurdles we see is “messy data.” When information is scattered across different silos, AI can’t find what it needs. AI-based analytics makes sense of messy data by relying on data catalogs and centralized governance, which together create a map of where everything lives.

A data mesh approach allows different business units to own their data while still making it accessible for a centralized AI platform. This ensures secure retrieval, where only authorized users (and bots) can access sensitive information.

Real-Time Monitoring and Reliability

AI models aren’t “set it and forget it.” They can suffer from “drift,” where their performance degrades over time, or “hallucinations,” where they confidently state something completely false.

To combat this, we advocate keeping humans in the loop. Real-time monitoring tools continuously check model outputs for accuracy and relevance. If a model starts acting up, a human is alerted to step in and course-correct.
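
As a rough illustration of that alerting logic, the sketch below tracks accuracy over a rolling window of graded responses and flags the model for human review when it falls too far below a baseline. The class name, the 5-point tolerance, and the window size are assumptions chosen for the example, and real monitoring tools use richer signals than a single accuracy number.

```python
from collections import deque


class DriftMonitor:
    """Tracks rolling accuracy and flags when it drops below a baseline."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline            # accuracy measured at deployment time
        self.tolerance = tolerance          # allowed drop before a human is alerted
        self.results = deque(maxlen=window) # keeps only the most recent outcomes

    def record(self, correct: bool) -> None:
        """Record whether one graded response was correct."""
        self.results.append(1 if correct else 0)

    def rolling_accuracy(self) -> float:
        """Accuracy over the window; falls back to the baseline when empty."""
        if not self.results:
            return self.baseline
        return sum(self.results) / len(self.results)

    def needs_human_review(self) -> bool:
        """True when recent accuracy is below baseline minus tolerance."""
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

The point of the window is that old successes cannot mask a recent decline: only the latest outcomes count toward the decision to alert someone.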

RAG vs. Model Fine-Tuning: Which One Do You Need?

When tailoring enterprise AI tools to your specific business, you generally have two paths: Retrieval-Augmented Generation (RAG) or fine-tuning.

| Feature | Retrieval-Augmented Generation (RAG) | Model Fine-Tuning |
|---|---|---|
| Primary Goal | Grounding the AI in your latest internal data. | Teaching the AI a specific style or deep domain logic. |
| Speed to Deploy | Very Fast (Days). | Slow (Weeks/Months). |
| Cost | Lower (Uses existing models). | Higher (Requires significant compute). |
| Data Freshness | Real-time (Connects to live docs). | Static (Requires retraining to update). |
| Use Case | Customer support bots, internal knowledge bases. | Specialized medical or legal reasoning. |
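
The RAG column is easier to picture with a small sketch. The Python below retrieves the most relevant internal documents for a question and places them into the prompt, which is the core of the pattern. It makes two simplifying assumptions: retrieval is plain word overlap rather than the vector search a production system would use, and the final call to a language model is left out. The function names are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the question."""
    question_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, documents: list[str], k: int = 2) -> str:
    """Ground the question in retrieved context before it reaches a model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

Because the documents are looked up at question time, updating an answer means editing a document, not retraining a model. That is where the speed and freshness advantages in the table come from.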

Leading Platforms for Security and Scalability

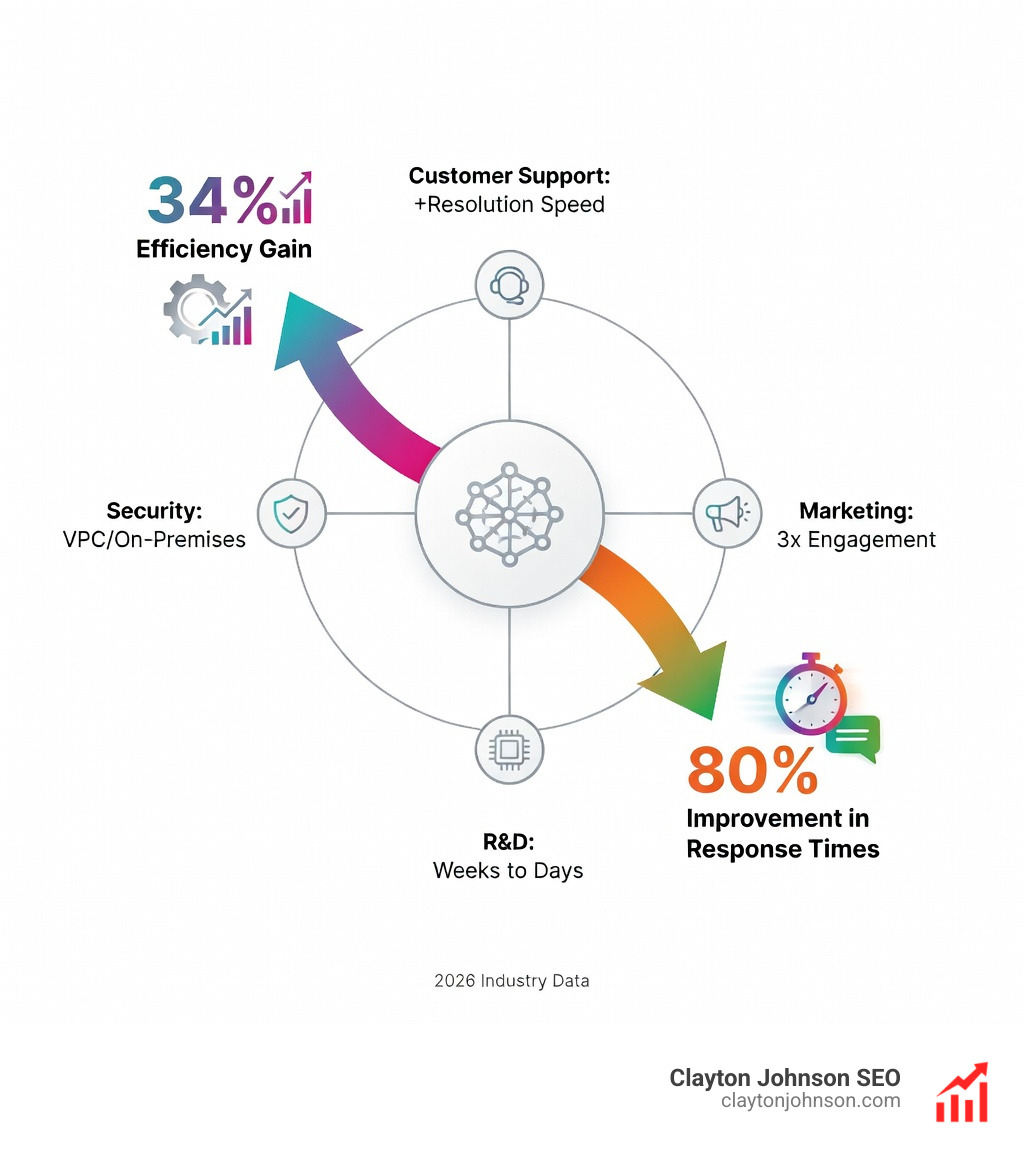

When selecting a platform, “security” isn’t just a checkbox; it’s the whole page. Leading enterprise AI tools offer multi-layered protection, often allowing companies to deploy within a Virtual Private Cloud (VPC) or even on-premises. This ensures that your proprietary data never leaves your controlled environment to train someone else’s model.

Enterprise AI Tools for Global Scale

Google’s Vertex AI is a heavyweight in this space. It provides access to Google’s latest Gemini models, which are built for multimodal processing. This means they can understand and generate not just text, but images, video, and code.

Vertex AI Studio allows developers to prototype quickly, testing how these models handle complex reasoning tasks. For a large organization, this scalability is vital—you can move from a small pilot to a global rollout without changing your underlying tech stack.

Customization and Business Flexibility

While Google offers the “all-in-one” cloud experience, platforms like Cohere focus on being “model-agnostic” and business-first. Cohere allows for deep customization, letting you train models on your proprietary data to create a unique competitive advantage. We call this building an AI moat that actually holds water.

Cohere’s Command models are particularly popular for their multilingual support, covering dozens of languages for global communication. On the other hand, C3 AI takes a different approach by offering over 40 turnkey applications. These are pre-built for specific industries like manufacturing or financial services, allowing companies to see results almost immediately without building from scratch.

High-Impact Applications and Business ROI

The most successful enterprise AI tools aren’t just toys; they are ROI engines. We’ve seen that smart integration into existing workflows is the key to measurable success.

Top Generative Enterprise AI Tools by Department

The impact varies by department, but the results are consistently impressive:

- Customer Support: This is the undisputed champion of AI adoption. Take Klarna as an example: in its first month, its AI assistant handled the workload of 700 full-time support agents, resolving two-thirds of all customer chats.

- Marketing: Teams are using AI marketing systems to scale content creation. NC Fusion, a nonprofit, used AI to reduce email drafting time from 60 minutes to just 10, leading to a 3x increase in customer engagement.

- R&D and Product Design: In biotechnology, companies are using generative models to prototype novel proteins. What used to take months of lab work can now be narrowed down in weeks via simulation.

Measurable Impact and Case Evidence

The numbers don’t lie. Telstra, the Australian telecom giant, introduced AI tools that helped agents summarize past interactions and access account details instantly. This led to a 20% reduction in follow-up contacts, as issues were resolved correctly the first time.

In the public sector, Covered California used AI for document verification. They saw their verification rate jump from 30% to a staggering 84%. This is the essence of assessing LLM scalability for the enterprise: find the repetitive, high-volume tasks and automate them to free up human talent for more complex work.

Low-Code to Pro-Code: The Microsoft AI Ecosystem

Microsoft has positioned itself as the “operating system” for AI. Their ecosystem ranges from tools that anyone can use to deep developer frameworks. The core of this is Microsoft 365 Copilot, which lives inside the apps your team already uses—Word, Excel, and Teams. Because it operates within the Microsoft Graph, it has “tenant context,” meaning it knows about your emails, meetings, and files while maintaining strict security.

For those looking to build more than just a chat assistant, Azure AI Search provides the “knowledge base” for these systems, using vector and hybrid search to find the most relevant answer in a sea of data.
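
Hybrid search has to merge two ranked lists, one from keyword matching and one from vector similarity. Reciprocal Rank Fusion (RRF) is one widely used way to do that merge, and the sketch below shows the idea in plain Python. It does not call the Azure AI Search API. The function name and document IDs are hypothetical, and `k = 60` is a commonly used default constant, taken here as an assumption.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by multiple methods rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


keyword_results = ["doc_a", "doc_b", "doc_c"]  # hypothetical keyword ranking
vector_results = ["doc_b", "doc_c", "doc_a"]   # hypothetical vector ranking
fused = reciprocal_rank_fusion([keyword_results, vector_results])
# fused == ["doc_b", "doc_a", "doc_c"]
```

Here `doc_b` wins because it ranks near the top of both lists, even though it is first in only one of them. Fusion works on ranks rather than raw scores, so the two search methods do not need comparable scoring scales.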

Agent Development and Orchestration

The next frontier is “Agents”—AI that doesn’t just talk, but actually does things. Microsoft Copilot Studio is a low-code environment where you can build these agents. You might create a “declarative agent” that follows a specific set of instructions to process invoices or manage a calendar.

For more complex needs, the Foundry Agent Service provides a pro-code environment for orchestrating multi-agent systems. This is the architect’s approach to AI growth: moving from a single bot to a team of specialized agents that can collaborate on a project.

Developer Tools for Production-Grade AI

Developers are the ones building the future of enterprise AI tools, and they need the right gear. GitHub Copilot has become the standard for AI-assisted coding, with 77% of developers reporting increased productivity.

Beyond just writing code, tools like AI Builder allow for advanced document processing. You can train a model to extract specific data from thousands of PDFs, such as price lists or contract terms, and feed it directly into your business systems. This is why developers need AI tools: they eliminate the “grunt work” of coding and data entry.

Governance, Risk, and the DIY vs. Turnkey Debate

As the saying goes, “with great power comes great responsibility” (and potentially great lawsuits). Mastering enterprise AI governance and regulatory standards is non-negotiable for any serious organization. This involves network isolation, identity management through tools like Microsoft Entra ID, and strict Role-Based Access Control (RBAC).
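
At its simplest, RBAC is a lookup from role to permitted actions, checked before the AI is allowed to touch any data. The Python sketch below illustrates the idea. The roles, permission names, and functions are hypothetical, and in practice the role assignments would come from an identity provider rather than a hard-coded dictionary.

```python
# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"read_reports"},
    "support_agent": {"read_reports", "read_customer_records"},
    "admin": {"read_reports", "read_customer_records", "manage_models"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Unknown roles get no permissions (deny by default)."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def fetch_for_ai(role: str, permission: str, loader):
    """Run the data loader only if the caller's role grants the permission."""
    if not is_allowed(role, permission):
        raise PermissionError(f"role {role!r} lacks {permission!r}")
    return loader()
```

Putting the check in front of the data loader means an AI assistant acting on behalf of an analyst can never retrieve customer records, no matter how the question is phrased.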

The Complexity of DIY Platforms

There is a tempting urge to “build it ourselves.” However, building a custom enterprise AI platform is astronomically complex. Some experts estimate that a DIY platform requires managing nearly 10^13 (that’s 10 trillion) API connections.

DIY platforms often suffer from “brittleness.” One small update to an underlying library can break the entire system. This leads to common AI selection mistakes, where companies spend millions on a custom build only to realize they’ve created a mountain of technical debt. Turnkey solutions, while potentially less flexible, offer the stability and maintenance that internal teams often can’t sustain.

Compliance and Ethical Implementation

Finally, we must address ethics. Charting the course for ethical AI implementation means ensuring your AI isn’t biased and that it respects data sovereignty. This is especially important in regulated industries like healthcare or finance. Audit logging—keeping a detailed record of everything the AI does—is essential for compliance.
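
A minimal sketch of audit logging, assuming a simple append-only file of JSON lines, might look like the Python below. The field names and function names are hypothetical, and regulated environments typically add tamper protection and retention policies on top of something like this.

```python
import json
from datetime import datetime, timezone


def make_audit_entry(user: str, model: str, action: str, prompt: str) -> str:
    """Serialize one AI interaction as a single JSON line."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        "action": action,
        # Record the prompt length rather than its text, so the log
        # itself does not become a store of sensitive data.
        "prompt_chars": len(prompt),
    }
    return json.dumps(entry, sort_keys=True)


def append_audit_entry(path: str, line: str) -> None:
    """Append the entry to the log file; existing records are not rewritten."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(line + "\n")
```

One record per line keeps the log easy to search and easy to hand to an auditor, and the UTC timestamp avoids ambiguity when teams work across time zones.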

Frequently Asked Questions about Enterprise AI

What are the core components of enterprise AI infrastructure?

The core components include data engineering pipelines (to move and clean data), MLOps/LLMOps (to manage the model lifecycle), a central model registry (for version control), and robust monitoring tools to prevent drift and hallucinations.

How do enterprise AI tools handle data security and privacy?

Leading platforms use multi-layered security, including network isolation (VPCs), data encryption, and identity management (like Entra ID). Most importantly, enterprise-grade tools typically commit that your data will not be used to train public models; confirm this in each vendor’s terms before you deploy.

What is the difference between DIY AI platforms and turnkey solutions?

DIY platforms are built in-house using open-source tools and cloud microservices. They offer maximum flexibility but come with extreme complexity and risk of failure. Turnkey solutions are pre-built by vendors (like C3 AI or Microsoft) and are designed for rapid deployment and reliability.

Conclusion

The era of AI experimentation is over. We are now in the era of enterprise AI tools that drive compounding growth and operational excellence. Whether you are looking to automate your customer support like Klarna or scale your marketing like NC Fusion, the key is to start with a structured strategy.

At Clayton Johnson SEO and through our work with Demandflow.ai, we believe that clarity leads to structure, and structure leads to leverage. Most companies don’t lack tactics; they lack a growth operating system for founders and marketing leaders.

By combining actionable strategic frameworks with AI-augmented workflows and taxonomy-driven SEO systems, we help businesses in Minneapolis and beyond build infrastructure that doesn’t just work—it scales. Don’t let your AI strategy be a collection of disconnected bots. Build a system that turns intelligence into a measurable competitive advantage.