How to Use AI for Voice Search Like a Pro

Where Voice Search Meets AI: What It Means and Why It Matters

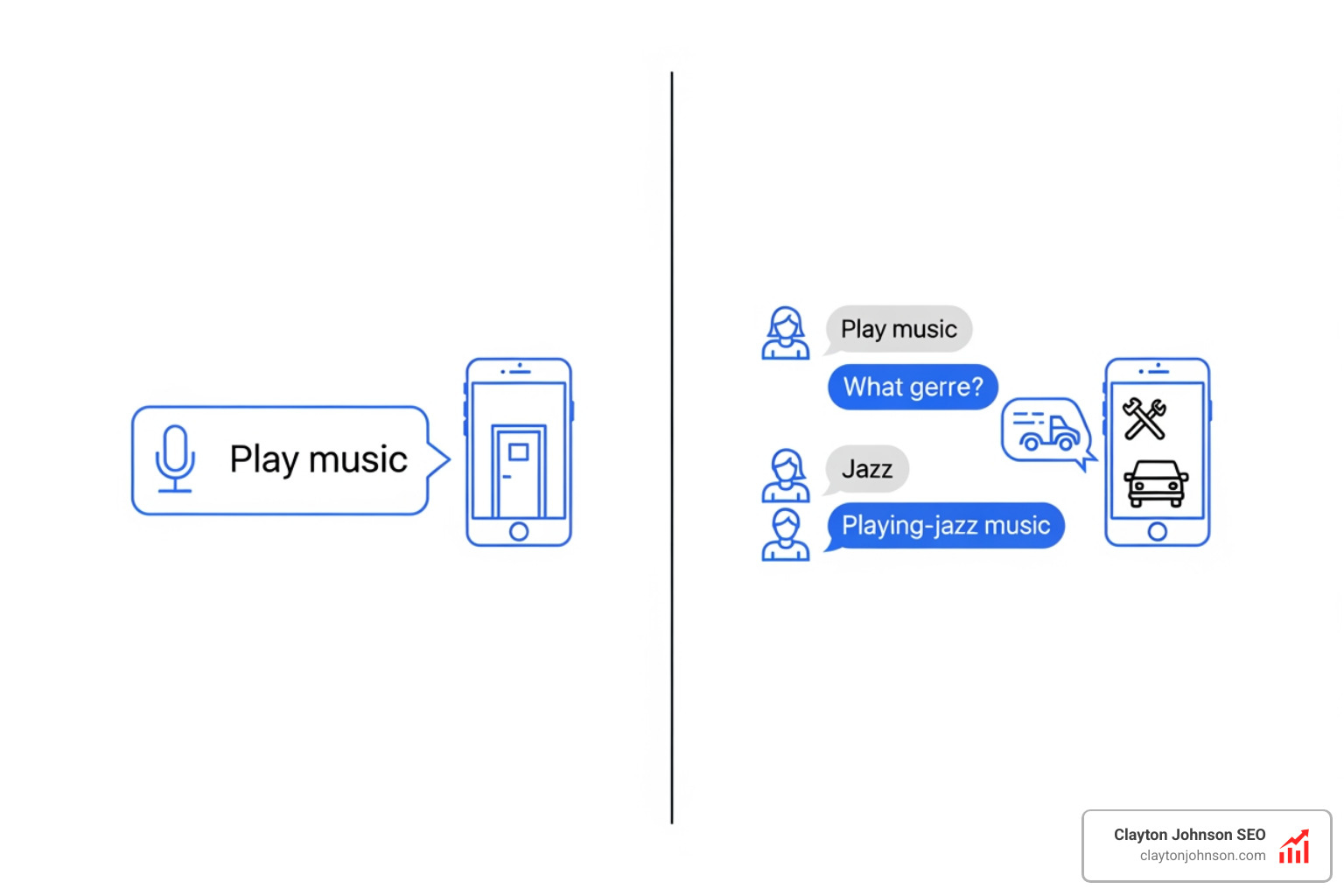

Where voice search meets AI is the point where spoken language becomes a real-time, intelligent conversation — not just a keyword lookup. Here is a quick overview of what that means in practice:

- Voice search lets users speak queries instead of typing them

- AI integration means the system understands intent, context, and follow-up questions — not just words

- The result is a conversational search experience powered by Large Language Models (LLMs), Natural Language Processing (NLP), and multimodal inputs like camera and voice combined

- Key platforms include Google AI Mode with Search Live, Gemini Live, Amazon Alexa, and Apple Siri

- Why it matters for SEO: over 4 billion users conduct voice searches globally, and nearly half of U.S. consumers use voice assistants to find local services

This shift changes how people find information — and how businesses need to show up in search.

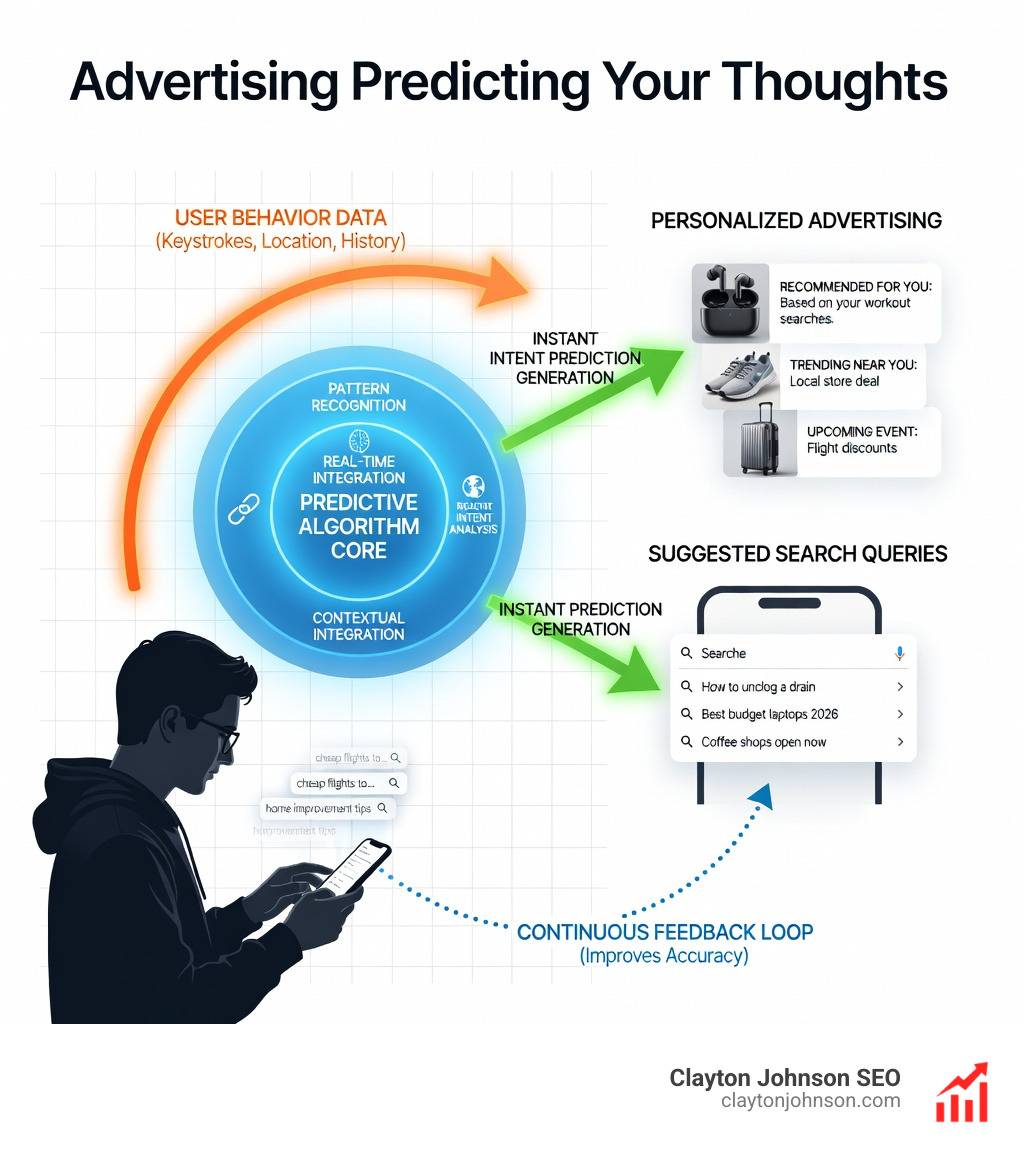

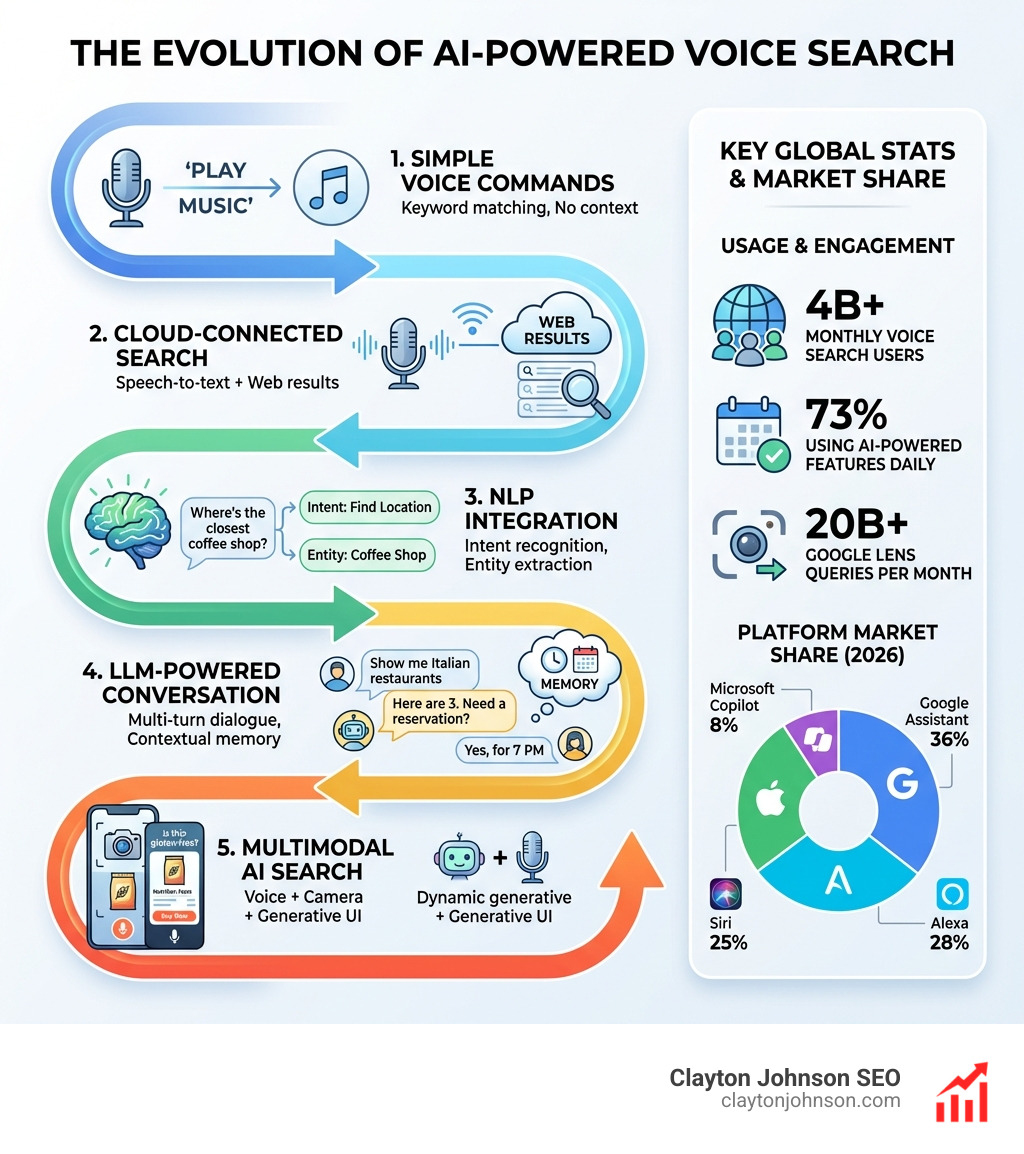

Voice search started as a simple dictation tool. You spoke, it transcribed, it searched. Today, AI has transformed it into something far more dynamic. Systems like Google’s Search Live can hold back-and-forth conversations, handle multi-part questions, and even process what your camera sees in real time. Google Lens alone generates over 20 billion queries per month. The gap between typing a query and having a genuine dialogue with a search engine has never been smaller — and it is closing fast.

The challenge is that most businesses and marketers have not caught up. Content is still written for keyboards, not conversations. SEO strategies still target typed queries, not spoken intent. That disconnect creates a real visibility gap — and a real opportunity for those who move first.

I’m Clayton Johnson, an SEO strategist who has spent years building structured, AI-augmented growth systems at the intersection of search architecture and emerging technology, including practical voice and AI search work for founders and marketing leaders. In this guide, I’ll walk you through what you need to understand and act on this shift, from the technical foundations to the SEO strategies that compound over time.

The Evolution of Where Voice Search Meets AI

The journey of voice technology has moved from rigid, command-based systems to fluid, human-like interactions. In the early days, if you didn’t say the exact “wake word” or use a specific phrase, the machine would simply tilt its digital head in confusion. Now, we are in an era of deep conversational awareness.

Natural Language Processing and LLMs

At the heart of this evolution are Large Language Models (LLMs) and advanced Natural Language Processing (NLP). These technologies allow AI to move beyond “matching words” to “understanding meaning.” When we talk about where voice search meets AI, we are talking about a system that understands that “the big orange bridge in San Francisco” refers to the Golden Gate Bridge, even if you never use the formal name.

Google’s Search Live and AI Mode

Google has fundamentally shifted its strategy with the introduction of Search Live capabilities. This feature, born from the Project Astra research at Google DeepMind, allows users to engage in a back-and-forth voice conversation. Instead of getting a single list of links, you get a response that feels more like a chat.

One of the most impressive technical feats here is the query fan-out technique. When you ask a complex question, the AI doesn’t just run one search. It breaks your question into several sub-queries, searches for them simultaneously, and then synthesizes the most relevant information into a cohesive audio response.
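The fan-out idea can be sketched in a few lines of Python. This is only an illustration of the pattern (split, search in parallel, combine), not Google's implementation. The `decompose` function, the `TOY_INDEX` data, and the concatenation-based "synthesis" are all hypothetical stand-ins.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-in for a search backend: a tiny in-memory index.
TOY_INDEX = {
    "best time to visit kyoto": "Spring and autumn are the most popular seasons.",
    "kyoto hotel prices": "Mid-range hotels are cheaper outside peak season.",
}


def decompose(question: str) -> list[str]:
    """Hypothetical decomposition step; a real system would use a model here."""
    # Hard-coded for illustration only.
    return ["best time to visit kyoto", "kyoto hotel prices"]


def search(sub_query: str) -> str:
    """Look up one sub-query in the toy index."""
    return TOY_INDEX.get(sub_query, "")


def fan_out(question: str) -> str:
    sub_queries = decompose(question)
    # Run the sub-queries concurrently; map() returns results in input order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(search, sub_queries))
    # "Synthesis" here is plain concatenation of the non-empty results.
    return " ".join(r for r in results if r)


answer = fan_out("When should I go to Kyoto and what will hotels cost?")
print(answer)
```

For content planning, the practical takeaway is that a single spoken question may be answered from several pages, each matching a different sub-query.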

Conversational Fluidity: Gemini Live

Gemini Live represents a massive investment in user experience (UX). It isn’t just about the words; it’s about the delivery. The system now simulates the rhythm of human speech, including natural pauses and even breathing. This level of fluidity allows for “interruption-aware” conversations—you can cut the AI off mid-sentence to clarify a point, and it will adapt instantly without losing the thread of the discussion.

Technical Architecture and Multimodal Capabilities

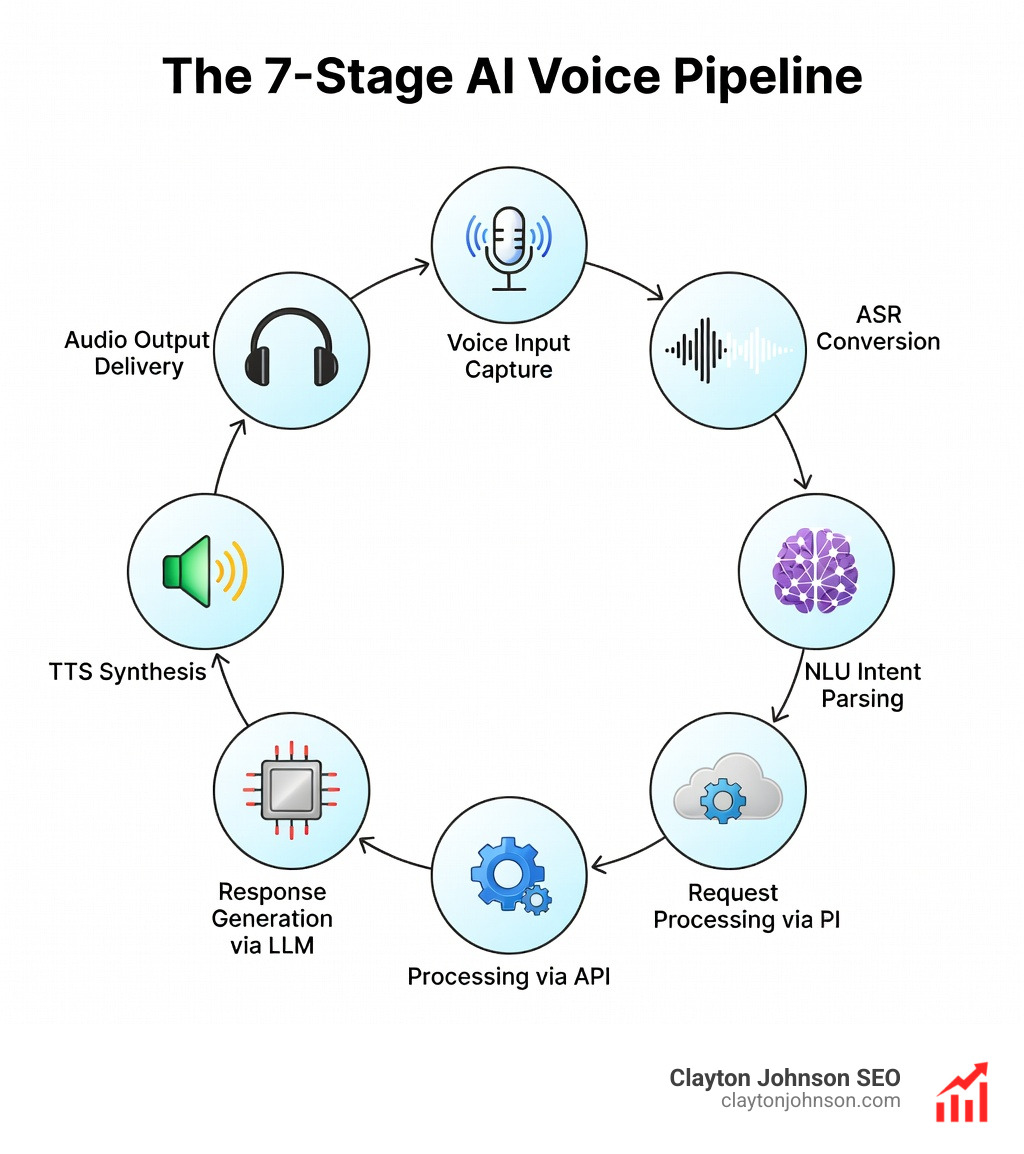

To understand where voice search meets AI as a professional, you have to look under the hood. The “magic” of a talking computer is actually a highly orchestrated pipeline of several distinct technologies.

- Automatic Speech Recognition (ASR): This converts the audio signal of your voice into text. Classical pipelines compute hand-crafted acoustic features such as Mel-frequency cepstral coefficients (MFCCs), while modern ASR systems typically feed spectral features like these into deep neural networks that predict the words spoken.

- Natural Language Understanding (NLU): Once the voice is text, NLU parses the intent. It maps what you said to a specific goal (e.g., “book a flight”) and extracts entities (e.g., “to Minneapolis”).

- Text-to-Speech (TTS): To talk back, the AI uses neural models like Tacotron or WaveNet. These aren’t the robotic voices of the past. Newer systems can also build on neural audio codecs like SoundStream to give the output human-like prosody (rhythm) and timbre (quality).
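To make the NLU step concrete, here is a minimal rule-based sketch that maps a transcript to an intent and extracts a destination entity. Production systems use trained models rather than regular expressions. The intent names, patterns, and the `parse` function are assumptions made up for this example.

```python
import re

# Hypothetical intent patterns; a production NLU component would use a trained model.
INTENT_PATTERNS = {
    "book_flight": re.compile(r"\bbook (?:a |me a )?flight\b", re.IGNORECASE),
    "find_business": re.compile(r"\bnear me\b", re.IGNORECASE),
}

# Treat capitalized words following "to" as a destination (a crude heuristic).
DESTINATION = re.compile(r"\bto ([A-Z][a-zA-Z]+(?: [A-Z][a-zA-Z]+)*)")


def parse(transcript: str) -> dict:
    """Return the first matching intent plus any destination entity found."""
    intent = next(
        (name for name, pattern in INTENT_PATTERNS.items() if pattern.search(transcript)),
        "unknown",
    )
    entities = {}
    match = DESTINATION.search(transcript)
    if match:
        entities["destination"] = match.group(1)
    return {"intent": intent, "entities": entities}


result = parse("Book a flight to Minneapolis")
print(result)
```

The same structure (intent plus entities) is what a voice assistant hands to the next stage, whether that stage is a booking system or a search query.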

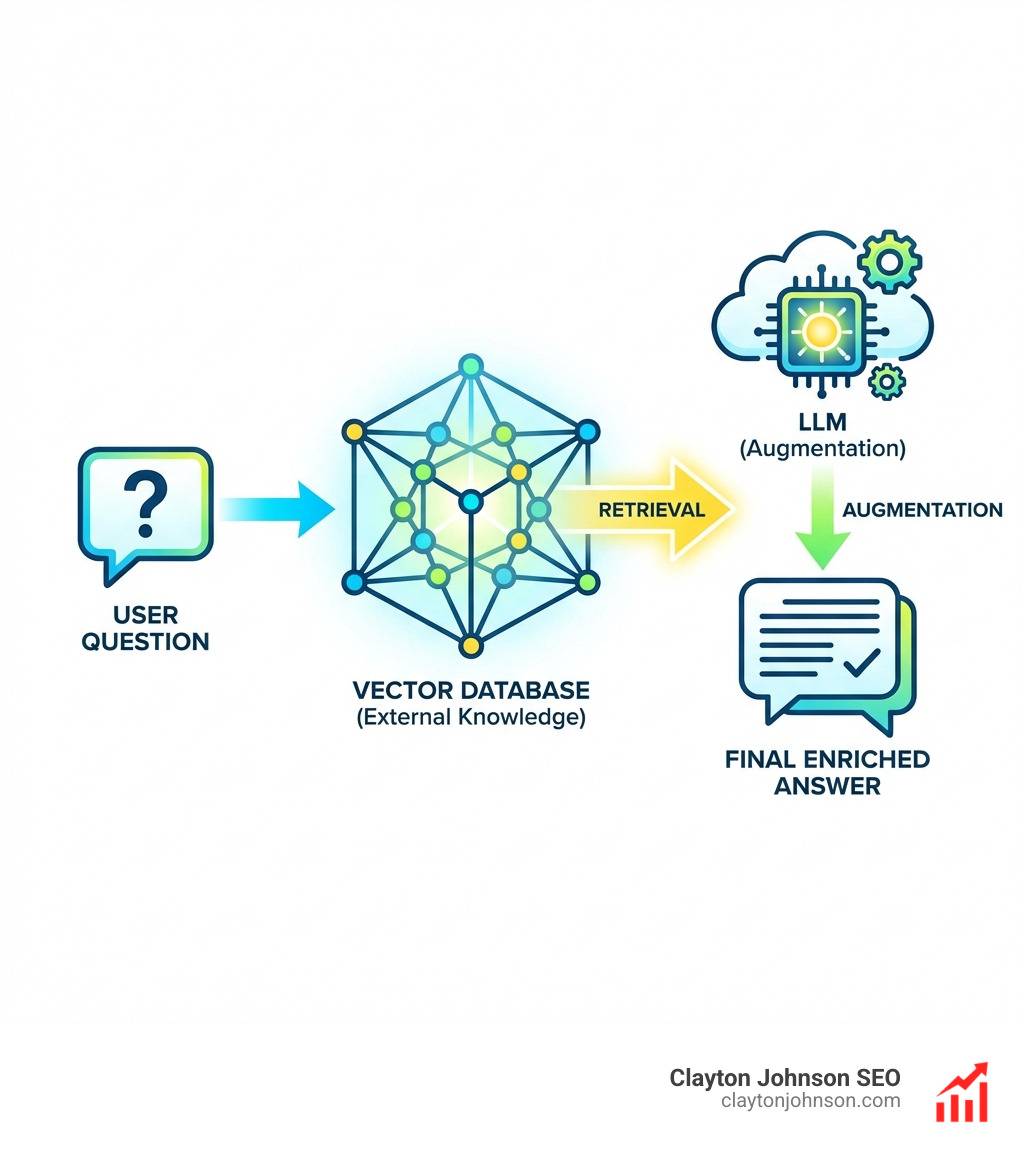

Speech-to-Retrieval (S2R)

Traditional systems often lose information during the “handshake” between speech recognition and the search engine. Speech-to-Retrieval (S2R) research aims to solve this by creating an end-to-end approach. Instead of converting voice to text and then searching, S2R looks at the audio features directly to find the most relevant information, reducing errors and improving the Mean Reciprocal Rank (MRR). MRR averages, across queries, the reciprocal of the rank at which the first correct result appears, so a score of 1.0 means the right answer is always ranked first.
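For readers who want to see the metric itself: Mean Reciprocal Rank is the average, over all queries, of 1 divided by the position of the first correct result. The short sketch below computes it on made-up rankings. The function name and the sample data are illustrative assumptions, not taken from any S2R publication.

```python
def mean_reciprocal_rank(ranked_results, relevant):
    """Average of 1/rank of the first relevant result per query (0 if absent)."""
    total = 0.0
    for results, target in zip(ranked_results, relevant):
        for rank, doc in enumerate(results, start=1):
            if doc == target:
                total += 1.0 / rank
                break
    return total / len(ranked_results)


# Three toy queries; the correct document is "a" each time.
rankings = [["a", "b", "c"], ["b", "a", "c"], ["c", "b", "a"]]
targets = ["a", "a", "a"]

mrr = mean_reciprocal_rank(rankings, targets)
print(round(mrr, 3))  # (1 + 1/2 + 1/3) / 3
```

A system that always ranks the right answer first scores 1.0, and the score drops quickly as the right answer slips down the list.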

Multimodal Search: Where Voice Search Meets AI and Vision

The most exciting frontier is not just voice, but “Voice + Vision.” This is where multimodal AI takes the lead.

- Google Lens Integration: Users can now use Lens to ask questions about what they see. Imagine pointing your camera at a broken bike chain and asking, “How do I fix this?” The AI processes the video frames and your voice simultaneously to provide a tailored tutorial.

- Generative Interfaces: With Gemini 3, we are seeing the rise of “generative UI.” The AI doesn’t just give you a text answer; it can dynamically construct an entire user interface on the fly. If you ask for a travel itinerary, it might build an interactive map and a scheduling widget right there in the search results.

SEO Strategies for the Voice-First Era

If the search engine is now a conversation partner, your SEO strategy must become conversational. You can no longer rely on high-volume, short-tail keywords alone. To win in the era of where voice search meets AI, you need to optimize for how people speak.

1. Target Conversational Keywords

People don’t speak in “keywordese.” They don’t say “Minneapolis SEO agency.” They ask, “Who is the best SEO expert in Minneapolis for small businesses?”

- Focus on the “Who, What, Where, When, Why, and How.”

- Long-tail phrases: Use natural language that mimics a real person’s speech patterns.
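One rough way to audit a keyword list is to flag which queries already read like spoken questions. The heuristic below (starts with a question word and has at least five words) is an assumption chosen for illustration, not an industry standard.

```python
QUESTION_WORDS = {"who", "what", "where", "when", "why", "how"}


def is_conversational(query: str, min_words: int = 5) -> bool:
    """Heuristic: long enough and opens with a question word."""
    words = query.lower().rstrip("?").split()
    return len(words) >= min_words and words[0] in QUESTION_WORDS


queries = [
    "Minneapolis SEO agency",
    "Who is the best SEO expert in Minneapolis for small businesses?",
]

for q in queries:
    print(f"{is_conversational(q)!s:5}  {q}")
```

Queries that come back `False` are candidates for a conversational rewrite or a matching FAQ entry.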

2. Implement FAQ Schema and Featured Snippets

AI assistants often pull their answers directly from “Featured Snippets.” To be the source of that answer, you must provide clear, concise responses to common questions.

- FAQ Schema: Use structured data to tell Google exactly what questions your page answers.

- Clear Formatting: Use bullet points and short paragraphs (40-50 words) that are easy for an AI to read aloud.
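As a sketch of what FAQ structured data looks like, the snippet below builds schema.org `FAQPage` markup from question and answer pairs and prints it as JSON-LD. The helper name `faq_jsonld` and the sample question are made up for this example. The output would normally be placed in a `<script type="application/ld+json">` tag on the page.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "How does AI improve voice search accuracy?",
        "AI-powered voice search uses the context of the whole sentence, "
        "not just individual words, to work out what the user means.",
    ),
])

print(json.dumps(faq, indent=2))
```

Keep each answer text short enough to be read aloud comfortably, in line with the formatting advice above.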

3. Build Authority with Structured Data

At Clayton Johnson SEO, we emphasize that AI SEO strategies require a foundation of trust. Structured data helps Google read and cross-check your business’s “NAP” (Name, Address, Phone number). This is critical for local voice searches like “find a coffee shop near me.”
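Here is a minimal sketch of NAP markup using the schema.org `LocalBusiness` and `PostalAddress` types. Every business detail below is a placeholder invented for the example, and the helper name `local_business_jsonld` is an assumption.

```python
import json


def local_business_jsonld(name, phone, street, city, region, postal_code, country="US"):
    """Build schema.org LocalBusiness markup carrying name, address, and phone."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }


# Placeholder values for illustration only.
business = local_business_jsonld(
    name="Example Coffee Shop",
    phone="+1-612-555-0100",
    street="123 Example St",
    city="Minneapolis",
    region="MN",
    postal_code="55401",
)

print(json.dumps(business, indent=2))
```

Whatever values you publish here should match your other listings character for character, since inconsistent NAP details undermine the trust signal.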

4. Mobile-First and Page Speed

Most voice searches happen on mobile devices while people are on the go. If your site takes five seconds to load, the voice assistant will have moved on to a faster source before your page even registers. Use a mobile-first design and optimize your technical SEO to ensure near-instant delivery.

Overcoming Limitations Where Voice Search Meets AI

Despite the breakthroughs, AI voice systems aren’t perfect. We still face challenges like:

- Recognition errors: Background noise in a busy café can still trip up even the best ASR models, which shows up as a higher Word Error Rate (WER).

- Cultural and Nuance Gaps: AI can struggle with “grey zone” questions involving culture, emotions, or complex legal realities where there isn’t a binary “yes or no” answer.

- Hallucination: Sometimes, AI models confidently state incorrect information. As a business, your content must be factually robust to ensure you don’t become part of a “hallucinated” answer.
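Word Error Rate is simple to compute: count the word-level substitutions, deletions, and insertions needed to turn the recognizer's output into the reference transcript, then divide by the number of words in the reference. The sketch below uses a standard edit-distance table. The sample sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("a" -> "the") and one deletion ("me") over six words.
wer = word_error_rate("find a coffee shop near me", "find the coffee shop near")
print(round(wer, 3))
```

Note that WER can exceed 1.0 when the recognizer inserts many extra words, so it is an error ratio rather than a percentage of words wrong.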

Real-World Applications and Enterprise Use Cases

Voice AI has moved out of the living room and into the boardroom. Across various industries, businesses are using these tools to shave minutes off tasks and improve customer satisfaction.

| Industry | Use Case | Impact |
|---|---|---|
| Healthcare | Nurses using Nuance DAX Copilot for clinical charting | Trims after-shift charting by 35% |
| Enterprise | Telia Nordic contact centers using AI voice agents | 9% reduction in handle time; higher resolution |
| Retail | Oracle MICROS badges for inventory queries | Inventory data returned in under 3 seconds |
| Automotive | BMW Intelligent Personal Assistant | Proactive window opening at parking garages |
| Customer Service | Virtual agents for 24/7 inbound triaging | Reduces wait times and operational costs |

Frequently Asked Questions

How does AI improve voice search accuracy?

AI improves accuracy through “continual learning” and Large Language Models. Unlike old systems that used simple pattern matching, AI-powered voice search understands the context of the entire sentence. It can distinguish between “bank” (a river bank) and “bank” (a financial institution) based on the surrounding words in your query.

What is the difference between a chatbot and a voice assistant?

The primary difference is the input modality and the integration. Chatbots are typically text-based and live on a website. Voice assistants use ASR and TTS to handle spoken language and are often integrated into hardware (like smartphones, smart speakers, or cars) to provide a hands-free, eyes-free experience.

Is voice search data private and secure?

Privacy is a major concern. Several major platforms now offer “on-device processing” for some requests, where the audio is handled on your phone rather than sent to a server. However, users should always review their activity settings (such as Google’s “Web & App Activity”) and manage their voice history to ensure their data is being handled according to their comfort level.

Conclusion

The point where voice search meets AI is more than just a technological trend; it is a fundamental shift in the human-computer interface. We are moving toward a world where keyboards feel “extravagant” for simple tasks and where talking to our devices is the path of least resistance.

At Clayton Johnson SEO, we believe that success in this new era requires more than just chasing the latest “hack.” It requires a structured growth architecture. Our platform, Demandflow.ai, is designed to help founders and marketing leaders build the AI-powered SEO growth systems needed to stay visible as search evolves.

We focus on:

- SEO Content Architecture: Building content that speaks to both humans and AI.

- Authority-Building Ecosystems: Ensuring your brand is the trusted source for voice answers.

- AI-Enhanced Execution: Using the latest multimodal tools to stay ahead of the competition.

Most companies don’t lack tactics—they lack the structure to make those tactics compound. If you’re ready to move beyond basic SEO and build a growth engine that thrives where voice search meets AI, we are here to help you architect that future.