The Magic of User Query Generative Expansion Explained

The Future of Search: Understanding User Query Generative Expansion

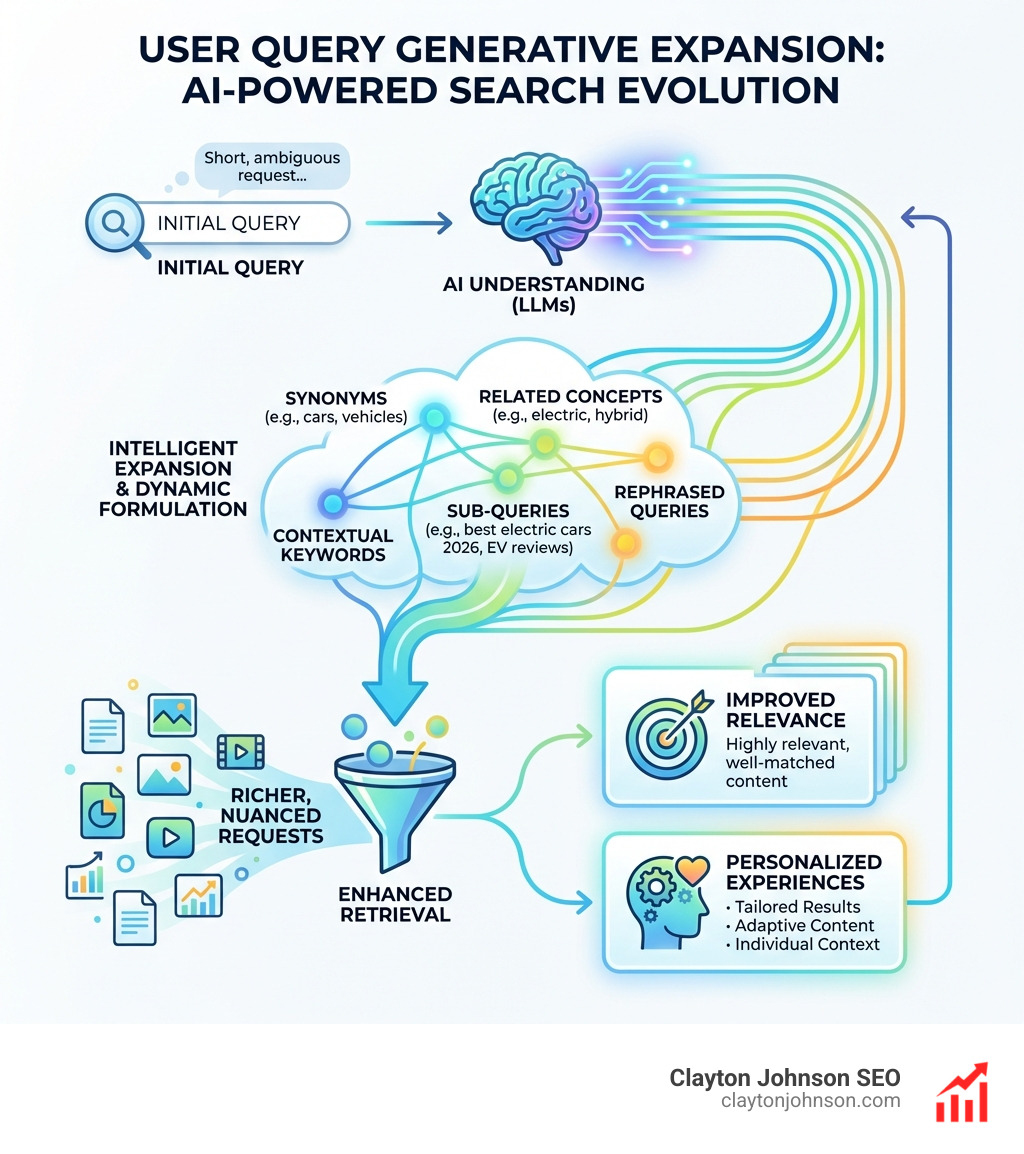

User Query Generative Expansion is a powerful technique that revolutionizes how search systems understand and respond to user needs. It leverages advanced artificial intelligence to transform a user’s initial search query, making it more comprehensive and precise.

Here’s a quick overview of what generative query broadening techniques entail:

- AI-Powered Query Understanding: Uses AI, particularly Large Language Models (LLMs), to interpret the user’s true intent behind their search phrase.

- Intelligent Expansion: Automatically adds synonyms, related concepts, and contextually relevant keywords to the original query.

- Dynamic Query Formulation: Can generate entirely new sub-queries or rephrase the original query to explore diverse facets of the user’s information need.

- Enhanced Retrieval: Aims to significantly improve the accuracy and completeness of search results by providing the search engine with a richer, more nuanced request.

- Personalized Experiences: Adapts to individual user contexts, delivering more relevant and tailored information.

This approach moves beyond simple keyword matching. It uses generative AI to anticipate what you really mean, even when your query is short, ambiguous, or uses different words than the content it needs to find. This helps search systems overcome the “vocabulary mismatch” problem, where valuable information might be missed simply because it uses different terminology. By generating more comprehensive and semantically rich query variations, search engines can retrieve far more relevant and personalized results, making your search experience much more effective.

I’m Clayton Johnson, an SEO strategist passionate about architecting scalable growth systems. My work focuses on building infrastructure that leverages innovative techniques like user query generative expansion to connect strategic intent with measurable business outcomes.

The Mechanics of User Query Generative Expansion

At its core, user query generative expansion is designed to solve the “vocabulary mismatch” problem. This happens when the words we use to search don’t exactly match the words used in the perfect document. Think about searching for “automobiles” but missing out on a great article that only uses the word “cars.”

This gap exists because of several linguistic hurdles:

- Synonymy: Different words having the same meaning (e.g., “tiny” vs. “small”).

- Polysemy: One word having multiple meanings (e.g., “apple” as a fruit vs. “Apple” as a tech giant).

- User Phrasing: We often type short, choppy, or incomplete phrases that don’t capture our full intent.

By using Large Language Models (LLMs), we can map these queries to a broader semantic space. Instead of just looking for character-by-character matches, the system understands the concept of the search. Research such as “Exploring the Best Practices of Query Expansion with Large Language Models” shows that LLMs can act as a “creative bridge,” using their internal knowledge to suggest terms that a traditional dictionary or thesaurus might miss.
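To make the vocabulary mismatch concrete, here is a minimal sketch that measures word overlap between a query and a document. The example texts and the expansion terms are invented for illustration; in a real system the extra terms would come from an LLM rather than a hard-coded list.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lexical_overlap(query: str, document: str) -> set[str]:
    """Return the words that the query and the document have in common."""
    return tokens(query) & tokens(document)


# Illustrative texts (assumptions, not taken from any real index).
document = "The most dependable cars of the decade"
query = "reliable automobiles"

# Hypothetical expansion terms standing in for LLM output.
expansion_terms = ["cars", "dependable", "vehicles"]
expanded_query = " ".join([query, *expansion_terms])

print(lexical_overlap(query, document))           # no shared words
print(lexical_overlap(expanded_query, document))  # shared words appear after expansion
```

A purely lexical matcher cannot connect the original query to the document, while the expanded query shares terms with it.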

Overcoming Traditional Search Limitations

Before generative AI, search engines primarily relied on a method called Pseudo-Relevance Feedback (PRF). PRF works by taking the top few results from an initial search, assuming they are relevant, and grabbing common words from them to “expand” the query.

While PRF was a step up from basic keyword matching, it suffered from “query drift.” If the first few results were slightly off-topic, the expanded query would wander even further away from what the user actually wanted. User query generative expansion changes the game by drawing on the LLM’s internal knowledge to expand the query before or alongside the search, so the expansion depends less on the quality of the first-pass results.

| Feature | Traditional PRF | Generative Expansion |
|---|---|---|
| Source of Terms | Top-ranked documents | LLM Internal Knowledge |
| Risk of Drift | High (if top results are poor) | Low (anchored in intent) |
| Contextual Awareness | Minimal | High |
| Handling Rare Queries | Poor | Excellent |
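For comparison, classic PRF can be sketched in a few lines. This is a simplified illustration, not a production implementation: the stopword list, the `top_k` and `num_terms` parameters, and the toy first-pass results are all assumptions. It also shows how drift happens: if the user meant the animal but the top results are about the car, the expansion follows the car.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption; real systems use larger ones).
STOPWORDS = {"the", "a", "of", "for", "and", "to", "in", "is", "how"}


def tokenize(text: str) -> list[str]:
    """Lowercase, split into words, and drop stopwords."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]


def prf_expand(query: str, ranked_docs: list[str], top_k: int = 2, num_terms: int = 2) -> list[str]:
    """Assume the top_k results are relevant and append their most frequent new terms."""
    query_terms = tokenize(query)
    counts = Counter()
    for doc in ranked_docs[:top_k]:
        counts.update(t for t in tokenize(doc) if t not in query_terms)
    expansion = [term for term, _ in counts.most_common(num_terms)]
    return query_terms + expansion


# Toy first-pass results for an ambiguous query (invented for this example).
first_pass = [
    "Jaguar car top speed and engine specs",
    "Jaguar car engine review",
    "How fast can a jaguar run in the wild",
]
expanded = prf_expand("jaguar speed", first_pass)
print(expanded)
```

Because the expansion terms come only from the top-ranked documents, the query is pulled toward whatever those documents happen to be about.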

5 Techniques for Generative Query Broadening

How do we actually make this magic happen? We use various prompting strategies to guide Large Language Models in generating the best possible expansion terms. Whether it’s through zero-shot prompting (asking the model to expand without examples) or few-shot learning (giving it a few “before and after” examples), the goal is to create a richer search request.

According to “Query Expansion by Prompting Large Language Models,” these generative abilities allow us to recover documents that have zero lexical overlap with the original query but are perfectly relevant to the user’s needs.
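The difference between zero-shot and few-shot prompting is easiest to see in the prompt text itself. The sketch below only builds prompt strings; the instruction wording and the example pairs are assumptions chosen for illustration, and the resulting string would be sent to whichever LLM you use.

```python
def zero_shot_prompt(query: str) -> str:
    """Ask for expansion keywords without showing the model any examples."""
    return (
        "Write a list of keywords related to this search query.\n"
        f"Query: {query}\n"
        "Keywords:"
    )


def few_shot_prompt(query: str, examples: list[tuple[str, list[str]]]) -> str:
    """Show a few 'before and after' pairs, then ask for keywords for the new query."""
    parts = ["Write a list of keywords related to each search query."]
    for example_query, keywords in examples:
        parts.append(f"Query: {example_query}\nKeywords: {', '.join(keywords)}")
    parts.append(f"Query: {query}\nKeywords:")
    return "\n\n".join(parts)


# Hypothetical demonstration pairs (not drawn from any dataset).
EXAMPLES = [
    ("cheap flights paris", ["low-cost airlines", "airfare deals", "CDG", "budget travel"]),
    ("sore throat remedy", ["pharyngitis", "lozenges", "salt water gargle", "home treatment"]),
]

print(zero_shot_prompt("how to fix a leaky faucet"))
print(few_shot_prompt("how to fix a leaky faucet", EXAMPLES))
```

The few-shot version costs more tokens per request, but the examples give the model a concrete format and level of specificity to imitate.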

1. Chain-of-Thought (CoT) Reasoning

One of the most effective ways to expand a query is through Chain-of-Thought (CoT) prompts. Instead of just asking for synonyms, we ask the AI to “think step-by-step” about the query.

For example, if a user searches “how to fix a leaky faucet,” a CoT prompt might instruct the AI to:

- Identify the likely tools needed (wrench, O-ring, washer).

- Identify the types of faucets (compression, ball, cartridge).

- List common symptoms (dripping, low pressure).

This produces a “verbose” output filled with high-value technical terms. Research shows that CoT prompts can improve Recall@1K (the share of relevant documents that appear in the top 1,000 results), moving the metric from a baseline of 87.82 to 90.61 on the MS-MARCO benchmark.
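A minimal sketch of CoT-based expansion follows. The prompt wording, the `repeats` parameter, and the `fake_llm` stand-in are assumptions: `llm` can be any function that takes a prompt string and returns text. Repeating the original query before appending the model output is one common way to keep the user's own words from being drowned out by the longer generated text.

```python
from typing import Callable


def build_cot_prompt(query: str) -> str:
    """Ask the model to reason step by step before answering (illustrative wording)."""
    return (
        "Answer the following query:\n"
        f"{query}\n"
        "Give the rationale before answering."
    )


def expand_with_cot(query: str, llm: Callable[[str], str], repeats: int = 3) -> str:
    """Concatenate the repeated original query with the model's verbose reasoning."""
    reasoning = llm(build_cot_prompt(query))
    return " ".join([query] * repeats + [reasoning])


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM call; returns canned text for this example only."""
    return (
        "A dripping tap is usually caused by a worn washer or O-ring. "
        "Compression, ball, and cartridge models differ, so identify the type, "
        "then use a wrench to replace the worn part."
    )


QUERY = "how to fix a leaky faucet"
expanded = expand_with_cot(QUERY, fake_llm)
print(expanded)
```

The expanded string is what gets sent to the retriever in place of the original query.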

2. Query Fan-Out for Complex Intent

Sometimes, a single search query is actually hiding three or four different questions. This is where Query Fan-Out comes in. Instead of trying to find one perfect answer, the system deconstructs the initial query into multiple sub-queries that are run in parallel.

Imagine searching for “the best laptop for a graphic designer under $1,500.” A Query Fan-Out system might trigger separate searches for:

- “Top-rated laptops for Adobe Creative Cloud”

- “Laptops with high color accuracy displays under $1,500”

- “Graphic design laptop hardware requirements”

This “fan-out” approach is a cornerstone of modern AI-driven search environments. It allows for a much deeper dive into the web, gathering diverse perspectives to synthesize a comprehensive answer. We see this in action with Google’s AI Overviews, which use these sub-queries to ground their summaries in factual data from multiple sources. You can explore more about this in Query Fan-Out: A Data-Driven Approach to AI Search Visibility.
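Here is a rough sketch of the fan-out pattern: run the sub-queries in parallel, then merge the ranked lists. Merging with reciprocal rank fusion (RRF) is a choice made for this example, not something any particular search engine is known to use, and `toy_search`, the document IDs, and the constant `k=60` are likewise assumptions.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fan_out_search(
    sub_queries: list[str],
    search_fn: Callable[[str], list[str]],
    k: int = 60,
) -> list[str]:
    """Run every sub-query in parallel and fuse the ranked lists with RRF."""
    if not sub_queries:
        return []
    with ThreadPoolExecutor(max_workers=len(sub_queries)) as pool:
        result_lists = list(pool.map(search_fn, sub_queries))

    scores: dict[str, float] = defaultdict(float)
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            scores[doc_id] += 1.0 / (k + rank)  # documents ranked high in many lists win
    return sorted(scores, key=scores.get, reverse=True)


# Toy index: each sub-query maps to a ranked list of made-up document IDs.
TOY_INDEX = {
    "Top-rated laptops for Adobe Creative Cloud": ["laptop_a", "laptop_b", "laptop_c"],
    "Laptops with high color accuracy displays under $1,500": ["laptop_b", "laptop_d"],
    "Graphic design laptop hardware requirements": ["laptop_b", "laptop_a"],
}


def toy_search(sub_query: str) -> list[str]:
    """Stand-in for a real search backend."""
    return TOY_INDEX.get(sub_query, [])


fused = fan_out_search(list(TOY_INDEX), toy_search)
print(fused)
```

A document that appears near the top for several sub-queries rises to the top of the fused list, which matches the intuition that it satisfies more facets of the original request.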

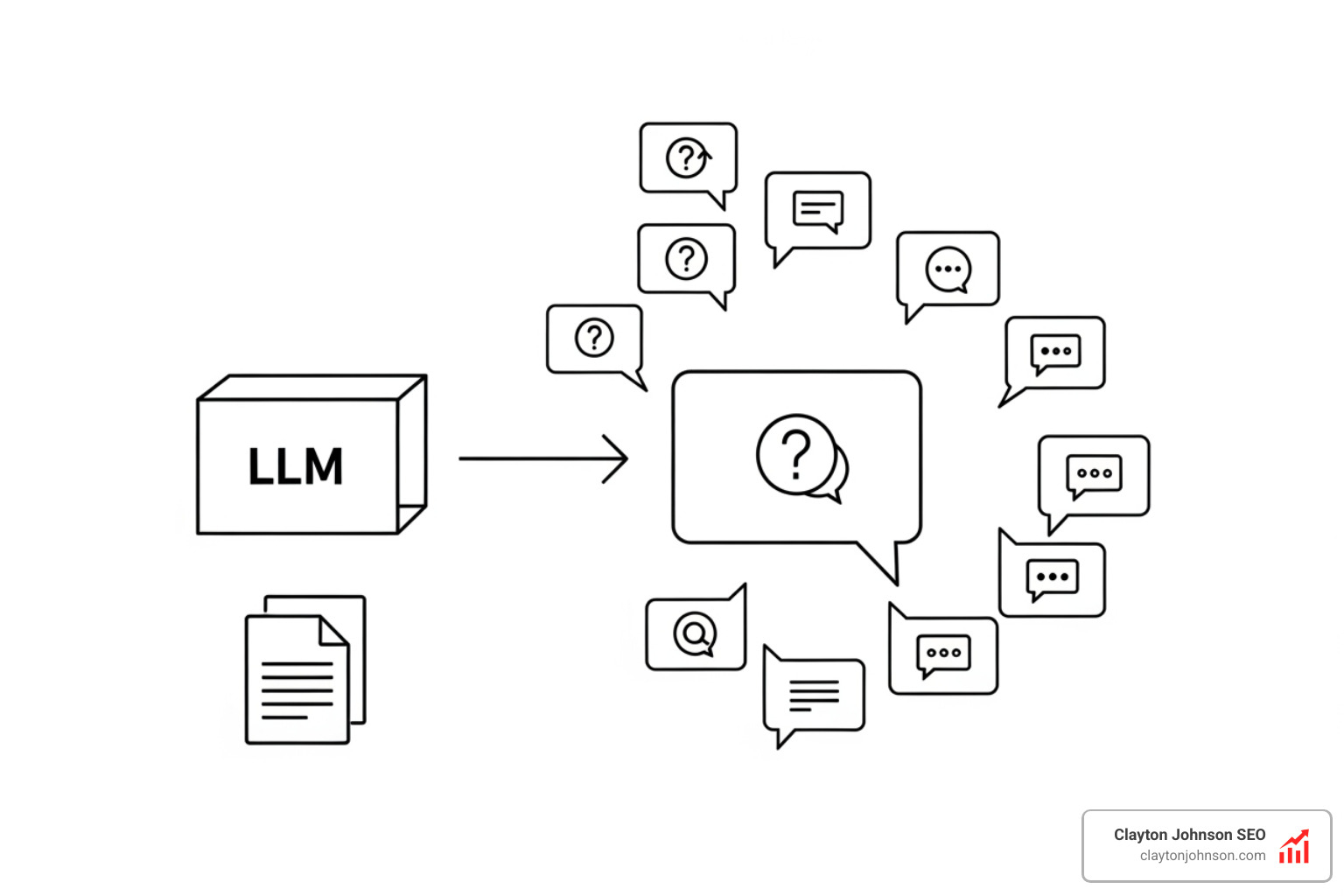

3. Synthetic Query Generation

What if the search engine could “practice” by imagining what users might ask? Synthetic query generation involves using LLMs to create artificial queries based on existing documents.

This is incredibly useful for:

- Filling Coverage Gaps: Creating queries for topics that are under-represented in search logs.

- Training Data: Helping search models learn how to rank content for rare or “long-tail” searches.

- Personalization: Simulating how different users (e.g., a pro vs. a beginner) might phrase the same need.

By generating these variants, we can bridge the gap between how a technical manual is written and how a regular person actually searches for help.
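As a sketch of how synthetic queries might be produced, the code below loops over documents and personas, asks a model for one query per pair, and collects (query, document ID) pairs that could serve as training data. The prompt wording, the persona names, and `fake_llm` are assumptions for illustration only.

```python
from typing import Callable

PERSONAS = ("beginner", "professional")


def build_prompt(doc_text: str, persona: str) -> str:
    """Ask the model to imagine a query this passage would answer, for a given persona."""
    return (
        f"You are a {persona} user. "
        "Write one search query that this passage would answer.\n"
        f"Passage: {doc_text}\n"
        "Query:"
    )


def generate_synthetic_queries(
    docs: dict[str, str],
    llm: Callable[[str], str],
    personas: tuple[str, ...] = PERSONAS,
) -> list[tuple[str, str]]:
    """Return (synthetic query, doc_id) pairs, one per document and persona."""
    pairs = []
    for doc_id, text in docs.items():
        for persona in personas:
            query = llm(build_prompt(text, persona)).strip()
            if query:  # skip empty generations
                pairs.append((query, doc_id))
    return pairs


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model; returns canned queries based on the persona."""
    if "beginner" in prompt:
        return "why is my tap dripping"
    return "compression faucet washer replacement"


DOCS = {"manual-12": "Replace the seat washer on compression faucets when dripping persists."}
pairs = generate_synthetic_queries(DOCS, fake_llm)
print(pairs)
```

In practice the generated pairs would usually be filtered for quality before being used to train or evaluate a ranking model.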

4. Pseudo-Document Expansion (Query2Doc)

A fascinating technique called Query2Doc involves asking an LLM to write a “fake” or “pseudo-document” that answers the user’s query. We don’t show this fake document to the user; instead, we take the text of that document and add it to the search query.

Because LLMs have “memorized” a massive portion of the internet, their pseudo-documents are often packed with the exact keywords found in real, high-quality sources. This technique has been shown to boost the performance of traditional search engines (like BM25) by 3% to 15%. It’s a simple but effective way to disambiguate a query and provide the search engine with a “cheat sheet” of what the perfect result should look like.
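The sketch below illustrates the idea with a small, simplified BM25 scorer written for this example (it is not a drop-in replacement for a real search engine). The two documents, the canned pseudo-document, and the choice to repeat the query five times before appending the pseudo-document are assumptions; the repetition keeps the short original query from being outweighed by the longer generated text.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25:
    """Minimal BM25 scorer over a small in-memory collection."""

    def __init__(self, docs: list[str], k1: float = 1.5, b: float = 0.75):
        self.tfs = [Counter(tokenize(d)) for d in docs]
        self.lengths = [sum(tf.values()) for tf in self.tfs]
        self.avg_len = sum(self.lengths) / len(docs)
        self.k1, self.b = k1, b
        df = Counter(term for tf in self.tfs for term in tf)
        n = len(docs)
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query: str, idx: int) -> float:
        tf = self.tfs[idx]
        norm = self.k1 * (1 - self.b + self.b * self.lengths[idx] / self.avg_len)
        # Repeated query terms are counted each time they occur in the query.
        return sum(
            self.idf[t] * tf[t] * (self.k1 + 1) / (tf[t] + norm)
            for t in tokenize(query)
            if t in tf
        )

    def rank(self, query: str) -> list[int]:
        return sorted(range(len(self.tfs)), key=lambda i: self.score(query, i), reverse=True)


DOCS = [
    "Replace the worn washer or O-ring inside the tap, then tighten the packing nut.",
    "Our kitchen showroom displays faucet designs in chrome and brass.",
]
QUERY = "how to fix a leaky faucet"
# Canned text standing in for an LLM-written pseudo-document.
PSEUDO_DOC = (
    "A leaky faucet usually means a worn washer or O-ring. "
    "Shut off the water, open the tap, then replace the worn washer."
)

bm25 = BM25(DOCS)
expanded_query = " ".join([QUERY] * 5 + [PSEUDO_DOC])
print(bm25.rank(QUERY))           # ranking for the original query
print(bm25.rank(expanded_query))  # ranking after pseudo-document expansion
```

With the original query, the repair instructions score zero because they share no words with it; after expansion, the pseudo-document's vocabulary lets them outrank the showroom page.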

5. GAN-Based Keyword Discovery

In e-commerce and search advertising, precision is everything. Generative Adversarial Networks (GANs) are being used to discover high-value keywords, especially for rare queries.

A GAN consists of two AI models:

- The Generator: Tries to create relevant keywords for a product.

- The Discriminator: Tries to guess if the keywords are actually relevant or just “noise.”

Through this “adversarial” competition, the system becomes incredibly good at finding semantic “hidden gems.” In e-commerce, this means showing a user exactly what they want even if they use highly specific or unusual phrasing. Studies have shown that GAN-based expansion can increase semantic similarity between queries and documents by nearly 10%, leading to better user experiences and increased revenue for platforms.

Integrating Expansion with RAG and SEO Strategy

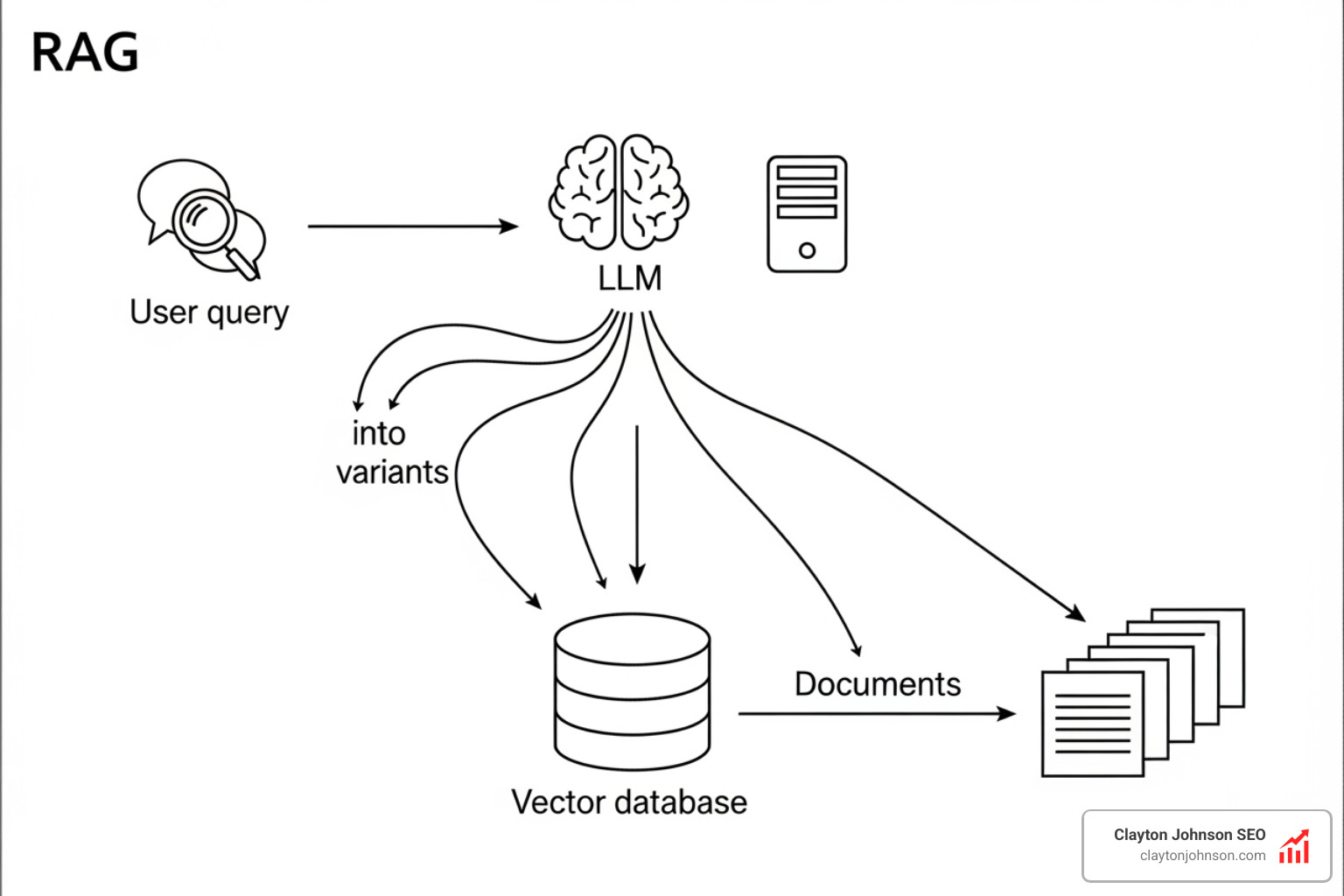

For businesses, user query generative expansion isn’t just a technical curiosity—it’s a core part of a modern SEO strategy. It is particularly vital for Retrieval-Augmented Generation (RAG) systems.

In a RAG setup, the AI doesn’t just “hallucinate” an answer; it first searches a private database for facts and then summarizes them. If the initial search is poor, the final answer will be poor. Generative expansion acts as a “sophisticated scout,” ensuring the RAG system finds the most relevant “grounding” information before the LLM starts writing.
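The role of expansion in a RAG pipeline can be sketched as three pluggable steps: expand, retrieve, generate. Everything concrete here is a stand-in: the knowledge base, the keyword retriever, the hard-coded expansion, and the stubbed generator are assumptions used to keep the example self-contained.

```python
import re
from typing import Callable

# Toy knowledge base (invented for this example).
KB = [
    "Refunds are issued within 14 days of a return.",
    "Our office is open Monday to Friday.",
]


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(text: str) -> list[str]:
    """Naive keyword retriever: return passages sharing at least one word with the text."""
    return [passage for passage in KB if words(text) & words(passage)]


def expand(query: str) -> str:
    """Stand-in for an LLM expansion step; the added terms are hard-coded here."""
    return f"{query} refunds return"


def rag_answer(
    query: str,
    expand_fn: Callable[[str], str],
    retrieve_fn: Callable[[str], list[str]],
    generate_fn: Callable[[str], str],
    top_k: int = 3,
) -> tuple[str, list[str]]:
    """Expand the query, retrieve grounding passages, then prompt the generator."""
    passages = retrieve_fn(expand_fn(query))[:top_k]
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    prompt = (
        "Answer using only the sources below.\n"
        f"{context}\n\nQuestion: {query}\nAnswer:"
    )
    return generate_fn(prompt), passages


QUERY = "can I get my money back"
answer, passages = rag_answer(QUERY, expand, retrieve, lambda prompt: "stub answer")
print(retrieve(QUERY))  # what the retriever finds without expansion
print(passages)         # what it finds with expansion
```

Without the expansion step the retriever returns nothing for this query, so the generator would have no grounding passages to work from.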

Enhancing Recall with User Query Generative Expansion

The ultimate goal of these techniques is to improve “Recall”—the ability of a system to find all the relevant information available. In technical evaluations using the MS-MARCO and BEIR benchmarks, LLM-based expansion consistently outperforms older methods.

For example, using a “Q2D” (Query-to-Document) prompt has produced some of the highest average recall scores across diverse datasets. Importantly, these generative methods don’t just find more results; they find better results, improving top-heavy metrics like MRR@10 (Mean Reciprocal Rank). MRR@10 averages, across queries, the reciprocal of the rank at which the first relevant result appears within the top 10, so it rewards systems that place the right answer in the very first few spots.
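Both metrics are simple to compute once you have a ranked list and a set of relevant document IDs per query. The sketch below uses the standard definitions; the document IDs and the two example queries are made up.

```python
def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(ranked[:k]) & relevant) / len(relevant)


def reciprocal_rank_at_k(ranked: list[str], relevant: set[str], k: int = 10) -> float:
    """1 / rank of the first relevant result in the top k, or 0 if there is none."""
    for rank, doc_id in enumerate(ranked[:k], start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mrr_at_k(runs: list[tuple[list[str], set[str]]], k: int = 10) -> float:
    """Mean of the reciprocal ranks over all queries."""
    return sum(reciprocal_rank_at_k(ranked, rel, k) for ranked, rel in runs) / len(runs)


# Two toy queries: (ranked results, relevant document IDs).
runs = [
    (["d3", "d1", "d7"], {"d1"}),        # first relevant result at rank 2
    (["d2", "d9"], {"d2", "d5"}),        # first relevant result at rank 1
]
print(mrr_at_k(runs))                    # mean of 1/2 and 1/1
print(recall_at_k(["d2", "d9"], {"d2", "d5"}, k=2))  # one of two relevant docs found
```

Recall rewards finding everything relevant anywhere in the cutoff, while MRR only cares how early the first relevant result shows up, which is why the two can move independently.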

Future Trends in User Query Generative Expansion

As we look ahead, the way we interact with search will continue to shift from “keyword entry” to “intent deconstruction.”

- Dynamic Fan-Out: Systems will become smarter about when to use expansion. A simple query like “What is the capital of Minnesota?” doesn’t need expansion, but “How do I start a small business in Minneapolis?” will trigger a massive, multi-faceted expansion.

- AI Agents: We are moving toward a world where AI agents will perform these expansions autonomously, navigating the deep web on our behalf.

- Hyper-Personalization: Expansion will be tailored not just to the query, but to your specific history, location, and expertise level.

To stay ahead, we must focus on building “structured growth architecture” that can handle these complex interactions. You can learn more about AI search evolution and how it’s changing the landscape of digital information.

Frequently Asked Questions

How does generative expansion improve search experiences?

It makes search results more relevant and easier to understand. By expanding a query to include context and synonyms, the system can find the “needle in the haystack” even if the user doesn’t know the exact technical terms to use. It also allows for client-side benefits, such as rewording complex search results into “plain English” to help users decide what to click on more effectively.

What is the difference between Query Fan-Out and traditional expansion?

Traditional expansion adds a few words to a single search. Query Fan-Out breaks one complex intent into multiple, separate sub-queries that run at the same time. This allows the system to explore different subtopics (like technical specs, user reviews, and pricing) simultaneously, providing a much more comprehensive answer.

Can query expansion be implemented on the client-side?

Yes! While much of the heavy lifting happens on the server, client-side generative AI can provide immediate benefits. For example, an LLM can analyze a user’s query as they type and suggest “expanded” versions or categories to help them narrow down their search. It can also be used to “auto-tag” results, making a messy list of links much easier to navigate.

Conclusion

The magic of user query generative expansion lies in its ability to turn a simple string of text into a deep understanding of human intent. By moving beyond literal keyword matching and embracing the semantic power of LLMs, we can create search experiences that feel intuitive, helpful, and incredibly precise.

At Clayton Johnson SEO, we believe the future of growth isn’t about chasing individual keywords. It’s about building structured growth infrastructure that helps your content get discovered across an AI-driven search landscape. Whether that means SEO strategy, content architecture, or AI-assisted execution systems, our goal is to create the kind of leverage that compounds over time.

Ready to build a search-intent aligned ecosystem for your business? Get more info about growth infrastructure and how Demandflow.ai can transform your marketing workflow.