Breaking the Chain of Bad AI Responses

Understanding Prompt Chaining for Complex SEO Workflows

To refine prompt chains well, we first need to look at the architecture of the chain itself. Think of prompt chaining as an assembly line for information. Instead of asking one worker to build an entire car from scratch, you break the process down. One prompt handles the research, the next creates the outline, and a third writes the draft.

This process of task decomposition is essential because LLMs, while powerful, have a “focus” limit. When we give a model ten instructions in one prompt, it might follow seven perfectly but “drop the ball” on the other three. By using sequential logic, we ensure the model gives its full attention to one subtask at a time.
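
Here is a minimal Python sketch of that assembly line. It assumes a hypothetical `llm` callable that takes a prompt string and returns the model's reply; `fake_llm` is only a stand-in so the example runs without an API key, and the prompt wording is illustrative.

```python
from typing import Callable

# Hypothetical signature: takes a prompt string, returns the model's reply.
LLM = Callable[[str], str]

def run_chain(topic: str, llm: LLM) -> dict[str, str]:
    """Run research -> outline -> draft, one focused prompt per step."""
    research = llm(f"List the key facts a reader needs to know about: {topic}")
    outline = llm(f"Create a blog outline using only these facts:\n{research}")
    draft = llm(f"Write a first draft that follows this outline:\n{outline}")
    # Keep every intermediate output so each link can be inspected later.
    return {"research": research, "outline": outline, "draft": draft}

def fake_llm(prompt: str) -> str:
    """Stand-in model for local testing: echoes the first line of the prompt."""
    return "[reply to] " + prompt.splitlines()[0]

result = run_chain("prompt chaining", fake_llm)
```

Each call sees only the instruction for its own subtask plus the previous step's output, which is the point of the decomposition.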

Furthermore, chaining allows for model specialization. We might use a high-reasoning model like GPT-4.5 for the initial strategy and a faster, more cost-effective model for simple formatting tasks later in the chain. This is a foundational technique in prompt engineering that moves us away from “hoping for magic” and toward predictable engineering.

According to IBM’s insights on prompt chaining, this approach is particularly vital for document QA and complex data transformation where accuracy is non-negotiable.

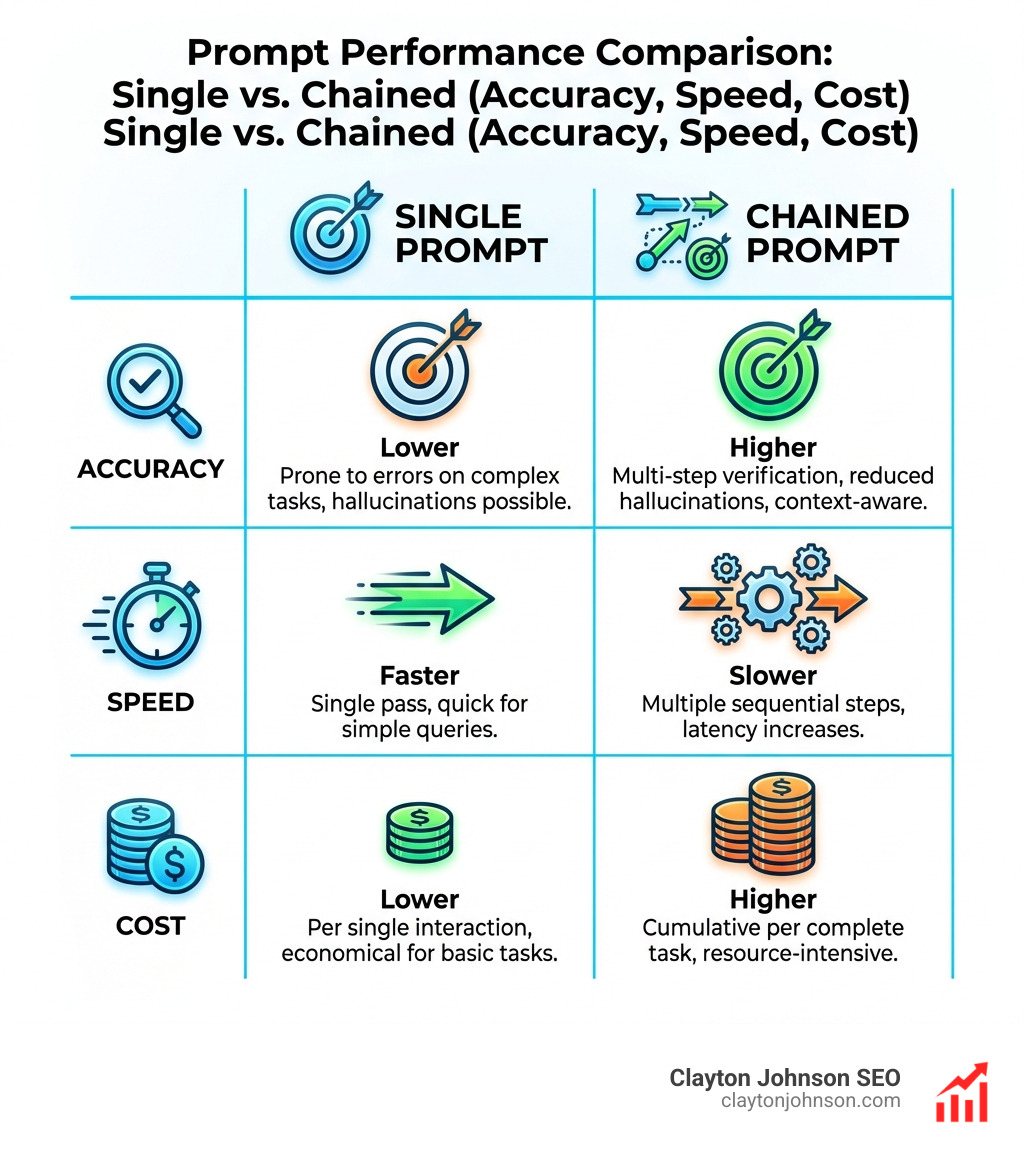

When to Use Prompt Chaining vs. Single Prompts

We often get asked: “Can’t I just write a really long, detailed prompt?” You can, but you shouldn’t always.

We choose prompt chaining over single prompts when:

- The task is multi-step: If you need to research, then analyze, then write, a chain is superior.

- The context window is a concern: Large documents can overwhelm a model’s “memory.” Chaining allows you to process chunks of data and pass only the relevant summaries to the next step.

- Accuracy is paramount: Scientific research on ensemble methods suggests that combining outputs or using multi-step verification produces more robust answers than relying on a single model pass.

- You need to reduce hallucinations: By forcing the model to extract facts in Step 1 before using them in Step 2, you provide a “grounding” mechanism that prevents the AI from making things up.
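
The context-window point above can be sketched as a chunk-then-summarize step. This is a rough illustration, assuming the same hypothetical `llm` callable (prompt in, text out) and using word counts as a crude proxy for tokens; a real pipeline would likely count tokens with the provider's tokenizer.

```python
from typing import Callable

def chunk_text(text: str, max_words: int) -> list[str]:
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def summarize_document(text: str, llm: Callable[[str], str], max_words: int = 500) -> str:
    """Summarize each chunk separately, then pass only the summaries forward."""
    summaries = [
        llm(f"Summarize the key facts in this passage:\n{chunk}")
        for chunk in chunk_text(text, max_words)
    ]
    combined = "\n".join(f"- {s}" for s in summaries)
    # The final step never sees the full document, only the condensed notes.
    return llm(f"Using only these notes, write one overall summary:\n{combined}")
```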

How to Refine Prompt Chains for Accuracy

Refinement starts with input-output mapping. We look at what goes into a prompt and what comes out. If the output isn’t right, we don’t just “yell” at the bot with more capital letters. We isolate the error.

If the final blog post is boring, is it because the writer prompt is bad, or because the outline prompt (the previous link in the chain) didn’t provide enough detail? This traceability is the “secret sauce” of professional AI workflows. For those just starting, our beginners guide to AI prompt engineering covers the basics of setting these boundaries.
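
One way to get this traceability is to record every link's prompt and output, then check the links in order. The sketch below is an assumption about how you might structure it: the `Trace` class and the per-step check functions are hypothetical names, not part of any library.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class Trace:
    """Records the prompt and output of every link in a chain run."""
    records: list[dict[str, str]] = field(default_factory=list)

    def call(self, step: str, prompt: str, llm: Callable[[str], str]) -> str:
        output = llm(prompt)
        self.records.append({"step": step, "prompt": prompt, "output": output})
        return output

    def first_failure(self, checks: dict[str, Callable[[str], bool]]) -> Optional[str]:
        """Return the name of the earliest step whose output fails its check."""
        for record in self.records:
            check = checks.get(record["step"])
            if check is not None and not check(record["output"]):
                return record["step"]
        return None
```

If the outline step fails its check, that is where to start, even when the symptom only shows up in the final draft.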

How to Refine Prompt Chains for Maximum Reliability

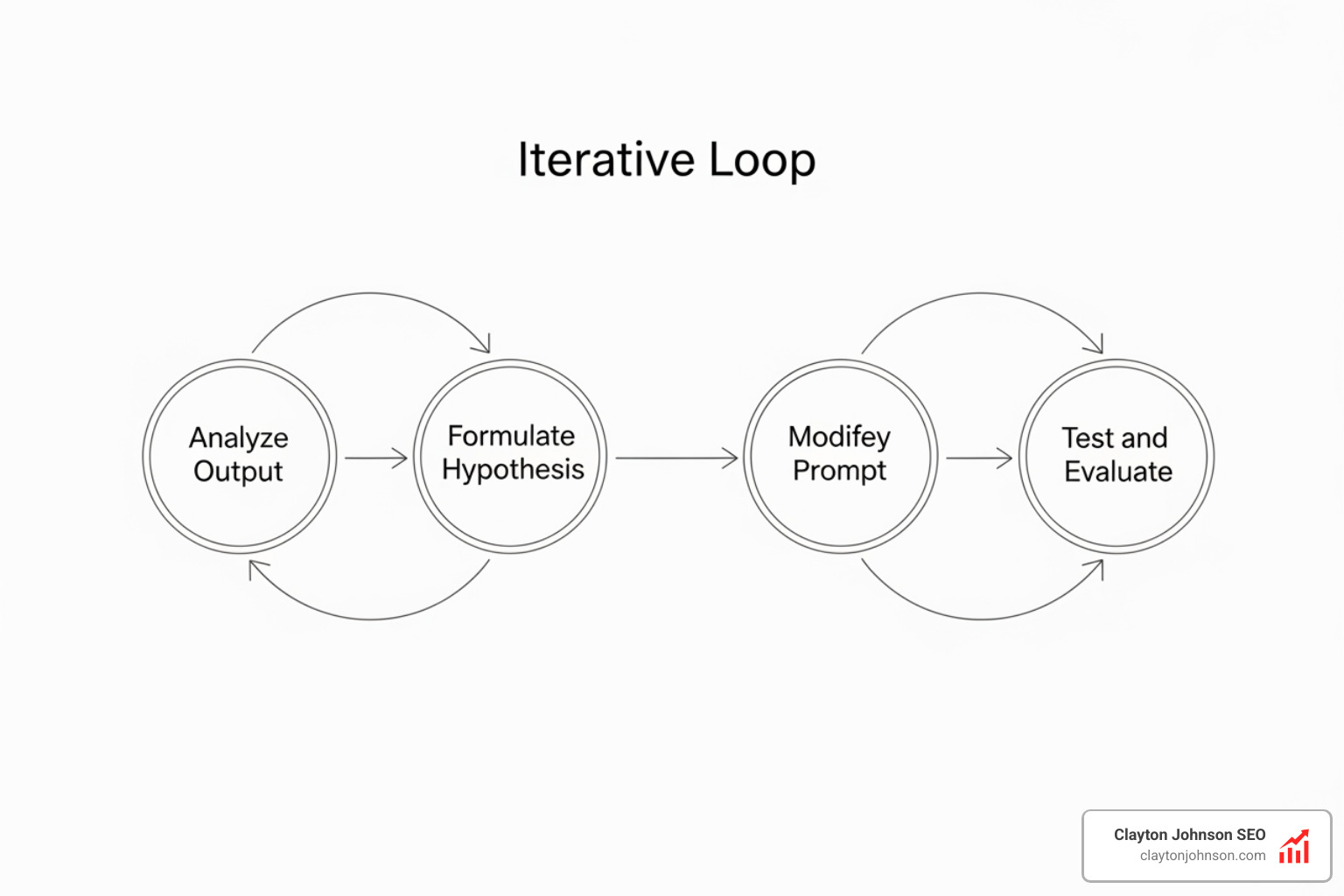

Refinement is a scientific process, not a guessing game. At Demandflow, we view it as a cycle of systematic experimentation.

- Analyze Output: Compare the AI’s response against your specific requirements.

- Formulate Hypothesis: Why did it fail? Was the instruction vague? Did it lack an example?

- Modify Prompt: Make one targeted change.

- Test and Evaluate: Run the new prompt and see if the specific issue is resolved.

Identifying and Breaking Down Tasks into Optimal Subtasks

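The first step in refining a prompt chain is knowing where to cut the thread. We look for “transformation steps”: points where the information changes form, such as a page becoming a keyword list or an outline becoming a draft.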

For an SEO content workflow, the breakdown might look like this:

- Subtask 1: Extract keywords and intent from a top-ranking URL.

- Subtask 2: Create a semantic content outline based on those keywords.

- Subtask 3: Draft the section content using a specific brand voice.

- Subtask 4: Review the draft against a checklist of SEO constraints.

By mapping the workflow this way, we ensure the logic flow is sound before we ever write a single line of a prompt.
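
The four subtasks above can be mapped as plain data and checked before any prompt exists. This is a sketch under our own assumptions: the `Subtask` structure and the input names (`url`, `brand_voice`, `seo_checklist`) are illustrative labels for what each step consumes and produces.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Subtask:
    name: str
    needs: tuple[str, ...]   # named inputs this step consumes
    produces: str            # named output this step emits

WORKFLOW = [
    Subtask("extract", needs=("url",), produces="keywords"),
    Subtask("outline", needs=("keywords",), produces="outline"),
    Subtask("draft", needs=("outline", "brand_voice"), produces="draft"),
    Subtask("review", needs=("draft", "seo_checklist"), produces="report"),
]

def missing_inputs(workflow: list[Subtask], provided: set[str]) -> list[str]:
    """List every input that neither the caller nor an earlier step supplies."""
    available = set(provided)
    problems = []
    for task in workflow:
        for need in task.needs:
            if need not in available:
                problems.append(f"{task.name} needs '{need}'")
        available.add(task.produces)
    return problems
```

An empty result means every step's inputs are accounted for; anything else is a gap in the logic flow.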

Strategies to Refine Prompt Chains Iteratively

When we refine, we use “incremental narrowing.” This means we start with a broad instruction and get more specific based on the AI’s “mistakes.”

If we are refining an email extraction chain, we might move from a generic instruction like “Extract the data” to a specific constraint like “Extract the ‘orderId’ and ‘reportedIssue’ only into a JSON format.” This targeted fix is much more effective than rewriting the entire sequence from scratch. We also recommend using a “test suite”—a set of 5 to 10 different inputs—to ensure that a fix for one problem doesn’t break the solution for another.
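
A small test suite for the email extraction example might look like the sketch below. It assumes the prompt template contains an `{email}` placeholder and that `llm` is a hypothetical prompt-in, text-out callable; the pass criterion (a JSON object with exactly the two keys) is our reading of the constraint.

```python
import json
from typing import Callable

REQUIRED_KEYS = {"orderId", "reportedIssue"}

def passes(output: str) -> bool:
    """True if output is a JSON object with exactly the required keys."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and set(data) == REQUIRED_KEYS

def run_suite(prompt_template: str, emails: list[str], llm: Callable[[str], str]) -> float:
    """Return the fraction of test emails whose output passes the check."""
    if not emails:
        raise ValueError("the test suite needs at least one input")
    results = [passes(llm(prompt_template.format(email=e))) for e in emails]
    return sum(results) / len(results)
```

Run the suite before and after each prompt change. If the pass rate drops, the fix for one input broke another.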

Advanced Strategies for Refining Prompt Sequences

Once the basic logic is sound, we use advanced formatting to tighten the “handshakes” between prompts.

One of the most effective tools for this is the use of XML tags. By wrapping each input in its own tag, we make the boundary of every piece of data explicit.

We also employ:

- Few-Shot Learning: Providing 3–5 examples of exactly what a “good” output looks like.

- Role Prompting: Telling the AI to “Act as a Senior SEO Strategist” for the analysis step and a “Professional Copywriter” for the drafting step.

- Constraint-Based Design: Using negative constraints (e.g., “Do not use the word ‘delve’”) to prune unwanted behaviors.
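
The three techniques above can be combined in one small prompt builder. This is an illustrative sketch; the function name, parameters, and exact wording of the assembled prompt are our assumptions.

```python
def build_prompt(role: str, task: str, examples: list[tuple[str, str]],
                 banned_words: list[str]) -> str:
    """Assemble a prompt from a role, few-shot examples, and negative constraints."""
    parts = [f"Act as a {role}.", task]
    for i, (sample_input, sample_output) in enumerate(examples, start=1):
        parts.append(f"Example {i} input: {sample_input}\nExample {i} output: {sample_output}")
    if banned_words:
        parts.append("Do not use these words: " + ", ".join(banned_words))
    return "\n\n".join(parts)

prompt = build_prompt(
    role="Senior SEO Strategist",
    task="Classify the search intent of the query.",
    examples=[("buy running shoes", "transactional")],
    banned_words=["delve"],
)
```

Keeping the role, examples, and constraints as separate arguments makes it easy to change one of them at a time during refinement.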

Using Examples and Formatting for Better Control

Formatting isn’t just for aesthetics; it’s for control. When chaining prompts, we often require the output of Step 1 to be in a structured format like JSON or Markdown. This ensures that Step 2 receives clean, predictable data.

Using XML tags allows the model to distinguish between your instructions and the data it’s supposed to process. If you’re passing a long transcript into a chain, wrapping it in its own tag shows the model exactly where the data begins and ends.
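
One way to sketch this separation in Python. The tag names `instructions` and `transcript` are illustrative choices, not ones the text specifies, and escaping the content is a design decision we are assuming here so the data cannot close its own tag early.

```python
from xml.sax.saxutils import escape

def wrap(tag: str, content: str) -> str:
    """Wrap data in an XML-style tag, escaping &, < and > inside the content."""
    return f"<{tag}>\n{escape(content)}\n</{tag}>"

def build_step_prompt(instructions: str, transcript: str) -> str:
    """Keep the instructions and the data to process in separate tagged blocks."""
    return f"{wrap('instructions', instructions)}\n\n{wrap('transcript', transcript)}"
```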

How to Refine Prompt Chains to Avoid Context Drift

A common pitfall in long chains is “context drift.” This happens when the model starts to forget the original goal because it’s too focused on the current subtask.

To combat this, we practice “context refreshing.” In each new prompt of the chain, we briefly restate the high-level objective. We also use message roles (Developer, User, Assistant) to maintain an instruction hierarchy, ensuring the model knows that the “Developer” instructions always take priority over any data found in the “User” input.
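
A rough sketch of context refreshing with message roles follows. The `OBJECTIVE` text is a made-up example, and the lowercase role strings are an assumption; check which role names your provider's chat API actually accepts.

```python
# Hypothetical high-level objective, restated in every step of the chain.
OBJECTIVE = "Produce an SEO blog post that answers the target query accurately."

def build_messages(step_instruction: str, step_input: str) -> list[dict[str, str]]:
    """Build one step's messages with the overall objective restated up front."""
    return [
        # Instructions go in the higher-priority role.
        {
            "role": "developer",
            "content": f"Overall objective: {OBJECTIVE}\nCurrent step: {step_instruction}",
        },
        # Data from earlier steps stays in the user role.
        {"role": "user", "content": step_input},
    ]
```

Because the objective is injected by code rather than remembered by the model, step five starts with the same goal statement as step one.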

Testing, Tracking, and Avoiding Common Pitfalls

The biggest mistake teams make is “over-engineering.” Not every task needs a 10-step chain. If a single prompt gets you 95% of the way there, stick with it.

Other pitfalls include:

- Assumption Stacking: Assuming the model knows what “good” means without defining it.

- Template Rigidity: Making a chain so specific that it breaks when the input format changes slightly.

- Vague Refinement: Telling the AI to “make it better” instead of “add two statistics to the second paragraph.”

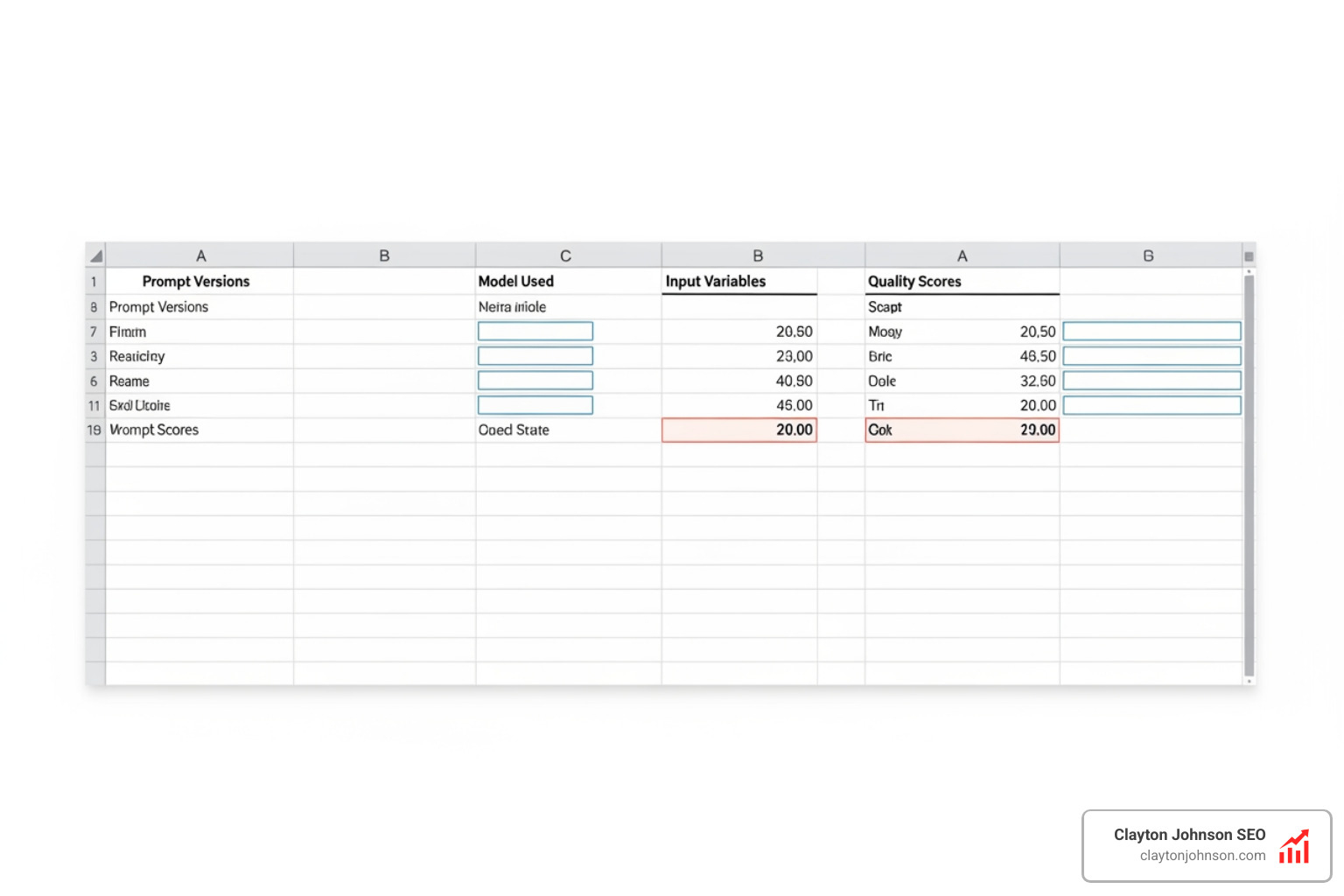

Measuring Performance and Version Control

You cannot refine what you do not measure. We recommend using objective metrics (like word count, keyword density, or JSON validity) to score each iteration.
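
The three metrics named above can each be computed in a few lines. This is a simple sketch: definitions of keyword density vary, and here we assume it means whole-phrase occurrences divided by total word count.

```python
import json
import re

def word_count(text: str) -> int:
    """Count runs of letters, digits, and apostrophes as words."""
    return len(re.findall(r"[A-Za-z0-9']+", text))

def keyword_density(text: str, keyword: str) -> float:
    """Whole-phrase keyword occurrences per word of text (0.0 for empty text)."""
    total = word_count(text)
    if total == 0:
        return 0.0
    pattern = r"\b" + re.escape(keyword) + r"\b"
    hits = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return hits / total

def is_valid_json(text: str) -> bool:
    """True if the text parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False
```

Logging these scores next to each prompt version gives you numbers to compare instead of impressions.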

Tracking your versions in a simple spreadsheet or via Git allows you to see the “evolution” of your chain. This is vital because sometimes a refinement in Step 4 actually requires you to go back and change Step 2. Without documentation, you’ll be lost in a maze of “PromptFinalV2REALFINAL.txt” files.

Real-World Examples of Successful Chain Refinement

Let’s look at a content creation pipeline. A generic prompt might produce a blog post that feels “AI-ish.”

By refining the chain, we transformed the process:

- Prompt 1 (Research): “Extract the top 3 pain points from these 5 customer reviews.”

- Prompt 2 (Outline): “Create a blog outline that addresses these 3 pain points specifically.”

- Prompt 3 (Drafting): “Write the intro using a ‘Problem-Agitate-Solution’ framework.”

- Prompt 4 (Review): “Check this draft for any generic ‘hype’ language and replace it with concrete examples.”

The result? A post that reads like it was written by a human expert who actually understands the customer.

Prompt Chain Use Cases

- Market Research: Chaining prompts to scrape data, categorize competitors, and then identify “blue ocean” opportunities.

- Legal Analysis: Extracting clauses from a contract, comparing them to a standard template, and flagging deviations.

- Multi-Step Translation: Translating text to a target language, then having a second prompt “back-translate” it to English to verify accuracy.

- Verification Loops: Having one prompt generate a fact-based response and a second prompt act as a “fact-checker” to verify every claim against a provided source.
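
The verification loop in the last item can be sketched as a generate-then-check function. The prompt wording, the SUPPORTED/UNSUPPORTED verdict format, and the retry limit are all assumptions made for illustration; `llm` is again a hypothetical prompt-in, text-out callable.

```python
from typing import Callable

def generate_with_check(question: str, source: str, llm: Callable[[str], str],
                        max_attempts: int = 2) -> tuple[str, bool]:
    """Generate an answer, then ask a second prompt to verify it against the source."""
    answer = ""
    for _ in range(max_attempts):
        answer = llm(
            f"Answer using only this source.\nSource: {source}\nQuestion: {question}"
        )
        verdict = llm(
            "Reply SUPPORTED or UNSUPPORTED only. Is every claim in the answer "
            f"backed by the source?\nSource: {source}\nAnswer: {answer}"
        )
        if verdict.strip().upper() == "SUPPORTED":
            return answer, True
    # Return the last attempt, flagged as unverified, for human review.
    return answer, False
```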

Frequently Asked Questions about Prompt Chaining

How many steps should a prompt chain have?

As a rule of thumb, most complex tasks get close to their best quality within 3 to 5 steps. If your chain has more than 7 steps, you may be over-engineering, or your subtasks might be too small. Aim for a “meaningful transformation” at each step.

Can I use different AI models in the same chain?

Yes! In fact, this is a “pro” move. Use a “heavy” model like GPT-4.5 for strategic planning or complex reasoning, and a “lighter,” faster model for formatting, summarizing, or simple data extraction to save on API costs and latency.
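
A minimal routing sketch is shown below. The model identifiers `heavy-model` and `light-model` are placeholders, and `call_model` is a hypothetical function taking a model name and a prompt; substitute your provider's real names and client.

```python
from typing import Callable

# Hypothetical model identifiers; replace with the names your provider uses.
MODEL_FOR_STEP = {
    "strategy": "heavy-model",
    "draft": "heavy-model",
    "format": "light-model",
    "summarize": "light-model",
}

def run_step(step: str, prompt: str, call_model: Callable[[str, str], str]) -> str:
    """Send the prompt to whichever model is configured for this step."""
    model = MODEL_FOR_STEP.get(step, "light-model")  # unknown steps use the cheaper model
    return call_model(model, prompt)
```

Keeping the mapping in one table means you can move a step to a different model without touching the prompts.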

Does prompt chaining increase API costs?

Per run, yes, because you are sending more tokens across multiple calls. However, when chaining improves accuracy, you save money by reducing the number of manual “re-dos” and the human editing time. Often, the “cost of bad output” is much higher than the API fees.

Conclusion

Refining prompt chains is about moving from a “slot machine” mindset to an “assembly line” mindset. By breaking tasks down, isolating variables, and iterating with a scientific approach, you unlock the true power of LLMs.

At Clayton Johnson’s Demandflow.ai, we believe that clarity leads to structure, and structure leads to leverage. Our goal is to help you build the “growth architecture” your business needs to scale. If you’re ready to stop yelling at the bot and start engineering results, you can master prompt engineering for SEO through our frameworks and diagnostic tools.

Refining your chains isn’t just about better text—it’s about building a reliable system for compounding growth. Start small, isolate your changes, and watch your AI workflows transform from “unreliable” to “unstoppable.”