Charting the Course for Ethical AI Implementation

Why AI Ethics Framework Implementation Steps Matter Right Now

AI ethics framework implementation steps are the structured actions organizations take to ensure their AI systems are fair, transparent, accountable, and safe, from the first line of code through ongoing deployment.

Here is a quick overview of the core steps:

- Establish governance and leadership commitment — form an AI ethics council with real authority

- Assess risks and customize your framework — audit for bias, privacy gaps, and stakeholder impacts

- Integrate ethics into the AI development lifecycle — embed checks at every stage, not just at launch

- Operationalize transparency and accountability — document models, data sources, and decision logic

- Build human oversight and contestability mechanisms — keep humans in the loop at critical decision points

- Monitor continuously and audit regularly — track fairness metrics, data drift, and compliance over time

- Align with global standards — map your framework to ISO 42001, GDPR, the EU AI Act, and relevant industry rules

AI is moving fast. Most organizations are adopting it faster than their ethics practices can keep up.

Research from Stanford University’s Institute for Human-Centered AI (HAI) found that many organizations “talk the talk” of AI ethics without fully integrating these principles into their operations. Meanwhile, real-world failures show exactly what happens when ethics is treated as an afterthought. ProPublica’s analysis of the COMPAS recidivism algorithm found that Black defendants who did not reoffend were nearly twice as likely as white defendants who did not reoffend to be labeled high-risk.

The risks are not abstract. They are reputational. Operational. Legal.

And most business leaders admit they feel unprepared to handle the ethically complex situations AI presents.

That gap — between knowing AI ethics matters and actually knowing what to do — is exactly what this guide closes.

I’m Clayton Johnson, an SEO strategist and systems-focused growth operator who works at the intersection of AI-assisted workflows, strategic frameworks, and scalable marketing architecture. Developing structured, implementation-ready frameworks, including AI ethics framework implementation steps, is core to how I help founders and marketing leaders build systems that compound over time. Let’s walk through exactly how to build yours.

Understanding the Pillars of an AI Ethics Framework

Before we dive into the “how,” we have to understand the “what.” An AI ethics framework isn’t just a list of suggestions; it’s a foundational document that dictates how our technology interacts with humans. Think of it as the constitutional law for our algorithms.

At its core, an ethical framework ensures that as we adopt AI, we aren’t just chasing efficiency at the cost of our values. We want systems that are helpful, not harmful. This requires a socio-technical perspective, one that recognizes AI isn’t just code; it’s code that impacts people’s lives, jobs, and privacy.

The foundational principles most often cited in scientific research on AI ethics include fairness, transparency, accountability, privacy, and security. We’ve explored how these interact in our guide on building a responsible AI framework. By prioritizing human-centered values, we ensure that societal impact is weighed just as heavily as ROI.

Ethical vs. Responsible AI: Defining the Difference

It is easy to use these terms interchangeably, but in a professional setting, they represent two different sides of the same coin.

- Ethical AI is largely philosophical and theoretical. It asks: What is the right thing to do? It focuses on abstract principles like justice, beneficence, and non-maleficence.

- Responsible AI (RAI) is the operationalized version of those ethics. It asks: How do we actually do it? It’s about the tactical deployment of guardrails, remediation processes, and compliance checks.

| Feature | Ethical AI | Responsible AI |
|---|---|---|
| Focus | Philosophical & Societal | Operational & Practical |
| Goal | Defining “Right” vs “Wrong” | Compliance & Risk Mitigation |
| Output | Values & Principles | Policies, Audits, & Governance |
| Nature | Theoretical | Tactical |

In short, ethics provides the “why,” while AI ethics framework implementation steps provide the “how.”

Core Principles: Fairness, Transparency, and Accountability

If our framework were a building, these three principles would be the load-bearing walls.

- Fairness (Bias Mitigation): We must ensure our models don’t perpetuate historical prejudices. This means using diverse datasets and constantly testing for disparate impacts across protected classes.

- Transparency (Explainable AI): If an AI makes a decision—especially one that affects a customer’s credit or a job application—we need to be able to explain why. Algorithmic honesty is the bedrock of trust.

- Accountability (Decision Hierarchies): When an AI makes a mistake, who is responsible? We must establish clear lines of authority so that humans remain “the throat to squeeze” when things go sideways.

Following OECD guidelines for trustworthy AI helps us align our internal goals with global expectations for algorithmic integrity.

Essential AI Ethics Framework Implementation Steps

Implementing these ethics isn’t a one-and-done task. It’s an iterative process that requires moving from high-level “talk” to ground-level “walk.” Most companies fail because they treat ethics as a legal hurdle rather than a strategic differentiator.

We view these AI ethics framework implementation steps as a strategic roadmap. It starts with leadership and ends with a culture of continuous improvement. For a deeper look at the broader picture, check out our enterprise AI strategy guide. Recent research on “walking the walk” in AI ethics emphasizes that without organizational capacity (the actual budget and staff to enforce these rules), the framework is just a piece of paper.

Step 1: Establishing Governance and Leadership Commitment

Governance is the “teeth” of your framework. Without it, ethics is just a suggestion. We recommend establishing a cross-functional AI Ethics Governance Council. This shouldn’t just be IT folks; it needs legal, risk, operations, and even HR.

- Appoint a Senior Responsible Owner (SRO): Someone at the C-suite level must be accountable for the AI’s ethical performance.

- Define Authority: Does the ethics board have the power to shut down a project? (Hint: They should).

- Culture Shift: Leadership must foster a culture where employees feel safe flagging ethical concerns without fear of slowing down “innovation.”

Effective AI governance ensures that policy evolves as fast as the technology does.

Step 2: Assessing Risks and Customizing Your AI Ethics Framework Implementation Steps

Every business is different. A healthcare provider faces different ethical risks than a marketing agency. You need to map your specific stakeholders and potential harms.

- Bias Audits: Regularly check your training data. ProPublica research on algorithmic bias showed how easily bias can hide in “neutral” data.

- Impact Assessments: Conduct a Data Protection Impact Assessment (DPIA) and an Equality Impact Assessment (EIA) for every high-risk project.

- Risk Gating: Create a “gate” system. If a project is deemed high-risk (e.g., it processes personal data or makes autonomous decisions), it requires a higher level of scrutiny before deployment.
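A risk gate like this can be written down as an explicit rule, so every project is classified the same way. The sketch below is a minimal illustration, assuming three hypothetical project attributes (`processes_personal_data`, `makes_autonomous_decisions`, `affects_legal_or_financial_outcomes`) and three hypothetical review tiers. These are not the EU AI Act's legal categories; your own criteria and tier names will differ.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    # Hypothetical tiers; map these to your own governance process.
    STANDARD = "standard review"
    ENHANCED = "enhanced review (DPIA/EIA required)"
    COUNCIL = "ethics council sign-off required"


@dataclass
class AIProject:
    # Hypothetical attributes used only to illustrate gating logic.
    name: str
    processes_personal_data: bool
    makes_autonomous_decisions: bool
    affects_legal_or_financial_outcomes: bool


def review_tier(project: AIProject) -> ReviewTier:
    """Return the minimum review tier a project must pass before deployment."""
    # Autonomous decisions with legal or financial consequences get the strictest gate.
    if project.makes_autonomous_decisions and project.affects_legal_or_financial_outcomes:
        return ReviewTier.COUNCIL
    # Any personal data or autonomous decision-making triggers impact assessments.
    if project.processes_personal_data or project.makes_autonomous_decisions:
        return ReviewTier.ENHANCED
    return ReviewTier.STANDARD


if __name__ == "__main__":
    chatbot = AIProject("FAQ chatbot", False, False, False)
    credit = AIProject("Credit pre-screening", True, True, True)
    for project in (chatbot, credit):
        print(project.name, "->", review_tier(project).value)
```

Encoding the gate as code (or as an equivalent checklist in your project intake form) keeps the rule auditable: when the council changes a criterion, the change is visible and versioned.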

Step 3: Integrating Ethics into the AI Development Lifecycle

Ethics shouldn’t be a “final check” before launch. It needs to be baked into the development lifecycle.

- Diverse Datasets: Ensure your training data represents the real world, not just a narrow slice of it.

- Human-in-the-Loop (HITL): For critical decisions, ensure a human reviews the AI’s output. This is vital for safety and reliability.

- Technical Toolkits: Use tools like SHAP or LIME for explainability, and bias-detection software to scan models during training.

- Robustness Testing: Test how your model handles “adversarial attacks” or data drift (where the real-world data starts to look different from the training data).
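Data drift can be quantified by comparing a feature's distribution in training data with its distribution in live data. The article doesn't prescribe a metric. One common choice, used here purely as an illustration, is the Population Stability Index (PSI). This minimal sketch bins a numeric feature using training-set quantiles and assumes non-empty samples. The 0.1 and 0.25 thresholds are a widely used rule of thumb, not a standard.

```python
import math
from bisect import bisect_right


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a training sample and a live sample."""
    # Bin edges come from quantiles of the training (expected) sample.
    ordered = sorted(expected)
    edges = [ordered[int(len(ordered) * i / bins)] for i in range(1, bins)]

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # eps avoids log(0) when a bin is empty.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(value: float) -> str:
    # Rule-of-thumb thresholds; tune them for your own models.
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate drift"
    return "significant drift"


if __name__ == "__main__":
    training_ages = [float(x) for x in range(18, 80)]
    live_ages = [x + 15 for x in training_ages]  # live population skews older
    score = psi(training_ages, live_ages)
    print(f"PSI = {score:.3f} ({drift_status(score)})")
```

Running a check like this on a schedule, per feature, turns "watch for drift" into an alert someone can act on.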

For more on the technical side, see our AI infrastructure best practices.

Operationalizing Transparency and Accountability

Transparency is more than just being “open.” It’s about providing the right information to the right people. Your developers need technical logs; your customers need plain-English explanations.

We use “Model Cards” and “Datasheets” to document the intent, limitations, and training provenance of every AI system. This level of documentation is essential for scaling AI frameworks across a large organization. It also helps with vendor transparency: if you’re buying an AI tool, you need to know exactly how it was built.
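A model card can start as a structured record stored next to the model artifact. The sketch below is a minimal example. The field names are assumptions loosely inspired by the model-card idea, not an official schema. The `missing_fields` helper (also hypothetical) shows how incomplete documentation could block a release.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    # Hypothetical minimal schema; extend it to match your governance needs.
    model_name: str
    version: str
    intended_use: str
    limitations: list[str]
    training_data_sources: list[str]
    out_of_scope_uses: list[str] = field(default_factory=list)
    fairness_evaluations: dict[str, float] = field(default_factory=dict)
    owner: str = "unassigned"

    def missing_fields(self) -> list[str]:
        """List required documentation fields that are still empty (usable as a release gate)."""
        required = ["intended_use", "limitations", "training_data_sources"]
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        """Serialize the card so it can be versioned alongside the model."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    card = ModelCard(
        model_name="churn-model",
        version="1.2.0",
        intended_use="Flag accounts for proactive retention outreach.",
        limitations=["Not validated for enterprise accounts."],
        training_data_sources=["CRM export (hypothetical)"],
    )
    print(card.missing_fields() or "card complete")
    print(card.to_json())
```

The same structure works for vendor tools: if a supplier can't fill in these fields, that gap is itself a finding.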

Human Oversight and Contestability Mechanisms

A system is only as ethical as its ability to be challenged. If an AI denies a user a service, that user must have a clear path to appeal to a human.

- Meaningful Involvement: Humans shouldn’t just “rubber stamp” AI decisions. They need the training and time to actually evaluate the output.

- Contestability: Provide a clear “Contact Us” or appeal button. Following the Commonwealth Ombudsman guide on automated decision-making is a great way to ensure people remain at the center of service delivery.

- Frontline Support: Give your customer service teams the tools to explain AI decisions to frustrated users.
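Oversight and contestability work best when they are wired into the decision path itself. This is a minimal sketch, assuming a hypothetical model that returns an outcome string and a confidence score. The 0.8 threshold and the rule that every adverse outcome gets both a human reviewer and an appeal reference are illustrative policy choices, not requirements from any standard.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    outcome: str                     # e.g. "approved" or "denied"
    confidence: float                # model score in [0, 1]
    needs_human_review: bool
    appeal_reference: Optional[str]  # shared with the user so they can contest the decision


def route_decision(outcome: str, confidence: float, threshold: float = 0.8) -> Decision:
    """Decide whether a model output can be released or must go to a human reviewer."""
    adverse = outcome == "denied"
    low_confidence = confidence < threshold
    # Adverse or uncertain outcomes are queued for a trained reviewer, not auto-released.
    needs_review = adverse or low_confidence
    # Every adverse outcome gets an appeal reference so the user has a path to a human.
    appeal_ref = str(uuid.uuid4()) if adverse else None
    return Decision(outcome, confidence, needs_review, appeal_ref)


if __name__ == "__main__":
    for outcome, score in [("approved", 0.95), ("approved", 0.55), ("denied", 0.99)]:
        print(route_decision(outcome, score))
```

Routing adverse decisions to review even when the model is confident is deliberate: confidence measures the model's certainty, not whether the outcome is fair to the person affected.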

Continuous Monitoring and Auditing for Long-Term Success

AI systems are not static. They learn, they change, and they drift.

- Performance Metrics: Track more than just accuracy. Track fairness indicators (like demographic parity) and transparency scores.

- Annual Reviews: Just like a financial audit, your AI systems should undergo an annual ethical review.

- Feedback Loops: Create a system where users and employees can report “weird” or biased behavior from the AI. This real-world feedback is often faster than any automated audit.
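Demographic parity compares the rate of favorable outcomes across groups. Below is a minimal sketch that computes per-group selection rates and the largest gap between them, assuming binary decisions (1 = favorable) and a single group attribute. What gap counts as acceptable is a policy decision for your governance council; the code does not set one.

```python
from collections import defaultdict


def selection_rates(decisions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of favorable decisions (1) per group."""
    if len(decisions) != len(groups):
        raise ValueError("decisions and groups must have the same length")
    totals: dict[str, int] = defaultdict(int)
    favorable: dict[str, int] = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        favorable[group] += decision
    return {g: favorable[g] / totals[g] for g in totals}


def demographic_parity_difference(decisions: list[int], groups: list[str]) -> float:
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    decisions = [1, 1, 0, 1, 0, 0, 1, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(selection_rates(decisions, groups))
    print(demographic_parity_difference(decisions, groups))
```

Tracking this number per release, rather than once at launch, is what makes it a monitoring metric instead of a one-time audit.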

Aligning with Global Compliance and Standards

In Minneapolis, we are subject to a growing web of regulations. While the U.S. landscape is shifting, global standards like the EU AI Act and GDPR often set the baseline for enterprise software.

- ISO/IEC 42001: This is the international standard for AI management systems. Aligning with it shows you’re serious about governance.

- GDPR: If you have customers in the EU (or even if you just want to follow best practices), GDPR’s rules on automated decision-making and data privacy are non-negotiable.

- EU AI Act: This is the world’s first comprehensive AI law. It categorizes AI by risk level—unacceptable, high, limited, and minimal.

Staying ahead of these is part of a robust AI governance system.

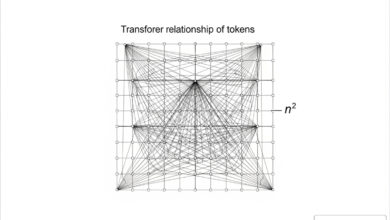

Navigating AI Ethics Framework Implementation Steps for Generative AI

Generative AI (such as large language models, or LLMs) introduces new headaches. We have to worry about “hallucinations” (the AI making stuff up), intellectual property infringement, and environmental sustainability.

- Data Minimization: Only collect what you need. LLMs are data-hungry, but that doesn’t mean we should throw privacy out the window.

- Worker Rights: Be transparent with employees about how their data is used to train internal models. Ensure they have the right to opt-out where appropriate.

- Environmental Impact: Training large models uses massive amounts of energy and water. We should track our “carbon footprint” using tools like CodeCarbon or the Data Carbon Ladder.
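Data minimization can be enforced mechanically before records ever reach a training pipeline. A minimal sketch, assuming records arrive as dictionaries and that your team maintains an explicit allowlist of fields approved for a given purpose (the field names here are hypothetical). Anything not on the list is dropped, and the dropped field names are returned so they can be reviewed rather than silently lost.

```python
from typing import Any

# Hypothetical allowlist: the only fields approved for this training purpose.
APPROVED_FIELDS = frozenset({"ticket_text", "product_area", "resolution_code"})


def minimize(
    record: dict[str, Any], allowed: frozenset[str] = APPROVED_FIELDS
) -> tuple[dict[str, Any], set[str]]:
    """Keep only approved fields; return the cleaned record and the names of dropped fields."""
    kept = {key: value for key, value in record.items() if key in allowed}
    dropped = set(record) - allowed
    return kept, dropped


if __name__ == "__main__":
    raw = {"ticket_text": "Refund request", "email": "user@example.com", "product_area": "billing"}
    clean, removed = minimize(raw)
    print(clean)
    print("dropped:", removed)
```

An allowlist is stricter than a blocklist by design: new fields added upstream are excluded until someone approves them.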

For founders, this is part of a broader enterprise AI approach that balances innovation with responsibility.

Metrics and Audits for Measuring Effectiveness

How do we know if our AI ethics framework implementation steps are actually working? We measure them.

- Fairness Indicators: Are error rates higher for one demographic than another?

- Incident Response Times: How fast did we catch and fix a biased output?

- Transparency Scores: Can a non-technical stakeholder understand why a decision was made?

- Audit Trails: Is every decision logged and traceable back to a specific model version and dataset?
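To make each decision traceable to a specific model version and dataset, predictions can be written as append-only log entries. The sketch below is one way to do it, assuming JSON Lines as the log format and SHA-256 fingerprints of the inputs and of a training-data manifest. The file name, field names, and manifest structure are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 hash of a JSON-serializable payload (keys sorted for determinism)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_entry(model_version: str, dataset_manifest: dict, inputs: dict, output: str) -> dict:
    """Build one audit record linking a decision to its model version and training data."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "dataset_fingerprint": fingerprint(dataset_manifest),
        # Hash the inputs so a request can be matched later without duplicating raw data here.
        "input_fingerprint": fingerprint(inputs),
        "output": output,
    }


def append_to_log(entry: dict, path: str = "decision_audit.jsonl") -> None:
    """Append the record as one JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")


if __name__ == "__main__":
    manifest = {"source": "crm_export", "snapshot": "2024-06-01", "rows": 120000}
    entry = audit_entry("credit-screen-v1.3.0", manifest, {"income_band": "C"}, "denied")
    print(json.dumps(entry, indent=2))
```

Because the fingerprints are deterministic, an auditor can recompute them from the stored manifest and confirm which dataset a given decision depended on.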

The ROI of ethics isn’t just about avoiding fines; it’s about building a brand that customers actually trust.

Frequently Asked Questions about AI Ethics

What are the core principles of ethical AI?

The most widely recognized principles are Fairness (avoiding bias), Transparency (explainability), Accountability (human responsibility), Privacy (protecting data), and Security (protecting the system). Others include Beneficence (doing good), Justice, and Respect for Law.

How do organizations measure the effectiveness of an ethics framework?

Effectiveness is measured through regular audits, bias detection metrics, compliance reviews against standards like ISO 42001, and stakeholder impact assessments. We also look at incident reports and how quickly ethical “bugs” are remediated.

Who should be involved in AI governance?

Governance should be cross-functional. This includes executive leadership, a dedicated AI Ethics Board, legal counsel, data owners, and diverse stakeholders from across the business to ensure different perspectives are represented.

Conclusion

Implementing an AI ethics framework isn’t about slowing down; it’s about building a foundation that allows you to speed up safely. Without structure, AI is a liability. With a structured growth architecture, it becomes a compounding asset.

At Demandflow, we believe that clarity leads to structure, which leads to leverage. By following these AI ethics framework implementation steps, you aren’t just checking a compliance box; you’re building the growth infrastructure of the future.

Ready to build a system that lasts? Explore our deep dives into AI governance and strategy to see how we help organizations turn complex technology into clear, measurable growth.