Stop Writing Monoliths and Start Chaining Your AI Prompts

Why Most AI Prompts Fail Before They Even Start

AI prompt chaining best practices are the difference between an AI workflow that compounds in quality and one that collapses under complexity.

Here is a quick-reference summary:

AI Prompt Chaining Best Practices at a Glance

- Break tasks into focused sub-tasks — one clear objective per prompt

- Pass outputs as inputs — each step feeds directly into the next

- Use structured formatting — XML tags or JSON to maintain clean handoffs

- Validate at each step — catch errors early before they propagate

- Run independent steps in parallel — save time and tokens

- Build self-correction loops — have the model review its own output for high-stakes tasks

- Iterate and refine — test each link in the chain individually

Think about the last time you gave an AI a complex task in a single prompt. Maybe you asked it to research a topic, extract key data, and write a polished summary — all at once. The output was probably fine in places and frustrating in others.

That is not a model problem. That is a workflow problem.

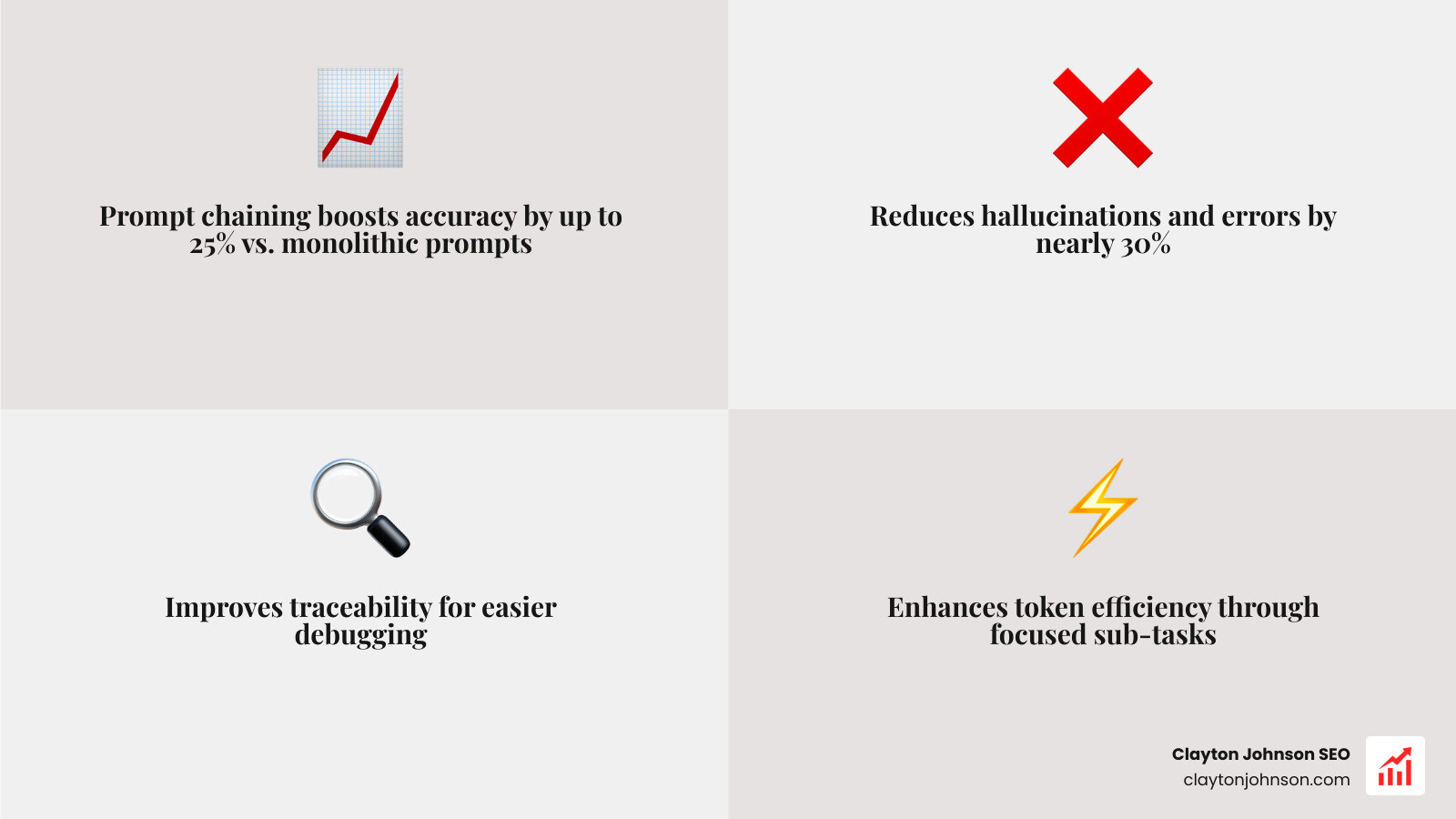

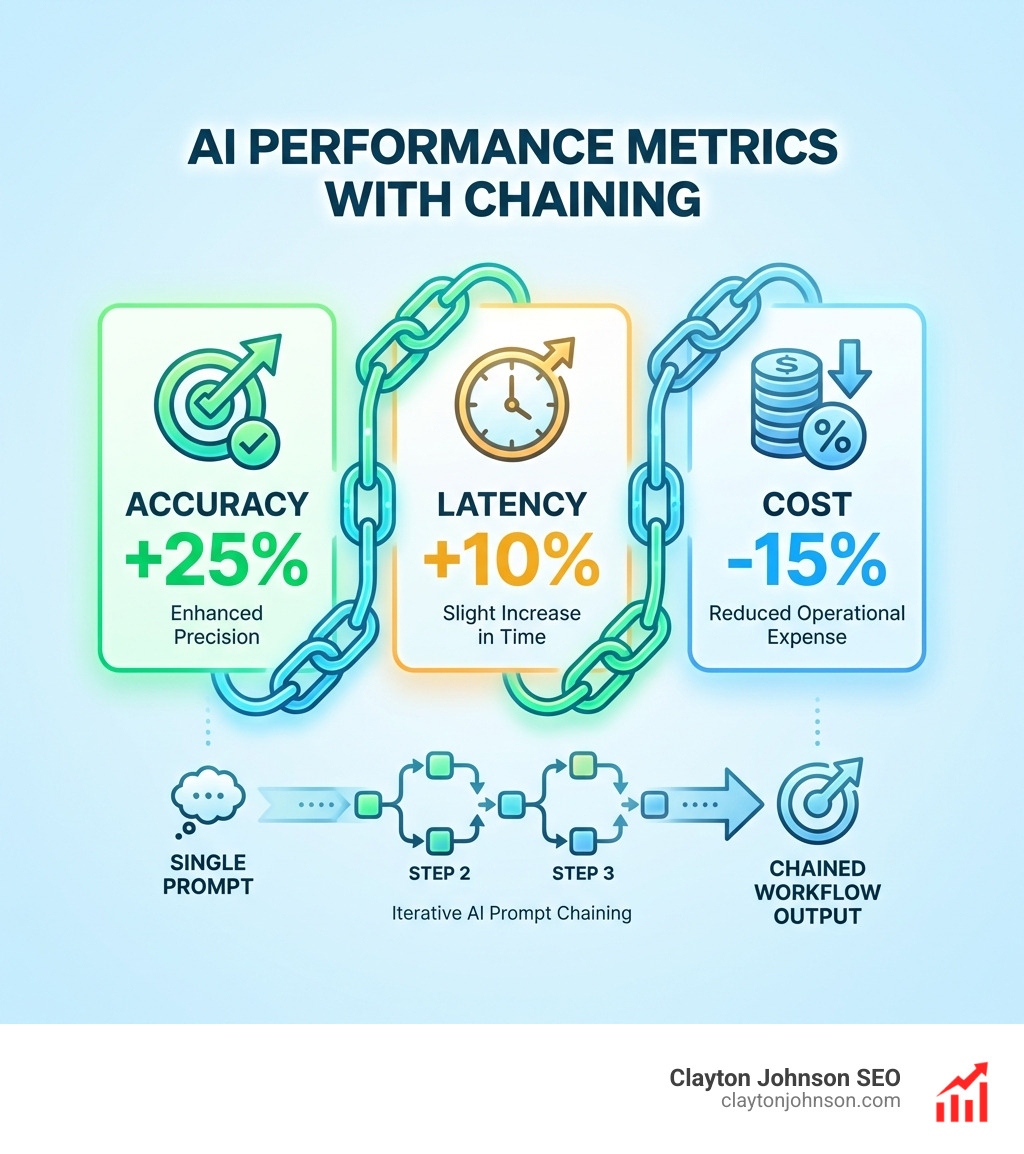

When you overload a single prompt, you are asking one instruction to do the work of seven. The model splits its attention, loses precision, and compounds small errors into big ones. Research consistently shows that breaking complex tasks into sequential, focused steps can improve accuracy by up to 25% and reduce mistakes by nearly 30%.

Prompt chaining solves this by treating AI the way a smart operations leader treats a production line — each station does one thing well, and the output moves cleanly to the next step.

I’m Clayton Johnson, an SEO strategist and growth systems architect. I build AI-augmented marketing workflows for founders and marketing leaders, applying AI prompt chaining best practices to drive measurable, compounding results. The frameworks in this guide come directly from the systems I use to build scalable content architectures and AI-assisted operations.

Understanding the Mechanics of Prompt Chaining

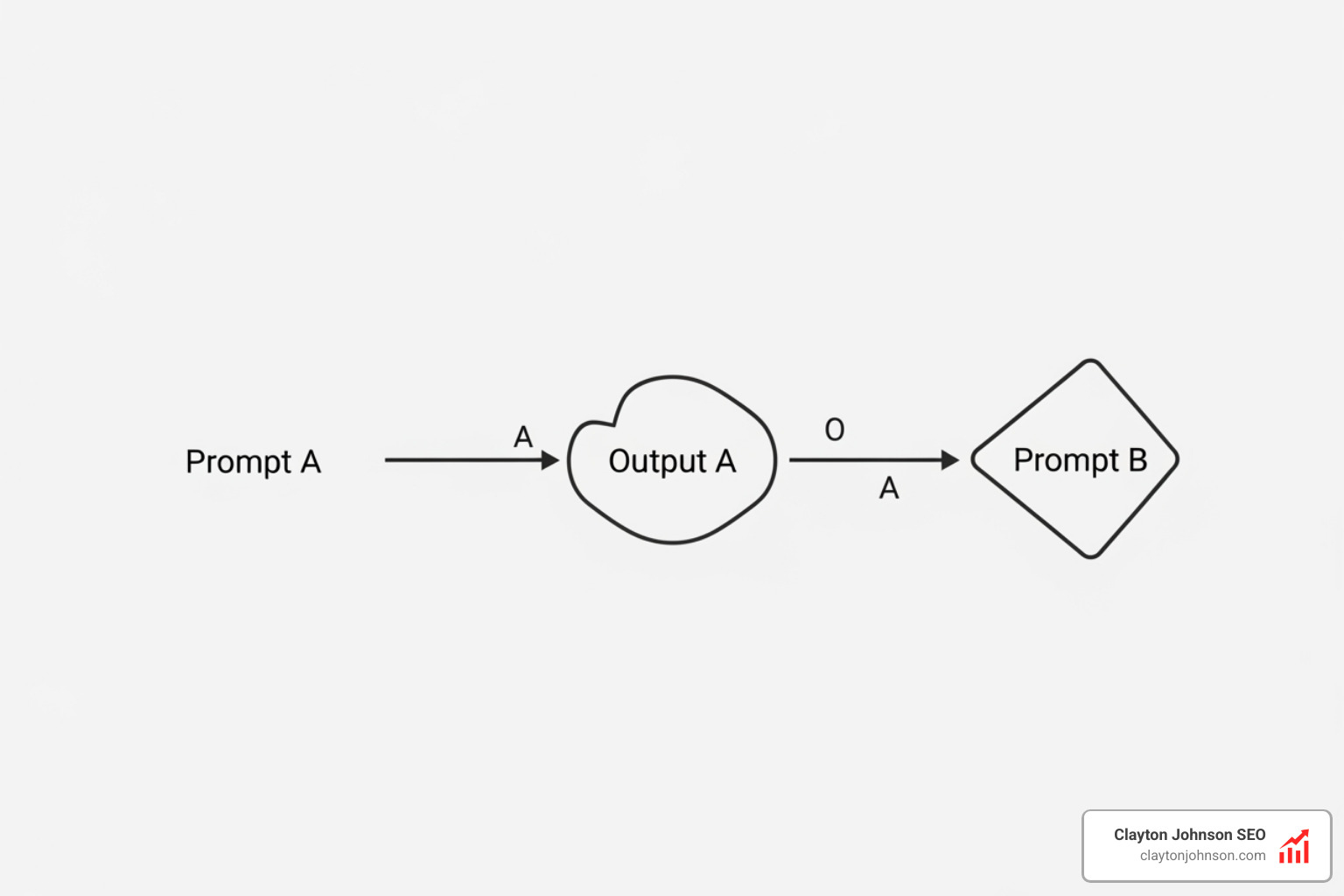

At its core, prompt chaining is the process of breaking a large, complex task into smaller, manageable subtasks. Instead of sending one giant “monolithic” prompt to an LLM, we create a sequence of prompts where the output of one step becomes the input for the next.

Think of it like an assembly line. If you ask a single worker to build an entire car, they might forget a bolt or misalign a door. If you have one worker focus entirely on the chassis, another on the engine, and a third on the electronics, the final product is significantly more reliable. This “Output-as-Input” (OAI) philosophy is what powers high-level AI orchestration.
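To make the Output-as-Input idea concrete, here is a minimal Python sketch. `call_model` is a hypothetical placeholder for whichever LLM client you use (it only echoes part of the prompt), and the three prompt templates are illustrative, not a prescribed chain.

```python
from typing import Callable

def call_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM API call; replace with your provider's SDK."""
    # Echo the last line of the prompt so the data flow through the chain is visible.
    last_line = prompt.rsplit("\n", 1)[-1]
    return f"[model output for: {last_line}]"

# Each step has exactly one job; {input} receives the previous step's output.
STEPS = [
    "Extract the key facts from the text below.\n{input}",
    "Organize these facts into a logical outline.\n{input}",
    "Write a concise summary based on this outline.\n{input}",
]

def run_chain(steps: list[str], initial_input: str,
              model: Callable[[str], str] = call_model) -> str:
    data = initial_input
    for template in steps:
        # Output-as-Input: this step's result becomes the next step's input.
        data = model(template.format(input=data))
    return data

print(run_chain(STEPS, "Raw meeting notes go here."))
```

Passing `model` in as a parameter keeps every step swappable and lets you test the chain with a fake model instead of a paid API call.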

Why Chaining Outperforms Monolithic Prompts

When we cram too many instructions into one prompt, we hit the limits of the model’s “attention.” Large models are powerful, but they can overlook instructions buried in the middle of a long prompt (often called the “lost in the middle” effect) or hallucinate when juggling multiple constraints.

Research indicates that prompt chaining achieves up to 15.6% better accuracy than monolithic prompts. By isolating tasks, we gain:

- Accuracy Boosts: Each prompt gets the model’s full “cognitive” energy on a single goal.

- Hallucination Reduction: You can verify the facts in Step 1 before they are used to generate the creative copy in Step 2. Research on medical QA ensemble methods shows that combining specialized outputs leads to much more robust answers than relying on a single-pass response.

- Traceability and Debugging: If the final output is wrong, you can look back through the chain to see exactly which step failed. In a monolith, it’s a black box.
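Traceability mostly comes down to keeping every intermediate result. The sketch below, again assuming a hypothetical `model` callable and illustrative step names, records the prompt and output of each link so you can pinpoint the step where a chain went wrong.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class StepRecord:
    """One link in the chain: what went in and what came out."""
    name: str
    prompt: str
    output: str

def run_traced_chain(
    steps: list[tuple[str, str]],  # (step name, prompt template containing {input})
    initial_input: str,
    model: Callable[[str], str],
) -> tuple[str, list[StepRecord]]:
    trace: list[StepRecord] = []
    data = initial_input
    for name, template in steps:
        prompt = template.format(input=data)
        data = model(prompt)
        # Keep every intermediate result so a bad final answer can be traced back.
        trace.append(StepRecord(name, prompt, data))
    return data, trace

def print_trace(trace: list[StepRecord]) -> None:
    for i, rec in enumerate(trace, start=1):
        print(f"Step {i} ({rec.name}): {rec.output!r}")

if __name__ == "__main__":
    steps = [
        ("verify_facts", "List only the claims you can verify:\n{input}"),
        ("draft_copy", "Write product copy using these facts:\n{input}"),
    ]
    final, trace = run_traced_chain(steps, "Raw product notes", model=lambda p: p.upper())
    print_trace(trace)
```

In production you would write these records to a log or tracing tool rather than printing them, but the principle is the same: every handoff is inspectable.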

For a deeper look at the fundamentals of how these models process data, check out our Beginner’s guide to AI prompt engineering.

Core Types of AI Prompt Chaining Best Practices

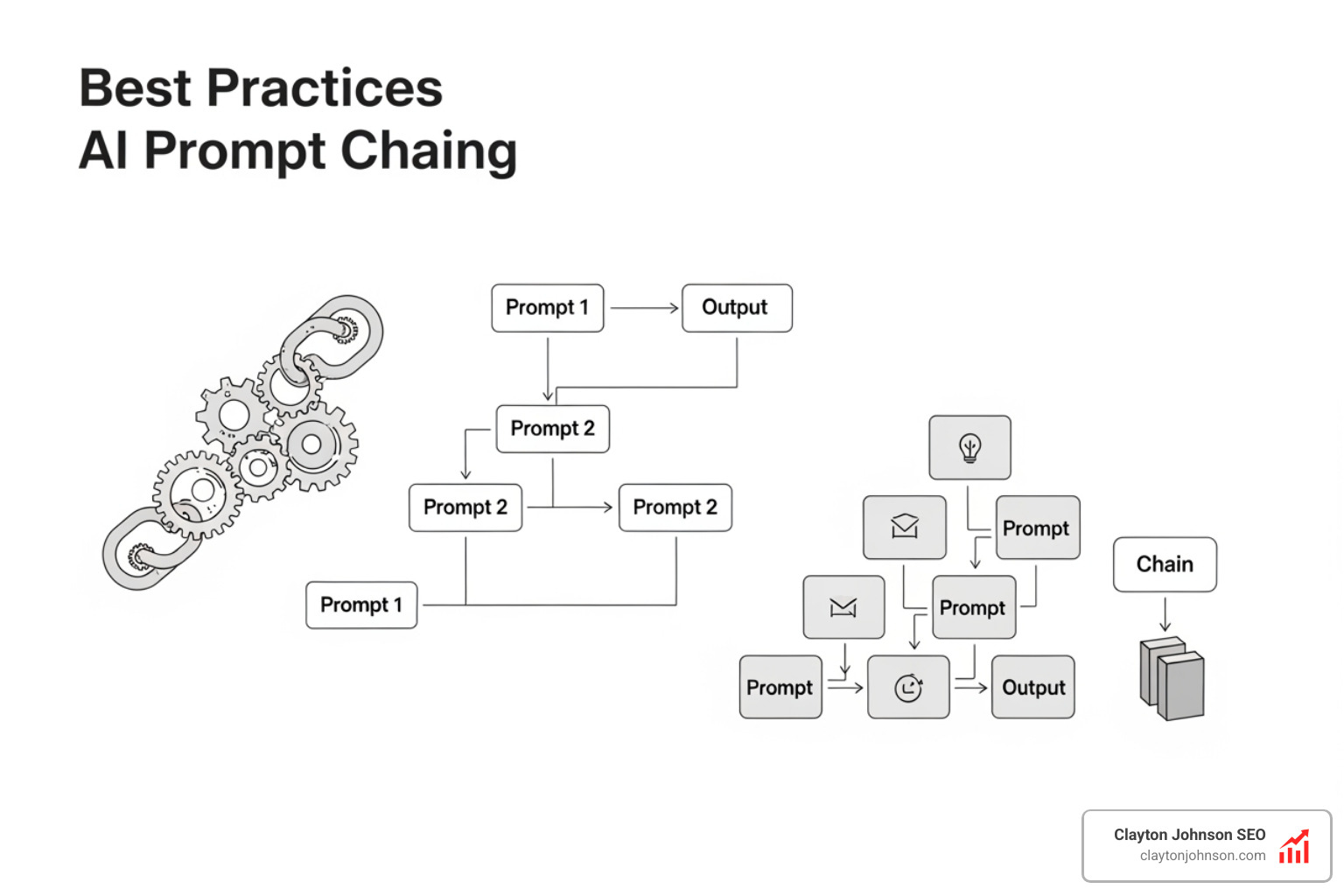

Not all chains are straight lines. Depending on your objective, we use different architectures:

- Sequential Chains: A simple A → B → C flow. Useful for document summarization or translation tasks.

- Branching Logic (Conditional): The AI evaluates the output of Step 1 and chooses path A or path B. For example, if a customer email is “Angry,” route to a “Refund Specialist” prompt; if “Happy,” route to a “Testimonial Request” prompt.

- Parallel Synthesis: Running multiple prompts at once to gather different perspectives, then using a final “aggregator” prompt to synthesize them.

- Recursive Loops: The AI repeats a task (like editing) until a specific quality threshold is met.
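Branching and parallel chains can be sketched in the same style. `route_email` mirrors the angry/happy example above, and `parallel_synthesis` fans out independent prompts with Python's `concurrent.futures` before one aggregator prompt merges them. The prompts, labels, and the neutral fallback branch are illustrative assumptions; `model` is any function that sends a prompt to your LLM and returns text.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Model = Callable[[str], str]  # any function that sends a prompt and returns text

def route_email(email: str, model: Model) -> str:
    # Step 1: classify, asking for a single constrained label.
    label = model(
        "Classify this customer email as ANGRY or HAPPY. Reply with one word.\n" + email
    ).strip().upper()
    # Step 2: branch to a specialist prompt based on the label.
    if label == "ANGRY":
        return model("You are a refund specialist. Draft a reply.\n" + email)
    if label == "HAPPY":
        return model("Ask this customer for a testimonial.\n" + email)
    # Unexpected label: take a safe default instead of guessing a branch.
    return model("Draft a neutral acknowledgement.\n" + email)

def parallel_synthesis(topic: str, perspectives: list[str], model: Model) -> str:
    prompts = [f"Analyze '{topic}' from the perspective of {p}." for p in perspectives]
    # These prompts do not depend on each other, so they can run concurrently.
    with ThreadPoolExecutor(max_workers=max(1, len(prompts))) as pool:
        drafts = list(pool.map(model, prompts))  # map preserves input order
    joined = "\n\n".join(f"<analysis>{d}</analysis>" for d in drafts)
    # Aggregator step: one final prompt merges the parallel results.
    return model(f"Synthesize these analyses into one recommendation:\n{joined}")
```

Threads suit this case because each call spends most of its time waiting on the network; if your client library is async, `asyncio.gather` would be the equivalent.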

For more technical patterns, refer to the Prompt Chaining fundamentals provided by the Prompt Engineering Guide.

Designing High-Performance Prompt Workflows

To build a chain that actually works, we have to stop treating the AI like a magic wand and start treating it like a junior employee who needs clear, modular instructions. The goal is to reduce the “cognitive load” on the model at every step.

Implementing AI Prompt Chaining Best Practices with Structured Data

One of the biggest mistakes in chaining is passing messy, unstructured text between steps. If Step 1 outputs a rambling paragraph, Step 2 has to waste “brainpower” just trying to parse what’s important.

We use structured formatting to maintain clean boundaries:

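- XML Tags: Wrapping inputs and outputs in clearly named tags separates instructions from data and tells the next step exactly where each piece of content begins and ends.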

- JSON Formatting: For data-heavy tasks, forcing the AI to output JSON ensures that the next prompt in your chain receives a predictable, machine-readable format.

- Markdown Boundaries: Using headers (H2, H3) and horizontal-rule separators (---) helps the model understand the hierarchy of the information you are providing. For more on this, see CommonMark help.
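Here is a minimal sketch of a clean handoff: step 1 is asked for JSON inside a tagged prompt, and the reply is parsed and checked in code before step 2 sees it. The tag names (`document`, `catalog`) and the `items` schema are hypothetical examples, not a required format.

```python
import json

def wrap(tag: str, content: str) -> str:
    """Wrap content in a named XML-style tag so the next prompt sees a clear boundary."""
    return f"<{tag}>\n{content}\n</{tag}>"

def build_extraction_prompt(document: str) -> str:
    # Step 1 prompt: instructions outside the tags, data inside them.
    return (
        "Extract every product name and price from the document.\n"
        'Respond with JSON only, shaped like {"items": [{"name": "Widget", "price": 9.99}]}.\n'
        + wrap("document", document)
    )

def parse_handoff(raw: str) -> list[dict]:
    """Validate step 1's JSON before step 2 ever sees it."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed output
    if not isinstance(data, dict) or not isinstance(data.get("items"), list):
        raise ValueError("expected an object with an 'items' list")
    for item in data["items"]:
        ok = (
            isinstance(item, dict)
            and isinstance(item.get("name"), str)
            and isinstance(item.get("price"), (int, float))
            and not isinstance(item.get("price"), bool)
        )
        if not ok:
            raise ValueError(f"malformed item: {item!r}")
    return data["items"]

def build_summary_prompt(items: list[dict]) -> str:
    # Step 2 prompt: re-serialize the validated data so the input format is predictable.
    return ("Write a one-paragraph summary of this catalog.\n"
            + wrap("catalog", json.dumps(items, indent=2)))

raw_reply = '{"items": [{"name": "Widget", "price": 9.5}]}'  # pretend step 1 returned this
print(build_summary_prompt(parse_handoff(raw_reply)))
```

Because validation raises an exception instead of passing bad data along, a malformed step 1 reply stops the chain at the point of failure.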

If you’ve found yourself frustrated with poor outputs, you might need to stop yelling at your AI and start prompt engineering.

Advanced Techniques: Self-Correction and Metaprompting

Once you master basic chains, you can move into “Agentic” territory:

- Self-Correction Loops: This involves a “Generator” prompt and a “Reviewer” prompt. The Reviewer checks the Generator’s work against a rubric and returns feedback to the Generator for a second draft.

- Metaprompting: This is the “Easy Button.” You write a prompt that tells the AI to design the prompt chain for you. It automates the automation process.

We’ve detailed how to execute these deeper reasoning paths in The ultimate Claude chain of thought tutorial.
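A Generator/Reviewer self-correction loop can be sketched as follows. `generator` and `reviewer` are hypothetical callables (they could be the same model with different prompts), and the “PASS” reply convention and `max_rounds` cap are assumptions chosen to keep the loop from running forever. The same structure also covers the recursive loops mentioned earlier.

```python
from typing import Callable

Model = Callable[[str], str]

def self_correct(task: str, rubric: str, generator: Model, reviewer: Model,
                 max_rounds: int = 3) -> tuple[str, bool]:
    """Return (draft, passed). passed is False if the cap was hit without approval."""
    draft = generator(f"Complete this task:\n{task}")
    for _ in range(max_rounds):
        # Reviewer step: grade the draft against an explicit rubric.
        review = reviewer(
            "Review the draft against the rubric. Reply PASS if it meets every point; "
            "otherwise list the problems.\n"
            f"<rubric>{rubric}</rubric>\n<draft>{draft}</draft>"
        )
        if review.strip().upper().startswith("PASS"):
            return draft, True
        # Generator step: revise using the reviewer's feedback.
        draft = generator(
            "Revise the draft to fix these problems.\n"
            f"<feedback>{review}</feedback>\n<draft>{draft}</draft>"
        )
    # Cap reached: the latest revision was never approved, so flag it for a human.
    return draft, False
```

The round cap matters: without it, a reviewer that never says PASS turns a quality loop into an open-ended token bill.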

Real-World Applications and Use Cases

Prompt chaining isn’t just a theoretical exercise; it’s a productivity multiplier for business operations. Projects using task breakdown methods are 45% more likely to finish on time and within budget.

| Use Case | Step 1 | Step 2 | Step 3 |
|---|---|---|---|
| Document QA | Extract relevant quotes from a 50-page PDF. | Validate quotes against the original text. | Synthesize quotes into a concise answer. |
| Content Creation | Research 5 top-ranking URLs for a keyword. | Create a comprehensive H2/H3 outline. | Draft sections one by one based on the outline. |
| Legal Analysis | Identify all “Change of Control” clauses. | Summarize the risk level of each clause. | Draft a summary email for the legal team. |

Anthropic provides excellent documentation on these types of chained workflows specifically for their Claude models.
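The Document QA row can be sketched in code. This version assumes step 1 returns a JSON list of quotes, and it swaps the table's validation step for a deterministic substring check (after collapsing whitespace), which is cheaper and cannot hallucinate. `model`, `document_qa`, and the prompt wording are hypothetical.

```python
import json
from typing import Callable

Model = Callable[[str], str]

def normalize(text: str) -> str:
    # Collapse whitespace so PDF line breaks don't cause false mismatches.
    return " ".join(text.split())

def document_qa(document: str, question: str, model: Model) -> dict:
    # Step 1: extract candidate quotes as a JSON list of strings.
    raw = model(
        "Return a JSON list of verbatim quotes from the document that help answer the question.\n"
        f"<question>{question}</question>\n<document>{document}</document>"
    )
    quotes = json.loads(raw)
    if not isinstance(quotes, list) or not all(isinstance(q, str) for q in quotes):
        raise ValueError("step 1 must return a JSON list of strings")
    # Step 2: validate in code, keeping only non-empty quotes that really appear in the source.
    source = normalize(document)
    verified = [q for q in quotes if normalize(q) and normalize(q) in source]
    rejected = [q for q in quotes if q not in verified]
    # Step 3: synthesize only from verified quotes; with none, decline rather than guess.
    if not verified:
        return {"answer": None, "verified": [], "rejected": rejected}
    quote_block = "\n".join(f"<quote>{q}</quote>" for q in verified)
    answer = model(
        "Answer the question using only these quotes.\n"
        f"<question>{question}</question>\n{quote_block}"
    )
    return {"answer": answer, "verified": verified, "rejected": rejected}
```

Returning the rejected quotes alongside the answer gives you a cheap hallucination signal to monitor over time.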

Content Creation and SEO Strategy

In our work at Clayton Johnson SEO, we use chaining to build “Authority-building ecosystems.” A single prompt might write a mediocre blog post, but a chain can:

- Analyze the search intent for a specific keyword.

- Identify “Information Gaps” in the current top 10 results.

- Draft a unique angle that fills those gaps.

- Optimize the final draft for SEO entities and readability.

This structured approach is what we call “growth infrastructure.” You can learn more about our philosophy on Artificial Intelligence/Prompt Engineering here.

Technical Workflows and Code Refactoring

For developers, prompt chaining is essential for maintaining code quality. Instead of asking an AI to “fix this file,” a chain might:

- Analyze the code for security vulnerabilities.

- Suggest specific refactors for performance.

- Generate unit tests for the new code.

- Update the documentation to reflect the changes.

This is particularly useful when improving frontend design through skills, where visual hierarchy and animations require multi-step refinement.

Essential AI Prompt Chaining Best Practices for Testing

You cannot build a high-performance chain without a testing rig. The average number of steps per trace in LangChain (a popular chaining framework) recently more than doubled, from 2.8 to 7.7, showing that workflows are becoming more complex and require better oversight.
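A testing rig can start small: treat each link as a function, feed it a canned model response, and assert on what it returns. The sketch below uses a hypothetical `extract_keywords` step; the `test_` functions are plain assertions that pytest will also discover if you run it on this file.

```python
import json
from typing import Callable

Model = Callable[[str], str]

def extract_keywords(text: str, model: Model) -> list[str]:
    """One link in a larger chain: returns a cleaned list of keywords."""
    raw = model(f"List the main keywords in this text as a JSON array of strings.\n<text>{text}</text>")
    cleaned: list[str] = []
    for kw in json.loads(raw):
        # Normalize so downstream steps always see lowercase, de-duplicated keywords.
        k = kw.strip().lower()
        if k and k not in cleaned:
            cleaned.append(k)
    return cleaned

def test_extract_keywords_cleans_output() -> None:
    # A canned response stands in for the model, so the test is fast and deterministic.
    def fake_model(prompt: str) -> str:
        return '["Prompt Chaining", "prompt chaining ", "SEO", ""]'
    assert extract_keywords("any text", fake_model) == ["prompt chaining", "seo"]

def test_prompt_contains_input() -> None:
    captured: list[str] = []
    def spy(prompt: str) -> str:
        captured.append(prompt)
        return "[]"
    extract_keywords("chaining guide", spy)
    assert "<text>chaining guide</text>" in captured[0]

if __name__ == "__main__":
    test_extract_keywords_cleans_output()
    test_prompt_contains_input()
    print("all step tests passed")
```

Testing the code around each prompt this way catches parsing and formatting regressions; judging the quality of the model's actual answers still needs a separate evaluation set.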

Managing Context and Error Propagation

The biggest risk in chaining is “Error Propagation.” If Step 1 makes a mistake, Step 2 will build on that mistake, and by Step 5, the output is useless.

- State Management: Keep a “log” of what has been decided in previous steps.

- Fallback Mechanisms: If the AI fails to generate JSON in Step 2, have a “Retry” prompt or a human-in-the-loop notification.

- Token Budget Tracking: Each step in the chain consumes tokens. While sequential chaining can sometimes reduce token usage by up to 85% (by not sending the whole document every time), you still need to monitor your “context window” to ensure the model doesn’t lose the thread.
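The three safeguards above can be combined in one wrapper around a JSON-producing step. This is a sketch under stated assumptions: `ChainState`, `json_step`, and `NeedsHuman` are hypothetical names, the retry prompt wording is illustrative, and `estimate_tokens` uses a rough four-characters-per-token rule of thumb rather than a real tokenizer.

```python
import json
from dataclasses import dataclass, field
from typing import Callable

Model = Callable[[str], str]

@dataclass
class ChainState:
    """Running log of decisions so later steps (and humans) can see what was settled."""
    decisions: list[str] = field(default_factory=list)
    tokens_used: int = 0

def estimate_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per English token); use your provider's tokenizer for real budgets.
    return max(1, len(text) // 4)

class NeedsHuman(Exception):
    """Raised when retries or the budget run out and a person should review the step."""

def json_step(prompt: str, model: Model, state: ChainState,
              max_retries: int = 2, token_budget: int = 8_000) -> dict:
    attempt_prompt = prompt
    for attempt in range(1, max_retries + 2):
        state.tokens_used += estimate_tokens(attempt_prompt)
        if state.tokens_used > token_budget:
            raise NeedsHuman(f"token budget exceeded ({state.tokens_used} > {token_budget})")
        raw = model(attempt_prompt)
        state.tokens_used += estimate_tokens(raw)
        try:
            result = json.loads(raw)
            if not isinstance(result, dict):
                raise ValueError("expected a JSON object")
        except ValueError as err:  # json.JSONDecodeError is a subclass of ValueError
            # Fallback: show the model its broken output and the error, then retry.
            attempt_prompt = (f"{prompt}\n\nYour previous reply was invalid ({err}). "
                              f"Reply with a valid JSON object only.\n<previous>{raw}</previous>")
            state.decisions.append(f"attempt {attempt}: invalid JSON, retrying")
            continue
        state.decisions.append(f"attempt {attempt}: valid JSON accepted")
        return result
    raise NeedsHuman(f"no valid JSON after {max_retries + 1} attempts")
```

Raising `NeedsHuman` instead of returning a best guess is the point of the fallback: a bad Step 2 stops the chain here rather than propagating into Step 5.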

To avoid the most common frustrations, read our guide Stop yelling at the bot: A guide to better Claude prompts.

Frequently Asked Questions about Prompt Chaining

How does chaining differ from Chain of Thought?

Chain of Thought (CoT) is an internal reasoning process where you ask the AI to “think step-by-step” within a single prompt. Prompt chaining is an external workflow where you break the task into separate API calls. Chaining offers more control, transparency, and the ability to intervene between steps.

When should I use a single prompt instead?

Chaining adds latency and can increase costs if not managed well. Use a single prompt for:

- Simple, one-off questions.

- Tasks where low latency is critical (e.g., real-time chat).

- Requests that don’t require high precision or multi-stage logic.

Which models handle chaining most effectively?

Models with high “instruction following” scores excel here. Claude 3.5 Sonnet and GPT-4o are currently industry leaders for chaining because they handle structured data (like XML and JSON) with high reliability. Reasoning models like OpenAI’s o1 series are also powerful for the “logic” steps within a chain.

Conclusion

The shift from writing “monoliths” to building “chains” is the shift from playing with AI to building with it. At Clayton Johnson SEO, we believe that clarity leads to structure, and structure leads to leverage.

By applying AI prompt chaining best practices, you aren’t just getting better text; you are building a structured growth architecture. Whether you are automating your SEO strategy or refactoring a codebase, the assembly-line approach ensures that your AI efforts result in compounding growth rather than one-off experiments.

Ready to move beyond simple prompts? Explore our full library of SEO strategy and content architecture frameworks to see how we turn these techniques into measurable business results.