Fine-Tuning LLMs for Marketing: Teaching Your Bot to Sell

Why Fine-Tuning LLMs for Marketing Is the Growth Edge Most Teams Are Missing

Fine-tuning LLMs for marketing means taking a pre-trained AI model and retraining it on your own curated data — so it learns your brand voice, follows your SEO patterns, and stops producing the generic, off-brand content that wastes your team’s time.

Here’s the fast answer if you need it:

How to fine-tune LLMs for marketing (quick overview):

- Define your use case — brand voice, SEO content, product descriptions, FAQs

- Curate 100–1,000 high-quality prompt/completion pairs from your best-performing content

- Choose a provider — OpenAI, Google Vertex AI, or Hugging Face

- Train and validate using a held-out test set and a clear rubric

- Deploy into your content stack and version your models for A/B testing

- Iterate quarterly based on live performance metrics

71% of organizations regularly use generative AI in at least one business function. Yet only 25.6% of marketers say AI-generated content actually outperforms human content.

That gap is the problem.

Most teams are stuck at basic prompt engineering — asking a generic model to “write like us” and then spending hours fixing the output. The model doesn’t know your brand. It doesn’t know your audience. And it definitely doesn’t know your SEO strategy.

Fine-tuning changes that. Instead of fighting the model every time, you teach it — once — and it starts generating content that sounds like you, ranks like you, and converts like you.

The results back this up. Internal tests at one SEO content platform showed a lightweight fine-tuned model cut editing time per article by 37% compared to GPT-4 out of the box. Other teams report 30–50% reductions in long-term editing and revision costs.

This guide walks you through exactly how to get there — from dataset prep to deployment to measuring ROI.

I’m Clayton Johnson, an SEO strategist and growth operator who builds AI-augmented marketing systems for founders and marketing leaders at the $500K–$20M ARR stage. My work at the intersection of technical SEO, content architecture, and fine-tuning LLMs for marketing is what this guide is built on — let’s get into the framework.

Understanding Fine-Tuning vs. Prompting and RAG

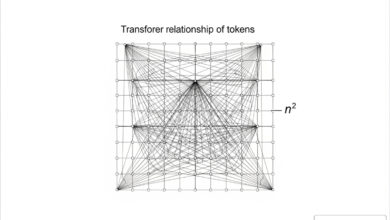

To master fine-tuning LLMs for marketing, we first need to clear up the “AI alphabet soup.” Many marketers confuse fine-tuning with prompt engineering or Retrieval-Augmented Generation (RAG). Think of it like this: Prompting is giving a student a better set of instructions; RAG is giving them an open-book exam; and fine-tuning is sending them to a specialized graduate school for your brand.

Prompt Engineering: The Foundation

Prompt engineering involves crafting detailed instructions within the “context window” (the model’s short-term memory). While strategic prompting can reduce revision cycles by 40-60%, it has limits. You are paying for every word of those instructions in every single request, which increases latency and costs.

RAG: The Fact-Checker

RAG lets an LLM pull from current, authoritative data sources at request time rather than relying solely on its static training data. It’s excellent for competitive analysis or real-time pricing, and implementation can reduce research time by 60%. However, RAG doesn’t fundamentally change how the model speaks; it just changes what it knows.
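
To make the open-book idea concrete, here is a minimal sketch of the retrieval step in Python. The documents, the word-overlap scoring, and the function names are all illustrative assumptions; production systems typically use embedding-based search over a vector database rather than simple word overlap.

```python
import re

# Hypothetical knowledge base; in practice this lives in an external store.
DOCS = [
    "The Pro plan costs $49 per month and includes 10 seats.",
    "Refunds are available within 30 days of purchase.",
    "Our office is closed on public holidays.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, docs, k=1):
    """Return the k docs that share the most words with the question."""
    q = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, docs):
    """Prepend the retrieved facts so the model answers from them."""
    context = "\n".join(retrieve(question, docs))
    return f"Use only these facts:\n{context}\n\nQuestion: {question}"

print(build_prompt("How much does the Pro plan cost per month?", DOCS))
```

Notice that nothing here touches the model itself: the facts change, but the voice the model answers in does not.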

Fine-Tuning: The Specialist

Fine-tuning actually modifies the model’s weights. We are baking your brand’s DNA directly into the “neurons” of the AI. This reduces the need for long, repetitive instructions in your prompts, leading to significant latency reduction and lower inference costs over time.
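
The cost argument is simple arithmetic, sketched below. Every number is a hypothetical placeholder rather than a real provider price, and fine-tuned models are often billed at a different per-token rate than base models, so plug in your own provider's pricing before drawing conclusions.

```python
def monthly_prompt_cost(prompt_tokens, requests_per_month, price_per_1k_tokens):
    """Input-token cost per month for a prompt of fixed size."""
    return prompt_tokens / 1000 * price_per_1k_tokens * requests_per_month

# All figures below are hypothetical placeholders, not real provider prices.
LONG_PROMPT_TOKENS = 1200   # style guide and examples repeated in every request
SHORT_PROMPT_TOKENS = 150   # brief instruction sent to a fine-tuned model
REQUESTS = 10_000
BASE_PRICE = 0.002          # assumed $ per 1k input tokens, base model
TUNED_PRICE = 0.004         # assumed $ per 1k input tokens, fine-tuned model

base = monthly_prompt_cost(LONG_PROMPT_TOKENS, REQUESTS, BASE_PRICE)
tuned = monthly_prompt_cost(SHORT_PROMPT_TOKENS, REQUESTS, TUNED_PRICE)
print(f"base: ${base:.2f}, fine-tuned: ${tuned:.2f}")
```

With these placeholder numbers the shorter prompt comes out cheaper even at double the per-token rate, but the comparison flips if request volume is low or the prompts are already short.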

| Feature | Prompting | RAG | Fine-Tuning |
|---|---|---|---|
| Primary Goal | Better instructions | Accuracy & facts | Style & pattern matching |
| Data Scope | Minimal | Massive (External DB) | Targeted (100-1k pairs) |
| Setup Speed | Minutes | Days/Weeks | Weeks |
| Cost per Request | High (Long prompts) | Medium | Low (Short prompts) |
| Marketing Use | Ad-hoc posts | Product specs/Knowledge base | Brand voice/SEO structure |

Why Strategic Fine-Tuning LLMs for Marketing is the New Competitive Standard

Generative AI adoption sits at 71% of organizations, yet most output remains mediocre. Companies are drowning in content that feels “AI-ish.” Fine-tuning LLMs for marketing is how we move from generic to authoritative.

Brand Voice and SEO Optimization

Generic models often fail at strict brand guidelines. They might use “synergy” when your brand voice is “no-nonsense.” Fine-tuning trains the model on your top-performing, on-brand assets. This ensures every output follows your specific SEO content architecture—like internal linking patterns and keyword placement—without you having to remind it every time.

Hallucination Mitigation and Safety

Hallucinations occur when AI generates plausible but false information. This can be a legal nightmare, as seen in the Air Canada chatbot hallucination case, where a chatbot made up a refund policy. Fine-tuning grounds the model in your specific domain, making it far more reliable for niche industries.

Domain Specificity

Standard models have “knowledge cutoffs” and lack specialized vocabulary. Just as BloombergGPT and Med-PaLM 2 are specialized for finance and medicine, marketers need models that understand their specific product categories and customer pain points.

The Technical Workflow: How to Specialize Your Models

When we talk about fine-tuning LLMs for marketing, we are usually referring to Supervised Fine-Tuning (SFT). This is a process where we provide “labeled” data—essentially a list of questions (prompts) and the perfect answers (completions).

The goal is pattern matching. We want the model to see 500 examples of how we write a blog intro and think, “Aha, I see the rhythm, the tone, and the SEO hooks they use.” This is also why many teams invest in SEO content marketing services first: the baseline content has to be good enough to train on.

Curating High-Quality Datasets for Fine-Tuning LLMs for Marketing

Your model is only as good as your data. If you train on “meh” content, you get “meh” output.

- Quantity vs. Quality: You don’t need millions of rows. Start with 100–300 high-quality examples. Diminishing returns often kick in after 1,000.

- The 1:5 Rule: Aim for a 1:5 ratio between the length of your input instructions and the output completion for instruct models.

- Formatting: Most providers require JSONL files. Use the OpenAI Tokenizer tool to ensure your examples don’t exceed context limits.

- Diversity: Include various marketing formats—emails, blog posts, social captions—so the model doesn’t become a one-trick pony.

- Validation: Always hold back 5-10% of your data to test the model later. This held-out set lets you check that the model isn’t just memorizing (overfitting) but is actually learning patterns.
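
The formatting and validation steps above can be sketched in a few lines of Python. This assumes the chat-style `messages` record layout that OpenAI documents for fine-tuning chat models; other providers use different schemas, so check their docs. The example pairs, the system message, the file names, and the 10% split are all placeholders.

```python
import json
import random

def to_record(prompt, completion, system="You write in our brand voice."):
    """Wrap one prompt/completion pair in a chat-style training record."""
    return {"messages": [
        {"role": "system", "content": system},
        {"role": "user", "content": prompt},
        {"role": "assistant", "content": completion},
    ]}

def split_holdout(records, holdout_fraction=0.1, seed=42):
    """Shuffle a copy of the records and hold back a fraction for validation."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_holdout = max(1, round(len(shuffled) * holdout_fraction))
    return shuffled[n_holdout:], shuffled[:n_holdout]

def write_jsonl(records, path):
    """Write one JSON object per line, which is all JSONL means."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")

# Placeholder pairs; real ones come from your best-performing content.
pairs = [(f"Write an intro about topic {i}", f"On-brand intro {i}") for i in range(20)]
records = [to_record(p, c) for p, c in pairs]
train, validation = split_holdout(records)
write_jsonl(train, "train.jsonl")
write_jsonl(validation, "validation.jsonl")
print(len(train), len(validation))
```

Fixing the random seed keeps the split reproducible, so the same examples stay in the validation set across training runs and results remain comparable.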

Technical Implementation: Best Practices for Fine-Tuning LLMs for Marketing

Once your data is ready, you need a place to train.

- Choose a Provider: OpenAI is the easiest for most marketing teams. Google Vertex AI offers great enterprise integration. Hugging Face is the go-to for open-source models like Llama.

- Efficiency with PEFT: You don’t always need to train the whole model. Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA (low-rank adaptation) and QLoRA freeze the original model and train only a small set of added weights, a tiny fraction of the total. This is faster and cheaper, and it helps prevent the model from “forgetting” its basic logic.

- Hyperparameter Tuning:

  - Epochs: How many times the model sees the data. 2-3 is usually the “sweet spot.” Too many leads to overfitting.

  - Learning Rate: How aggressively the model updates its weights. Start small.

- Tracking: Use tools like Weights & Biases experiment tracking to visualize your training “loss” (error rate) and compare different versions of your model.
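
To see why LoRA is so much cheaper than full training, it helps to count parameters. LoRA keeps a layer's original weight matrix frozen and learns a low-rank update built from two small matrices. The sketch below does only that arithmetic; the layer size and rank are illustrative assumptions, not settings from any particular model.

```python
def lora_trainable_fraction(d_out, d_in, rank):
    """Fraction of a layer's weight count that LoRA trains.

    The frozen weight matrix has d_out * d_in entries. LoRA instead
    trains B (d_out x rank) and A (rank x d_in), whose product is
    the update added to the frozen weights.
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return lora / full

# Illustrative numbers: a 4096 x 4096 projection with rank 8.
fraction = lora_trainable_fraction(4096, 4096, 8)
print(f"{fraction:.2%} of the layer's weight count is trained")
```

For this hypothetical layer, fewer than half a percent of the weights are trained, which is where the speed and cost savings come from.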

Measuring ROI and Avoiding Common Pitfalls

We don’t fine-tune just for the sake of cool tech; we do it for leverage. Organizations implementing comprehensive measurement see 15-25% performance improvements in their AI systems.

The ROI of Fine-Tuning

The most immediate win is time. If a fine-tuned model cuts editing time by 37%, a content team whose hours go mostly to editing can produce up to roughly 1.6 times the volume without increasing headcount. Furthermore, human-in-the-loop ROI statistics show that organizations combining AI with expert review achieve 68% higher ROI than those using AI alone.
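
How much extra volume a cut in editing time buys depends on how much of the production cycle editing actually occupies. Here is a minimal sketch of that calculation; the editing shares are hypothetical, and the model assumes all other work per article stays the same.

```python
def throughput_multiplier(editing_share, editing_reduction):
    """Articles-per-hour multiplier after editing time shrinks.

    editing_share: fraction of total time per article spent editing (0 to 1).
    editing_reduction: fractional cut in editing time, e.g. 0.37 for 37%.
    """
    new_time = 1 - editing_share * editing_reduction
    return 1 / new_time

for share in (0.5, 0.75, 1.0):  # hypothetical editing shares
    print(f"editing share {share:.0%}: {throughput_multiplier(share, 0.37):.2f}x")
```

Even if editing were the entire workload, a 37% cut works out to about 1.59x output, so measure your team's real editing share before forecasting volume.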

Pitfalls to Avoid

- Overfitting: This happens when the model learns your training data too well. It might start repeating exact phrases from your dataset rather than generating new ideas.

- Catastrophic Forgetting: If you train too aggressively, the model might lose its general reasoning abilities. It might become a great copywriter but forget how to do basic math or follow logic.

- Neuron Overwrite: Modern LLMs store many unrelated pieces of knowledge in overlapping sets of weights. Fine-tuning can sometimes overwrite critical general insights with your specific brand data.

To mitigate these, we use Model Versioning. Never just replace your model; run A/B tests to ensure the new version actually performs better on your KPIs (like bounce rate, conversion, or SEO rankings) before a full rollout.
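
One common way to decide whether the new version really wins on a conversion KPI is a two-proportion z-test. The sketch below uses only Python's standard library; the traffic numbers are hypothetical, and the 5% significance threshold is a conventional choice rather than a rule.

```python
import math

def ab_test(conv_a, n_a, conv_b, n_b):
    """One-sided two-proportion z-test: is B's conversion rate higher than A's?"""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z > z) under the null
    return z, p_value

# Hypothetical traffic: A = current model, B = newly fine-tuned version.
z, p_value = ab_test(conv_a=200, n_a=5000, conv_b=245, n_b=5000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
if p_value < 0.05:
    print("B converts better at the 5% level; consider rolling it out.")
else:
    print("No clear winner yet; keep the current version and collect more data.")
```

Slower-moving KPIs such as SEO rankings need longer observation windows and do not fit this simple test, so treat it as a check for conversion-style metrics only.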

Frequently Asked Questions about LLM Fine-Tuning

Does fine-tuning leak proprietary marketing data?

This is a common concern. Most major providers like OpenAI and Google isolate your fine-tuned models. Your data is not used to train their base models for other customers. For maximum security, enterprises often use on-premise or “private cloud” open-source models (like Llama) where the data never leaves their own infrastructure.

When should I choose RAG over fine-tuning for content?

Choose RAG when your content relies on “dynamic facts” that change daily (like stock prices or inventory). Choose fine-tuning LLMs for marketing when you need to nail a specific “style” or “format” (like a very specific brand voice). The RAG market growth suggests many teams are moving toward a hybrid approach: fine-tuning for the “how” (style) and RAG for the “what” (facts).

How does fine-tuning impact SEO and Google compliance?

Google’s stance is clear: they reward high-quality, helpful content, regardless of how it’s produced. Fine-tuning actually helps with compliance because it allows you to bake E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) patterns directly into the model. By training on content that features quotes, statistics, and citations—which get 30-40% higher visibility in LLM responses—you are naturally aligning with Google’s quality standards.

Conclusion

The era of “just prompt it” is ending. As generative AI traffic grows by over 1,200%, the competitive advantage will belong to those who build proprietary AI assets. Fine-tuning LLMs for marketing is the bridge between generic automation and a true growth engine.

At Clayton Johnson, we are building Demandflow.ai to provide the structured growth architecture companies need to scale. We don’t just do content marketing; we build the infrastructure—the taxonomy-driven SEO systems and AI-augmented workflows—that turns AI from a toy into a compounding asset.

Clarity leads to structure, and structure leads to leverage. If you’re ready to stop fighting with generic AI and start building a model that understands your business, it’s time to build your AI model strategy with a partner who understands the architecture of growth.