Why Most LLM Leaderboards are Actually Bullshit

Why Most LLM Leaderboard Scores Are Misleading (And What to Do Instead)

LLM benchmark leaderboards are ranking systems that score large language models on standardized tests so you can compare performance across models.

Here is what you need to know fast:

- What they are: Platforms that run LLMs through structured benchmarks and publish ranked scores

- What they measure: Tasks like reasoning, math, coding, language understanding, and instruction following

- Popular examples: Hugging Face Open LLM Leaderboard, LMSYS Chatbot Arena, MTEB, BigCodeBench, Stanford HELM

- How scores are calculated: Metrics like accuracy, F1 score, BLEU, perplexity, and Elo ratings from human votes

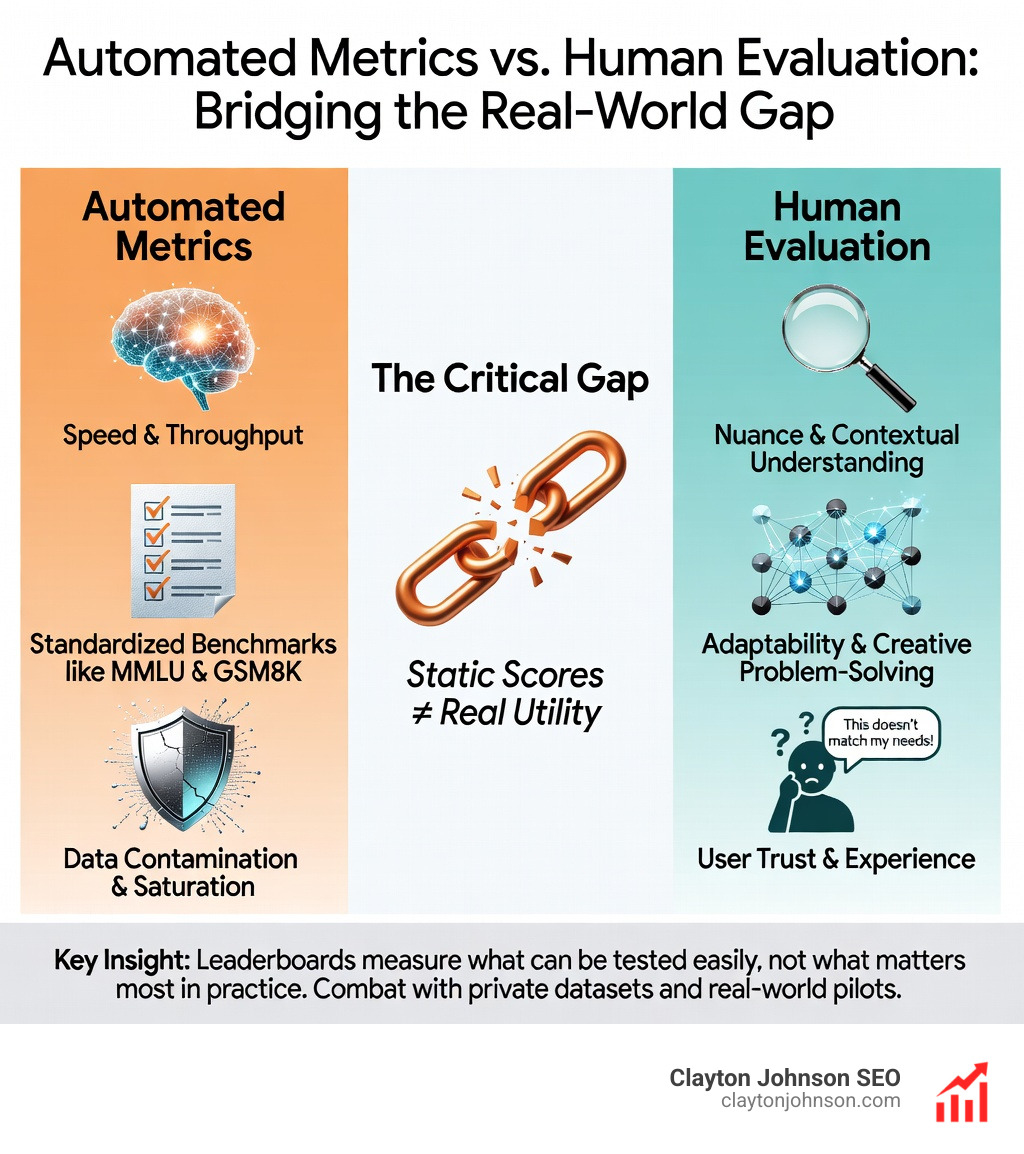

- The core problem: High benchmark scores often do not predict how a model performs in your actual use case

The problem is not that leaderboards are useless. It is that they are widely misread.

A model can rank #1 on one leaderboard and #5 on another. That is not a glitch. It reflects real differences in what each leaderboard measures, how it scores, and whether the training data contaminated the test set.

New model releases drop constantly, each accompanied by benchmark scores and bold claims. Most people have no framework to evaluate those numbers. This guide gives you one.

I’m Clayton Johnson, an SEO strategist and growth operator who works at the intersection of AI systems, content architecture, and measurable marketing outcomes. Understanding how LLM benchmark leaderboards work is foundational to making smart model selection decisions in AI-assisted workflows. Let’s break down what these systems actually measure, where they fall short, and how to evaluate models in a way that reflects real-world performance.

LLM benchmark leaderboards explained: The Good, The Bad, and The Contaminated

LLM benchmark leaderboards exist to separate genuine progress from marketing fluff. With new models arriving weekly, these rankings provide a centralized way to track who is actually leading the state of the art (SOTA).

The most prominent example is the Hugging Face Open LLM Leaderboard. In its original version, it evaluated open-source models with the EleutherAI LM Evaluation Harness across six benchmarks. These static benchmarks are the “SATs” of the AI world. They include:

- ARC (AI2 Reasoning Challenge): Grade-school science questions that require more than simple pattern matching.

- HellaSwag: A test of commonsense inference where models must predict the end of a sentence. While easy for humans (~95% accuracy), it famously tricks AI into choosing absurd but linguistically likely endings.

- MMLU (Massive Multitask Language Understanding): The industry heavyweight covering 57 subjects across STEM, the humanities, and more.

- TruthfulQA: A critical benchmark, introduced in the TruthfulQA paper, that measures whether a model mimics human falsehoods or provides accurate facts.

- GSM8K: High-quality grade school math word problems that require multi-step reasoning.

- Winogrande: An adversarial challenge focused on pronoun resolution and linguistic ambiguity.

While these provide a standard “weight class” for comparison, they suffer from a major flaw: saturation. When models start scoring 90%+ on a test, the test no longer differentiates between “good” and “great.”
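One way to see why a saturated test stops differentiating is to put error bars on the scores. The sketch below uses a plain normal-approximation confidence interval; the two accuracies and the 1,000-question test size are made-up numbers for illustration, not results from any real leaderboard.

```python
import math

def accuracy_interval(accuracy: float, n_questions: int, z: float = 1.96) -> tuple:
    """Approximate 95% confidence interval for an accuracy score.

    Uses the normal approximation to the binomial, which is a
    simplification but good enough to show the effect.
    """
    stderr = math.sqrt(accuracy * (1.0 - accuracy) / n_questions)
    return (accuracy - z * stderr, accuracy + z * stderr)

def intervals_overlap(a: tuple, b: tuple) -> bool:
    """True when the two (low, high) intervals share any values."""
    return a[0] <= b[1] and b[0] <= a[1]

# Hypothetical scores on a hypothetical 1,000-question benchmark.
model_a = accuracy_interval(0.91, 1000)
model_b = accuracy_interval(0.92, 1000)

print(f"Model A: {model_a[0]:.3f} to {model_a[1]:.3f}")
print(f"Model B: {model_b[0]:.3f} to {model_b[1]:.3f}")
print("Overlap:", intervals_overlap(model_a, model_b))
```

When the intervals overlap like this, a one-point gap near the top of the leaderboard may be noise rather than a real difference.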

Why Static Scores Fail to Predict Real-World Performance

Static benchmarks are increasingly under fire because they are “fixed” targets. If a model’s training data includes the questions and answers from MMLU, a high score does not show that the model is “smart”; it is just reciting a cheat sheet. This is known as data contamination.
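A rough way to look for contamination is to check how much of a benchmark question appears word for word in a sample of training text. The sketch below is a simple n-gram overlap heuristic, not the method any particular leaderboard uses; the function names and the example strings are hypothetical.

```python
def ngrams(text: str, n: int) -> set:
    """Return the set of lowercase word n-grams in the text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def overlap_ratio(benchmark_item: str, corpus_text: str, n: int = 8) -> float:
    """Share of the item's n-grams that also appear verbatim in the corpus text."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0  # item is shorter than n words, nothing to compare
    corpus_grams = ngrams(corpus_text, n)
    return len(item_grams & corpus_grams) / len(item_grams)

# Hypothetical benchmark item and two hypothetical training snippets.
item = "what is the capital of france paris"
leaked = "quiz dump: What is the capital of France Paris is the answer"
clean = "Paris is lovely in the spring"

print(overlap_ratio(item, leaked, n=4))
print(overlap_ratio(item, clean, n=4))
```

A high ratio is a warning sign worth investigating, not proof. Paraphrased or translated leaks would slip past an exact-match check like this one.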

We see this frequently when companies optimize models specifically to rank high on public leaderboards. This overfitting produces high scores on paper but “fragile” performance in production. For example, changing the order of multiple-choice answers can sometimes cause a model’s score to plummet, revealing that it learned answer positions or surface patterns rather than the underlying logic.
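The answer-reordering check is easy to try on your own questions. Here is a minimal sketch, assuming you can wrap your model in a function that takes a question and a list of options and returns the index of its choice. That `answer_fn` interface and the two stand-in "models" are hypothetical.

```python
import random
from typing import Callable, Sequence

# Hypothetical interface: (question, options) -> index of the chosen option.
AnswerFn = Callable[[str, Sequence[str]], int]

def order_consistency(answer_fn: AnswerFn, question: str, options: Sequence[str],
                      correct: str, trials: int = 20, seed: int = 0) -> float:
    """Fraction of shuffled orderings in which the model still picks the correct option."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        shuffled = list(options)
        rng.shuffle(shuffled)
        picked = shuffled[answer_fn(question, shuffled)]
        hits += picked == correct
    return hits / trials

# Stand-ins for real models: one is position-biased, one knows the answer.
def always_first(question: str, options: Sequence[str]) -> int:
    return 0

def knows_answer(question: str, options: Sequence[str]) -> int:
    return list(options).index("Paris")

question = "What is the capital of France?"
options = ["Paris", "Lyon", "Nice", "Lille"]

print(order_consistency(knows_answer, question, options, "Paris"))
print(order_consistency(always_first, question, options, "Paris"))
```

A model that has learned the material should score close to 1.0 regardless of ordering. A large drop suggests it is leaning on position.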

To combat contamination and leaderboard overfitting, the SEAL LLM Leaderboards use private, non-public datasets. By keeping the “test paper” hidden, they prevent developers from training their models on the evaluation data.

Common metrics used in these rankings include:

- Accuracy: The percentage of correct answers.

- F1 Score: A balance between precision and recall, vital for tasks like classification.

- BLEU: Often used in translation, though it is increasingly criticized for not capturing nuance.

- Perplexity: A measure of how well a probability model predicts a sample; lower is generally “better” for language fluency.
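Three of the metrics above are simple enough to compute by hand. The sketch below shows accuracy, binary F1, and perplexity in plain Python with made-up inputs. BLEU is left out because it needs n-gram matching against reference translations and is better taken from an established library.

```python
import math

def accuracy(preds: list, labels: list) -> float:
    """Share of predictions that match the labels."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)

def f1_score(preds: list, labels: list, positive=1) -> float:
    """Harmonic mean of precision and recall for one positive class."""
    tp = sum(p == positive and y == positive for p, y in zip(preds, labels))
    fp = sum(p == positive and y != positive for p, y in zip(preds, labels))
    fn = sum(p != positive and y == positive for p, y in zip(preds, labels))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def perplexity(token_log_probs: list) -> float:
    """exp of the average negative log-probability (natural log) per token."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))

# Made-up predictions and labels for illustration.
preds = [1, 0, 1, 1]
labels = [1, 0, 0, 1]
print(accuracy(preds, labels))
print(f1_score(preds, labels))

# A model that assigns probability 0.25 to every token has perplexity 4.
print(perplexity([math.log(0.25)] * 4))
```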

However, research on benchmark sensitivity shows that even slight changes in prompting (like adding a space or a specific instruction) can shift rankings significantly.

The Top 10 Ranking Systems for Every Use Case

Not all leaderboards are created equal. Depending on whether you are building a chatbot, a coding assistant, or a medical diagnostic tool, you need to look at different signals.

The LMSYS Chatbot Arena is the current gold standard for “vibe checks.” It uses a pairwise human voting system where users interact with two anonymous models and vote for the better response. This feeds into an Elo rating system—the same system used to rank chess players. Because it relies on millions of real-world interactions, it is much harder to “game” than static tests.

For those focused on the infrastructure side, Artificial Analysis provides essential data on latency, throughput (tokens per second), and price. It’s one thing for a model to be smart; it’s another for it to be fast enough for a real-time SEO application.
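If you log timings from your own requests, the same three numbers are straightforward to derive. This is a rough sketch: the `RunStats` fields, the timings, and the per-million-token prices are all hypothetical placeholders, so substitute your provider's real figures.

```python
from dataclasses import dataclass

@dataclass
class RunStats:
    output_tokens: int     # tokens generated in the response
    first_token_s: float   # seconds until the first token arrived (latency)
    total_s: float         # seconds until the response finished

def throughput_tokens_per_s(stats: RunStats) -> float:
    """Generation speed after the first token has arrived."""
    generation_s = stats.total_s - stats.first_token_s
    if generation_s <= 0:
        raise ValueError("total_s must be greater than first_token_s")
    return stats.output_tokens / generation_s

def cost_usd(input_tokens: int, output_tokens: int,
             input_price_per_m: float, output_price_per_m: float) -> float:
    """Request cost given prices quoted per one million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000

# Hypothetical measurement: 500 tokens, first token after 0.5 s, done at 5.5 s.
stats = RunStats(output_tokens=500, first_token_s=0.5, total_s=5.5)
print(throughput_tokens_per_s(stats))

# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
print(cost_usd(1000, 500, 3.0, 15.0))
```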

General Knowledge and LLM benchmark leaderboards explained

As MMLU becomes saturated, new challenges like MMLU-Pro and GPQA have emerged. MMLU-Pro increases the difficulty by expanding the choice set from four to ten options and focusing on reasoning. GPQA (Graduate-Level Google-Proof Q&A) uses questions written by experts that are designed to be “unsearchable,” making it a much better differentiator for high-end models. The original MMLU dataset is still publicly available, but keep in mind that experts now view it as a baseline rather than a ceiling.

Specialized Domains and LLM benchmark leaderboards explained

Specialized tasks require specialized rankings:

- Embeddings: The MTEB Leaderboard evaluates embedding models across 58 datasets and 112 languages.

- Coding: BigCodeBench uses over 1,000 functional programming tasks to test if a model can actually write working code, not just snippets.

- Medical: The Open Medical LLM Leaderboard tests models on professional medical exams like MedQA and PubMedQA.

- Hallucinations: The HHEM (Hughes Hallucination Evaluation Model) leaderboard specifically measures how often a model makes things up when it works from supplied source text, as in RAG (Retrieval-Augmented Generation) setups.

How to Run Your Own Evaluations and Ignore the Hype

At Demandflow.ai, we believe in structured growth architecture. In the context of AI, that means building your own evaluation pipeline rather than blindly trusting a public leaderboard.

If you want to move beyond the hype, you should run your own tests using tools like the DeepEval framework or the EleutherAI LM Evaluation Harness. These allow you to plug in your own data—your customer support logs, your specific SEO content requirements, or your proprietary code—to see which model actually moves the needle.
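Before adopting a full framework, it can help to see how small the core loop is. The sketch below is plain Python and does not use the DeepEval or LM Evaluation Harness APIs. The test cases, the substring check, and the two stand-in models are hypothetical; in practice each `model_fn` would wrap a call to a real model.

```python
from typing import Callable

# Hypothetical test cases. In practice these come from your own logs or briefs.
CASES = [
    {"prompt": "Reply with the two-letter US state code for Minnesota.", "expected": "MN"},
    {"prompt": "Reply with the two-letter US state code for Texas.", "expected": "TX"},
]

def contains_expected(output: str, expected: str) -> bool:
    """Crude pass/fail check: the expected string appears in the output."""
    return expected.lower() in output.lower()

def run_eval(model_fn: Callable[[str], str], cases: list,
             check: Callable[[str, str], bool] = contains_expected) -> float:
    """Run every case through the model and return the pass rate."""
    passed = sum(check(model_fn(case["prompt"]), case["expected"]) for case in cases)
    return passed / len(cases)

# Stand-ins for real model calls.
def model_a(prompt: str) -> str:
    return "MN" if "Minnesota" in prompt else "TX"

def model_b(prompt: str) -> str:
    return "MN"

for name, fn in [("model_a", model_a), ("model_b", model_b)]:
    print(name, run_eval(fn, CASES))
```

A substring match is only a starting point. For open-ended output, swap `check` for a stricter scorer that fits your task.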

We also suggest looking at what LLM throughput benchmarks mean to understand the trade-offs between intelligence and speed.

What is the difference between MMLU and GPQA?

MMLU is broad: its 57 subjects range from elementary to professional difficulty. GPQA is deep and expert-level, with graduate-level science questions. While a skilled non-expert might score only 34% on GPQA even with web access, frontier models are now pushing into the 90% range, which is why it is widely used for testing “Ph.D.-level” reasoning.

How does the Elo rating system work in Chatbot Arena?

The Elo system estimates each model’s relative skill level from head-to-head results. If a “weak” model beats a “strong” model, it gains far more rating points than it would for an expected win. This crowdsourced signal is crucial because it captures human preference, which often favors conciseness, formatting, and tone: things a static math test can’t measure.
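The textbook Elo update makes this concrete. The sketch below uses the standard chess formula with a K-factor of 32 and made-up ratings. Those values are assumptions for illustration, and Chatbot Arena's own rating computation may differ in its details.

```python
def expected_score(rating: float, opponent_rating: float) -> float:
    """Probability that the first player wins, according to Elo."""
    return 1.0 / (1.0 + 10 ** ((opponent_rating - rating) / 400))

def rating_gain(winner_rating: float, loser_rating: float, k: float = 32.0) -> float:
    """Points the winner gains (and the loser gives up) after one vote."""
    return k * (1.0 - expected_score(winner_rating, loser_rating))

# Hypothetical ratings for a "weak" and a "strong" model.
weak, strong = 1000.0, 1200.0

upset_gain = rating_gain(weak, strong)       # weak model wins the vote
expected_gain = rating_gain(strong, weak)    # strong model wins the vote

print(round(upset_gain, 1))
print(round(expected_gain, 1))
```

With these numbers the upset is worth roughly three times as many points as the expected result, which is why a few surprising wins move a model up the board quickly.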

Can models cheat on public leaderboards?

Yes. Data leakage is a massive problem. If a benchmark is public, it’s almost certain that some of its data has leaked into the massive web-scale training sets of major LLMs. This is why we advocate for “LLM-as-a-judge” systems where you use a very strong model (like GPT-4o or Claude 3.5 Sonnet) to grade the outputs of smaller, more cost-effective models on your specific tasks.
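An LLM-as-a-judge setup comes down to a grading prompt plus a parser for the verdict. The sketch below assumes you wrap your judge model in a function that takes a prompt string and returns the reply text. That `judge_fn` interface, the rubric wording, and the 1-to-5 scale are hypothetical choices, and the stub judge stands in for a real API call.

```python
import re
from typing import Callable, Optional

JUDGE_TEMPLATE = (
    "You are grading an answer to a task.\n"
    "Task: {task}\n"
    "Answer: {answer}\n"
    "Rate the answer from 1 (poor) to 5 (excellent) for accuracy and usefulness.\n"
    "Reply in the form 'Score: N' followed by one sentence of reasoning."
)

def build_judge_prompt(task: str, answer: str) -> str:
    """Fill the grading template with the task and the candidate answer."""
    return JUDGE_TEMPLATE.format(task=task, answer=answer)

def parse_score(judge_reply: str) -> Optional[int]:
    """Extract the 1-5 score, or None if the judge ignored the format."""
    match = re.search(r"Score:\s*([1-5])\b", judge_reply)
    return int(match.group(1)) if match else None

def judge(judge_fn: Callable[[str], str], task: str, answer: str) -> Optional[int]:
    """Ask the judge model to grade one answer and return the parsed score."""
    return parse_score(judge_fn(build_judge_prompt(task, answer)))

# Stub standing in for a call to a strong judge model.
def stub_judge(prompt: str) -> str:
    return "Score: 4. The answer is correct but omits one detail."

print(judge(stub_judge, "Summarize our refund policy.", "Refunds within 30 days."))
```

Judge models have biases of their own, so it is worth spot-checking a sample of their grades against human ratings before trusting the totals.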

Conclusion: Building a Structured Growth Architecture

Public leaderboards are a starting point, not a destination. They are useful for shortlisting models, but the final decision should always be based on your specific SEO and growth infrastructure needs.

At Clayton Johnson SEO, we focus on clarity and leverage. Whether you are reviewing my subjective comparison of major AI models or implementing a full-scale SEO system, the goal is compounding growth through structure. Don’t let a synthetic score on a leaderboard distract you from real-world utility.

Ready to dive deeper into which models are actually worth your time? Check out our breakdown of Artificial Intelligence Models and how they fit into a modern marketing workflow. We are based in Minneapolis, Minnesota, and we help founders build growth architecture that lasts.