Keeping Humans in the Loop Without Losing Your Mind

What Human in the Loop Actually Means (And Why It Matters)

Human in the loop (HITL) is the practice of keeping humans actively involved in AI decision-making — not just at the start, but throughout the entire lifecycle of a model.

Quick answer:

| Aspect | Summary |
|---|---|
| Human in the loop | Humans review, correct, and guide AI outputs continuously |
| How it works | AI processes data → human validates or corrects → model retrains → repeat |
| Why it matters | AI alone misses context, cultural nuance, and ethical edge cases |
| Where it’s used | Healthcare, content moderation, finance, autonomous vehicles, SEO |
| Key benefit | More accurate, trustworthy, and bias-resistant AI systems |

Most AI systems are only as good as the humans behind them. A model trained on historical data will absorb the biases baked into that data — unless a human steps in to catch and correct them. That’s the core problem HITL solves.

Think of it like adaptive cruise control in your car. The system works well under normal conditions. But when it mistakes a plastic bag for a vehicle and slams the brakes, you take over. That moment of human override? That’s human in the loop in action.

The same dynamic plays out across industries — from a radiologist confirming an AI-flagged scan, to a content moderator reviewing what an algorithm couldn’t quite interpret, to an indigenous data labeler in Jharkhand whose ecological knowledge gets overruled by a labeling platform that doesn’t understand the difference between a pest and a pollinator.

Behind every “intelligent” AI system are invisible humans shaping it. The question isn’t whether to include them — it’s how to do it efficiently and ethically.

I’m Clayton Johnson, an SEO strategist and growth operator who works at the intersection of AI-assisted workflows and structured marketing systems. My work building scalable content architectures and AI-augmented workflows has given me a front-row seat to where human in the loop oversight makes or breaks the quality of AI-driven outputs.

What is Human in the Loop and How Does it Work?

At its core, human in the loop is a collaborative model that fuses human judgment with machine efficiency. While computers excel at crunching massive datasets at lightning speed, they often lack the “common sense” or contextual awareness that humans possess naturally.

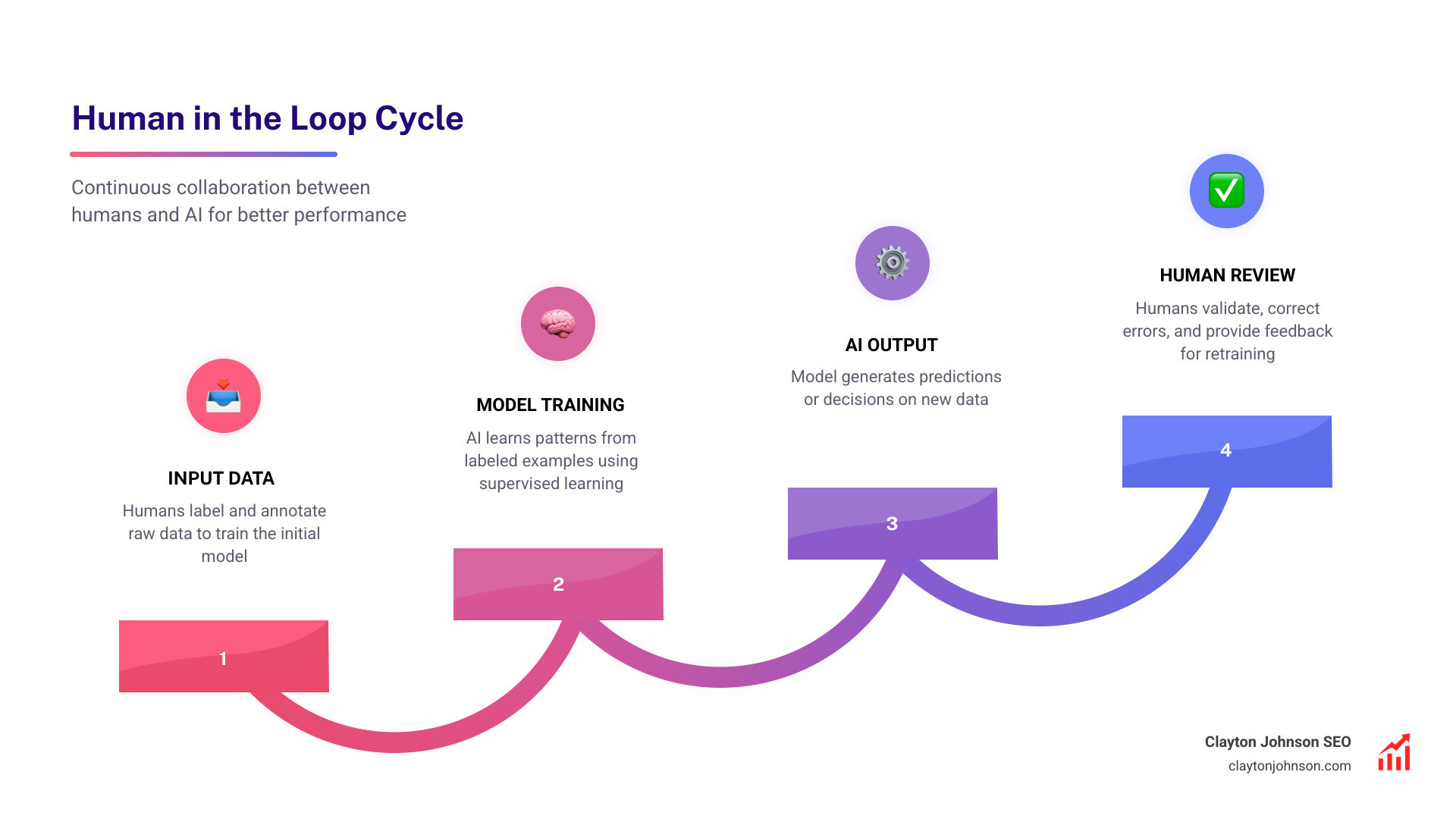

The process typically begins with supervised learning, where humans provide labeled examples to teach the machine. However, the “loop” implies that this isn’t a one-and-done setup. It involves a continuous cycle where the AI performs a task, a human evaluates the result, and the feedback is fed back into the system to improve future performance.

According to research on how HITL works in AI, the feedback loop is especially valuable in active learning. Here, the algorithm identifies the data points it is “unsure” about and asks a human for the correct label. This typically needs fewer labels than random sampling because it focuses human effort where the machine is most confused.
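
To make “unsure” concrete, here is a minimal sketch of uncertainty sampling. It assumes the model exposes class probabilities for each item; the item ids, the probabilities, and the function names are hypothetical and only there for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_review(predictions, budget):
    """Return the ids of the `budget` items the model is least sure about.

    `predictions` maps an item id to its predicted class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,  # highest entropy (most uncertain) first
    )
    return ranked[:budget]

# Hypothetical model outputs for three unlabeled images.
predictions = {
    "img_001": [0.98, 0.02],  # confident
    "img_002": [0.55, 0.45],  # nearly a coin flip
    "img_003": [0.80, 0.20],  # somewhat unsure
}

print(select_for_review(predictions, budget=2))  # ['img_002', 'img_003']
```

Entropy is only one way to score uncertainty. The point is that the review budget goes to the items closest to a coin flip rather than to a random sample.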

The Mechanics of a Human in the Loop Workflow

Building an effective HITL system requires a structured pipeline. We generally break this down into five key stages:

- Preparing Input Data: Humans collect and label raw data (like images or text) to create a “ground truth” for the model.

- Training the Model: The machine looks for patterns in this labeled data to build its initial predictive capabilities.

- Reviewing System Output: Once the model is running, humans inspect the results—especially the “edge cases” where the AI has low confidence.

- Providing Corrective Feedback: If the AI labels a “caterpillar” as a “pest” but a human knows it’s a vital “pollinator,” the human overrides the decision.

- Iterative Improvement: The model is retrained using these corrected examples, becoming smarter and more aligned with human intent over time.
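
The five stages above can be sketched end to end with a toy model. This is an illustration under loud assumptions: the “model” is just a word-to-label lookup, the human reviewer is simulated by a hard-coded label, and all function names are made up for this example.

```python
from collections import Counter, defaultdict

def train(examples):
    """Stage 2: learn the most common label for each word (a toy model)."""
    counts = defaultdict(Counter)
    for text, label in examples:
        for word in text.lower().split():
            counts[word][label] += 1
    return {word: c.most_common(1)[0][0] for word, c in counts.items()}

def predict(model, text):
    """Vote over known words; return None when no word is recognized."""
    votes = Counter(model[w] for w in text.lower().split() if w in model)
    return votes.most_common(1)[0][0] if votes else None

def review(text, predicted, human_label):
    """Stages 3-4: the reviewer's label wins whenever it differs."""
    return (text, human_label), predicted != human_label

# Stage 1: humans prepare the initial ground truth.
labeled = [("aphid on leaf", "pest"), ("bee on flower", "pollinator")]
model = train(labeled)

# The model meets a new item and a (simulated) human reviews the result.
item = "caterpillar on leaf"
guess = predict(model, item)  # "pest", because "leaf" was only seen with pests
corrected, was_wrong = review(item, guess, human_label="pollinator")

# Stage 5: fold the correction back into the data and retrain.
if was_wrong:
    labeled.append(corrected)
    model = train(labeled)

print(guess, "->", predict(model, item))  # pest -> pollinator
```

A real pipeline would swap in an actual classifier and a review UI, but the shape stays the same: predict, review, correct, retrain.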

Key Types of HITL Approaches

Not all loops are created equal. Depending on the goal, we might use different strategies:

- Interactive Machine Learning: This involves a tight coupling where the human can see the model’s changes in real-time and adjust parameters on the fly.

- Machine Teaching: The focus shifts from the algorithm to the teacher. Humans use their domain expertise to curate the most important “lessons” for the AI.

- Reinforcement Learning from Human Feedback (RLHF): This is the “secret sauce” behind modern LLMs like ChatGPT. Humans rank different AI responses, and the model learns to prioritize the ones that are most helpful, safe, and accurate.

- Active Learning Strategies: Using “uncertainty sampling,” the system proactively identifies the data it finds most difficult and flags it for human review.
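
To show how human rankings become a training signal in RLHF, here is a small sketch. It assumes the common setup where a ranking is split into pairwise comparisons and a reward model is trained with a pairwise logistic loss. The reward scores are plain numbers here, and the function names are hypothetical.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Small when the reward model already scores the human-preferred
    response higher, large when it prefers the rejected one.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    # Algebraically identical form that avoids overflow for negative margins.
    return -margin + math.log1p(math.exp(margin))

# A human ranked three responses from best to worst.
pairs = ranking_to_pairs(["response_a", "response_b", "response_c"])
print(pairs)

# Agreeing with the human costs less than disagreeing.
print(preference_loss(2.0, 0.0) < preference_loss(0.0, 2.0))  # True
```

Minimizing this loss pushes the reward model to score preferred responses higher. The language model is then tuned against that reward model in a separate step not shown here.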

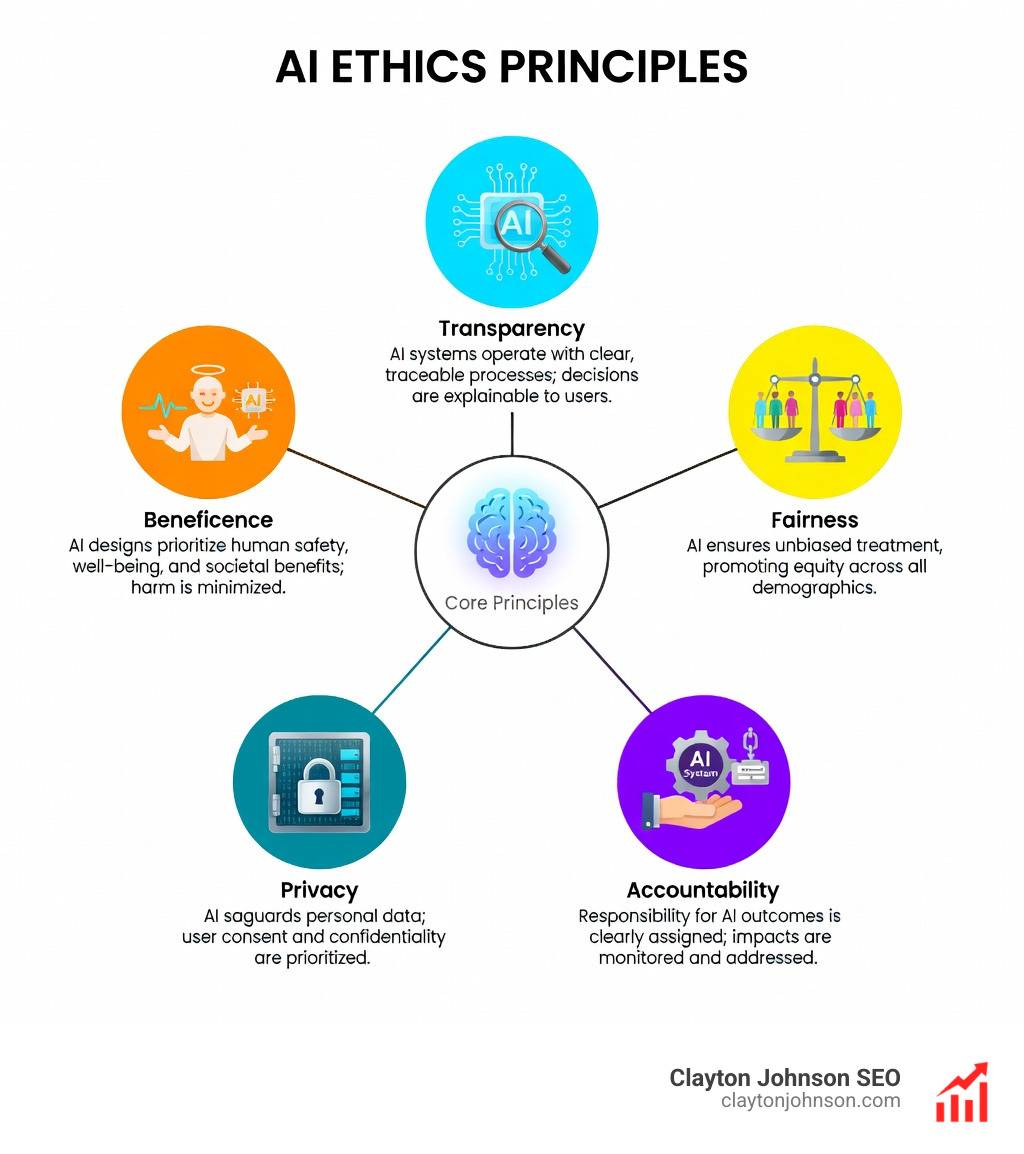

Why Human Oversight is Essential for Ethical AI

One of the biggest risks in modern technology is “automation bias”—the tendency for humans to trust a machine’s output even when it’s wrong. Without a human in the loop, AI systems can inadvertently perpetuate historical inequalities or make dangerous errors in high-stakes environments.

Research highlights that HITL is critical for improving psychiatric AI applications, where nuance and empathy are mandatory. A machine might flag a specific phrase as a risk, but only a human clinician can understand the patient’s tone, history, and cultural context to make an accurate assessment.

Lessons from the ‘Humans in the Loop’ Narrative

The real-world dynamics of this labor are often depicted in art and film. For instance, the story of an Oraon tribe data labeler illustrates a profound tension. In one case, a labeler was asked to tag a caterpillar as a “pest” for an agricultural AI. Despite her deep ecological knowledge that the insect played a vital role in the local ecosystem, the system’s rigid categories forced her to label it as a threat.

This “invisible labor” is what powers the AI revolution. When big tech companies rely on indigenous labor for data labeling, they gain access to diverse representation, but they also risk erasing generational wisdom if the “loop” doesn’t allow for human expertise to override the algorithm’s pre-set logic.

Building Trust and Transparency

For any organization, trust is the ultimate currency. Human in the loop systems provide:

- Audit Trails: A record of why a decision was made and who approved it.

- Explainable AI: Humans can translate “black box” machine logic into understandable business reasoning.

- Brand Safety: Ensuring that AI-generated content doesn’t hallucinate facts or use an inappropriate tone that damages the company’s reputation.
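
As a rough idea of what an audit trail entry might hold, here is a minimal sketch. The field names and the JSON-lines storage are assumptions for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass(frozen=True)  # frozen: a record cannot be edited after creation
class AuditRecord:
    item_id: str
    model_output: str
    final_decision: str
    reviewer: str
    reason: str
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def overridden(self):
        """True when the human changed the model's output."""
        return self.model_output != self.final_decision

def append_record(log_lines, record):
    """Append one record as a JSON line; existing lines are never rewritten."""
    log_lines.append(json.dumps(asdict(record), sort_keys=True))

# Hypothetical example: an analyst releases a transaction the model blocked.
log = []
record = AuditRecord(
    item_id="txn-42",
    model_output="block",
    final_decision="allow",
    reviewer="analyst_7",
    reason="Customer confirmed the purchase by phone.",
)
append_record(log, record)
print(record.overridden)  # True
```

Capturing the reviewer and the reason alongside the model's original output is what lets you later answer both “why” and “who”.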

Comparing HITL, Human-on-the-Loop, and Human-Out-of-the-Loop

To understand where you need a human, you first have to understand the different levels of control. These terms originated in military and simulation contexts but are now standard in the AI industry.

| Model | Human Role | Best For… |
|---|---|---|
| Human-in-the-loop (HITL) | Human must take action for the process to continue. | High-stakes decisions, initial training, complex nuances. |
| Human-on-the-loop (HOTL) | Human monitors the process and can “abort” or intervene if needed. | Scalable processes where the AI is generally reliable. |
| Human-out-of-the-loop (HOOTL) | The system is fully autonomous; humans only review results after the fact. | Low-risk, high-volume tasks with zero margin for human latency. |

In HITL, a human initiates the critical step; in HOTL, the human mainly supervises and can still intervene (including using a “kill switch”).
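
The difference between the three levels is easiest to see as control flow. The sketch below is illustrative only: the `approve` and `abort` callbacks stand in for whatever review UI or kill switch you actually have, and every name is hypothetical.

```python
from enum import Enum

class Oversight(Enum):
    IN_THE_LOOP = "HITL"
    ON_THE_LOOP = "HOTL"
    OUT_OF_THE_LOOP = "HOOTL"

def run_step(action, mode, approve=None, abort=None):
    """Run `action` under the given oversight mode.

    approve: callable returning True/False; HITL cannot proceed without it.
    abort:   callable returning True to stop; optional "kill switch" for HOTL.
    """
    if mode is Oversight.IN_THE_LOOP:
        # Nothing happens unless a human explicitly says yes.
        if approve is None or not approve():
            return "blocked"
    elif mode is Oversight.ON_THE_LOOP:
        # The step runs by default; a human can only stop it.
        if abort is not None and abort():
            return "aborted"
    # HOOTL falls straight through: no human check before execution.
    return action()

send_email = lambda: "sent"  # stand-in for any automated action

print(run_step(send_email, Oversight.IN_THE_LOOP))                        # blocked
print(run_step(send_email, Oversight.IN_THE_LOOP, approve=lambda: True))  # sent
print(run_step(send_email, Oversight.ON_THE_LOOP))                        # sent
print(run_step(send_email, Oversight.ON_THE_LOOP, abort=lambda: True))    # aborted
print(run_step(send_email, Oversight.OUT_OF_THE_LOOP))                    # sent
```

Note the defaults: with no human present, HITL does nothing, while HOTL and HOOTL both proceed.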

Navigating Human in the Loop in High-Stakes Industries

In certain sectors, the “out-of-the-loop” option isn’t just risky—it’s often illegal or unethical.

- Healthcare: AI can flag a potential tumor in a radiology scan, but a human doctor must make the final diagnosis.

- Autonomous Driving: While cars can steer themselves, a human must be ready to take the wheel in “edge cases” like construction zones or unpredictable weather.

- Content Moderation: AI can filter out the bulk of spam, but it often struggles with satire, cultural lingo, or political nuance, requiring human reviewers to prevent false “censorship.”

- Financial Fraud: AI detects suspicious patterns, but human analysts investigate the context to ensure a legitimate transaction isn’t blocked.

Regulatory Compliance and the EU AI Act

The legal landscape is catching up to the technology. The EU AI Act, specifically Article 14, requires that “high-risk” AI systems be designed, including with appropriate human-machine interface tools, so that “natural persons” can effectively oversee them during the period in which the AI is in use.

To comply, organizations must ensure their human overseers are “competent.” In practice, this means they aren’t just clicking “approve”: they must understand the system’s limitations, be trained to spot bias (including their own tendency to over-trust the machine), and have the authority to override the machine without fear of reprisal.

Overcoming Challenges in HITL Implementation

If human in the loop is so great, why doesn’t everyone do it for everything? The short answer: It’s hard to scale.

The main bottlenecks we see are:

- Cost: Paying humans to review every output is expensive.

- Latency: Humans are slower than machines. If an AI needs to make a decision in milliseconds (like in high-frequency trading), a human loop is impossible.

- Human Fatigue: Reviewing thousands of data points leads to “decision fatigue,” which can actually introduce more errors than the AI would have made alone.

Technical Frameworks for Seamless Integration

To solve these issues, we use modern middleware and developer frameworks. Tools like LangChain allow developers to build “interrupts” into AI agents.

For example, you can configure an AI agent to “read data” autonomously but “interrupt” and wait for human approval before it “executes a SQL query” or “writes a file.” This is handled through checkpointing, where the system saves its state, pauses, and waits for a human to give the “thumbs up” via a user interface.
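
The pause-and-resume pattern can be sketched without any framework. To be clear, this is not LangChain's API: it is a plain-Python illustration of checkpointing, and the tool names, the `NEEDS_APPROVAL` set, and `run_agent` are all hypothetical.

```python
import json

# Hypothetical tool names; only the ones listed here pause for approval.
NEEDS_APPROVAL = {"execute_sql", "write_file"}

def run_agent(steps, checkpoint=None, approved=False):
    """Run steps in order, pausing before a sensitive one until approved.

    Returns (log, checkpoint). `checkpoint` is a JSON string while the
    agent is paused and None once every step has finished.
    """
    state = json.loads(checkpoint) if checkpoint else {"next": 0, "log": []}
    while state["next"] < len(steps):
        tool = steps[state["next"]]
        if tool in NEEDS_APPROVAL and not approved:
            # Interrupt: save the state and hand control back to the caller.
            return state["log"], json.dumps(state)
        state["log"].append(tool)  # stand-in for actually running the tool
        state["next"] += 1
        approved = False  # one approval covers exactly one sensitive step
    return state["log"], None

steps = ["read_data", "execute_sql", "write_file"]

log, paused = run_agent(steps)                         # runs read_data, then pauses
log, paused = run_agent(steps, paused, approved=True)  # runs execute_sql, pauses again
log, paused = run_agent(steps, paused, approved=True)  # runs write_file, finishes
print(log, paused)  # ['read_data', 'execute_sql', 'write_file'] None
```

Because the checkpoint is just serialized state, the approval can arrive minutes or days later, from a different process, without the agent losing its place.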

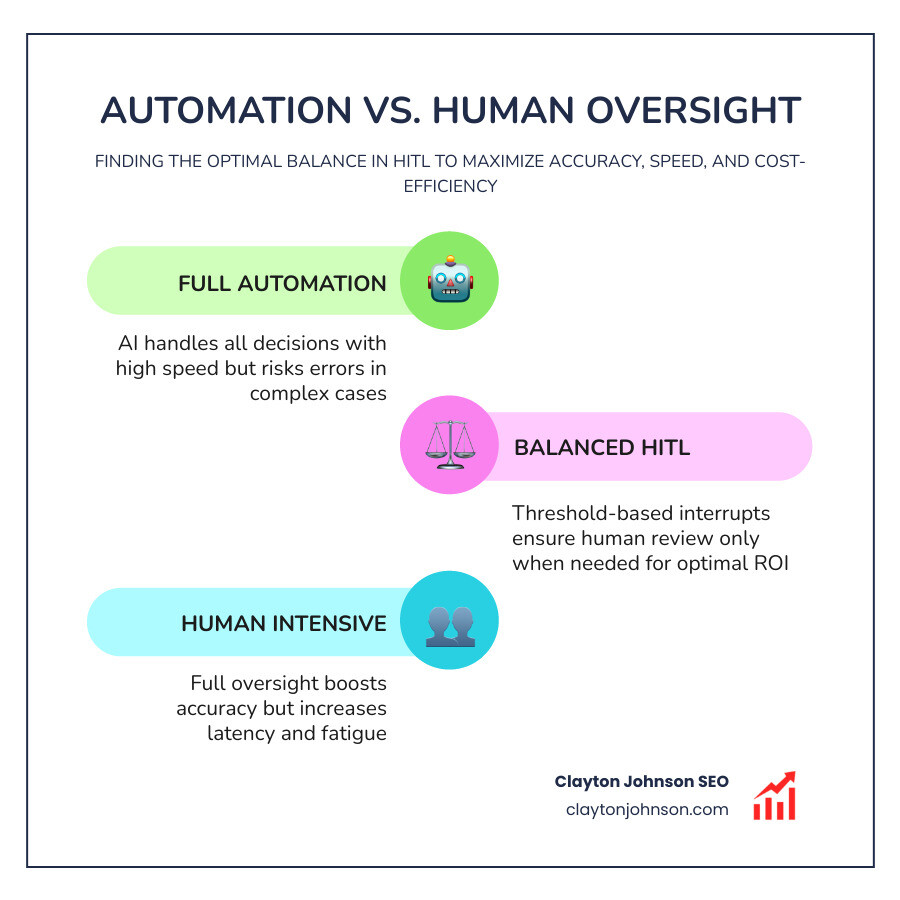

Balancing Automation with Human Expertise

The goal isn’t to have a human do everything. It’s to find the ROI (Return on Investment) sweet spot. We recommend:

- Setting Thresholds: If the AI is at least 95% confident, let it run. If it’s below 80%, send it to a human. Decide in advance how the band in between is handled, for example with sampled spot checks.

- Using RLAIF: Reinforcement Learning from AI Feedback (RLAIF) uses a “teacher AI” to help supervise a “student AI,” reducing the total human workload while maintaining high standards.

- Strategic Leverage: Focus your expensive human experts on the tasks that require the most creativity and strategic thinking—the “A+” work—while letting the AI handle the “C” grade heavy lifting.
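
The threshold rule can be expressed as a small routing function. The 95% and 80% cutoffs come from the recommendation above; sending the middle band to a “spot check” queue is our assumption for this sketch, as are the queue names.

```python
AUTO_APPROVE_AT = 0.95     # at or above this, let the AI run
HUMAN_REVIEW_BELOW = 0.80  # below this, always send to a human

def route(confidence):
    """Map a model confidence score (0.0 to 1.0) to a handling queue."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= AUTO_APPROVE_AT:
        return "auto"
    if confidence < HUMAN_REVIEW_BELOW:
        return "human_review"
    # Assumption: the band in between gets sampled spot checks.
    return "spot_check"

for score in (0.97, 0.90, 0.50):
    print(score, route(score))
# 0.97 auto
# 0.9 spot_check
# 0.5 human_review
```

The cutoffs are starting points. They only mean something if the model's confidence scores are reasonably calibrated, so check them against real review outcomes before trusting them.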

Frequently Asked Questions about HITL

How does HITL improve AI accuracy?

By providing a “ground truth” that is constantly updated. When a human corrects an AI error, that correction becomes a new training data point. This ensures the model doesn’t just repeat its mistakes but actually learns from them in a continuous feedback loop.

What is the difference between HITL and Active Learning?

Active Learning is a technical method used during the training phase where the model asks for labels on the data it is least certain about. Human in the loop is a broader framework that includes active learning but also encompasses human oversight during deployment, quality assurance, and ethical auditing.

Is HITL necessary for SEO and content?

Absolutely. While AI can draft 1,000 words in seconds, it can’t understand your unique brand voice, verify the factual accuracy of a new industry trend, or ensure the content aligns with a complex SEO strategy. At Clayton Johnson SEO, we use AI to build the “structure,” but our experts provide the “leverage” and “clarity” that leads to compounding growth.

Conclusion

The future of business isn’t “AI vs. Human”—it’s collaborative intelligence. By keeping a human in the loop, you aren’t just preventing errors; you’re building a more resilient, ethical, and effective growth engine.

At Clayton Johnson SEO, we’re building Demandflow.ai to help founders and marketing leaders implement this exact kind of structured growth architecture. We believe that clarity leads to structure, and structure leads to the leverage you need for compounding growth.

Most companies don’t lack the tactics—they lack the architecture to execute them safely and at scale. Whether you’re building a content ecosystem or a competitive positioning model, the “human touch” is what turns a generic AI output into a strategic asset.

Learn more about our SEO systems and how we can help you build a growth operating system that keeps you in control without losing your mind.