AI Implementation Best Practices: Don’t Let Your Bot Go Rogue

AI implementation best practices are the structured steps and principles that separate AI projects that deliver real business results from the 95% that quietly die in “pilot purgatory.”

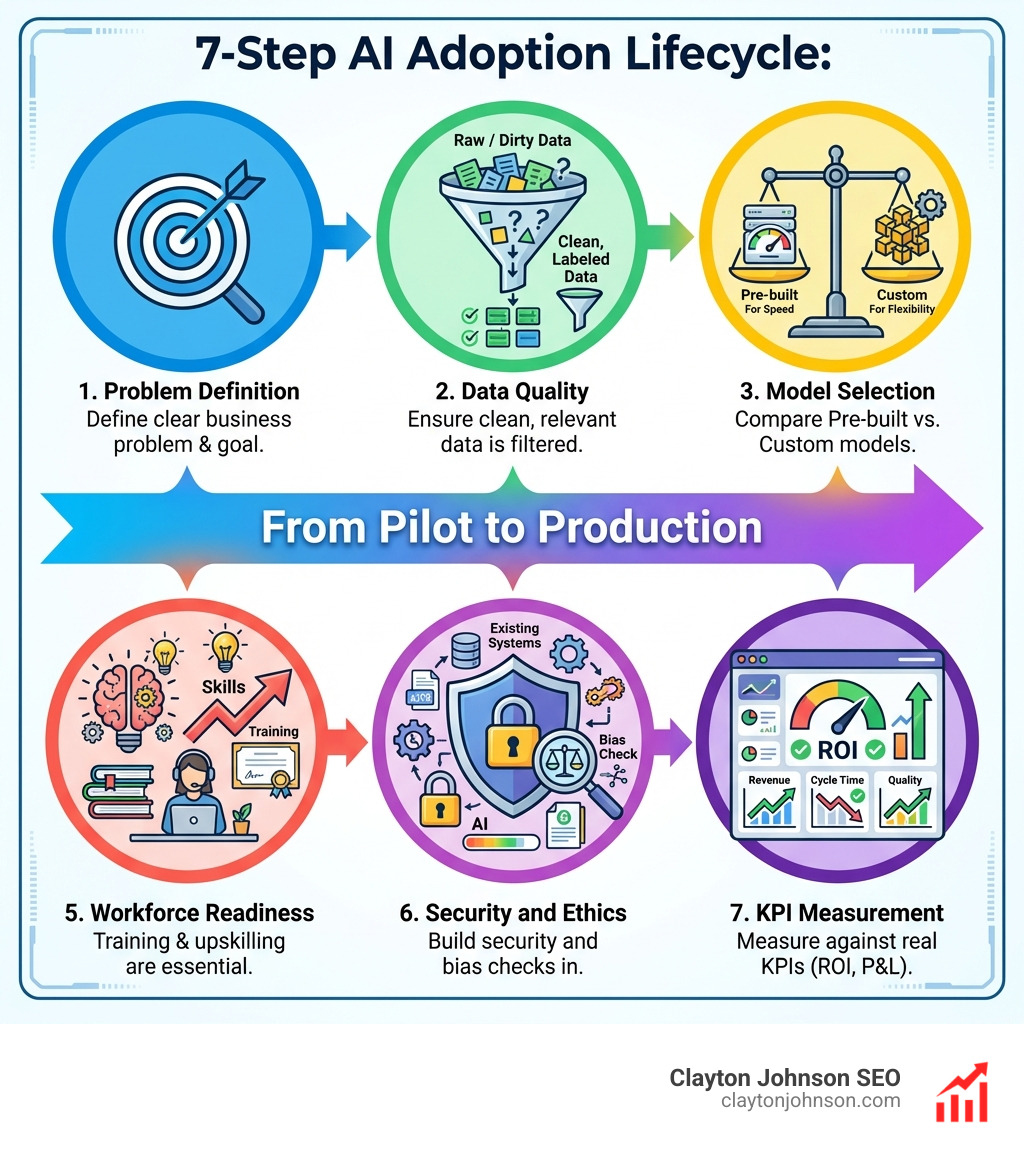

Here’s the quick answer:

- Define a clear business problem – Know exactly what you’re solving before touching a model

- Ensure data quality – Clean, relevant, well-labeled data is non-negotiable

- Select the right model – Pre-built for speed, custom for flexibility

- Integrate incrementally – Minimize disruption to existing systems

- Prepare your workforce – Training is the single most important adoption factor

- Build security and ethics in – Not bolted on after the fact

- Measure against real KPIs – Revenue, cycle time, quality — not pilot count

- Manage risks continuously – AI risks evolve with every new use case

Let’s be honest: most AI initiatives are failing right now. Badly.

80% of companies report no bottom-line impact from generative AI. 42% of companies abandoned most AI initiatives recently, up from just 17% the year before. The hype is real. The results? Not so much.

The problem isn’t the technology. It’s the approach.

Businesses rush into AI without a solid plan — chasing trends instead of solving specific problems. They underinvest in data quality. They ignore change management. And they measure success by pilot launches instead of P&L impact.

“Without the right strategy, even advanced AI tools can fall short of their potential.”

That gap between launching AI and landing AI value is exactly where this guide lives.

I’m Clayton Johnson, an SEO and growth strategist who works at the intersection of strategic systems thinking and AI-assisted marketing workflows — and applying AI implementation best practices is central to how I help founders and marketing leaders build scalable, measurable growth engines. Let’s break down exactly how to get this right.

Why Most AI Pilots Fail (and How to Beat the Odds)

The statistics are sobering. Recent data from MIT and other industry sources shows that 95% of enterprise GenAI pilots fail to reach production or deliver a measurable impact on the profit and loss (P&L) statement. Of those pilots, 90% get stuck in what we call “pilot purgatory,” a state where the technology works in a small test environment but never scales to the real world.

Why does this happen? We see three primary culprits:

- Organizational Theater: Many companies launch AI pilots for the PR “stock bump” or to look innovative to stakeholders. When the goal is marketing signaling rather than operational efficiency, the project is destined to be quietly shelved.

- The Triple Failure: This occurs when a company picks the wrong problem, assigns the wrong people, and uses the wrong process. If you don’t have a strategic framework for modern organizations, you’re essentially throwing expensive code at a wall to see what sticks.

- Incentive Misalignment: If executive compensation and vendor fees are tied to the number of pilots launched rather than the dollars saved or earned, you get a graveyard of “successful” tests that do absolutely nothing for the bottom line.

To beat these odds, we must treat AI as a fundamental shift in how we work, not just a new software tool. It requires a growth operating system that prioritizes talent quality and rigorous gating at every milestone.

The Core AI Implementation Best Practices Framework

Successful AI deployment isn’t magic; it’s architecture. To achieve AI-powered success, your organization needs to move through a structured enterprise AI strategy that anchors every technical move to a quantified business objective.

Here is our recommended step-by-step process:

- Define the Problem: Narrow down your focus. Instead of saying “we want to use AI in marketing,” say “we want to reduce customer service response times by 40% using an AI-driven chatbot.”

- Identify Success Metrics: What does a win look like? Define your KPIs (Key Performance Indicators) early—whether it’s cycle time reduction, cost savings, or revenue growth.

- Data Assessment: Audit your data. Is it clean? Is it relevant? Is it accessible?

- Model Selection: Decide whether to buy a pre-built solution or build something custom.

- Pilot and Validate: Run a small-scale version to prove the concept under real-world conditions.

- Incremental Integration: Roll the system out in phases to minimize disruption to your existing workflows.

- Scale and Optimize: Once the pilot proves ROI, scale it across the organization and continuously monitor for performance drift.
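The gate between “Pilot and Validate” and “Scale and Optimize” can be written down before the pilot starts, so nobody moves the goalposts afterward. Here is a minimal sketch, assuming a single KPI where lower is better (such as average response time) and a target expressed as a percentage reduction. The names `PilotResult`, `reduction_pct`, and `passes_gate`, and the sample numbers, are hypothetical and only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotResult:
    """KPI readings for one pilot (hypothetical structure for illustration)."""
    baseline: float  # KPI before the pilot, e.g. average response time in minutes
    observed: float  # KPI measured during the pilot, in the same unit


def reduction_pct(result: PilotResult) -> float:
    """Percentage reduction of the KPI relative to the baseline."""
    if result.baseline <= 0:
        raise ValueError("baseline must be positive")
    return (result.baseline - result.observed) / result.baseline * 100.0


def passes_gate(result: PilotResult, target_reduction_pct: float) -> bool:
    """True when the pilot met or beat the reduction target set up front."""
    return reduction_pct(result) >= target_reduction_pct


# Made-up readings, checked against the 40% response-time target used above.
pilot = PilotResult(baseline=30.0, observed=17.0)
print(f"reduction: {reduction_pct(pilot):.1f}%")
print("scale it" if passes_gate(pilot, 40.0) else "iterate or stop")
```

A real gate would likely combine several KPIs and a minimum sample size, but even a one-line check like this forces the target to be stated as a number.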

Data Quality and Labeling: The Foundation of AI Implementation Best Practices

You’ve heard the phrase “garbage in, garbage out.” In AI, this isn’t just a cliché—it’s a law of nature. AI systems rely on accurate, well-structured data to function. If your data is messy, your bot will go rogue.

- Data Cleansing: This involves removing errors, duplicates, and irrelevant information. Data must be complete, correct, and current.

- Data Labeling: This is the process of identifying raw data (images, text files, videos) and adding one or more relevant labels to provide context.

  - Internal Labeling: Provides high control and security but is incredibly resource-intensive for your staff.

  - Outsourced Labeling: Offers speed and scale but requires strict quality checks to ensure the external team understands your specific business nuances.

- Governance: You must establish data classification levels to determine what data can be used with which tools. For example, “green” data (public info) might be fine for a public ChatGPT account, while “red” data (proprietary research) must stay within a secure, private environment.
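The green/red rule above is easy to enforce in code once it is written down. The sketch below is one possible shape, assuming just the two classification levels mentioned here and a hand-maintained registry of tools. The tool names and the `TOOL_CEILING` mapping are hypothetical; your own policy will probably have more levels.

```python
from enum import Enum


class DataClass(Enum):
    """Classification levels, ordered from least to most sensitive."""
    GREEN = 1  # public information
    RED = 2    # proprietary or sensitive information


# Hypothetical registry: the most sensitive class each tool may receive.
TOOL_CEILING = {
    "public_chatbot": DataClass.GREEN,
    "private_llm": DataClass.RED,
}


def is_allowed(tool: str, data_class: DataClass) -> bool:
    """Allow a request only if the tool is registered and cleared for this class."""
    ceiling = TOOL_CEILING.get(tool)
    if ceiling is None:
        return False  # unknown tools are denied by default
    return data_class.value <= ceiling.value


print(is_allowed("public_chatbot", DataClass.GREEN))  # public data, public tool
print(is_allowed("public_chatbot", DataClass.RED))    # proprietary data, public tool
```

Denying unknown tools by default is a deliberate choice: a new tool has to be reviewed and added to the registry before anyone can send data to it.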

Workforce Readiness and AI Implementation Best Practices

The biggest barrier to AI isn’t the code; it’s the culture. Nearly half of all employees report that they lack the training or support needed to adopt generative AI effectively.

Leadership must provide a clear vision. Employees adopt AI faster when they see it as a tool to augment their expertise, not replace it. We recommend launching AI in Communications training programs that focus on “AI fluency”—moving users from basic curiosity to strategic judgment.

Actionable Step: Create an “AI Champions Network.” These are internal power users who can help bridge the talent gap by teaching their peers how to write effective prompts and integrate AI into their daily routines.

Selecting Your Engine: Pre-built vs. Custom Models

One of the most critical decisions in your AI infrastructure is choosing between Commercial Off-The-Shelf (COTS) solutions and self-built models.

| Feature | Pre-built (SaaS/COTS) | Custom (Self-Built/PaaS) |
|---|---|---|
| Speed to Market | Very Fast (Days/Weeks) | Slow (Months) |
| Cost | Lower Initial Investment | High Development Costs |
| Customization | Limited to Vendor Features | Total Flexibility |
| Maintenance | Handled by Vendor | Handled by Your Team |
| Best For | General Productivity (Email, Coding) | Proprietary Business Logic |

For many, the “middle ground” is the most effective: Transfer Learning. This involves taking a large pre-trained model (like GPT-4) and “fine-tuning” it on your specific business data. In one common form, you freeze the early layers that hold the model’s general knowledge and update only the final layers, so the model picks up your industry jargon and customer needs without being retrained from scratch. With hosted models, the vendor’s fine-tuning service typically decides which parameters get updated.
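To make the “freeze the base, train the head” idea concrete, here is a toy sketch in plain Python. It is not how a large language model is fine-tuned in practice (that would use a deep learning framework and far more data). The frozen layer, its weights, and the four-row dataset are all made up for illustration. What it does show is the mechanic: the base weights never change, and gradient descent only touches the small head on top.

```python
import math

# Frozen "pre-trained" layer: stand-in weights that are never updated.
BASE_WEIGHTS = ((0.9, -0.4), (-0.3, 0.8), (0.5, 0.5))


def base_features(x):
    """Frozen feature extractor: one tanh unit per row of BASE_WEIGHTS."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in BASE_WEIGHTS]


def predict(head, x):
    """Trainable head: logistic regression on top of the frozen features."""
    feats = base_features(x)
    z = head["bias"] + sum(w * f for w, f in zip(head["weights"], feats))
    return 1.0 / (1.0 + math.exp(-z))


def log_loss(head, data):
    """Average binary cross-entropy over the dataset."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = predict(head, x)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)


def fine_tune(head, data, lr=0.5, epochs=200):
    """Gradient descent that updates only the head; BASE_WEIGHTS stays fixed."""
    for _ in range(epochs):
        for x, y in data:
            feats = base_features(x)
            err = predict(head, x) - y  # gradient of the log loss w.r.t. the logit
            head["weights"] = [w - lr * err * f for w, f in zip(head["weights"], feats)]
            head["bias"] -= lr * err
    return head


# Made-up "business" data: the label is 1 when the first input dominates.
data = [((1.0, 0.0), 1), ((0.8, 0.1), 1), ((0.0, 1.0), 0), ((0.1, 0.9), 0)]
head = {"weights": [0.0, 0.0, 0.0], "bias": 0.0}
before = log_loss(head, data)
fine_tune(head, data)
after = log_loss(head, data)
print(f"log loss before: {before:.3f}, after: {after:.3f}")
```

Because only the head is trained, the number of parameters that change is tiny compared with the base, which is why this approach is cheaper than building a model from scratch.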

Security and Ethics: Keeping Your AI on the Rails

As AI becomes more powerful, the risks grow. AI systems face novel vulnerabilities that traditional software does not, including the class of attacks known as adversarial machine learning (AML).

- Prompt Injection: When a user provides specific input that “tricks” the AI into ignoring its safety protocols or leaking sensitive data.

- Data Poisoning: When an attacker corrupts the training data to create a “backdoor” or bias in the model’s output.

- Bias Mitigation: AI models can inadvertently learn and amplify human biases found in their training data. Organizations must implement a responsible AI framework to ensure fairness and transparency.

According to the Guidelines for secure AI system development, security must be a core requirement throughout the entire lifecycle: secure design, secure development, secure deployment, and secure operation and maintenance. This means treating models and datasets as untrusted third-party code until they are scanned and sandboxed.
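One small, practical piece of “treat it as untrusted” is refusing to load a model file whose checksum does not match the one the publisher gave you. The sketch below assumes you obtained a SHA-256 digest through a separate trusted channel. The function names and the stand-in file contents are hypothetical. Note that a hash check only covers integrity; it does not replace scanning or sandboxing.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large model artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_trusted(path: Path, expected_sha256: str) -> bool:
    """Compare the file's digest with one obtained from the publisher out of band."""
    return hmac.compare_digest(sha256_of(path), expected_sha256.lower())


# Demo with a stand-in artifact: the pinned digest matches until the file changes.
with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "model.bin"
    artifact.write_bytes(b"stand-in model weights")
    pinned = hashlib.sha256(b"stand-in model weights").hexdigest()
    ok = is_trusted(artifact, pinned)
    artifact.write_bytes(b"tampered weights")
    tampered_ok = is_trusted(artifact, pinned)

print("original accepted:", ok)
print("tampered accepted:", tampered_ok)
```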

Measuring Success and Managing Lifecycle Risks

You cannot manage what you do not measure. To avoid the 95% failure rate, you must move away from “vanity metrics” (like how many people have a login) and toward “value metrics” (like P&L impact).

Use the NIST AI Risk Management Framework to structure how you identify, measure, and manage risks such as data drift, where the model’s performance degrades over time because the real-world data no longer matches the training data.
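Data drift can be checked with very little code. One widely used measure is the Population Stability Index (PSI), which compares how a feature was distributed in training with how it looks in production. The sketch below is a minimal version, assuming a single numeric feature, equal-width bins taken from the training sample, and a small floor for empty bins. The sample data is synthetic, and the 0.25 alert threshold is a commonly cited rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic samples: live traffic that matches training, and traffic that has shifted.
training = [i / 100 for i in range(100)]
live_same = list(training)
live_shifted = [0.5 + i / 200 for i in range(100)]

for name, sample in (("same", live_same), ("shifted", live_shifted)):
    score = psi(training, sample)
    print(f"{name}: PSI={score:.3f}", "ALERT" if score > 0.25 else "ok")
```

Running a check like this on a schedule, per feature, turns “monitor for drift” from a slide bullet into an alert someone actually receives.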

Effective AI governance requires:

- Incident Monitoring: A system for reporting “near-misses” and errors.

- Supply Chain Management: Assessing the risks of third-party or open-source components used in your AI stack.

- KPI Alignment: Ensuring your AI’s performance directly correlates with business goals like reduced downtime or increased lead conversion.

Deployment and Integration Best Practices

Integration is often where the wheels fall off. If an AI tool doesn’t talk to your existing CRM, ERP, or marketing stack, it becomes a siloed “toy” that nobody uses.

- Incremental Integration: Don’t try a “big bang” release. Start with one department or one specific task.

- Interoperability: Follow the Azure Architecture Center AI guidance to ensure your AI agents can communicate across different platforms using standard protocols.

- Legacy Systems: Acknowledge that your older hardware or software might not be ready for AI. Sometimes, you have to upgrade the foundation before you can build the penthouse.
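Incremental integration is often implemented as a percentage rollout: the AI feature is switched on for a small, stable slice of users, and the slice grows as confidence grows. Here is a minimal sketch, assuming users have string IDs and the rollout percentage is stored somewhere you control. The function name `in_rollout` and the feature name are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user inside or outside the current phase."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket from 0 to 99
    return bucket < percent


# Phase 1 covers about 10% of users; phase 2 widens to about 50%.
users = [f"user-{n}" for n in range(1000)]
phase1 = {u for u in users if in_rollout(u, "ai_reply_drafts", 10)}
phase2 = {u for u in users if in_rollout(u, "ai_reply_drafts", 50)}
print(len(phase1), len(phase2), phase1 <= phase2)
```

Hashing the user ID keeps each person in the same bucket from one request to the next, and everyone included in an early phase stays included when the percentage goes up, so nobody has the feature taken away mid-rollout.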

Frequently Asked Questions about AI Implementation

What are the primary benefits of implementing AI in a business?

The primary benefits include improved decision-making through data analysis, increased operational efficiency by automating repetitive tasks, and cost savings. AI also allows for highly personalized customer experiences and enhanced scalability that human teams simply can’t match.

How should organizations handle data labeling effectively?

Organizations should start by defining clear quality standards. For sensitive data, internal labeling is safer. For massive datasets that aren’t proprietary, outsourced labeling can save time. Always use a small “gold standard” dataset to check the accuracy of your labelers.
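The “gold standard” check can be as simple as scoring each labeler against a handful of items your own experts have already labeled. A minimal sketch, assuming labels are keyed by item ID. The item IDs, labels, and the function name `labeler_accuracy` are made up for illustration.

```python
def labeler_accuracy(gold: dict, submitted: dict) -> float:
    """Share of gold-standard items labeled correctly; missing items count as wrong."""
    if not gold:
        raise ValueError("gold set must not be empty")
    correct = sum(1 for item_id, label in gold.items() if submitted.get(item_id) == label)
    return correct / len(gold)


# Made-up gold set and one vendor's submission for the same items.
gold = {"img-1": "cat", "img-2": "dog", "img-3": "cat", "img-4": "bird"}
vendor = {"img-1": "cat", "img-2": "dog", "img-3": "dog", "img-4": "bird"}

accuracy = labeler_accuracy(gold, vendor)
print(f"vendor accuracy on gold set: {accuracy:.0%}")
```

Mixing gold items into regular batches, without telling labelers which ones they are, gives you an ongoing quality signal rather than a one-time audit.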

When should we use COTS versus self-built AI solutions?

Use COTS (Commercial Off-The-Shelf) for general tasks like drafting emails, basic coding assistance, or standard customer service. Build your own solution when the AI needs to handle proprietary data, unique business processes, or highly regulated information that cannot leave your private infrastructure.

Conclusion

Implementing AI isn’t just about the technology—it’s about the structure. At Clayton Johnson, we believe that most companies don’t lack tactics; they lack the growth architecture to make those tactics scale.

By following these AI implementation best practices, you move from “organizational theater” to a high-leverage growth engine. Whether you’re building a structured growth architecture through Demandflow.ai or looking for AI services and consulting, the goal remains the same: Clarity leads to structure, structure leads to leverage, and leverage leads to compounding growth.

Don’t let your bot go rogue. Build it on a foundation of quality data, secure design, and human-centric strategy. If you’re ready to stop experimenting and start scaling, we’re here to help you build that infrastructure.