How to Build a Content House That Google Actually Wants to Visit

Why Technical Content Architecture Is the Foundation of Every Ranking Win

Technical content architecture for SEO is the practice of organizing your website’s pages, URLs, internal links, and content hierarchy so search engines can crawl, understand, and rank your site efficiently.

If you want the quick answer, here it is:

How to build strong technical content architecture for SEO:

- Keep important pages within 3 clicks of your homepage

- Group related content into topic clusters with a central pillar page

- Use clean, descriptive URLs (under 75 characters, hyphens, lowercase)

- Link pages intentionally — from high-authority pages down to supporting content

- Fix orphan pages — pages with no internal links pointing to them

- Submit an XML sitemap so Google doesn’t miss your content

- Add schema markup so search engines (and AI) understand your content’s context

Think about walking into a massive library where the books are scattered randomly across the floor. No signs. No sections. No order. That’s exactly how Google’s crawlers feel when they land on a poorly structured website.

Your site’s architecture isn’t just a design decision. It’s an SEO decision. It determines which pages get crawled, which pages earn authority, and which pages rank — or disappear entirely.

One financial services site saw a 67% increase in organic traffic within 60 days simply by fixing crawlability issues in their site architecture. No new content. No backlinks. Just structure.

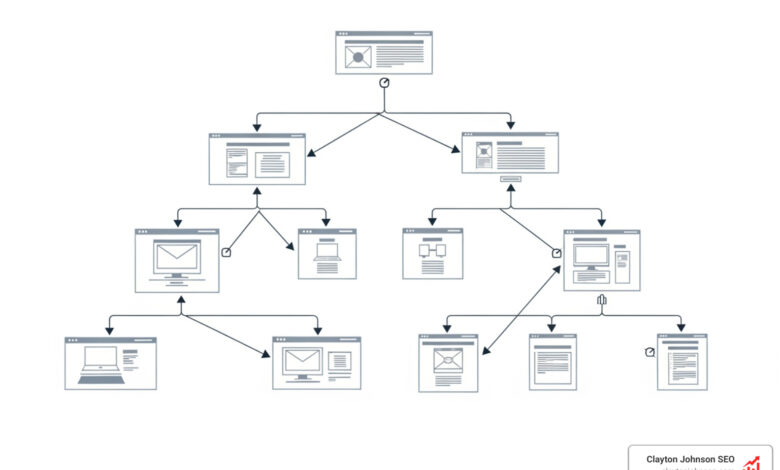

Search engines tend to treat pages buried more than three or four clicks from your homepage as less important. Your crawl budget (roughly, the number of URLs Google is able and willing to crawl on your site in a given period) gets wasted on deep, disorganized structures. Meanwhile, your best content sits unseen.

This guide walks you through exactly how to build a content architecture that Google wants to explore — and that turns visitors into leads.

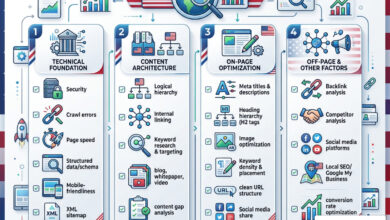

The Blueprint for Technical Content Architecture Excellence

Building a website without a structural plan is like trying to build a house by throwing bricks in a pile and hoping they form a kitchen. To succeed in modern search, we need a blueprint. That blueprint is what we call technical content architecture.

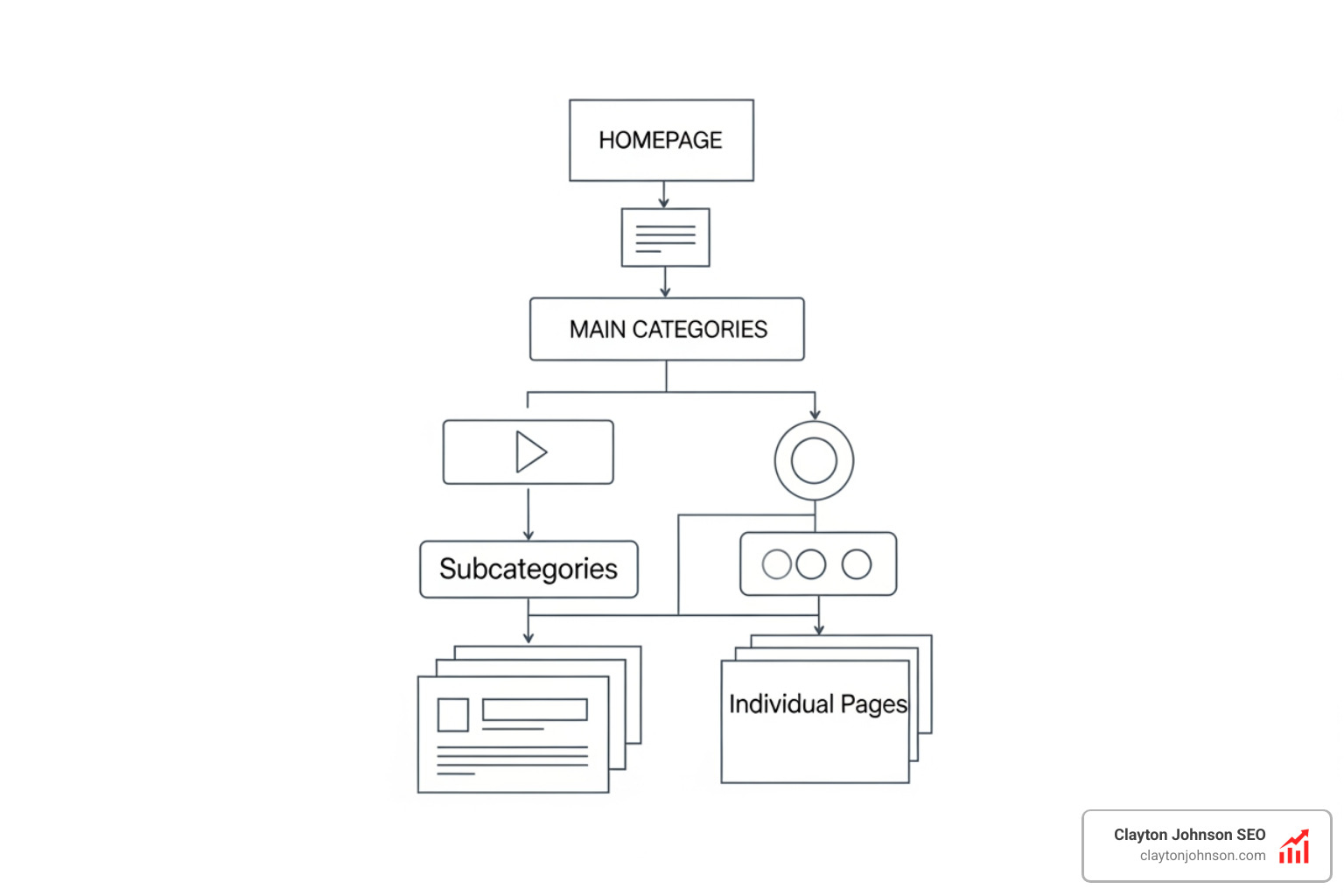

At its core, site architecture is the skeleton of your digital presence. It determines how link equity (often called “link juice”) flows from your homepage down to your deepest blog posts. If your skeleton is broken, your content—no matter how brilliant—will struggle to stand upright in the Search Engine Results Pages (SERPs).

One of the most critical concepts here is the “Crawl Budget.” Google doesn’t have infinite time to spend on your site. According to Google’s guide to managing crawl budget, search engines only visit a certain number of pages before moving on. If your architecture is a maze, the crawler might spend its entire budget on “junk” pages, leaving your high-converting landing pages unindexed.

Flat vs. Deep Architecture: Which Wins?

When designing your structure, you generally choose between a flat or deep hierarchy.

| Feature | Flat Architecture | Deep Architecture |
|---|---|---|
| Definition | Most pages are 1-3 clicks from the home page. | Pages are buried 5, 10, or 20 clicks deep. |
| Crawlability | Excellent; bots find everything quickly. | Poor; deep pages are often ignored. |
| User Experience | High; users find info fast. | Frustrating; too many menus to click through. |
| Authority Flow | Strong distribution of PageRank. | Equity gets “diluted” as it travels deeper. |
| Best For | Small to medium sites, SaaS, Local. | Massive e-commerce sites, huge news archives. |

As Google’s SEO Starter Guide notes, a clear, logical structure doesn’t just help bots; it signals to search engines exactly what your site is about by grouping related topics together.

Understanding Site Structure and Crawl Efficiency

Crawl efficiency is the art of making Google’s job easy. We follow the “3-click rule”: any important page on your site should be reachable within three clicks from the homepage. Once you hit 4 or 5 clicks, search engines start perceiving those pages as less valuable.
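To make the 3-click rule concrete, here is a minimal sketch that measures click depth with a breadth-first search. The `site_links` graph and its URLs are hypothetical; in practice you would export this data from a crawler.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search from the homepage; returns {url: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal-link graph: page -> pages it links to.
site_links = {
    "/": ["/seo/", "/blog/"],
    "/seo/": ["/seo/technical-architecture/"],
    "/blog/": ["/blog/archive/"],
    "/blog/archive/": ["/blog/archive/2019/"],
    "/blog/archive/2019/": ["/blog/archive/2019/old-post/"],
}

depths = click_depths(site_links, "/")
# Pages that break the 3-click rule.
too_deep = sorted(url for url, depth in depths.items() if depth > 3)
print(too_deep)
```

Any page that shows up in `too_deep` is a candidate for a link from a hub or category page closer to the homepage.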

To help the bots navigate, we use several technical “roadmaps”:

- XML Sitemaps: Think of this as a backup plan. Even if your internal links are messy, a sitemap submitted to Google Search Console ensures Google knows your URLs exist.

- Robots.txt: This acts as the gatekeeper, telling bots which areas of your house are “off-limits” (like your admin login or checkout pages) so they don’t waste time there.

- Faceted Navigation: Common in e-commerce, this allows users to filter by color, size, or price. However, Shopify warns that faceted search can create thousands of duplicate URLs. We manage this using canonical tags or “noindex” rules to keep the crawl budget focused.
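As a rough illustration of how the first two roadmaps fit together, the sketch below parses a robots.txt file with Python's standard `urllib.robotparser` and then builds a bare-bones XML sitemap that skips any URL the rules block. The rules, the `example.com` URLs, and the helper names `is_crawlable` and `build_sitemap` are assumptions made for the example.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that keeps bots out of admin and checkout areas.
robots_txt = """\
User-agent: *
Disallow: /admin/
Disallow: /checkout/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

def is_crawlable(url, agent="Googlebot"):
    """True if the parsed robots.txt rules allow this agent to fetch the URL."""
    return parser.can_fetch(agent, url)

def build_sitemap(urls):
    """Return a minimal sitemap XML string containing only crawlable URLs."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for url in urls:
        if is_crawlable(url):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

pages = [
    "https://example.com/",
    "https://example.com/seo/technical-architecture/",
    "https://example.com/admin/login",
    "https://example.com/checkout/cart",
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Keeping blocked URLs out of the sitemap avoids sending Google mixed signals about which pages you actually want crawled.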

Using a Semrush SEO toolkit can help you visualize these paths and identify where the “bottlenecks” are in your current crawl path.

The Role of Technical Content Architecture in Topical Authority

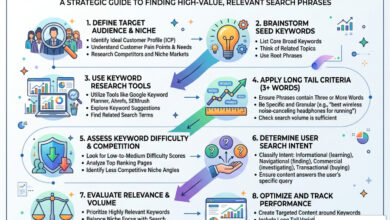

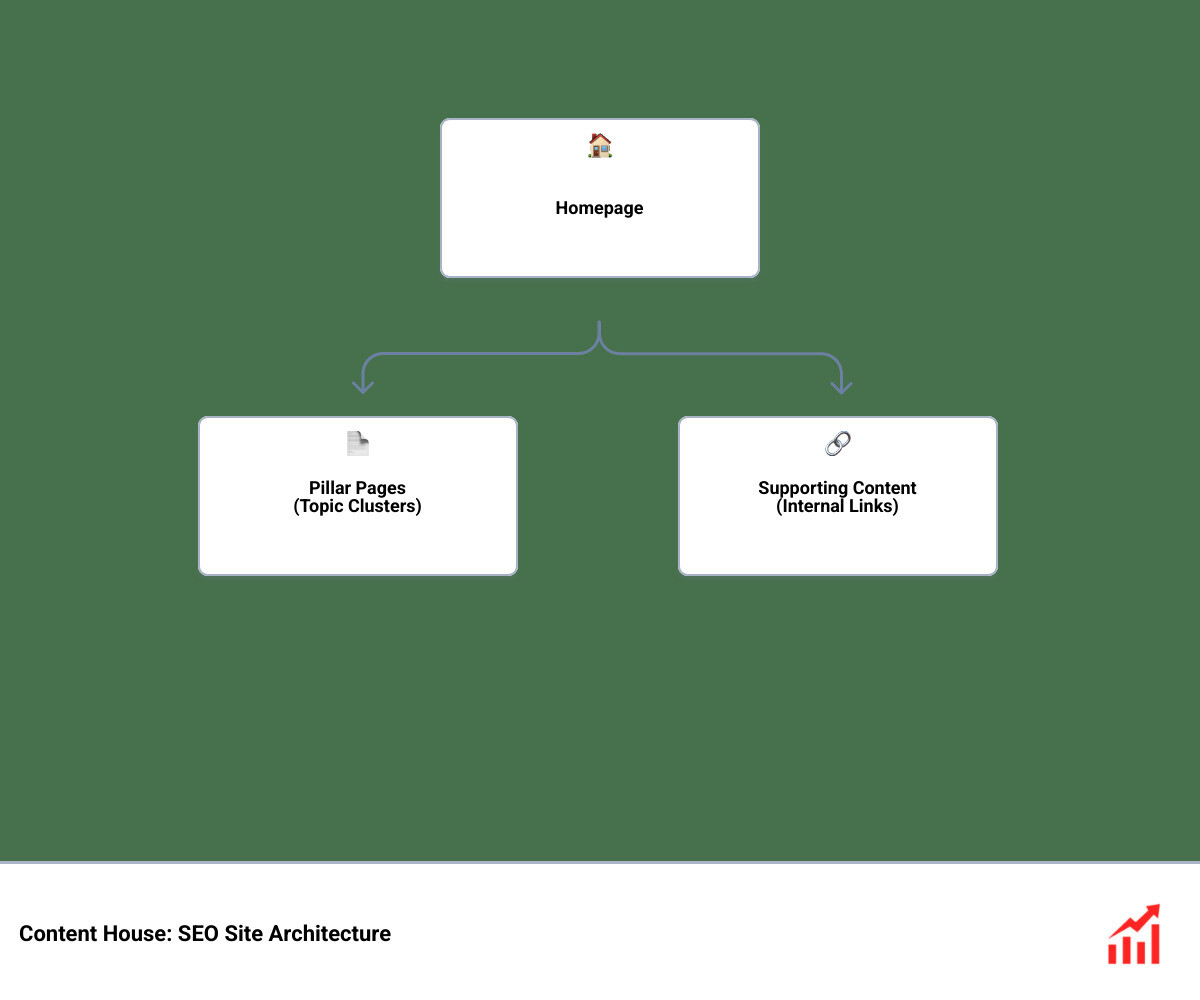

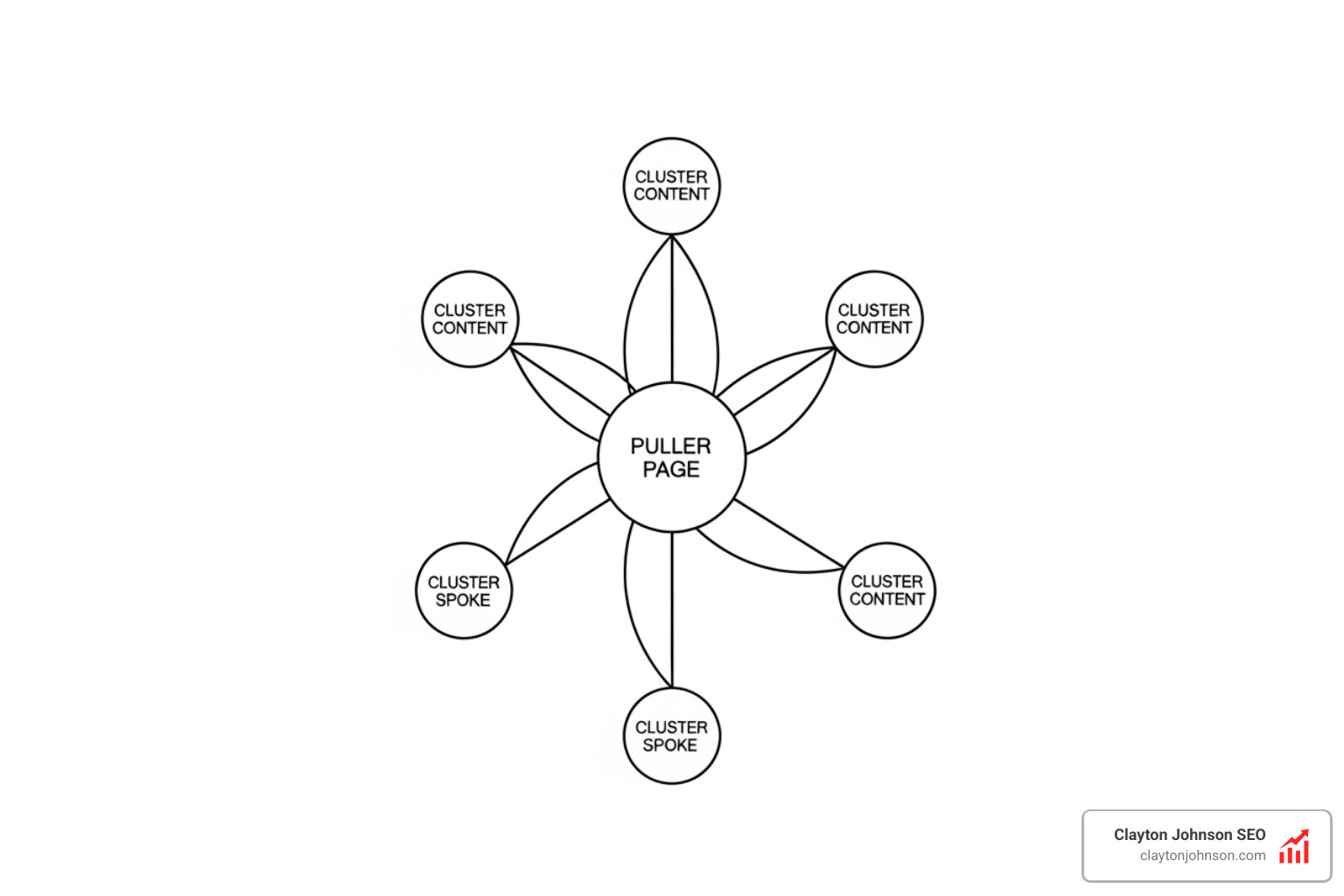

Google has moved beyond matching keywords; it now looks for topical authority. It wants to know if you are an expert in your field. We demonstrate this expertise through the Hub-and-Spoke model, also known as topic clusters.

In this model, you create one massive “Pillar Page” that covers a broad topic (e.g., “The Ultimate Guide to Technical SEO”). You then create several “Spoke” or cluster pages that dive deep into specific subtopics (e.g., “Crawl Budget Optimization” or “Schema Markup Guide”). As we explain in our guide on pillar pages and topic clusters, the magic happens when you link them all together.

By interlinking these pages, you create a “semantic web.” This tells Google, “We don’t just have one article on SEO; we have an entire ecosystem of knowledge.” This builds E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). High-impact SEO content stands out because it is organized to satisfy both the user’s intent and the search engine’s need for context.
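One way to check that a cluster is actually interlinked is to confirm that the pillar links to every spoke and every spoke links back. The sketch below assumes a hypothetical link graph and URL set; `cluster_gaps` is an illustrative helper, not part of any SEO tool.

```python
def cluster_gaps(pillar, spokes, links):
    """Return (source, target) pairs for links the cluster is missing."""
    gaps = []
    for spoke in spokes:
        if spoke not in links.get(pillar, set()):
            gaps.append((pillar, spoke))   # pillar should link down to the spoke
        if pillar not in links.get(spoke, set()):
            gaps.append((spoke, pillar))   # spoke should link back up to the pillar
    return gaps

# Hypothetical cluster: page -> set of pages it links to.
links = {
    "/technical-seo/": {"/technical-seo/crawl-budget/"},
    "/technical-seo/crawl-budget/": {"/technical-seo/"},
    "/technical-seo/schema-markup/": set(),
}
spokes = ["/technical-seo/crawl-budget/", "/technical-seo/schema-markup/"]

missing = cluster_gaps("/technical-seo/", spokes, links)
print(missing)
```

Each pair in `missing` is a link to add: the first element is the page that needs the link, the second is where it should point.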

Strategic Internal Linking and URL Taxonomy

Internal linking is the circulatory system of your website. It carries the “blood” (authority and PageRank) to every page. Without it, your pages wither away as “orphans.”
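To see why unlinked pages wither, it helps to run a simplified PageRank calculation over a small link graph. This is a textbook power-iteration sketch, not Google's production algorithm; the damping factor of 0.85, the iteration count, and the four-page site are assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration over an internal-link graph."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1 / n for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1 - damping) / n for page in pages}
        for page in pages:
            # A page with no outgoing links spreads its rank evenly over all pages.
            targets = links.get(page) or list(pages)
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical site: nothing links to "/orphan/", so it only gets the baseline share.
site = {
    "/": ["/seo/", "/blog/"],
    "/seo/": ["/"],
    "/blog/": ["/"],
    "/orphan/": ["/"],
}
ranks = pagerank(site)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:10} {score:.3f}")
```

In this toy graph the homepage collects the most authority and the orphan the least, which mirrors the circulatory picture above.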

When we link, we use anchor text—the clickable words in a link. Instead of saying “click here,” we use descriptive text like “technical site audit.” This gives search engines a massive hint about what the destination page covers. According to Wikipedia’s entry on internal and external links, these connections are what allow PageRank to flow throughout a domain.
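A quick way to audit anchor text is to pull every link out of a page and flag the generic ones. The sketch below uses Python's built-in `html.parser`; the `GENERIC_ANCHORS` list and the sample HTML are assumptions you would adapt to your own site.

```python
from html.parser import HTMLParser

# Assumed list of vague anchors; extend it for your own audit.
GENERIC_ANCHORS = {"click here", "read more", "here", "learn more"}

class AnchorCollector(HTMLParser):
    """Collects (href, anchor text) pairs from <a href="..."> elements."""

    def __init__(self):
        super().__init__()
        self.anchors = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:  # only record text inside a link
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.anchors.append((self._href, "".join(self._text).strip()))
            self._href = None

html = (
    '<p>Start with a <a href="/seo/audit/">technical site audit</a>, '
    'or <a href="/contact/">click here</a> to talk to us.</p>'
)
collector = AnchorCollector()
collector.feed(html)
vague = [(href, text) for href, text in collector.anchors
         if text.lower() in GENERIC_ANCHORS]
print(vague)
```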

Next, let’s talk about URL Taxonomy. A URL should be a readable breadcrumb trail.

- Bad URL: claytonjohnson.com/p=123?id=xyz

- Good URL: claytonjohnson.com/seo/technical-architecture/

In our guide on how to build a content taxonomy, we emphasize that your URL should mirror your site hierarchy. Keep them under 75 characters, use hyphens (not underscores), and avoid stop words like “the” or “and” to keep things clean.
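These URL rules are easy to automate. The sketch below turns a title into a slug and flags URLs that break the guidelines; the `STOP_WORDS` set is an assumed starting list (only “the” and “and” come from the guidance above), and `slugify` and `url_problems` are illustrative helpers.

```python
import re

STOP_WORDS = {"the", "and", "a", "an", "of", "to"}  # assumed list; tune as needed
MAX_URL_LENGTH = 75

def slugify(title):
    """Lowercase the title, drop stop words, and join the rest with hyphens."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(word for word in words if word not in STOP_WORDS)

def url_problems(url):
    """Return a list of guideline violations for a URL."""
    problems = []
    if len(url) > MAX_URL_LENGTH:
        problems.append("longer than 75 characters")
    if url != url.lower():
        problems.append("contains uppercase letters")
    if "_" in url:
        problems.append("uses underscores instead of hyphens")
    if "?" in url or "=" in url:
        problems.append("contains query parameters")
    return problems

print(slugify("The Ultimate Guide to Technical SEO"))
print(url_problems("claytonjohnson.com/p=123?id=xyz"))
print(url_problems("claytonjohnson.com/seo/technical-architecture/"))
```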

Optimizing Technical Content Architecture for AI and LLMs

The search landscape is changing. With the rise of AI Overviews and Large Language Models (LLMs), our content architecture needs to be “machine-readable.”

AI systems don’t just “crawl” like Googlebot; they “ingest” and “retrieve.” To help them, we use schema markup: structured data (typically JSON-LD) added to your HTML that states what your content represents, whether it’s a recipe, a product, or a “how-to” guide. Google notes that structured data helps search engines understand the page, and the same explicit context helps AI systems map entity relationships.
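For illustration, here is a minimal sketch that generates an Article snippet in JSON-LD using schema.org vocabulary. The headline, author name, and URL are placeholders, and real pages usually carry more properties (dates, images, publisher).

```python
import json

def article_jsonld(headline, author, url):
    """Build a <script> tag holding minimal schema.org Article markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "mainEntityOfPage": url,
    }
    return ('<script type="application/ld+json">'
            + json.dumps(data, indent=2)
            + "</script>")

# Placeholder values for the example.
tag = article_jsonld(
    headline="Crawl Budget Optimization",
    author="Jane Doe",
    url="https://example.com/seo/crawl-budget/",
)
print(tag)
```

The resulting tag goes in the page's HTML, and a structured data testing tool can confirm that it parses as intended.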

By mastering AI SEO taxonomy systems, we ensure that our “entities” (topics, people, brands) are distinct and easy for AI to map. This increases the odds of our brand being cited correctly in AI-powered summaries.

We also practice “chunking.” This involves breaking content into logical, self-contained sections with clear headings. As MongoDB explains, chunking allows systems to retrieve the most relevant piece of information without needing to process the entire document, which is exactly how AI search tools function.
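As a simple picture of chunking, the sketch below splits a document into self-contained sections at each heading. It assumes headings are written as markdown `## ` lines, and it is only one of many possible chunking strategies; retrieval systems often also split by length or by semantic similarity.

```python
def chunk_by_heading(text):
    """Split text into {"heading", "text"} chunks at each '## ' heading."""
    chunks = []
    heading, body = "Introduction", []

    def flush():
        content = "\n".join(body).strip()
        if content:  # skip sections with no body text
            chunks.append({"heading": heading, "text": content})

    for line in text.splitlines():
        if line.startswith("## "):
            flush()
            heading, body = line[3:].strip(), []
        else:
            body.append(line)
    flush()
    return chunks

doc = """Intro text.

## Crawl Budget
Google has limited time.

## Schema Markup
Schema adds context.
"""
chunks = chunk_by_heading(doc)
for chunk in chunks:
    print(chunk["heading"], "->", chunk["text"])
```

Sections that still make sense when read in isolation are the ones most likely to be retrieved and quoted accurately.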

Auditing for Orphan Pages and Structural Debt

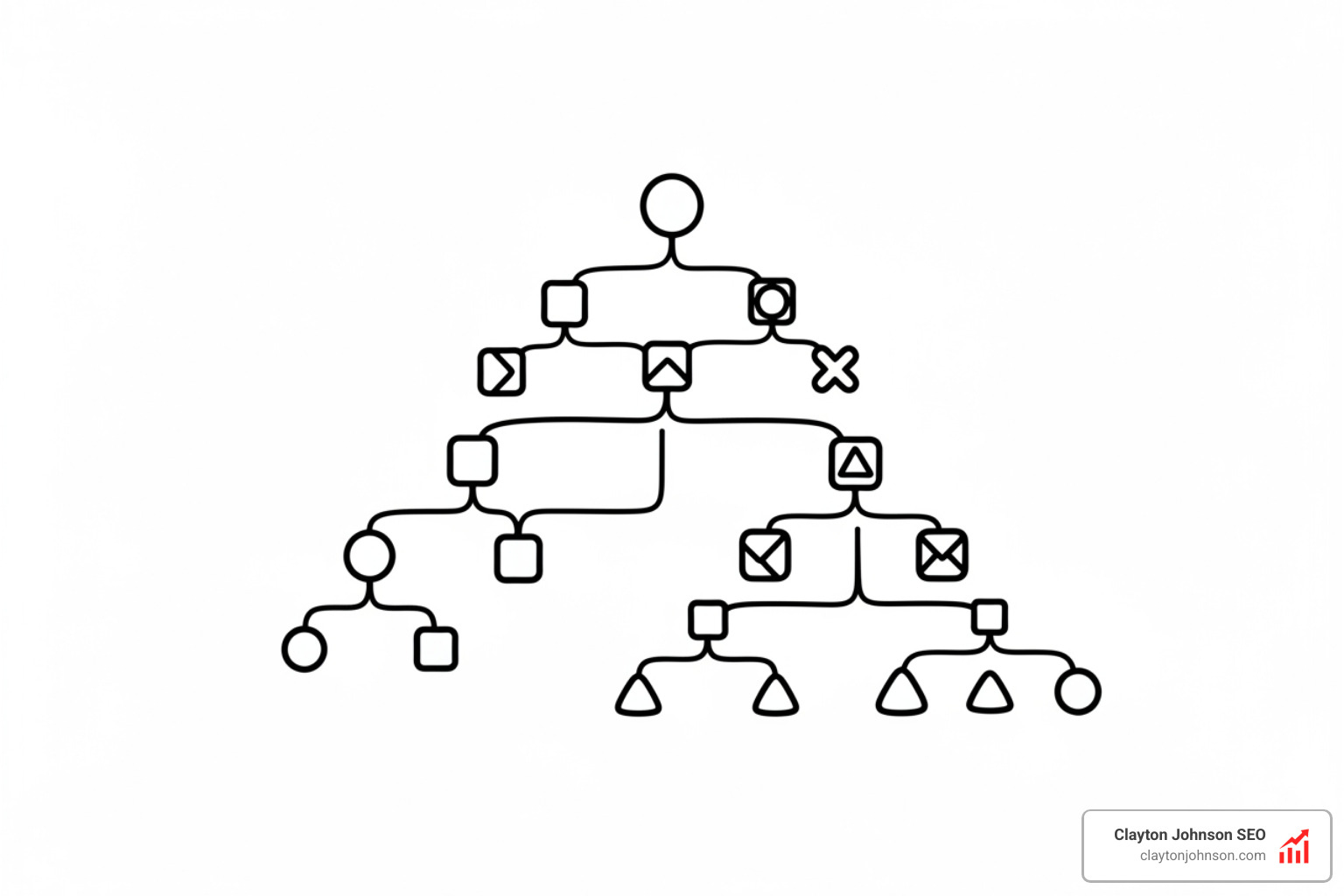

Even the best-built house eventually needs a renovation. Over time, websites develop “structural debt”—broken links, outdated categories, and the dreaded orphan pages.

An orphan page is a page that exists on your server but has zero internal links pointing to it. Since Google primarily follows links to find content, these pages are hard to discover and receive no internal authority. You can discover orphan pages with Semrush or Screaming Frog and fix them by linking to them from the relevant topic clusters.
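Conceptually, orphan detection is a set difference: every known page minus every page that receives an internal link. A minimal sketch, assuming you already have a list of known URLs (for example from your sitemap) and a crawled link graph, both hypothetical here:

```python
def find_orphans(all_pages, links, home="/"):
    """Return known pages that no other page links to (homepage excluded)."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(set(all_pages) - linked - {home})

# Hypothetical data: known URLs and the internal links found by a crawl.
all_pages = ["/", "/seo/", "/seo/crawl-budget/", "/old-landing-page/"]
links = {
    "/": ["/seo/"],
    "/seo/": ["/seo/crawl-budget/", "/"],
    "/old-landing-page/": ["/old-landing-page/"],
}

orphans = find_orphans(all_pages, links)
print(orphans)
```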

Another common issue is duplicate content caused by URL parameters. This happens when the same page is accessible via multiple URLs (e.g., ?sort=price vs ?sort=new). This confuses search engines and splits your ranking power. To stay healthy, you should regularly diagnose your site health to catch these issues before they tank your rankings.
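One way to spot parameter-driven duplicates is to normalize each URL and group the variants that collapse to the same address. In the sketch below, the `IGNORED_PARAMS` set is an assumption; which parameters are safe to ignore depends on whether they change the page's content on your site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed parameters that reorder or track but do not change the content.
IGNORED_PARAMS = {"sort", "utm_source", "utm_medium", "utm_campaign"}

def canonical_url(url):
    """Drop ignorable parameters and sort the rest for a stable URL."""
    parts = urlsplit(url)
    kept = [(key, value) for key, value in parse_qsl(parts.query)
            if key not in IGNORED_PARAMS]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))

crawled = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?sort=new",
    "https://example.com/shoes?color=red",
]

groups = defaultdict(list)
for url in crawled:
    groups[canonical_url(url)].append(url)

# Canonical URLs that more than one crawled URL maps to.
duplicates = {canon: urls for canon, urls in groups.items() if len(urls) > 1}
print(duplicates)
```

Each key in `duplicates` is a candidate target for a canonical tag on the variant URLs listed under it.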

Implementing a Scalable Content Taxonomy

Taxonomy is simply a fancy word for “classification.” As your site grows from 50 pages to 5,000, you need a system that scales. A data-driven taxonomy allows you to add new categories without breaking the existing link equity flow.

We recommend starting with a human-centered approach to taxonomy. Ask yourself: “How would a real person look for this?” Don’t rely solely on AI taxonomy tools that might suggest structures that make sense to a robot but confuse a human.

When you boost rankings with data-driven taxonomy, you ensure that your site is ready for mobile-first indexing. Google now primarily uses the mobile version of your site for ranking. If your mobile menu is stripped down or missing the logical categories found on your desktop site, your rankings will suffer. Don’t forget Image SEO—ensure your images are placed within relevant content silos and have descriptive alt-text to help them appear in visual search.

Measuring Success and Future-Proofing Your Content House

Success in technical content architecture isn’t measured by how “pretty” the sitemap looks. It’s measured by compounding growth. When you build a durable system, every new piece of content you publish gains an immediate advantage because it’s being “plugged into” a high-authority, well-organized ecosystem.

At Clayton Johnson SEO, we don’t just chase the latest algorithm update. We build traffic systems driven by search intent and structured strategy. By aligning your technical depth with strategic positioning, we turn fragmented marketing efforts into a coherent growth engine.

If your site feels like a disorganized library, it’s time to bring in the architects. We specialize in system-level thinking—transforming messy URL collections into structured ecosystems. Whether you need a full audit or a scalable traffic system, we focus on measurable business impact, not vanity metrics.

Ready to build a site that Google loves to visit? Contact Clayton Johnson today, and let’s start building your compounding growth engine. Remember: Clarity leads to Structure, which leads to Leverage, which leads to Growth. Let’s get to work.