The Claude Prompting Bible: Best Practices and Templates

To move beyond “vibe coding,” we must understand that Claude doesn’t just read a prompt; it processes a statistical landscape of tokens. Effective Claude prompt engineering is less about “talking to a bot” and more about architectural design. We are building a container for Claude’s reasoning.

The Shift from Prompting to Context Engineering

While traditional prompt engineering focuses on the specific instructions you send, context engineering is the art of managing the entire environment Claude operates in. This includes the system instructions, the message history, the tools available, and the external data provided.

Anthropic views context engineering as the natural progression of prompting. In a long conversation, the “context” evolves. If we don’t curate it, we run into “context rot”—a phenomenon where the model’s performance degrades as the conversation grows longer and noisier.

The Attention Budget and Token Economics

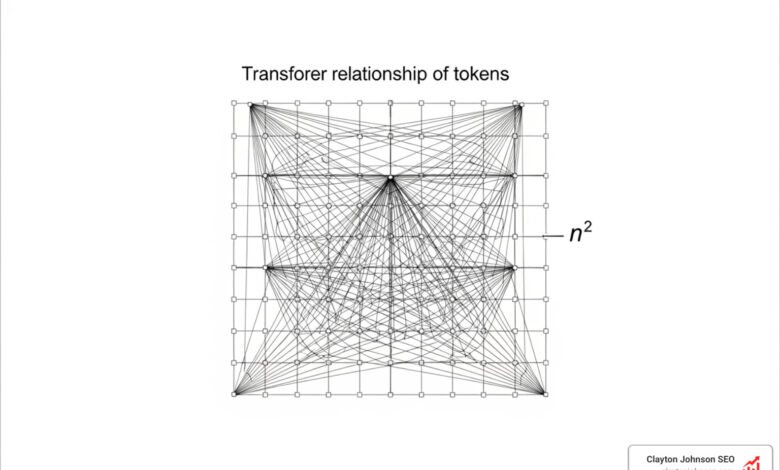

Every token we add to a prompt isn’t just a cost; it’s a tax on Claude’s attention. LLMs are based on the transformer architecture, which uses an attention mechanism where every token can potentially attend to every other token. This creates $n^2$ pairwise relationships. As the context grows, Claude has more “distractions” to navigate.
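To make the quadratic growth concrete, here is a minimal sketch that counts token pairs under full self-attention. It is a simplification: real models often use causal masking and other optimizations, but the pair count still grows with the square of the context length.

```python
def attention_pairs(n_tokens: int) -> int:
    """Number of ordered token pairs when every token can attend to every token."""
    return n_tokens * n_tokens


# Doubling the context quadruples the number of pairwise relationships.
for n in (1_000, 2_000, 4_000):
    print(f"{n} tokens -> {attention_pairs(n)} pairs")
```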

Understanding token economics helps us make better engineering decisions. For example, Model Context Protocol (MCP) servers can load tools “eagerly,” adding up to 32,000 tokens of tool metadata to every single message. At roughly $0.16 per message in overhead, a developer sending 50 messages a day over about 20 working days could spend $160 a month just on idle metadata. Mastering Claude prompt engineering means learning to load context “lazily” rather than “eagerly.”
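The overhead figures above can be checked with a few lines of arithmetic. The token count, per-message cost, and message volume come from the text; the 20 working days per month is our assumption, since that is what makes the numbers reach $160.

```python
TOOL_METADATA_TOKENS = 32_000   # from the text
OVERHEAD_PER_MESSAGE = 0.16     # USD, from the text
MESSAGES_PER_DAY = 50           # from the text
WORKING_DAYS_PER_MONTH = 20     # assumption, not stated in the text


def implied_price_per_million(tokens: int, cost: float) -> float:
    """Input price per million tokens implied by a per-message overhead."""
    return cost / tokens * 1_000_000


def monthly_overhead(cost_per_message: float, messages_per_day: int, days: int) -> float:
    """Total monthly spend on metadata that is sent with every message."""
    return cost_per_message * messages_per_day * days


price = implied_price_per_million(TOOL_METADATA_TOKENS, OVERHEAD_PER_MESSAGE)
monthly = monthly_overhead(OVERHEAD_PER_MESSAGE, MESSAGES_PER_DAY, WORKING_DAYS_PER_MONTH)

print(f"implied input price: ${price:.2f} per million tokens")
print(f"monthly overhead: ${monthly:.2f}")
```

With a 30-day month instead of 20 working days, the same calculation gives about $240, so the assumption matters when you budget.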

| Feature | Prompt Engineering | Context Engineering |
|---|---|---|
| Focus | Writing the “perfect” instruction | Managing the entire state/history |
| Scope | Single turn of inference | Long-horizon, multi-turn tasks |
| Goal | Accuracy for a specific task | Maintaining coherence over time |
| Tools | XML tags, few-shot examples | Compaction, subagents, memory |

Core Techniques for Claude Prompt Engineering

If we want Claude to perform at its peak, we need to use the right tools for the job. Here are the foundational pillars of a professional prompt.

1. Few-Shot Prompting: The Gold Standard

Few-shot prompting is arguably the most powerful basic technique. Instead of describing a “professional yet witty tone,” we show Claude 3 to 5 examples of exactly what that looks like. Concrete examples tend to outperform abstract descriptions because they let Claude match patterns in length, structure, and style without guessing.
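As a rough illustration, a few-shot prompt can be assembled from input/output pairs. The function name, the tag names, and the sample announcements below are all hypothetical; treat this as a sketch of the pattern rather than a required format.

```python
from typing import List, Tuple


def build_few_shot_prompt(task: str, examples: List[Tuple[str, str]], new_input: str) -> str:
    """Assemble a prompt that shows the desired style through worked examples."""
    parts = [task, "", "<examples>"]
    for source, target in examples:
        parts += [
            "<example>",
            f"<input>{source}</input>",
            f"<output>{target}</output>",
            "</example>",
        ]
    parts += ["</examples>", "", f"<input>{new_input}</input>"]
    return "\n".join(parts)


# Illustrative examples only; replace them with real samples of the tone you want.
examples = [
    ("New dark mode", "Your eyes called. They said thanks for dark mode."),
    ("Faster exports", "Exports now finish before your coffee does."),
    ("Bug fix for login", "Login works again. We are as relieved as you are."),
]

prompt = build_few_shot_prompt(
    "Write a one-line product update in the same tone as the examples.",
    examples,
    "Offline mode",
)
print(prompt)
```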

2. XML Tags for Structure

Claude is specifically trained to recognize and respect XML tags.

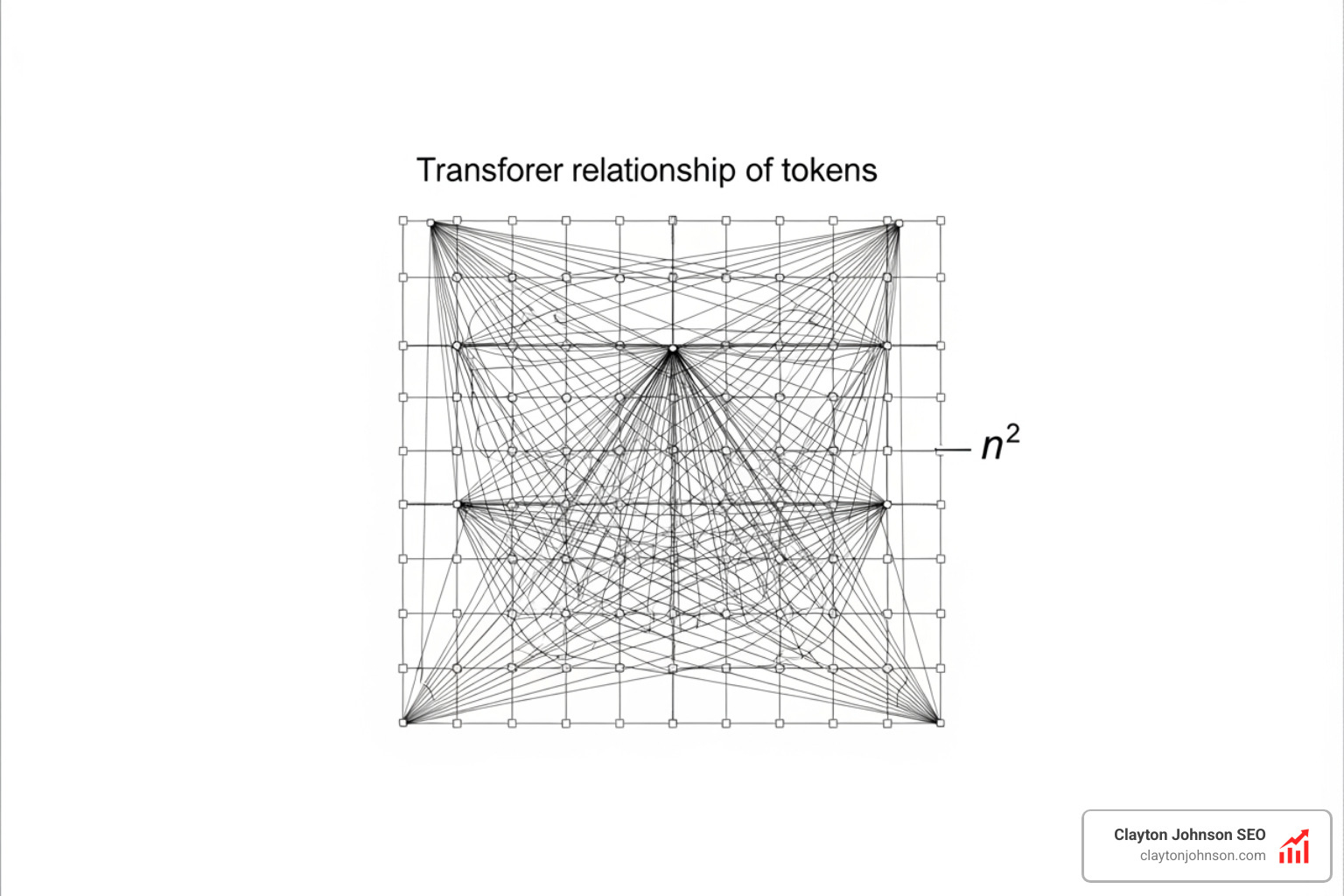
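A minimal sketch of the idea: wrap each part of the prompt in its own tag, then pull a tagged section back out of the reply. The tag names (`instructions`, `report`, `summary`) and the helper functions are our own choices for illustration, and the reply is simulated rather than fetched from the API.

```python
import re
from typing import Optional


def tag(name: str, body: str) -> str:
    """Wrap a block of text in an XML-style tag."""
    return f"<{name}>\n{body.strip()}\n</{name}>"


def extract(name: str, text: str) -> Optional[str]:
    """Return the contents of the first <name>...</name> section, if present."""
    pattern = rf"<{re.escape(name)}>(.*?)</{re.escape(name)}>"
    match = re.search(pattern, text, re.DOTALL)
    return match.group(1).strip() if match else None


prompt = "\n\n".join([
    tag("instructions", "Summarize the report in two sentences. Put the summary in <summary> tags."),
    tag("report", "Q3 revenue rose 12 percent while support tickets fell."),
])

# Stand-in for a model reply, so the example runs without network access.
simulated_reply = "<summary>Revenue grew 12 percent in Q3. Support load declined.</summary>"
print(extract("summary", simulated_reply))
```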

3. Structured Output and Prefilling

To make Claude’s output machine-readable (like JSON), we can use a technique called “prefilling.” By starting Claude’s reply for it with the opening brace {, we steer Claude to continue in that format. This avoids the “Here is your JSON:” preamble that breaks many automated workflows.
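Here is a sketch of how prefilling might look in a message list that uses the familiar role/content shape. The helper names are hypothetical and the model continuation is simulated. Because the reply continues from the prefilled text, the brace is added back before parsing. Check whether your model and settings accept a prefilled assistant turn before relying on this.

```python
import json

PREFILL = "{"


def build_messages(user_prompt: str) -> list:
    """Message list whose last turn is a partial assistant reply (the prefill)."""
    return [
        {"role": "user", "content": user_prompt},
        {"role": "assistant", "content": PREFILL},
    ]


def parse_prefilled_json(continuation: str) -> dict:
    """Re-attach the prefill to the model's continuation and parse the result."""
    return json.loads(PREFILL + continuation)


messages = build_messages(
    'Extract the name and year from: "Ada Lovelace, 1843". Reply with JSON only.'
)

# Stand-in for the text the model would return after the prefilled brace.
simulated_continuation = '"name": "Ada Lovelace", "year": 1843}'
result = parse_prefilled_json(simulated_continuation)
print(result)
```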

Advanced Context Engineering and Subagent Orchestration

As tasks become more complex, we can’t fit everything into a single prompt. This is where we move into the “agents as functions” paradigm.

Skills vs. MCP

While MCP provides the “what” (capabilities like searching a database), “Skills” provide the “how” (expertise). Skills are reusable, markdown-based instruction sets that can be lazy-loaded. A skill’s metadata might cost only 100 tokens, while the full body is pulled in only when Claude actually needs it. This kind of lazy loading saves money and keeps Claude’s attention focused.
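The lazy-loading pattern can be sketched as follows. This is not how any particular Skills implementation works internally; the `Skill` class, the loader function, and the four-characters-per-token estimate are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


def rough_tokens(text: str) -> int:
    """Crude size estimate (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


@dataclass
class Skill:
    name: str
    description: str                 # cheap metadata, always in context
    load_body: Callable[[], str]     # expensive body, fetched on demand
    _cached: Optional[str] = field(default=None, repr=False)

    def body(self) -> str:
        """Load the full instructions the first time they are needed."""
        if self._cached is None:
            self._cached = self.load_body()
        return self._cached


def skill_index(skills: List[Skill]) -> str:
    """The only text that is sent with every message."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


load_calls: List[str] = []


def load_audit_body() -> str:
    # Hypothetical loader; in practice this might read a markdown file.
    load_calls.append("seo-audit")
    return "# SEO audit\n" + "Check each page title and canonical tag.\n" * 150


skills = [Skill("seo-audit", "Run a technical SEO audit on a site.", load_audit_body)]
index = skill_index(skills)
calls_before = len(load_calls)      # nothing loaded yet

full_body = skills[0].body()        # first use triggers the load
skills[0].body()                    # second use hits the cache

print(rough_tokens(index), rough_tokens(full_body), calls_before, len(load_calls))
```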

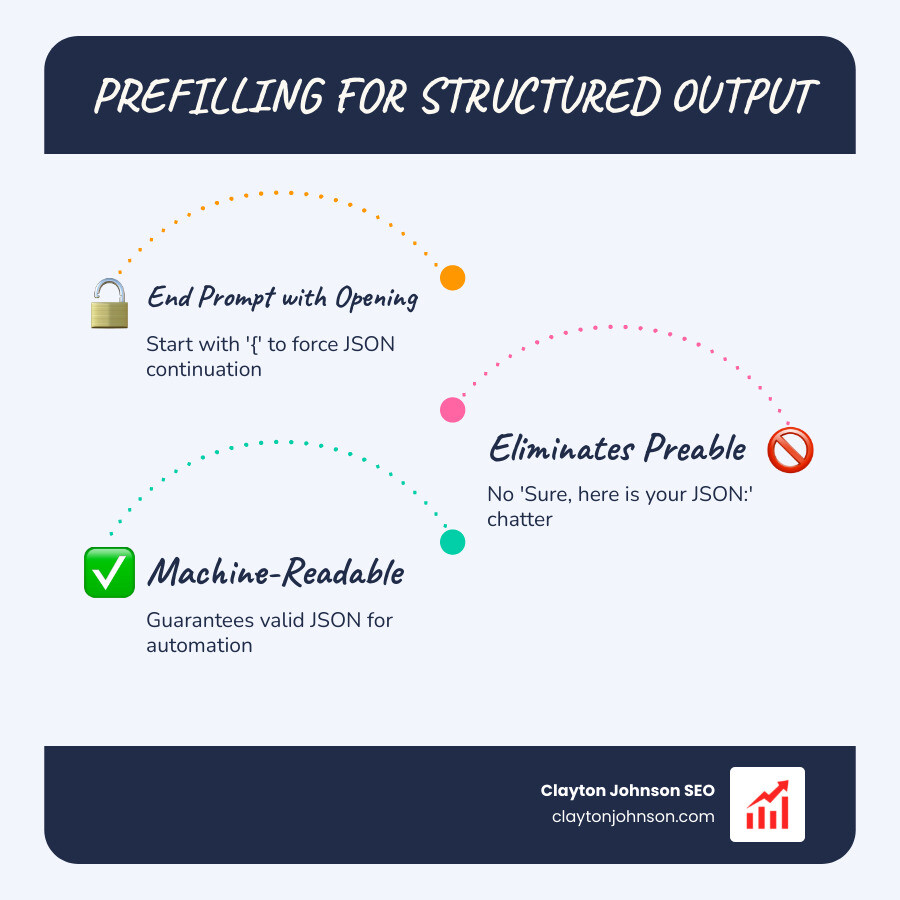

The Power of Subagents

Instead of writing one massive “monolith” prompt that tries to do everything, we should chain smaller, focused prompts together.

A subagent is a separate Claude instance called by the main agent to handle a specific sub-task. For example, the main agent might delegate “researching competitor pricing” to a subagent. The subagent might use 20,000 tokens to do the work but returns only a 1,000-token summary to the main agent. This keeps the main agent’s context window clean and focused.
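The delegation pattern might look like this in outline. The `work` and `summarize` callables stand in for real model calls, and the research notes and summary are stub data. The point is only that the subagent's working context never reaches the main agent.

```python
from typing import Callable, List

LLM = Callable[[str], str]  # hypothetical stand-in for a model call


def run_subagent(task: str, work: LLM, summarize: LLM) -> str:
    """Do the heavy work in a private context and return only a short summary."""
    scratch: List[str] = [f"Task: {task}"]   # the subagent's own context
    scratch.append(work(task))               # may be very long
    return summarize("\n".join(scratch))     # only this leaves the subagent


def fake_research(task: str) -> str:
    # Stub: imagine thousands of tokens of raw notes here.
    return "Competitor page notes. " * 500


def fake_summarize(text: str) -> str:
    # Stub summary with made-up figures.
    return "Summary: three competitors, prices between 20 and 60 USD (stub data)."


main_context: List[str] = ["User goal: position our pricing against competitors."]
summary = run_subagent("Research competitor pricing", fake_research, fake_summarize)
main_context.append(summary)

print(len(fake_research("")), len(summary), len(main_context))
```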

Avoiding Failure Modes in Claude Prompt Engineering

Even experts run into walls. Understanding common failure modes is key to systematic optimization.

- Context Rot: As the conversation gets longer, Claude might lose the “needle in the haystack.” Research on context rot suggests that models lose focus as token counts climb. The solution is compaction: periodically asking Claude to summarize the key points of the conversation and starting a fresh thread with that summary.

- The Role Prompting Myth: Many users still start prompts with “You are a world-class marketing expert.” While this can help with tone, research shows that for accuracy tasks, role prompting often yields improvements that are statistically insignificant, with scores often only 0.01 apart. It is better to focus on what the model should do rather than who it should be.

- Overthinking: Claude’s “extended thinking” feature is brilliant for math and logic, but it can lead to “over-engineering” on simple tasks. If Claude is getting too “wordy,” we can dial back the “effort” parameter or use more direct, constrained instructions. If you find yourself frustrated, it might be time to break the chain of bad AI responses by resetting the context entirely.
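Compaction, the fix suggested for context rot above, can be sketched as a function that replaces older turns with a summary once the history passes a threshold. The thresholds and the stub summarizer are assumptions; in practice the summary would come from asking Claude itself.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def compact(
    history: List[Message],
    summarize: Callable[[List[Message]], str],
    max_messages: int = 6,
    keep_last: int = 2,
) -> List[Message]:
    """Replace older turns with one summary message once the history gets long."""
    if len(history) <= max_messages:
        return history
    older, recent = history[:-keep_last], history[-keep_last:]
    summary = summarize(older)
    seed = {"role": "user", "content": f"Summary of the conversation so far:\n{summary}"}
    return [seed] + recent


def stub_summarize(messages: List[Message]) -> str:
    # Stand-in for a model-written summary: first 30 characters of each turn.
    return "\n".join(m["content"][:30] for m in messages)


history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"turn {i}: details"}
    for i in range(10)
]
compacted = compact(history, stub_summarize)
print(len(history), len(compacted))
```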

The Future of Agentic Workflows and Systematic Optimization

The future of Claude prompt engineering isn’t just about better words; it’s about better systems. We are moving toward a world where agents act like functions in a software program: specialized, isolated, and composable.

Long-Horizon Tasks and Memory Management

For tasks that span days or thousands of steps—like Claude playing a video game—we use structured note-taking. Instead of relying on the raw message history, we instruct Claude to maintain a “state file” or a “memory log.”

This “agentic memory” allows Claude to track progress (“I have completed 4 of 10 tasks”) without re-reading the entire history every time. You can find deep-dive examples of this in the memory and context management cookbook. For those looking to master the logic behind these steps, our ultimate Claude chain of thought tutorial provides a roadmap for structured reasoning.
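A state file can be as simple as a small JSON document that the agent updates after each step. The file name, the field names, and the helper functions below are hypothetical; the sketch writes to a temporary directory so it leaves nothing behind.

```python
import json
import tempfile
from pathlib import Path


def load_state(path: Path) -> dict:
    """Read the state file, or start fresh if it does not exist yet."""
    if path.exists():
        return json.loads(path.read_text())
    return {"completed": [], "notes": []}


def record_progress(path: Path, task: str, note: str) -> dict:
    """Mark a task as done and append a short note, then persist the state."""
    state = load_state(path)
    if task not in state["completed"]:
        state["completed"].append(task)
    state["notes"].append(note)
    path.write_text(json.dumps(state, indent=2))
    return state


def progress_line(state: dict, total: int) -> str:
    """Compact status the agent can read instead of the full history."""
    return f"I have completed {len(state['completed'])} of {total} tasks"


with tempfile.TemporaryDirectory() as tmp:
    state_path = Path(tmp) / "state.json"
    for i in range(1, 5):
        record_progress(state_path, f"task-{i}", f"finished task-{i}")
    status = progress_line(load_state(state_path), total=10)

print(status)
```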

Token Economics and Performance Benchmarking

Systematic optimization requires measurement. If you are building a production system, you should benchmark your prompts against a “golden dataset” of 100-1,000 examples.

We look for three things:

- Quality: Does it meet the success criteria?

- Latency: How long does it take to think and respond?

- Cost: How many tokens are we burning?
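The three measurements above can be collected by a small harness. Everything here is a stand-in: the model is a stub that uppercases its input, the golden dataset has three toy rows, and tokens are approximated by counting words. A real run would swap in an API call, a real tokenizer, and task-specific success criteria.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class BenchmarkResult:
    pass_rate: float        # quality
    mean_latency_s: float   # latency
    total_tokens: int       # cost proxy


def benchmark(
    model: Callable[[str], str],
    golden: List[Tuple[str, str]],
    passes: Callable[[str, str], bool],
    count_tokens: Callable[[str], int],
) -> BenchmarkResult:
    """Run every golden example once and aggregate quality, latency, and cost."""
    passed, latency, tokens = 0, 0.0, 0
    for prompt, expected in golden:
        start = time.perf_counter()
        output = model(prompt)
        latency += time.perf_counter() - start
        passed += int(passes(output, expected))
        tokens += count_tokens(prompt) + count_tokens(output)
    n = len(golden)
    return BenchmarkResult(passed / n, latency / n, tokens)


def stub_model(prompt: str) -> str:
    return prompt.upper()


golden_dataset = [("a", "A"), ("b", "B"), ("c", "x")]  # the last row fails on purpose

result = benchmark(
    stub_model,
    golden_dataset,
    passes=lambda output, expected: output == expected,
    count_tokens=lambda text: len(text.split()),
)
print(result)
```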

Often, the best way to improve results is to stop yelling at the bot and start providing better data. If a human colleague would be confused by your prompt, Claude will be too.

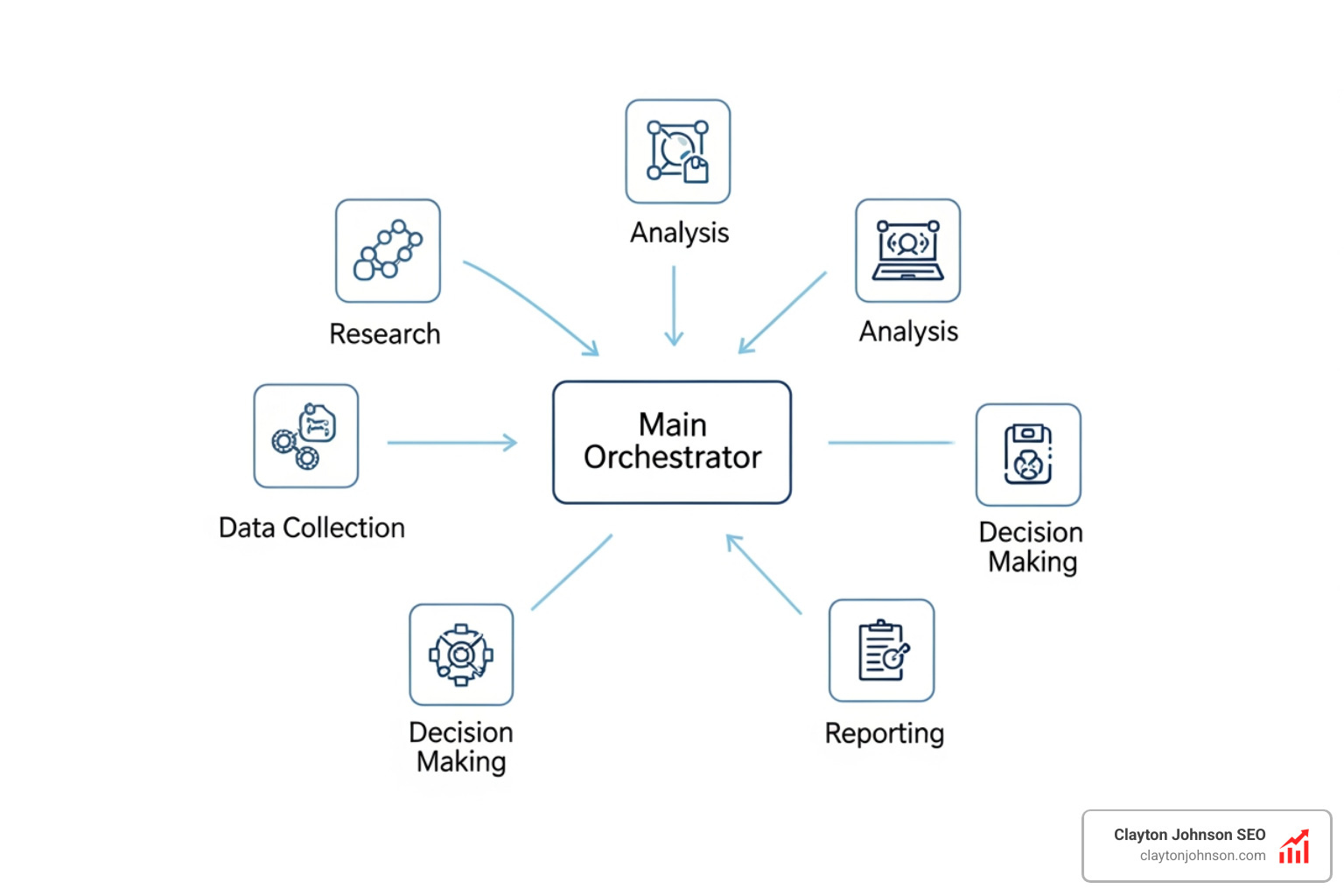

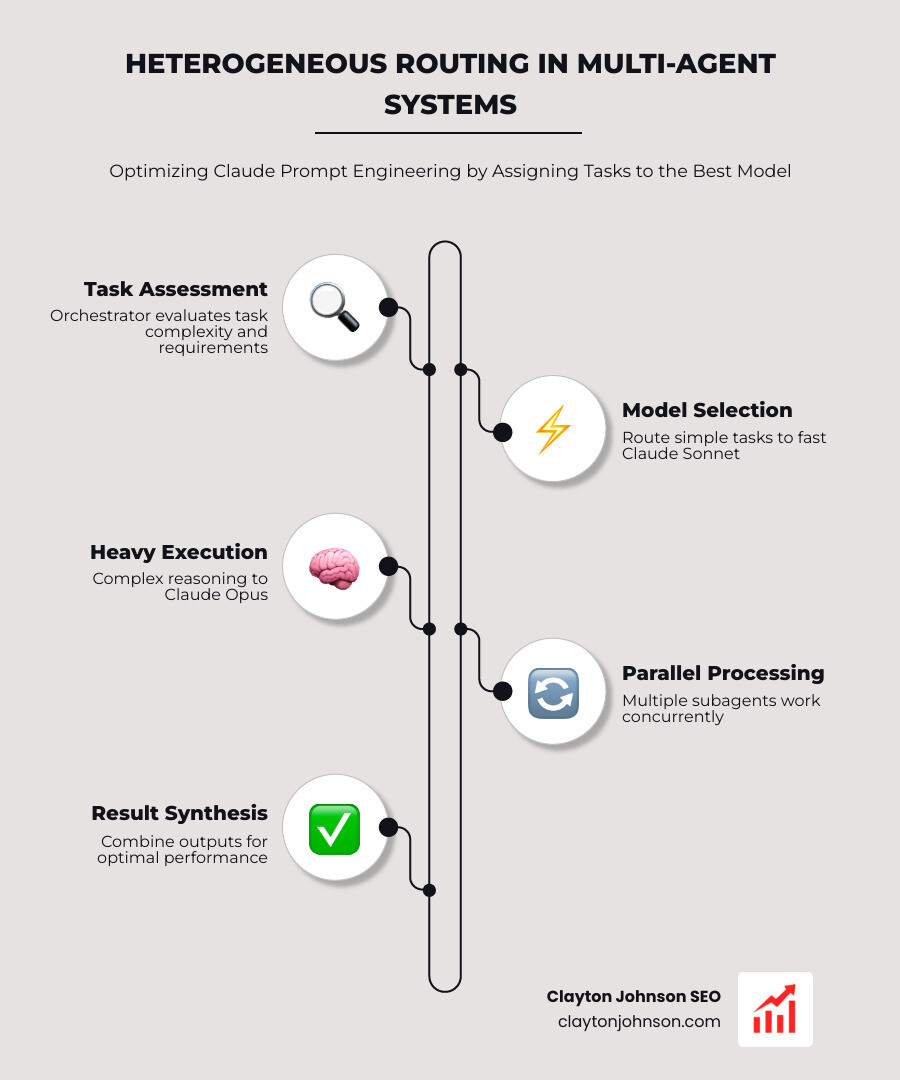

Multi-Agent Parallelism and Orchestration

The cutting edge of Claude prompt engineering involves multi-agent parallelism. Using Anthropic’s multi-agent research system, we can spin up multiple subagents concurrently. One subagent might check for bugs, another for security vulnerabilities, and a third for documentation, all at the same time.
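Concurrent subagents can be sketched with a thread pool from the Python standard library. The three reviewers below are trivial string checks standing in for separate model calls, and the function names are our own. This shows the fan-out and gather shape only, not Anthropic's actual orchestration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def check_bugs(code: str) -> str:
    return "bare except found" if "except:" in code else "no bare except found"


def check_security(code: str) -> str:
    return "eval() call found" if "eval(" in code else "no eval() call found"


def check_docs(code: str) -> str:
    return "docstring present" if '"""' in code else "missing docstring"


def review_in_parallel(code: str, subagents: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Fan the same input out to every subagent and gather the reports by name."""
    with ThreadPoolExecutor(max_workers=len(subagents)) as pool:
        futures = {name: pool.submit(agent, code) for name, agent in subagents.items()}
        return {name: future.result() for name, future in futures.items()}


sample_code = "def f(x):\n    return eval(x)\n"
reports = review_in_parallel(
    sample_code,
    {"bugs": check_bugs, "security": check_security, "docs": check_docs},
)
print(reports)
```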

Anthropic’s agent teams feature is the first step toward this peer-to-peer collaboration. By routing different tasks to different models (e.g., using the heavy-duty Claude Opus for orchestration and the faster Claude Sonnet for execution), we optimize for both intelligence and speed.

Building Your Own Leveraged Systems

At the end of the day, Claude prompt engineering is about leverage. My philosophy at Clayton Johnson SEO is built on the idea that Clarity → Structure → Leverage → Compounding Growth. By building structured “prompt contracts” and durable context engineering systems, we turn AI from a “gamble” into a reliable growth engine.

If you’re looking to build these types of AI-augmented marketing workflows or need a technical SEO architecture that actually scales, we can help. Whether it’s through our SEO services or custom AI workflow implementations, our goal is to help you build systems that work while you sleep.

Ready to move beyond “vibe coding” and start building for real impact? Contact us today to see how we can help you operationalize these advanced AI strategies.